Alexey Gritsenko @agritsenko

Research @ Google Brain // opinions my own The Netherlands Joined April 2010

Tweets 32

Followers 349

Following 147

Likes 103

@_akhaliq I added OWL-ViT v2 to the plot. A single OWLv2 B/16 model, finetuned on O365+VG, covers all speed/accuracy combinations: Simply adjust the inference resolution to match your latency requirements. No re-training needed. arxiv.org/abs/2306.09683

Really nice work from my collaborators. I am particularly excited to see positive transfer of fine-grained information from image-level pre-training to instance-level tasks such as object detection. With SPARC, OWL-ViT improves on LVIS and COCO, which is super encouraging.

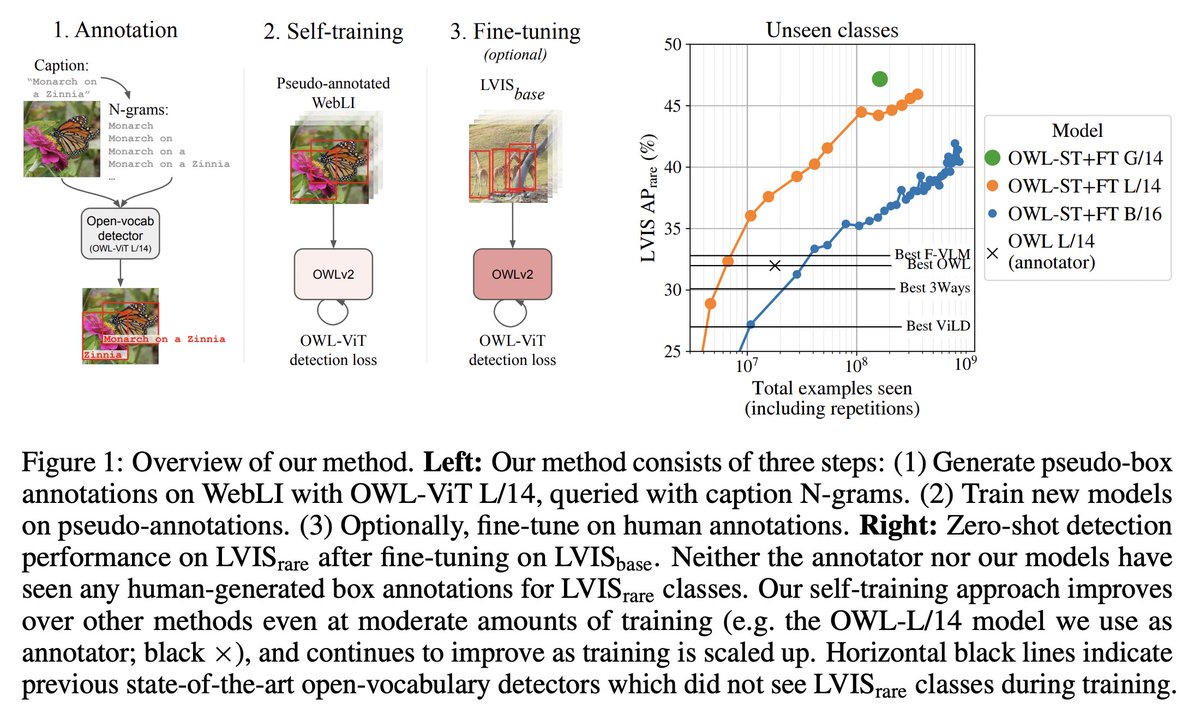

We just open-sourced OWL-ViT v2, our improved open-vocabulary object detector that uses self-training to reach >40% zero-shot LVIS APr. Check out the paper, code, and pretrained checkpoints: arxiv.org/abs/2306.09683 github.com/google-researc…. With @agritsenko and @neilhoulsby.

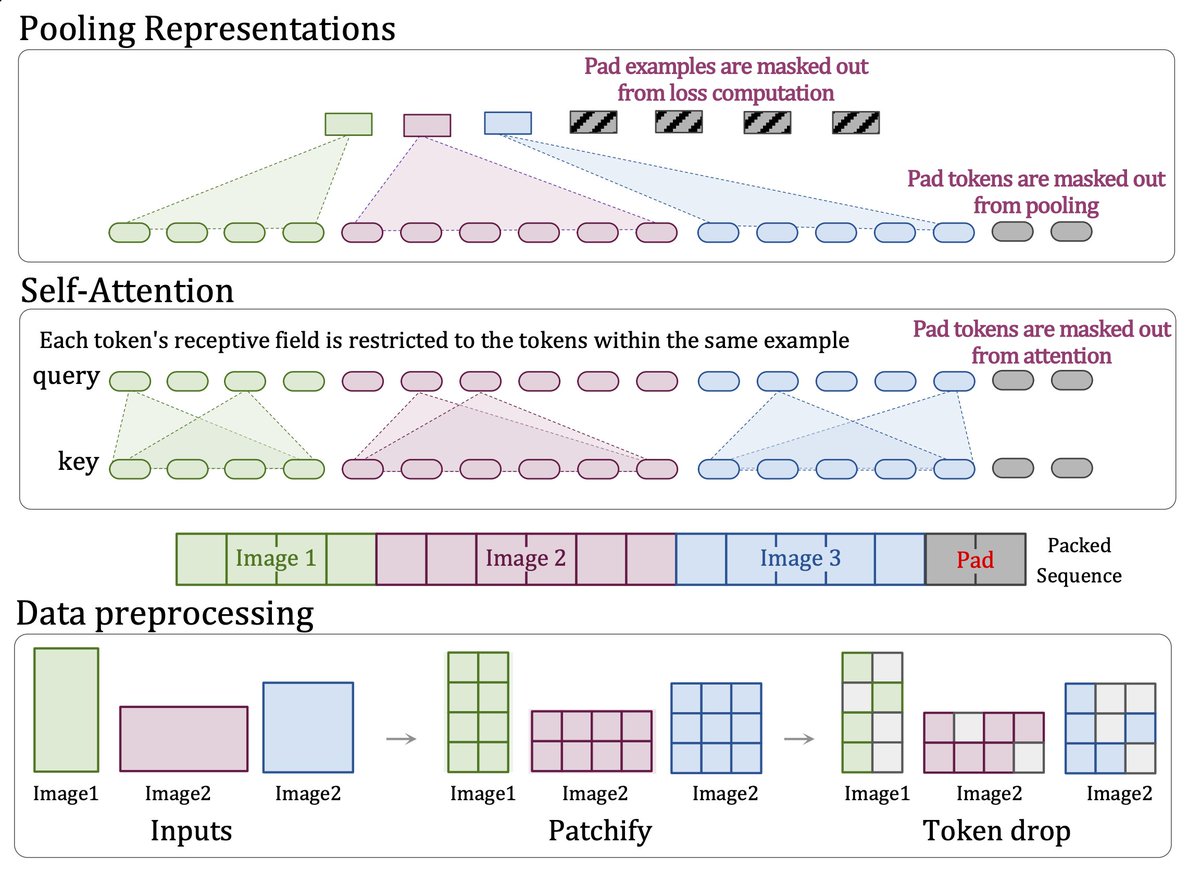

We explore several ways to accelerate the training in the paper: Token dropping is a nice trick to reduce the training cost of ViT models (or get better models for the same cost). With NaViT one can take it several steps further!

Check out NaViT, a native resolution ViT for all aspect ratios, enhancing training efficiency & performance. By preserving aspect ratios, it improves fairness-signal annotation, useful where metrics like group calibration are noise-sensitive. NaViT helps overcome such challenges. https://t.co/1UUvjmd1jJ

Do you want to accelerate your vision model without losing quality? NaViT takes images of arbitrary resolutions and aspect ratios - no more resizing to square with constant resolution. One cool implication is that you can control compute/quality tradeoff by resizing:
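
To make the compute/quality knob concrete, here is a minimal sketch of how the token count depends on input size, and how one might pick an aspect-preserving size for a given token budget. The function names, the 16-pixel patch size, and the rounding strategy are illustrative assumptions, not taken from the NaViT code.

```python
import math

def num_patches(height, width, patch=16):
    """Number of ViT tokens for an image, counting partial patches as full ones."""
    return math.ceil(height / patch) * math.ceil(width / patch)

def resize_to_budget(height, width, max_tokens, patch=16):
    """Hypothetical helper: aspect-preserving size (whole patches) within a token budget."""
    # Scale so that (h * w) / patch^2 is roughly max_tokens; never upsample here.
    scale = min(1.0, math.sqrt(max_tokens * patch * patch / (height * width)))
    # Round each side down to a whole number of patches, keeping at least one patch.
    new_h = max(patch, int(height * scale) // patch * patch)
    new_w = max(patch, int(width * scale) // patch * patch)
    return new_h, new_w

h, w = resize_to_budget(480, 640, max_tokens=256)
print(h, w, num_patches(h, w))
```

Lowering `max_tokens` shrinks the sequence length (and hence compute) while keeping the aspect ratio roughly intact. For extremely elongated images the one-patch minimum can push the result slightly over budget.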

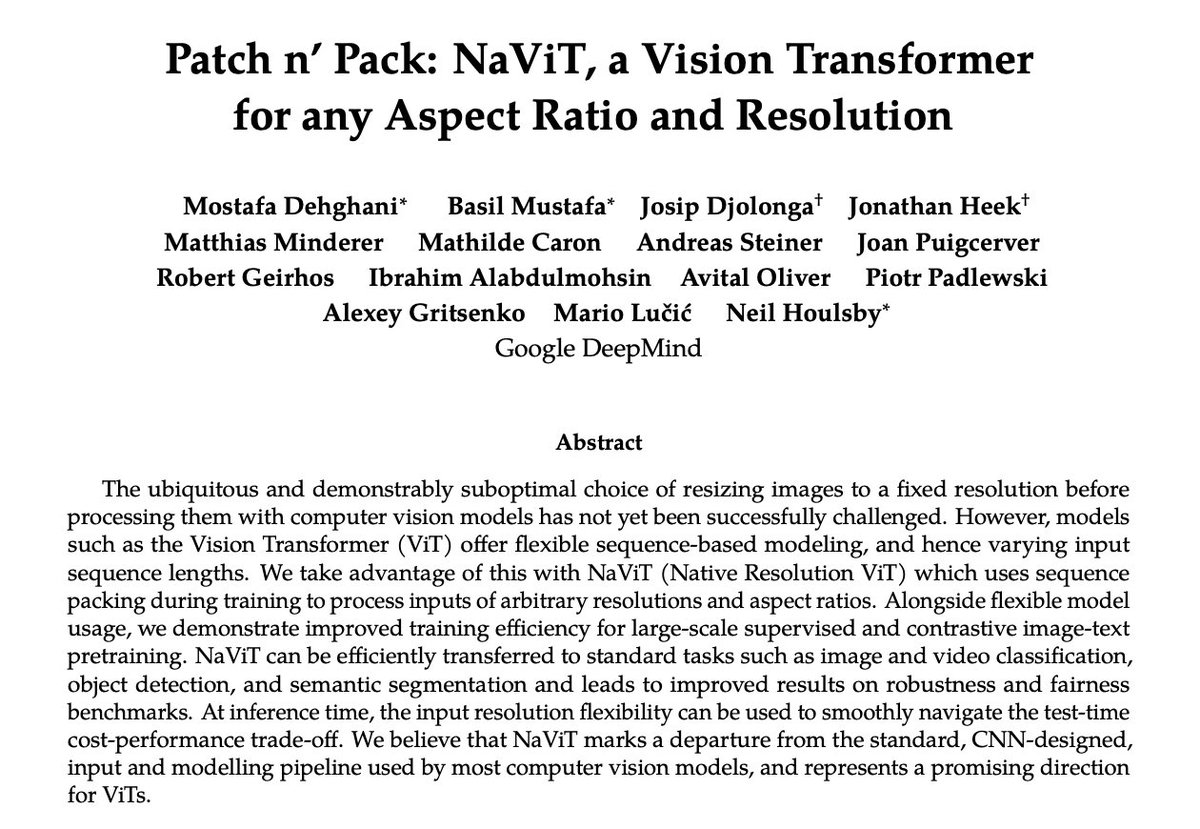

1/ Excited to share "Patch n' Pack: NaViT, a Vision Transformer for any Aspect Ratio and Resolution". NaViT breaks away from the CNN-designed input and modeling pipeline, sets a new course for ViTs, and opens up exciting possibilities in their development. arxiv.org/abs/2307.06304

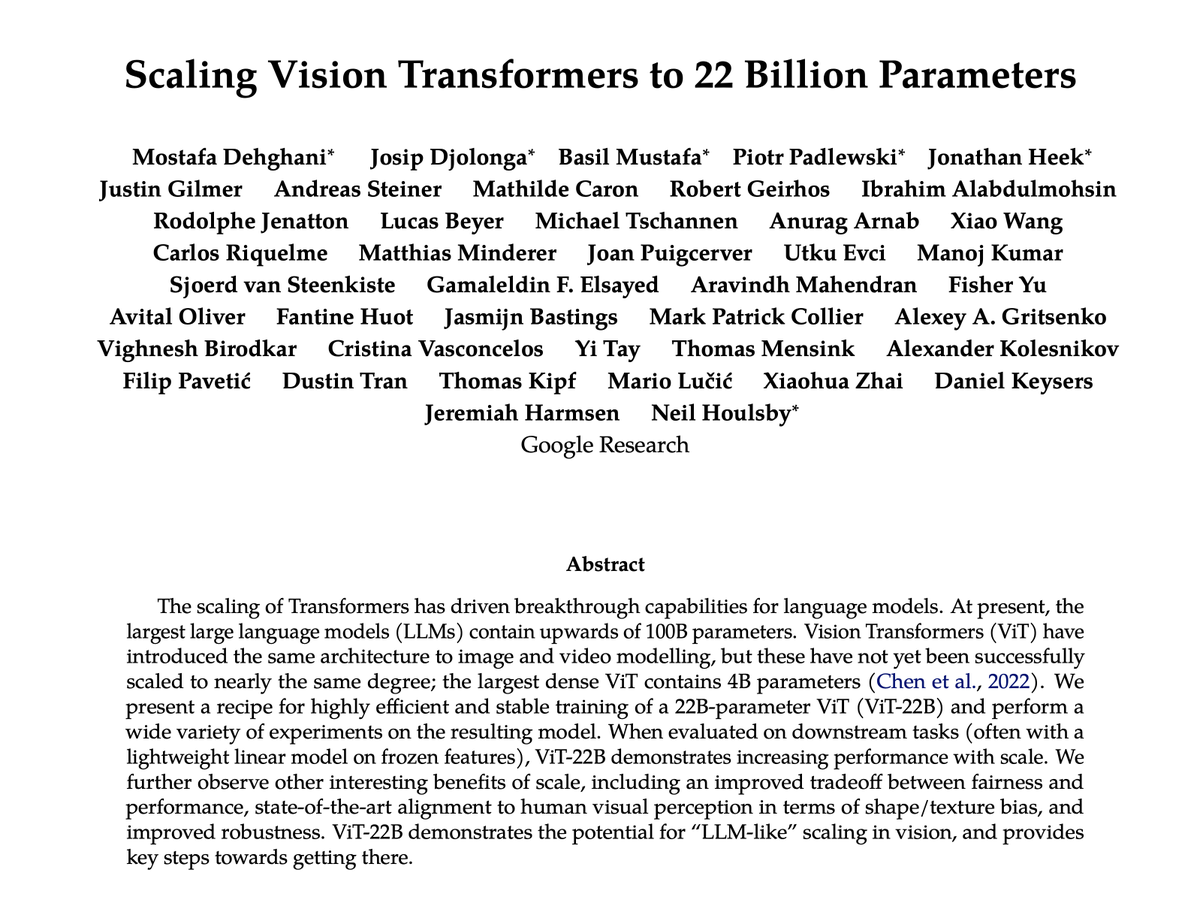

Really exciting progress on scaling Vision Transformers. Turns out being clever about applying lessons learned in language modelling leads to exceptional results in vision as well!

1/ Today we are excited to introduce Phenaki: phenaki.github.io, short-link-to-paper, a model for generating videos from text, with prompts that can change over time, and that is able to generate videos that can be as long as multiple minutes!

Excited to announce Imagen Video, our new text-conditioned video diffusion model that generates 1280x768 24fps HD videos! #ImagenVideo imagen.research.google/video/ Work w/ @wchan212 @Chitwan_Saharia @jaywhang_ @RuiqiGao @agritsenko @dpkingma @poolio @mo_norouzi @fleet_dj @TimSalimans

OWL-ViT by @GoogleAI is now available @huggingface Transformers. The model is a minimal extension of CLIP for zero-shot object detection given text queries. 🤯 🥳 It has impressive generalization capabilities and is a great first step for open-vocabulary object detection! (1/2)

Generate data in random order? A masked language model as a generative model? All this and more in "Autoregressive Diffusion Models" with @agritsenko @BastingsJasmijn @poolio @vdbergrianne @TimSalimans. For details see arxiv.org/abs/2110.02037. Some explanations below...

Proud to share Scenic, a JAX/Flax library that I helped develop and use daily myself. We put a lot of emphasis on optimising every bit of the library to make it easy to use, yet efficient - supercharging large-scale computer vision research. Check it out!

Sander Dieleman @sedielem

50K Followers 2K Following Research Scientist at Google DeepMind. I tweet about deep learning (research + software), music, generative models (personal account).

Yi Tay @YiTayML

29K Followers 97 Following chief scientist / cofounder @RekaAILabs 🫠 past: research scientist @google brain 🤯 currently learning to be a dad 🍼

Neil Houlsby @neilhoulsby

4K Followers 317 Following Professional AI researcher; amateur athlete. Senior Staff RS in the Google Deepmind, Zürich. Attempts triathlons.

Jim Fan @DrJimFan

229K Followers 3K Following @NVIDIA Sr. Research Manager & Lead of Embodied AI (GEAR Lab). Creating foundation models for Humanoid Robots & Gaming. @Stanford Ph.D. @OpenAI's first intern.

Joan Puigcerver @joapuipe

859 Followers 376 Following Software Engineer in Research at Google DeepMind, Zürich.

Nathan Benaich @nathanbenaich

51K Followers 32K Following solo member of investment staff @airstreet, brewing ambition @airstreetcafe, next token predictor @airstreetpress

Basil Mustafa @_basilM

1K Followers 130 Following researching ML @ google brain ZRH | no strong opinions about AI, but very strong opinions about why herbal infusions are awful and should not be called teas

Thomas Wolf @Thom_Wolf

68K Followers 4K Following Co-founder and CSO @HuggingFace - open-source and open-science

Thomas Kipf @tkipf

25K Followers 1K Following AI Research at @GoogleDeepMind. Ex-Physicist. Graph Neural Networks & Controllable Generative Models (e.g. GCNs, Structured World Models, Slot Attention).

Colin Raffel @colinraffel

30K Followers 654 Following nonbayesian parameterics, sweet lessons, and random birds. Friend of @srush_nlp

Zachary Nado @zacharynado

5K Followers 648 Following Research engineer @googlebrain. Past: software intern @SpaceX, ugrad researcher in @tserre lab @BrownUniversity. All opinions my own.

Jeremiah Harmsen @JeremiahHarmsen

1K Followers 488 Following Creator of #TensorFlowHub and @TensorFlow Serving. Lead in Google Brain.

_5_2Hertz @2hertz553435

5 Followers 942 Following

Hector Garcia Rodrigu.. @hector_grhv

78 Followers 688 Following Research @Huawei . Previously Amazon, UCL.

Peter Chen @peterxichen

3K Followers 1K Following Covariant CEO and Co-Founder. Previously @OpenAI, @UCBerkeley PhD.

Otniel-Bogdan Mercea @MerceaOtniel

45 Followers 431 Following PhD @MPI_IS,@uni_tue,@HelmholtzMunich🇩🇪 Prev 2 x @Google🇫🇷,@EdinburghUni🇬🇧,@everseen🇷🇴 Multi-modal efficient learning

appbootup35412 @appbootup352277

9 Followers 587 Following

Imad Khwaja @flyingblackswan

164 Followers 2K Following SaaS Growth || SEO Marketing Agency || Entrepreneur

Tech2You @tech_2you

40 Followers 200 Following En tech2You de Ai2You acercamos al publico en general todo el potencial de la Inteligencia artificial, el software libre y la automatización, IA para HUMANOS.

Reuven Peretz @peretz_reuven

59 Followers 93 Following

Dmitrijs Balabka 🇱.. @dmitrybalabka

167 Followers 951 Following MSc in CS, Machine Learning Engineer with +10 years of Software Engineering @ Ecentria Group, US e-commerce

김수빈 @ksb21st

6 Followers 317 Following

quietsmile @quietsmile_hk

16 Followers 823 Following

Daniel Furrer @assimil8or

77 Followers 229 Following Software Engineer, Tech Lead Manager @Google Core ML

Anastasija Ilic @anastasija56572

6 Followers 9 Following Research Engineer @GoogleDeepMind | MEng @Cambridge_Uni

Ioana Bica @IoanaBica95

2K Followers 714 Following Research Scientist @GoogleDeepMind | Board of Directors @WiMLworkshop | Ex PhD Student @UniofOxford,@turinginst and @cambridge_cl BA & MPhil

Darko @Darko1521056

279 Followers 4K Following

Abdullah 🌧️🖤 @abdulla90674184

54 Followers 605 Following None are more hopelessly enslaved than those who falsely believe they are free.

Mehul Jain @_mehul_jain

766 Followers 1K Following Bootstrapped my AI consulting firm to $2 Million ARR. Tweets about #Tech, #AI/ML and #ProductDevelopment. CTO @aidetic_in. Building @fabrichq_ai.

Michael @Wagieacc

334 Followers 2K Following

Martin Shkreli (e/acc.. @wagieeacc

99K Followers 8K Following despite all my ragie I'm still just a wagie in a cagie working on DL Software: https://t.co/FVn3NRNrLe https://t.co/CgaoMfhUHd

FENG Yang @fy2598099

7 Followers 177 Following

Abdoulaye Diack @A__Diack

3K Followers 2K Following AI/ML Program Management @ Google Research. ex Google Brain. Speaker (EN/FR) Opinions are my own. He/His. https://t.co/RFzicyHPhR

christian scheier @chris_scheier

137 Followers 2K Following

David @DavidSHolz

53K Followers 5K Following founder @midjourney, prev founder leap motion, nasa, max planck

Gokul @gokstudio

1K Followers 5K Following ML Engineer @Apple, ex-IBM Research. Ex Intern, Google Research. MSc CS at @ETH. Views my own, Retweet != Endorsement.

Aldizi @az_mtl

415 Followers 728 Following Applied ML Research @Mila_Quebec with BEng. and MASc. in biomed. eng. + neuroscience + deep learning. @polymtl alum. EN/FR. He/him. Views are my own. 🇨🇦

Michael Baumgartner @mibaumgartner_

211 Followers 743 Following PhD Student @mic_dkfz working on Medical Object Detection, Previously @RWTH

Cristóbal Alcázar @vamos_alcazar

370 Followers 4K Following 🌵Pura palabrería | @huggingface 🤗 Student Ambassador | MSc in Data Science student at Universidad de Chile | @acalonia_x

Thomas @TH_Wars

17 Followers 103 Following

!(NoRisk) @risk_generator

78 Followers 1K Following insecure underachiever. margin called since 2007. hft & short-term strats, NN/ML(non critical path). python/c++

Nino Scherrer @ninoscherrer

586 Followers 2K Following Interested in rigorous LLM evals, synthetic data, causality, robustness & AI safety/ethics | RS @PatronusAI | Prev: @VectorInst, @Mila_Quebec, @MPI_IS, @ETH_en

Pim Van den Bosch @PimVandenBosch2

25 Followers 263 Following

YoussAnon - ⵢⵓⵙ.. @MachineLearnerY

467 Followers 3K Following ⵣ/🇲🇦 In a world full of machines, the biggest act of resistance is to be human.

Jatin Arutla @JatinArutla

568 Followers 1K Following 23. MSc Artificial Intelligence at the University of Birmingham. FPL 2016/17 OR: 9th.

Rohit Karki @RohitKa46660754

138 Followers 4K Following

Yann LeCun @ylecun

710K Followers 718 Following Professor at NYU. Chief AI Scientist at Meta. Researcher in AI, Machine Learning, Robotics, etc. ACM Turing Award Laureate.

Sander Dieleman @sedielem

50K Followers 2K Following Research Scientist at Google DeepMind. I tweet about deep learning (research + software), music, generative models (personal account).

Andrej Karpathy @karpathy

978K Followers 904 Following 🧑🍳. Previously Director of AI @ Tesla, founding team @ OpenAI, CS231n/PhD @ Stanford. I like to train large deep neural nets 🧠🤖💥

Sara Hooker @sarahookr

39K Followers 7K Following I lead @CohereForAI. Formerly Research @Google Brain @GoogleDeepmind. ML Efficiency at scale, LLMs, @trustworthy_ml. Changing spaces where breakthroughs happen.

Yi Tay @YiTayML

29K Followers 97 Following chief scientist / cofounder @RekaAILabs 🫠 past: research scientist @google brain 🤯 currently learning to be a dad 🍼

Sindy Löwe @sindy_loewe

3K Followers 361 Following PhD Student with @WellingMax at the University of Amsterdam. Deep Learning with Structured Representations.

Ben Poole @poolio

17K Followers 1K Following research scientist at google brain. phd in neural nonsense from stanford.

Jeff Dean (@🏡) @JeffDean

296K Followers 6K Following Chief Scientist, Google DeepMind and Google Research. Co-designer/implementor of things like @TensorFlow, MapReduce, Bigtable, Spanner, Gemini .. (he/him)

Google AI @GoogleAI

2.2M Followers 23 Following Google AI is focused on bringing the benefits of AI to everyone. In conducting and applying our research, we advance the state-of-the-art in many domains.

Kyunghyun Cho @kchonyc

61K Followers 2K Following a combination of a mediocre scientist, a mediocre manager, a mediocre advisor & a mediocre PC at @nyuniversity (@CILVRatNYU) & @genentech (@PrescientDesign).

Lucas Beyer (bl16) @giffmana

56K Followers 444 Following Researcher (Google DeepMind/Brain in Zürich, ex-RWTH Aachen), Gamer, Hacker, Belgian. Mostly gave up trying mastodon as [email protected]

Neil Houlsby @neilhoulsby

4K Followers 317 Following Professional AI researcher; amateur athlete. Senior Staff RS in the Google Deepmind, Zürich. Attempts triathlons.

Joan Puigcerver @joapuipe

859 Followers 376 Following Software Engineer in Research at Google DeepMind, Zürich.

(((ل()(ل() 'yoav))).. @yoavgo

46K Followers 2K Following

Basil Mustafa @_basilM

1K Followers 130 Following researching ML @ google brain ZRH | no strong opinions about AI, but very strong opinions about why herbal infusions are awful and should not be called teas

Ferenc Huszár @fhuszar

40K Followers 1K Following Secular Bayesian. Associate Professor in Machine Learning @Cambridge_CL. Talent aficionado at https://t.co/RbJkoLguey Alum of @Twitter, Magic Pony and @Balderton

Google DeepMind @GoogleDeepMind

942K Followers 275 Following We’re a team of scientists, engineers, ethicists and more, committed to solving intelligence, to advance science and benefit humanity.

Max Welling @wellingmax

32K Followers 428 Following

Thomas Wolf @Thom_Wolf

68K Followers 4K Following Co-founder and CSO @HuggingFace - open-source and open-science

Ioana Bica @IoanaBica95

2K Followers 714 Following Research Scientist @GoogleDeepMind | Board of Directors @WiMLworkshop | Ex PhD Student @UniofOxford,@turinginst and @cambridge_cl BA & MPhil

Christine Anyansi @christinanyansi

19 Followers 20 Following phd student in bioinformatics... is the future here yet?

Piotr Padlewski @PiotrPadlewski

1K Followers 319 Following Chief Meme Officer @ https://t.co/CtBrcKmliI, ex-Google Deepmind/Brain Zurich

Xiaohua Zhai @XiaohuaZhai

3K Followers 206 Following Senior Staff Researcher @GoogleDeepMind team in Zürich

Ibrahim Alabdulmohsin.. @ibomohsin

906 Followers 581 Following AI Research Scientist at @GoogleDeepmind

Filip Pavetić @FPavetic

42 Followers 133 Following

Mathilde Caron @mcaron31

1K Followers 27 Following Research Scientist @googIeresearch Grenoble ⛰️ Previously PhD student @Inria & @MetaAI (FAIR)

Donald J. Trump @realDonaldTrump

87.3M Followers 51 Following 45th President of the United States of America🇺🇸

Felix Raimundo @gama_search

301 Followers 320 Following Postdoc @UMassChan into cancer research, single-cell and epigenomics, previously @GoogleAI and @institut_curie @gamazeps on most platforms (he/him)

Michael Tschannen @mtschannen

1K Followers 617 Following Machine learning researcher @GoogleDeepMind. Past: @Apple, @awscloud AI, @ETH_en. Multimodal/representation learning.

Ruiqi Gao @RuiqiGao

5K Followers 512 Following Research scientist @Google DeepMind. Generative modeling, representation learning.

Chitwan Saharia @Chitwan_Saharia

3K Followers 289 Following @ideogram_ai Past: Sr. Research Scientist @GoogleAI || B. Tech, CSE, @IITBombay

Jonathan Ho @hojonathanho

4K Followers 151 Following

Hugging Face @huggingface

342K Followers 189 Following The AI community building the future. https://t.co/VkRPD0VKaZ #BlackLivesMatter #stopasianhate

Andreas Steiner @AndreasPSteiner

556 Followers 121 Following Researching #ComputerVision at #Google using JAX/Flax (https://t.co/Sz1Dg3tKwD). views are my own.

Alexander Kolesnikov @__kolesnikov__

4K Followers 158 Following Research scientist at @googledeepmind, Zürich.

Carlos Riquelme @rikelhood

2K Followers 2K Following head of language models team @StabilityAI previously researcher @GoogleBrain @Stanford @Oxford & @UAM_Madrid amateur writer at https://t.co/Qa9neIqmg8

Joelle Pineau @jpineau1

10K Followers 352 Following AI researcher. VP AI Research (FAIR), @AIatMeta. Professor of Computer Science, @mcgillu. Core academic member, @Mila_Quebec

Jelmer Cnossen @jelmer_cnossen

324 Followers 611 Following Researcher at VU Amsterdam, interested in biophysics, deep learning for biology, lab automation, Shenzhen and making hardware.

Avital Oliver @avitaloliver

2K Followers 877 Following Neural Network Plumber @OpenAI. Formerly Google Brain. Worked on https://t.co/vRUtCR2oEI. I used to think I'd become a math professor.

jörn jacobsen @jh_jacobsen

2K Followers 2K Following pushing representation learning boundaries at 🍏 prev: @VectorInst/@UofT, @bethgelab, @UvA_Amsterdam, @maxplanckpress

Olivier Bachem @OlivierBachem

3K Followers 305 Following Senior Staff Research Scientist at @GoogleDeepMind where I lead the team that built the RLHF technology used in Bard, PaLM 2, Gemini, and other Google products.

Bas Veeling @BasVeeling

1K Followers 986 Following K’Nex’ing molecules at Microsoft Research. Previously student researcher at Google Brain and PhD student at AMLab.

Colin Raffel @colinraffel

30K Followers 654 Following nonbayesian parameterics, sweet lessons, and random birds. Friend of @srush_nlp

Mario Lucic @MarioLucic_

3K Followers 148 Following Staff Research Scientist @ https://t.co/pXedOGSgT3. Gemini Video and Audio-video understanding.

Delft Aerospace Rocke.. @daretudelft

3K Followers 666 Following Official page of Delft Aerospace Rocket Engineering, the student rocketry team of the TU Delft that designs, build and flies record breaking rockets!

Shen Zhuoran @CMS_Flash

176 Followers 127 Following Pushing general intelligence via code LLMs at Stealth Startup. Ex-@GoogleAI/@Cruise/@PonyAI_tech. Alum @HKUniversity. 💎 Terran @StarCraft II.

Don Metzler @metzlerd

3K Followers 602 Following Research Scientist at Google Research. Research interests: Large Language Models, Machine Learning, Information Retrieval.

Emiel Hoogeboom @emiel_hoogeboom

2K Followers 156 Following Research Scientist at Google Brain. Formerly PhD student with @wellingmax at the Univ. of Amsterdam, Research intern at Qualcomm AI Research and Google Brain.

Colin White @crwhite_ml

990 Followers 760 Following Automating, explaining, and de-biasing neural networks. Head of Research at @abacusai. Prev @SCSatCMU

Stefano Ermon @StefanoErmon

13K Followers 362 Following Associate Professor of #computerscience @Stanford #AI #ML #Sustainability

Yang Song @DrYangSong

10K Followers 886 Following Leading the Strategic Explorations team @OpenAI. Score-Based Models. Diffusion Models. Consistency Models.

Ricky T. Q. Chen @RickyTQChen

4K Followers 809 Following Research Scientist at FAIR NY, Meta. I build simplified abstractions of the world through the lens of dynamics and flows.

Cassandra B.C. @michaeljburry

1.5M Followers 0 Following

Mohammed AlQuraishi @MoAlQuraishi

10K Followers 358 Following MLing biomolecules en route to structural systems biology. Asst Prof of Systems Biology and CS @Columbia. Prev. @Harvard SysBio; @Stanford Genetics, Stats.

@timnitGebru@dair-com.. @timnitGebru

169K Followers 3K Following she/her I am at @[email protected] via the #TwitterMigration. DAIR's Mastodon account is at [email protected]

Shimon Whiteson @shimon8282

15K Followers 404 Following Professor of Computer Science at Oxford. Head of Research at Waymo UK.

Excited by the generality of CLIP, but need more fine-grained details in your representation? Introducing SPARC, a simple and scalable method for pretraining multimodal representations with fine-grained information. 🥳 1/6

Another computer vision banger made in Zürich (not by me, but my colleagues @MJLM3 @agritsenko @neilhoulsby)

Excited to share that @Google's OWLv2 model is now available in 🤗 Transformers! This model is one of the strongest zero-shot object detection models out there, improving upon OWL-ViT v1 which was released last year🔥 How? By self-training on web-scale data of over 1B examples⬇️

@neilhoulsby @m__dehghani @_basilM @JonathanHeek @MJLM3 @mcaron31 @AndreasPSteiner @joapuipe @ibomohsin @avitaloliver @PiotrPadlewski I really think you're underselling the third chart. The x axis is logarithmic, these are very serious efficiency gains for applications. Amazing job

ViT is overdue a modern LM-style input pipeline to exploit Transformer's flexibility to combine inputs. NaViT (Native ViT) trains on sequences of images of arbitrary resolutions and aspect ratios. There is lots to unpack, see Mostafa's thread! A feature that I particularly…

Thorough investigation by @agritsenko on position embeddings for NaViT. An important component to get right to enable good generalisation to new resolutions and aspect ratios.

12/ With NaViT, you have so many knobs to control the compute<>quality tradeoff, both during training and inference: x.com/piotrpadlewski…

11/ NaViT enables variable "per example" token dropping. This is cool as it allows you to intelligently tailor your token dropping strategy to match the properties of the input, e.g., drop more tokens for high-res images and fewer for low-res ones. x.com/joapuipe/statu…

10/ NaViT paves the way to fully resolution-agnostic models. A component that demands special care in this context is positional embedding. We thoroughly investigated various aspects to find a setup that effectively generalizes to unforeseen resolutions. x.com/agritsenko/sta…

4/4 We found that, in general, factorised embeddings (when x and y are combined additively) greatly improve the handling of variable aspect ratios. Fractional Fourier positional embedding excels at generalising to unseen resolutions.

3/4 (2) Embedding type: Fixed (sinusoidal), learned, and parametric (Fourier) embeddings. (3) Factorization: Should x and y coordinates be embedded independently or jointly? When embedding independently, we can combine embeddings by addition or stacking.

2/4 For NaViT, we delved into various dimensions of exploration: (1) Coordinate reflection: Absolute, where embeddings depend on the absolute patch index, or Fractional, where embeddings depend on the relative distance across the image.

1/4 To be truly resolution-agnostic, we must be clever with the positional embedding in NaViT. The original ViT uses 1D/2D absolute pos. emb. that is not directly applicable to unseen resolutions. We study several ideas to find a setup that best generalises to new resolutions.
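
A rough sketch of the idea in this thread: embed fractional (relative) x and y coordinates independently and combine them additively. For simplicity it uses fixed sin/cos features rather than the learned Fourier embeddings the thread refers to. The function names, the frequency ladder, and the per-axis phase offset (a stand-in for separate per-axis parameters) are all assumptions made for illustration.

```python
import math

def fractional_coords(n):
    """Patch-centre positions along one axis, normalised to the range (0, 1)."""
    return [(i + 0.5) / n for i in range(n)]

def axis_embedding(frac, dim, phase=0.0):
    """Fixed sin/cos features of a fractional coordinate (dim is assumed even)."""
    feats = []
    for k in range(dim // 2):
        freq = math.pi * (2 ** k)  # assumed geometric frequency ladder
        feats += [math.sin(freq * frac + phase), math.cos(freq * frac + phase)]
    return feats

def factorised_pos_emb(rows, cols, dim):
    """One embedding per patch in row-major order: emb_y(row) + emb_x(col)."""
    ys = [axis_embedding(f, dim) for f in fractional_coords(rows)]
    # The phase shift keeps x and y embeddings distinct in this toy version.
    xs = [axis_embedding(f, dim, phase=math.pi / 4) for f in fractional_coords(cols)]
    return [[a + b for a, b in zip(y, x)] for y in ys for x in xs]

emb = factorised_pos_emb(rows=2, cols=3, dim=8)
print(len(emb), len(emb[0]))
```

Because the coordinates are fractional, a patch at the same relative location gets the same embedding at any grid size, which is the property that helps with unseen resolutions. Because the axes are factorised, non-square grids need no special handling.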

Really excited to share NaViT - our latest work on *efficiently* extending ViTs to handle variable resolution and variable aspect ratio images. We're bringing back one of the cool features of ResNets - the ability to apply them to different image sizes at train and test time.

Patch n' Pack: NaViT, a Vision Transformer for any Aspect Ratio and Resolution paper page: huggingface.co/papers/2307.06… The ubiquitous and demonstrably suboptimal choice of resizing images to a fixed resolution before processing them with computer vision models has not yet been…

While this is super-useful for scalable pre-training, it is not the most effective for smaller-scale from-scratch training. See Piotr's comment: x.com/piotrpadlewski…

Another method of reducing compute is dropping random tokens. This turned out to be a much worse strategy, as the quality drops much faster.

(2) We can also change the drop rate as a function of the step number. In particular, decreasing the drop rate over time improves performance (but increasing it hurts!).

We explore two options which are trivial to handle by NaViT, since sequence packing leaves the final sequence length unaffected. (1) A resolution-dependent sampled drop rate outperforms fixed dropping across all rates (and only becomes worse than no dropping at very high rates).

We explore several ways to accelerate the training in the paper: Token dropping is a nice trick to reduce the training cost of ViT models (or get better models for the same cost). With NaViT one can take it several steps further!
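
The two token-dropping variants described in this thread can be sketched as follows. This is a minimal illustration under stated assumptions: the linear decay, the proportional scaling with token count, and all names and default values are hypothetical. The thread mentions a sampled resolution-dependent rate, whereas this version is deterministic for clarity.

```python
import random

def scheduled_drop_rate(step, total_steps, start=0.5, end=0.1):
    """Assumed linear decay of the drop rate from `start` to `end` over training."""
    t = min(max(step / total_steps, 0.0), 1.0)
    return start + (end - start) * t

def resolution_drop_rate(num_tokens, base_rate, ref_tokens=196, max_rate=0.9):
    """Assumed rule: drop proportionally more tokens from larger inputs, capped."""
    return min(max_rate, base_rate * num_tokens / ref_tokens)

def drop_tokens(tokens, rate, rng):
    """Keep a random subset of tokens, preserving their original order."""
    keep = max(1, round(len(tokens) * (1.0 - rate)))
    kept_idx = sorted(rng.sample(range(len(tokens)), keep))
    return [tokens[i] for i in kept_idx]

rng = random.Random(0)
rate = resolution_drop_rate(num_tokens=392, base_rate=scheduled_drop_rate(0, 1000) / 2)
kept = drop_tokens(list(range(392)), rate, rng)
print(rate, len(kept))
```

Since each example ends up with its own length after dropping, this only fits naturally into a pipeline that packs variable-length sequences, which is the point the thread makes about NaViT.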
