Generating data in a random order? A masked language model as a generative model? All this and more in "Autoregressive Diffusion Models" with @agritsenko @BastingsJasmijn @poolio @vdbergrianne @TimSalimans. For details see arxiv.org/abs/2110.02037. Some explanations below...

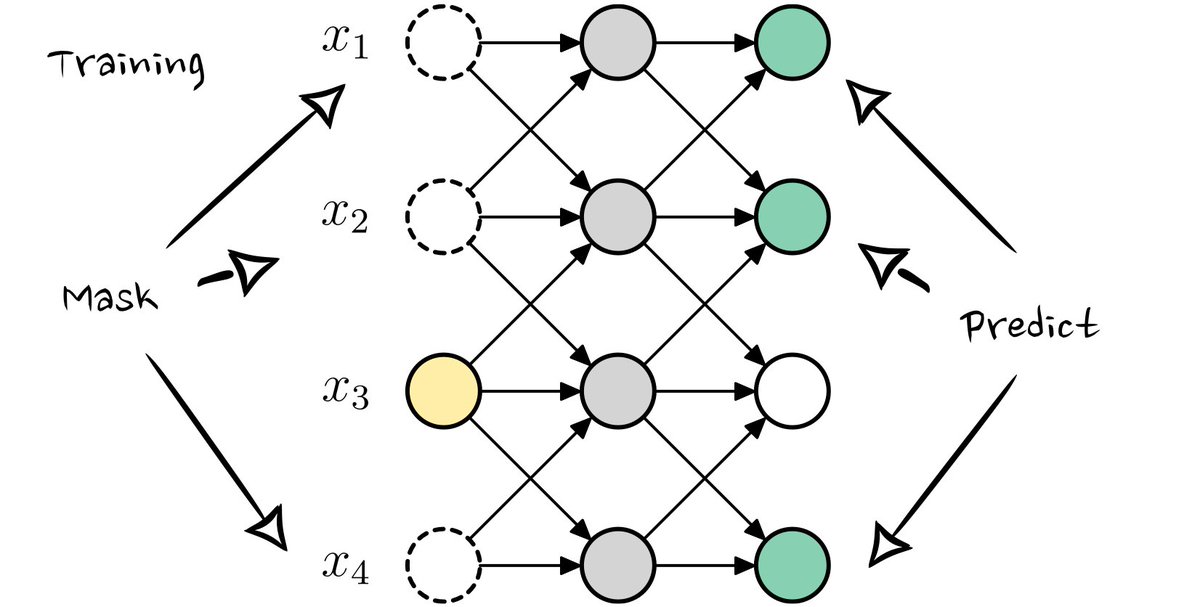

Training of Autoregressive Diffusion Models (ARDMs). In their basic form, they are trained like a masked language model, except that the _number_ of masked variables also varies. At each training step, some variables are masked, and the model predicts them from the remaining ones.
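A minimal NumPy sketch of one such training step, under stated assumptions: `toy_model` is a hypothetical stand-in (a uniform predictor) for the real network, masked inputs are set to a placeholder value of 0, and the reweighting (average over masked positions, scaled by the number of variables) is my reading of the objective rather than something stated in this thread. Check the paper for the exact loss.

```python
import numpy as np

def sample_mask(num_vars, rng):
    """Pick a random order and a step t; everything from step t onward is masked."""
    order = rng.permutation(num_vars)
    t = rng.integers(1, num_vars + 1)   # t is uniform over 1..num_vars
    masked = np.ones(num_vars, dtype=bool)
    masked[order[: t - 1]] = False      # the first t-1 variables in the order stay visible
    return masked, t

def toy_model(x_in, masked, num_classes):
    """Hypothetical placeholder network: uniform log-probabilities for every variable."""
    return np.full((x_in.shape[0], num_classes), -np.log(num_classes))

def ardm_loss(x, num_classes, rng):
    """One stochastic loss estimate: predict the masked variables from the visible ones."""
    num_vars = x.shape[0]
    masked, _ = sample_mask(num_vars, rng)
    x_in = np.where(masked, 0, x)       # assumption: masked inputs are replaced by 0
    log_probs = toy_model(x_in, masked, num_classes)
    log_lik = log_probs[np.arange(num_vars), x]
    # Assumed reweighting: mean over masked positions, scaled by the number of variables.
    return -num_vars * log_lik[masked].mean()
```

Because the number of masked variables changes from step to step, the same network sees every "fill level" from fully masked to almost fully observed.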

Sampling takes multiple steps. First, a random generation order is picked. Then, for one variable at a time, the model does a forward pass and samples a value. Each sampled value is filled in as input for the next forward pass.
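The sampling loop can be sketched roughly as follows. This is an illustration only: `toy_model` is a hypothetical uniform predictor standing in for the trained network, and the `observed` array is just one assumed way of telling the model which positions are already filled in.

```python
import numpy as np

def toy_model(x, observed, num_classes):
    """Hypothetical placeholder network: uniform probabilities for every variable."""
    return np.full((x.shape[0], num_classes), 1.0 / num_classes)

def ardm_sample(num_vars, num_classes, rng):
    order = rng.permutation(num_vars)            # random generation order
    x = np.zeros(num_vars, dtype=np.int64)       # unfilled positions hold a placeholder 0
    observed = np.zeros(num_vars, dtype=bool)
    for idx in order:
        probs = toy_model(x, observed, num_classes)   # one forward pass per variable
        x[idx] = rng.choice(num_classes, p=probs[idx])
        observed[idx] = True                     # the sampled value is input to the next pass
    return x
```

For example, `ardm_sample(16, 4, np.random.default_rng(0))` runs 16 forward passes and returns 16 values in `{0, 1, 2, 3}`.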

Extensions include parallelization (using multiple predictions at once) and generation in stages (predicting more significant bits first).
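To illustrate the parallelization idea, here is a sketch in which several variables are sampled from a single forward pass. The fixed `chunk_size` is an assumption made for brevity and is not how the step budget is chosen in the paper; `toy_model` is again a hypothetical uniform predictor.

```python
import numpy as np

def toy_model(x, observed, num_classes):
    """Hypothetical placeholder network: uniform probabilities for every variable."""
    return np.full((x.shape[0], num_classes), 1.0 / num_classes)

def ardm_sample_parallel(num_vars, num_classes, chunk_size, rng):
    order = rng.permutation(num_vars)
    x = np.zeros(num_vars, dtype=np.int64)
    observed = np.zeros(num_vars, dtype=bool)
    num_passes = 0
    for start in range(0, num_vars, chunk_size):
        chunk = order[start:start + chunk_size]
        probs = toy_model(x, observed, num_classes)   # one forward pass per chunk
        num_passes += 1
        for idx in chunk:
            # All variables in the chunk use predictions from the same pass,
            # so they are sampled independently of each other.
            x[idx] = rng.choice(num_classes, p=probs[idx])
        observed[chunk] = True
    return x, num_passes
```

With 10 variables and `chunk_size=4`, this takes 3 forward passes instead of 10, at the cost of ignoring dependencies between variables sampled in the same chunk.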

@emiel_hoogeboom Sounds very similar to the mask-predict non-autoregressive decoding algorithm