Generate data in random order? A masked language model as a generative model? All this and more in "Autoregressive Diffusion Models" with @agritsenko @BastingsJasmijn @poolio @vdbergrianne @TimSalimans. For details see arxiv.org/abs/2110.02037. Some explanations below...

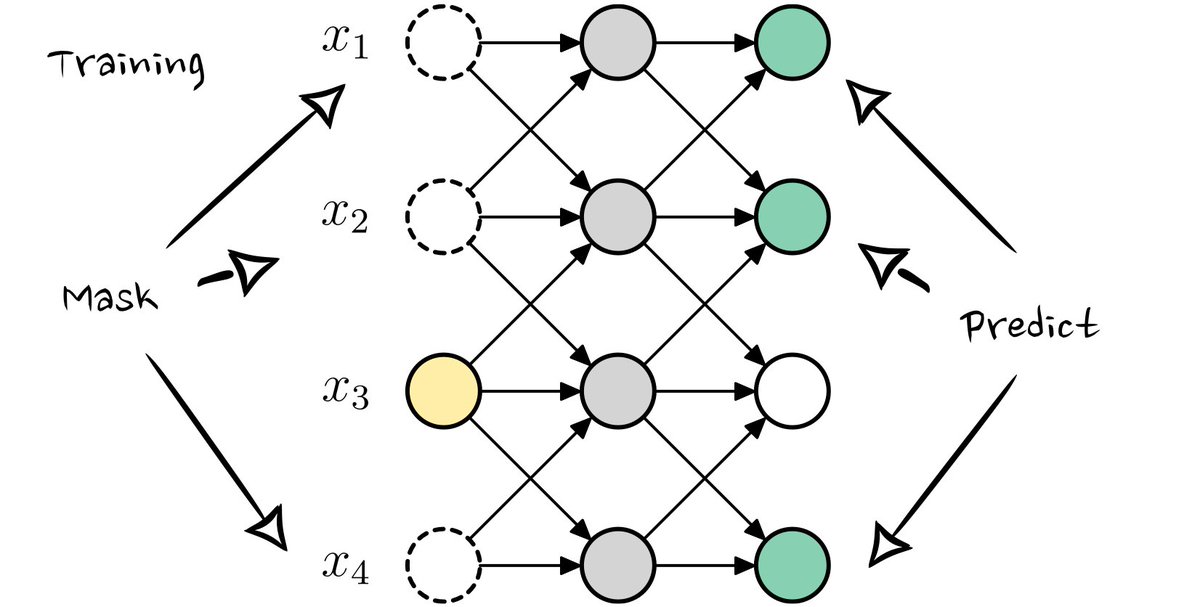

Training of Autoregressive Diffusion Models (ARDMs). In the basic form, they are trained like a masked language model, but where the _number_ of masked variables also varies. At each training step, some variables are masked and then predicted from the remaining ones.
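To make the training step concrete, here is a minimal NumPy sketch of one loss evaluation. It is an illustration under assumptions, not the paper's code: `uniform_model` is a hypothetical stand-in for the network, `ardm_training_loss` is a made-up name, and masked inputs are simply set to 0 with the mask passed alongside.

```python
import numpy as np

def uniform_model(x_in, observed, num_classes):
    """Hypothetical stand-in for the network: uniform log-probs at every position."""
    d = x_in.shape[0]
    return np.full((d, num_classes), -np.log(num_classes))

def ardm_training_loss(x, model, num_classes, rng):
    """One stochastic estimate of the negative log-likelihood bound for a single datapoint."""
    d = x.shape[0]
    t = rng.integers(1, d + 1)            # step index, uniform in 1..D
    rank = rng.permutation(d)             # rank[i] = place of position i in the random order
    observed = rank < t - 1               # the t-1 positions generated before step t
    x_in = np.where(observed, x, 0)       # masked positions get placeholder 0 (assumption)
    log_probs = model(x_in, observed, num_classes)   # shape (D, K)
    ll = log_probs[np.arange(d), x]       # log p(x_k | observed variables) for every k
    masked_ll = ll[~observed].sum()       # only the D-t+1 masked positions contribute
    return -d / (d - t + 1) * masked_ll   # reweight so the estimate covers all D steps

rng = np.random.default_rng(0)
x = rng.integers(0, 4, size=8)            # toy datapoint: D=8 variables, K=4 classes
loss = ardm_training_loss(x, uniform_model, 4, rng)
```

With the uniform stand-in, the loss comes out as D·log K regardless of which `t` is drawn, which is a handy sanity check on the reweighting.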

Sampling takes multiple steps. First a random generation order is picked. Then, one variable at a time, the model does a forward pass and samples a value. Each sampled value is filled in for the next forward pass.
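The sampling loop described above can be sketched like this. Again a rough illustration: `uniform_model` is a hypothetical placeholder for a trained network, and `ardm_sample` is a name chosen here, not one from the paper.

```python
import numpy as np

def uniform_model(x_in, observed, num_classes):
    """Hypothetical stand-in for a trained network: uniform log-probs everywhere."""
    return np.full((x_in.shape[0], num_classes), -np.log(num_classes))

def ardm_sample(model, d, num_classes, rng):
    """Sample D variables one at a time, in a random order."""
    x = np.zeros(d, dtype=np.int64)        # placeholder values for not-yet-generated positions
    observed = np.zeros(d, dtype=bool)     # nothing has been generated yet
    order = rng.permutation(d)             # order[i] = position generated at step i
    for pos in order:
        log_probs = model(x, observed, num_classes)  # one forward pass per variable
        probs = np.exp(log_probs[pos])
        probs /= probs.sum()               # guard against small numerical drift
        x[pos] = rng.choice(num_classes, p=probs)
        observed[pos] = True               # the sampled value is visible to the next pass
    return x, order

x, order = ardm_sample(uniform_model, d=6, num_classes=3, rng=np.random.default_rng(0))
```

Forcing a specific order, such as a raster scan, would amount to replacing `rng.permutation(d)` with `np.arange(d)`.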

Extensions include parallelization (using multiple predictions at once) and generation in stages (predicting more significant bits first).
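A rough sketch of the parallelization idea: reuse a single forward pass to sample several positions at once. Note the simplification: this uses fixed-size chunks, which is an assumption made here for brevity and not necessarily how the paper allocates steps. `uniform_model` and `ardm_sample_parallel` are hypothetical names.

```python
import numpy as np

def uniform_model(x_in, observed, num_classes):
    """Hypothetical stand-in for a trained network: uniform log-probs everywhere."""
    return np.full((x_in.shape[0], num_classes), -np.log(num_classes))

def ardm_sample_parallel(model, d, num_classes, chunk_size, rng):
    """Sample D variables in a random order, several per forward pass."""
    x = np.zeros(d, dtype=np.int64)
    observed = np.zeros(d, dtype=bool)
    order = rng.permutation(d)
    n_passes = 0
    for start in range(0, d, chunk_size):
        chunk = order[start:start + chunk_size]
        log_probs = model(x, observed, num_classes)  # one pass shared by the whole chunk
        n_passes += 1
        for pos in chunk:
            probs = np.exp(log_probs[pos])
            probs /= probs.sum()
            # positions within a chunk are sampled independently given the observed ones
            x[pos] = rng.choice(num_classes, p=probs)
        observed[chunk] = True
    return x, n_passes

x, n_passes = ardm_sample_parallel(uniform_model, d=10, num_classes=3,
                                   chunk_size=4, rng=np.random.default_rng(0))
```

The trade-off is that variables inside a chunk no longer condition on each other, so larger chunks buy fewer forward passes at some cost in sample quality and likelihood.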

ARDMs are conceptually very interesting as well. Their basic form lies at the intersection of "order agnostic ARMs" and "absorbing discrete diffusion". In fact, the _continuous-time limit_ of absorbing diffusion turns out to be an order agnostic ARM.

When sampling with random orders (which is what it's trained on), the model works well as seen above. An interesting failure case is when we force a very specific order, like a raster-scan. Technically it's one of the D! permutations, but a very atypical one -> the model is very confused😂

A cool application is lossless compression with only a limited number of encoding/decoding forward passes (thanks to the parallelization). Compared to (continuous) latent variable models, compression "per image" is a lot better.

Very interested to see if this works well in further downstream extensions and applications like inpainting, de-corruption, compression.

Also, for a very nice explanation of the method see this video by @ykilcher youtube.com/watch?v=2h4tRs…