5b same thing again: focus model changes to those that keep the same capacity (~params for Transformer MLMs with fixed seqlen) but speed things up. It's a shame that vast majority of papers (including sometimes mine) completely ignore reporting wall-clock speed or slowdowns.

5c changes include: - SA: remove biases, many variants tried, none kept. - MLP: remove biases, make gated, nothing else. - Scaled sin embedding + LN - pre-norm helps, but only when increasing LR - In the head, MLP can be dropped (same with ViT). - Again: gray text interesting!

6a/N training - Stick to the simplest MLM objective - Optimizer: Adam. No win from fancier. - I want to point out that AdaFactor is meant to save memory but behave like Adam, so no win is a win! - They mention no win from Shampoo @_arohan_ but aren't confident it's a good impl.

6b training: lr schedule! They tried many, but this is where I disagree with the paper. Most schedules either don't warmup (-> lower peak lr!) or don't cooldown (-> 0 at the end). The only two that work clearly better than the rest are the only two with warmup and cooldown!

addendum to 6b: the figure from my screenshot is in the appendix. Other papers have shown even pre-norm needs warmup. 6c training: - no dropout, tokendrop, or length curric. - micro-batch 96 accum into 1.5-4k, linearly increased during training. Auto-tuning looks mostly linear.

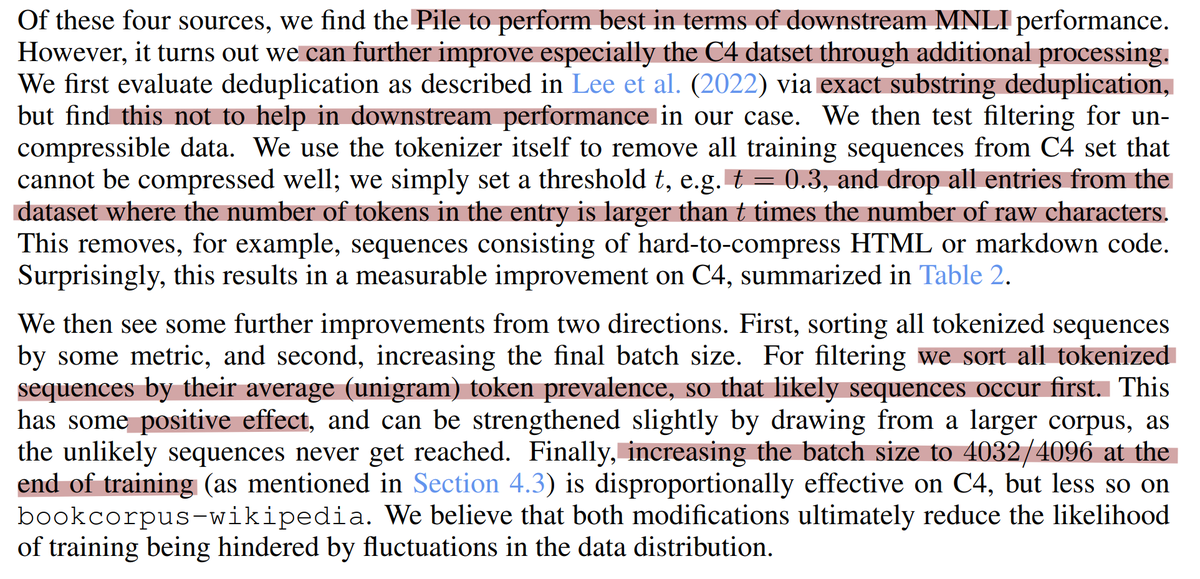

7/N data - Try pile subsets, c4, book+wiki - dedup (exact substring) not helpful - remove uncompressible data "t=0.3": keep only if ntokens < 0.3 * nchars - sort: data with fequent tokens first (think "easy/common text first") - grow batch-size at end

8 results left: overall, it's getting pretty close to original BERT which used 45-136x more total FLOPS (4d on 16 TPUs) right: and when training for 16x longer (2d on 8 GPUs), the same recipe actually improves on original BERT quite a bit, reaching RoBERTa levels of performance.

9/9 final thoughts. - I really like the "trend reversal" of seeing how much can be done with limited compute. - I am a big fan of the gray text passages for things that were tried but didn't work. - The lr sched part is fishy, but not super important. - Impressive bibliography!

PS: This thread took me almost as long as a paper review. Looks like I procrastinate my CVPR reviews by making twitter paper reviews instead ¯\_(ツ)_/¯

@giffmana I am just somewhat in between reading and skimming it. I feel this paper from my previous alma mater is less impressive than some papers in the same spirit like the ConvNeXt paper. TBH. The outcome is not very outstanding but people may enjoy training LMs with off-the-shelf HWs.

@peratham I like this one more because unlike convnext, it also mentions all the things that did *not* work, which is very valuable info. (I also like convnext)