Excited to present our work with @ashvinair and @svlevine, Offline RL with Implicit Q-Learning (IQL), a simple method that achieves SOTA performance on D4RL arxiv.org/abs/2110.06169 and works 4x faster than prior SOTA github.com/ikostrikov/imp… Thread below

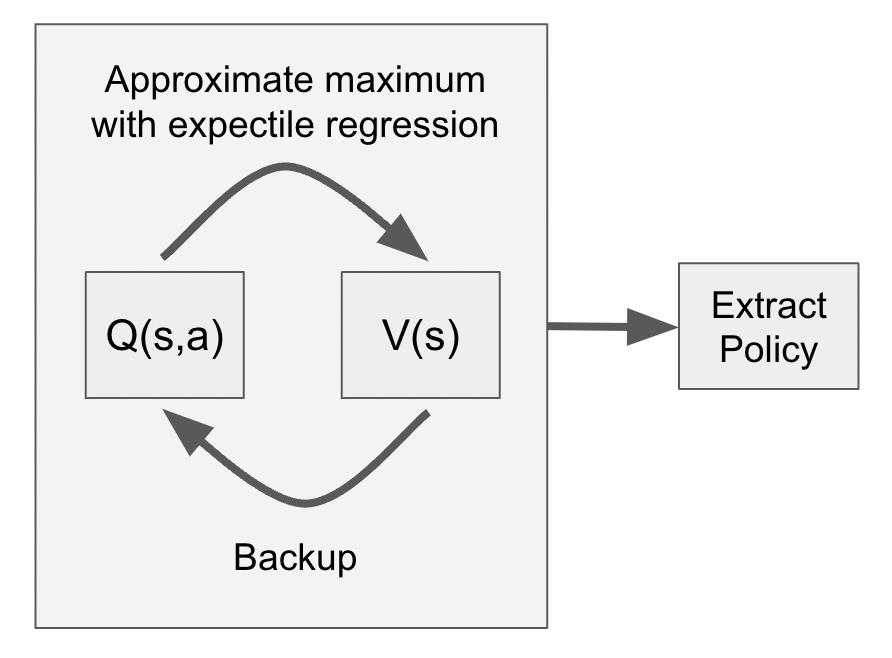

Actor-Critic algorithms can fail for offline RL when the actor outputs out-of-dataset actions for TD backups. What if we just do TD learning with the dataset actions? That is very stable, but it learns the behavior policy value function while we want the optimal value function.
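To make "TD learning with the dataset actions" concrete, here is a minimal tabular sketch. It is not the IQL code; the function name `sarsa_style_backup`, the tuple layout, and the hyperparameters are assumptions chosen for illustration. The backup target uses the next action stored in the dataset, so no out-of-dataset action is ever queried, and the table converges toward the value of the behavior policy rather than the optimal one.

```python
from collections import defaultdict


def sarsa_style_backup(transitions, gamma=0.99, lr=0.1, epochs=200):
    """Tabular TD learning that only ever evaluates dataset actions.

    transitions: list of (s, a, r, s_next, a_next, done) tuples, where
    a_next is the action the dataset actually took in s_next (hypothetical
    layout, assumed for this sketch).
    """
    q = defaultdict(float)
    for _ in range(epochs):
        for s, a, r, s_next, a_next, done in transitions:
            # Bootstrap from the logged next action, not from an actor or a max.
            target = r if done else r + gamma * q[(s_next, a_next)]
            q[(s, a)] += lr * (target - q[(s, a)])
    return q
```

Because the target never takes a max over actions, the estimate cannot be inflated by unseen actions, but it also cannot improve on the behavior policy.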

To approximate the maximum of a Q-function without training an actor, IQL instead performs expectile regression, which does not require sampling out-of-dataset actions:
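A rough sketch of the expectile idea, in plain Python rather than the released JAX code: the asymmetric squared loss |tau - 1(u < 0)| * u^2 penalizes underestimates more heavily as tau approaches 1, so the fitted value moves from the mean (tau = 0.5) toward the maximum of the samples. The helper names `expectile_loss` and `fit_expectile`, and the default tau, learning rate, and step count, are assumptions for illustration. In IQL the samples would play the role of Q-values at dataset actions and the fitted scalar the role of V(s).

```python
def expectile_loss(diff, tau=0.7):
    """Asymmetric squared loss |tau - 1(diff < 0)| * diff**2."""
    weight = tau if diff >= 0 else 1.0 - tau
    return weight * diff ** 2


def fit_expectile(samples, tau=0.7, lr=0.1, steps=2000):
    """Fit a scalar v minimizing the mean expectile loss of (x - v).

    Plain gradient descent; lr and steps are illustrative defaults.
    """
    v = sum(samples) / len(samples)  # start from the mean
    for _ in range(steps):
        grad = 0.0
        for x in samples:
            diff = x - v
            weight = tau if diff >= 0 else 1.0 - tau
            grad += -2.0 * weight * diff  # d/dv of weight * (x - v)**2
        v -= lr * grad / len(samples)
    return v
```

With tau = 0.5 this reduces to ordinary least squares and returns the sample mean; larger tau pushes the estimate toward the largest sample while still using only values that appear in the data.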