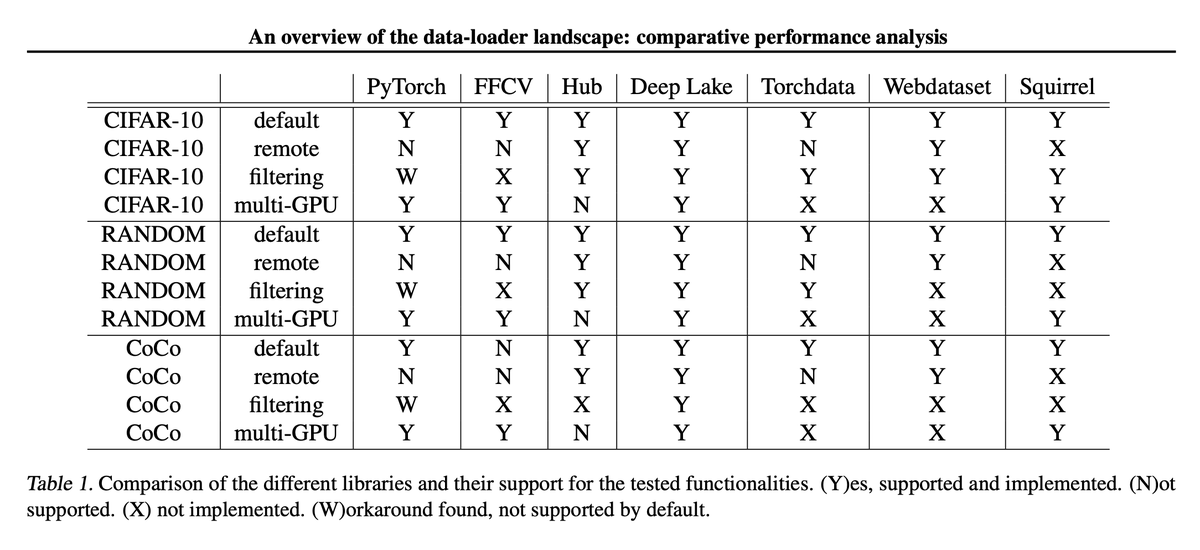

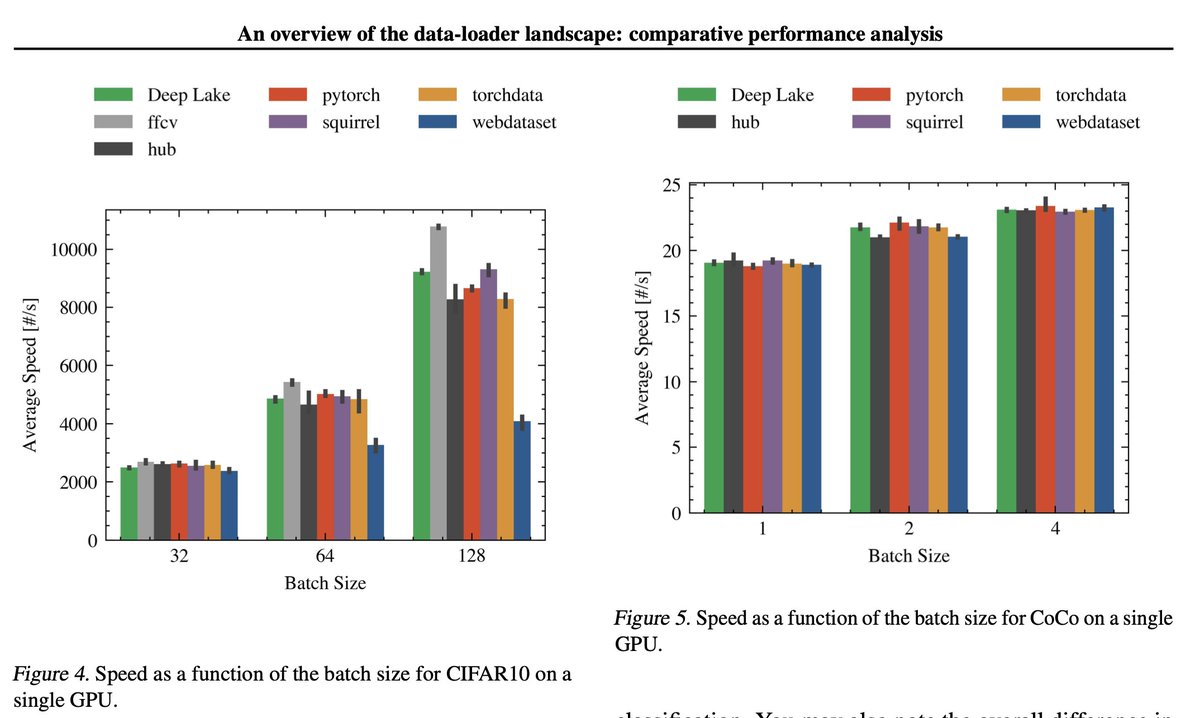

An Overview of the Data-Loader Landscape: Comparative Performance Analysis Iason Ofeidis, Diego Kiedanski, Leandros Tassiulas tl;dr: use ffcv if you can and DeepLake otherwise. arxiv.org/abs/2209.13705…

@ducha_aiki Should also have included tf.data. I hate it with a passion, but can't deny its efficiency, especially when reading data over the wire like in cloud environments.

@giffmana @ducha_aiki Yeah, definitely should compare tf.data / TFDS ... it's my default for most cloud training, esp in GCP even though I'm always using PyTorch. For other large scale training webdataset is the default.

@giffmana @ducha_aiki There appear to be issues with the analysis, and don't feel it focuses on interesting configs/scenarios. Doing any analysis of CIFAR in formats like webdataset is pretty much pointless.

@wightmanr @ducha_aiki Good point. We can actually hold all the tested datasets in RAM nowadays ¯\_(ツ)_/¯

@giffmana @wightmanr @ducha_aiki Every time I try to write some extra fancy streaming dataloader or optimized disk loading beyond the standard stuff, I realize it'd probably be cheaper to run fewer experiments on a bigger machine 😂

@marktenenholtz @giffmana @wightmanr @ducha_aiki Or back in grad school when everyone was trying to configure Spark when you could just run it on a different machine using pandas 😆

@marktenenholtz @wightmanr @ducha_aiki Yes! And the older we get, the more we value our time ;-)