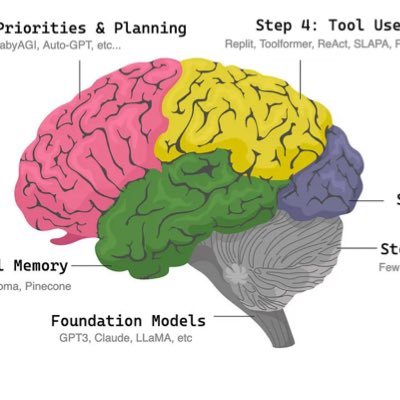

It was incredible seeing @lateinteraction in action @MIT_CSAIL with @ecardenas300!! 🔥🏛️🔥 Omar gave an amazing talk spanning all things from ColBERT to Multi-Hop Baleen RAG and DSPy! 🧠 The slide image below shares a part of the talk that has heavily resonated with us since -- on when to use which LLM optimization strategy based on model size: • 100B+, Instruction Tuning (Command R+, GPT-4 / Claude Opus / Gemini Ultra) • 7-13B, Few-Shot Examples (Llama2, Mistral 7B) • <1B, Gradient Descent (T5-Large) There is definitely some overlap here and you definitely can gradient descent tune 7B sized LLMs fairly easily, but I think this is a really nice nugget for thinking about the DSPy compilers at a high-level and maybe which one to reach for first when starting to optimize your programs based on the LLM you will be using! Aside from the technical discussion 😂, it was really amazing seeing Omar at MIT! He has put together an unbelievable dissertation and the future is bright for DSPy, ColBERT and the emerging community! Go Omar! 🚀

@CShorten30 @lateinteraction @MIT_CSAIL It was amazing to witness this live. 🤌 Congrats on all your research, Omar!

@CShorten30 @lateinteraction @MIT_CSAIL @ecardenas300 he cooked something, he deserved it, congrats omar—

@CShorten30 @lateinteraction @MIT_CSAIL @ecardenas300 Happy to see the DSPy gang together! :)

@CShorten30 @lateinteraction @MIT_CSAIL @ecardenas300 a high alpha heuristic by @lateinteraction 🧐 • 100B+, do Instruction Tuning (Command R+, GPT-4 / Claude Opus / Gemini Ultra) • 7-13B, do Few-Shot Examples (Llama2, Mistral 7B) • <1B, do Gradient Descent (T5-Large)