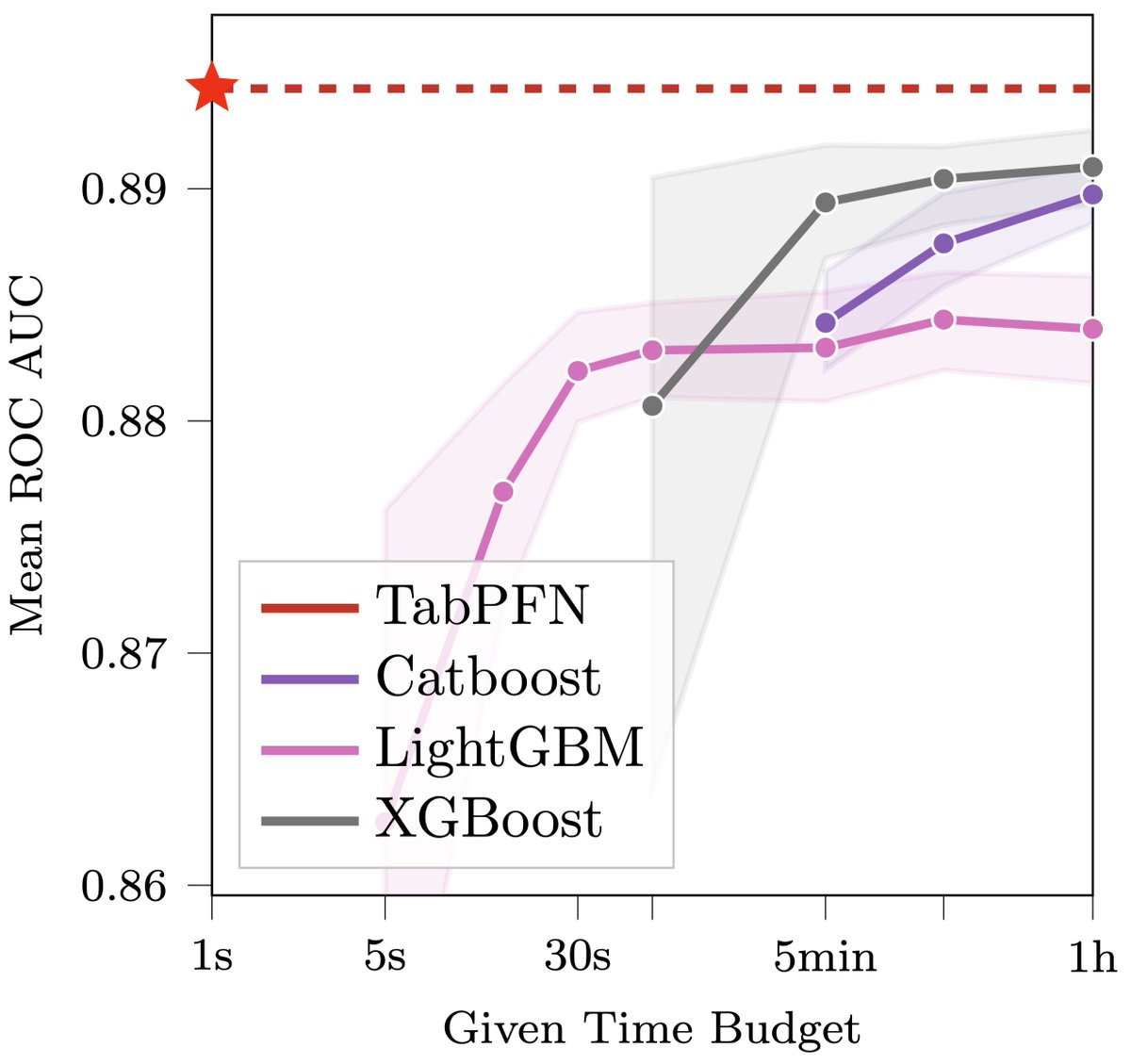

This may revolutionize data science: we introduce TabPFN, a new tabular data classification method that takes 1 second & yields SOTA performance (better than hyperparameter-optimized gradient boosting in 1h). Current limits: up to 1k data points, 100 features, 10 classes. 🧵1/6

TabPFN is radically different from previous ML methods. It is meta-learned to approximate Bayesian inference with a prior based on principles of causality and simplicity. Here's a qualitative comparison to some sklearn classifiers, showing very smooth uncertainty estimates. 2/6

If you'd like to play with TabPFNs yourself, here is a direct link to the Colab with a scikit-learn-like interface: colab.research.google.com/drive/194mCs6S…
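
For anyone who hasn't opened the Colab: a "scikit-learn-like interface" usually means `fit()`, `predict()` and `predict_proba()`. Below is a minimal sketch of how such a wrapper can look when nothing is trained on your data. It uses a hypothetical stand-in class, `InContextClassifier`, and a simple distance-weighted vote in place of a pretrained network. It is not the TabPFN API or the TabPFN algorithm; the assumption it illustrates is only that `fit()` can simply store the training set as context, with all computation deferred to prediction time.

```python
import math


class InContextClassifier:
    """Hypothetical stand-in, NOT the TabPFN API.

    fit() learns no parameters; it only stores the training set.
    predict_proba() does all the work by weighting stored examples
    by their similarity to each query point.
    """

    def __init__(self, temperature=1.0):
        self.temperature = temperature

    def fit(self, X, y):
        # Store the training data as "context"; nothing is optimized here.
        self.X_ = [list(row) for row in X]
        self.y_ = list(y)
        self.classes_ = sorted(set(self.y_))
        return self

    def predict_proba(self, X):
        probs = []
        for query in X:
            # Squared Euclidean distance from the query to each stored example.
            dists = [sum((a - b) ** 2 for a, b in zip(query, row)) for row in self.X_]
            # Subtract the minimum distance so the largest weight is exp(0) = 1,
            # which keeps the normalizer strictly positive.
            d_min = min(dists)
            weights = [math.exp(-(d - d_min) / self.temperature) for d in dists]
            total = sum(weights)
            probs.append([
                sum(w for w, label in zip(weights, self.y_) if label == c) / total
                for c in self.classes_
            ])
        return probs

    def predict(self, X):
        # Pick the class with the highest probability for each query.
        return [
            self.classes_[max(range(len(p)), key=p.__getitem__)]
            for p in self.predict_proba(X)
        ]


# Toy data: two well-separated clusters.
X_train = [[0.0, 0.0], [0.1, 0.2], [1.0, 1.0], [0.9, 1.1]]
y_train = [0, 0, 1, 1]
X_test = [[0.05, 0.1], [1.0, 0.9]]

clf = InContextClassifier().fit(X_train, y_train)
predictions = clf.predict(X_test)
probabilities = clf.predict_proba(X_test)
print(predictions)
```

The calling code is the same as for any sklearn classifier, even though `fit()` here is just bookkeeping.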

@FrankRHutter Why is there a need for fit() given you say it is pretrained already? Your sklearn example has a fit() step before the prediction.

@FrankRHutter Just read through the paper, awesome fresh idea combining approximate Bayesian inference and transformers for tabular data! Re the 1k in your tweet: if I understood correctly, the synthetic datasets for the priors had up to 1024 examples, but in the paper you are referring to 2k?