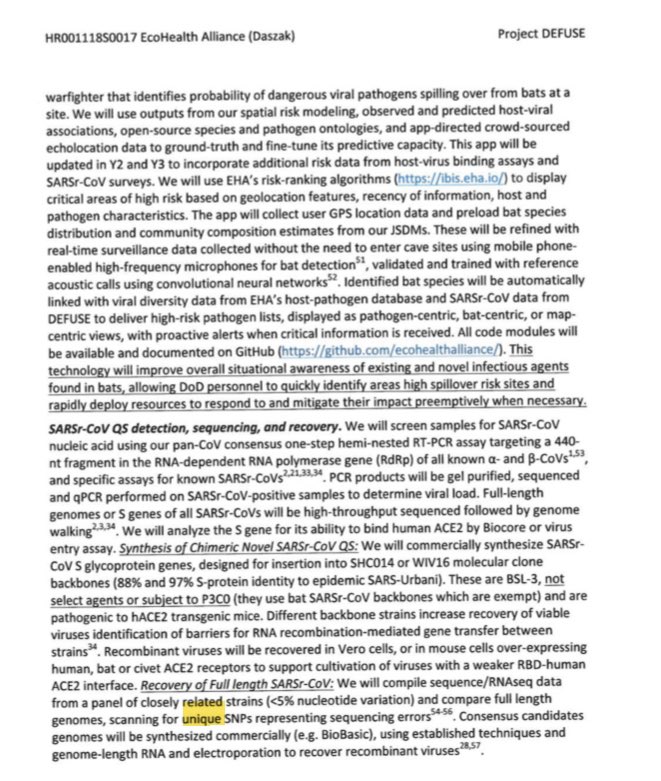

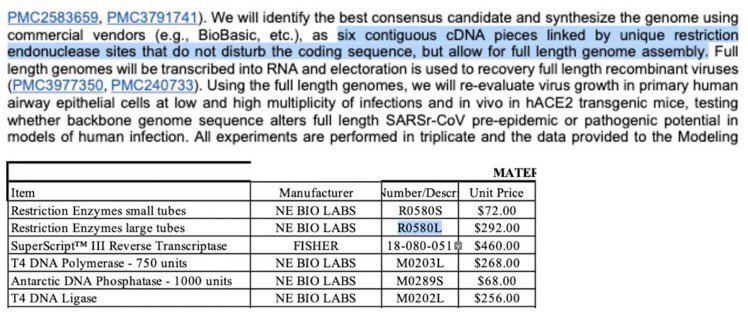

gab.com/Flavinkins/pos… gab.com/Flavinkins/pos… When DEFUSE constructs full-length genomes, the actual methodology is “consensus sequence construction”: they gather multiple sequences, usually a pool of reads, and during construction the reads (and SNVs) are selected partly on whether they can generate the correct site for the cloning process. This happens on top of the usual consensus generation process, in which “unique mutations” from batches of QS are singled out and removed if judged likely to be sequencing errors. Introducing random, designed single-nucleotide changes to create the necessary sites would undo the intended utility of consensus construction. In that process, “sufficiently supported” reads from multiple genomes, or from multiple reads in SRA (note that the “Yunnan bats” libraries were constructed and sequenced in mid-2019), are assembled under the constraint of producing a readily clonable genome, with one to several alternative test sequences evaluated per pool of genomes and reads used (generated and evaluated until 3-5 viable sequences are obtained each year).

This consensus construction is constrained by the need to generate a pattern that is easy to clone, and to avoid introducing additional “sequencing errors”, the specific sites are always picked from existing sequences, i.e. those used in constructing the consensus. If you just pick a random mutation, there is a major risk (especially in ORF1ab) that the RNA structure is broken and the result is not viable. That is in fact one of the reasons they want to clone consensus sequences from sample databases and short-read sequencing data in the full-length QS validation tests in the first place.
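
The consensus step described above can be sketched as a majority vote over aligned reads. This is a minimal illustration only, assuming the reads are already aligned to equal length with '-' marking uncovered positions; the helper name `consensus` and the `min_support` and `min_depth` thresholds are hypothetical, not parameters from DEFUSE or any published pipeline. A base seen in only one read (a “unique mutation”) is outvoted when the other reads agree, which mirrors the idea of discarding likely sequencing errors.

```python
from collections import Counter


def consensus(reads: list[str], min_support: float = 0.6, min_depth: int = 3) -> str:
    """Majority-vote consensus over pre-aligned, equal-length reads.

    '-' marks a position a read does not cover. A column whose depth is
    below min_depth, or whose most common base is supported by less than
    min_support of the covering reads, is emitted as 'N' rather than guessed.
    """
    if not reads:
        return ""
    length = len(reads[0])
    if any(len(r) != length for r in reads):
        raise ValueError("reads must be pre-aligned to the same length")
    out = []
    for i in range(length):
        column = [r[i] for r in reads if r[i] != "-"]
        if len(column) < min_depth:
            out.append("N")
            continue
        base, count = Counter(column).most_common(1)[0]
        out.append(base if count / len(column) >= min_support else "N")
    return "".join(out)


# The lone C at position 2 in the third read is outvoted 3 to 1.
print(consensus(["ACGTAC", "ACGTAC", "ACCTAC", "ACGTA-"]))  # ACGTAC
```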

Also, if they “don’t leave the sites in”, they would not have said that they will “link by unique restriction endonuclease sites that do not disturb the coding sequence”, because if the sites are cut out, there is no need to worry about disturbing the coding sequence. The sites can only disturb the coding sequence, and you only need to make sure you introduce “sites that do not disturb the coding sequences”, if you leave the sites in. As they are planning to use several Spike variants per backbone, leaving the sites in is necessary so that they can swap the fragments (Spike included) out later. journals.plos.org/plosntds/artic…
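
Whether a site change “disturbs the coding sequence” can be checked by translating the region before and after the edit. A minimal sketch, assuming an in-frame coding sequence and the standard genetic code; `translate` and `is_silent` are illustrative names, and the sequences are toys. Note that a synonymous change only leaves the protein intact; as pointed out above for ORF1ab, it says nothing about RNA structure.

```python
# Standard genetic code (NCBI table 1); codons enumerated in TCAG order.
BASES = "TCAG"
AMINO = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {
    a + b + c: AMINO[16 * i + 4 * j + k]
    for i, a in enumerate(BASES)
    for j, b in enumerate(BASES)
    for k, c in enumerate(BASES)
}


def translate(cds: str) -> str:
    """Translate an in-frame coding sequence ('*' marks a stop codon)."""
    cds = cds.upper()
    if len(cds) % 3:
        raise ValueError("coding sequence length must be a multiple of 3")
    return "".join(CODON_TABLE[cds[i:i + 3]] for i in range(0, len(cds), 3))


def is_silent(original: str, edited: str) -> bool:
    """True if the edited sequence encodes exactly the same protein."""
    return len(original) == len(edited) and translate(original) == translate(edited)


# CTG -> CTC creates a BsmBI recognition sequence (CGTCTC) while still encoding R-L-K.
print(is_silent("CGTCTGAAA", "CGTCTCAAA"))  # True
```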

In fact, the first time the Baric team didn’t use BglI, they chose to keep all their type IIS sites in. The number of fragments is again 6. ncbi.nlm.nih.gov/pmc/articles/P… In DEFUSE they were also purchasing BsmBI, so just swap SapI for BsmBI (the WIV, lacking the Baric lab's restriction enzyme and reagent library, would only have had BsaI and BsmBI available as ready-made kits that can be purchased, since there is actually no commercial finished kit for SapI), and you have the reason why they need to specifically mention “sites that do not disturb the coding sequence” when it comes to linking the “six contiguous cDNA pieces”.
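
Locating BsaI and BsmBI sites is a simple string search. The sketch below assumes the standard recognition sequences (BsaI GGTCTC, BsmBI CGTCTC), which differ only in their first base; since neither is palindromic, both orientations are searched. The helper names and the toy sequence are illustrative.

```python
import re

# Recognition sequences (5'->3', top strand). Both are Type IIS enzymes that
# cut outside their site, and a site can occur in either orientation.
TYPE_IIS_SITES = {"BsaI": "GGTCTC", "BsmBI": "CGTCTC"}


def revcomp(seq: str) -> str:
    """Reverse complement of an upper-case DNA string."""
    return seq.translate(str.maketrans("ACGTN", "TGCAN"))[::-1]


def find_sites(seq: str, sites: dict[str, str] = TYPE_IIS_SITES) -> list[tuple[int, str, str]]:
    """Return sorted (0-based position, enzyme, strand) for every recognition site."""
    seq = seq.upper()
    hits = []
    for enzyme, motif in sites.items():
        for strand, pattern in (("+", motif), ("-", revcomp(motif))):
            # Zero-width lookahead so overlapping occurrences are also reported.
            for m in re.finditer(f"(?={pattern})", seq):
                hits.append((m.start(), enzyme, strand))
    return sorted(hits)


print(find_sites("AAGGTCTCAAAAGAGACGTT"))  # [(2, 'BsaI', '+'), (12, 'BsmBI', '-')]
```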

Only BsaI and BsmBI came with detailed kit compositions and instructions that can be followed closely and are guaranteed to work from the get-go. You don’t want your precious synthesized cDNA to fail to cut and ruin your assembly, leaving you to identify and dial in the digestion protocol yourself.

Keep in mind that the other known published genomes (MERS-CoV, PgCoV, etc.) are not described as “consensus” genomes. arxiv.org/abs/2104.01533 The known consensus genomes found leaked out of the WIV were identified with several distinct cloning strategies (one used exotic animals (camels) from the Hubei Jiufeng zoo x.com/nestcommander/…, and no clear pattern was identified) and an absence of bat hosts. archive.ph/EiCQW They are found to be much more divergent from any one of their known relatives, rather than “nearly identical”. This also invalidates the idea that “all samples are published from the WIV”.
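
“Divergent” versus “nearly identical” is usually quantified as percent identity over an alignment. A minimal sketch, assuming the two sequences are already aligned and that gap columns are simply skipped (one of several possible conventions); the function name and sequences are illustrative.

```python
def percent_identity(a: str, b: str) -> float:
    """Percent identity between two pre-aligned, equal-length sequences,
    ignoring columns where either sequence has a gap ('-')."""
    if len(a) != len(b):
        raise ValueError("sequences must be aligned to the same length")
    pairs = [(x, y) for x, y in zip(a.upper(), b.upper()) if x != "-" and y != "-"]
    if not pairs:
        raise ValueError("no comparable columns")
    same = sum(x == y for x, y in pairs)
    return 100.0 * same / len(pairs)


print(percent_identity("ACGTACGTAC", "ACGTACGTAA"))  # 90.0
```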

You say, “but what about the methods that don’t leave the sites in?” Unfortunately, those also require that the BsaI and BsmBI sites are not interleaved and that no internal sites are too close to each other, since both cause interference. Even the “no interleaving between sites” condition alone puts you at a less than 1/35 chance. Again, these chances translate directly into the probability of seeing such a pattern in a spillover, because natural spillovers don’t care what restriction site combinations you have. Also notice that none of the papers that do this replaced parts of the genome (such as swapping Spikes or adding foreign proteins to the genome). This is because if you cut the sites out, there is nothing left to cut the result back open to change the genome (especially when using adapted strains, as in the PEDV paper), or to take any interesting passage results and test them on different backbones.

Note how much more difficult it is to clone pgCoV-GD than SARS-CoV-2. And notice how only the WIV and GIABR had it in 2019, but not any markets. The absence of spillovers, even to alleged rescue workers, should tell you something, as it contradicts the extreme spillover capacity observed in cells and animal models. Human ACE2 is the most affected in the cell culture results. Again, there is no RXXR in MP789/pgCoV-GD, even though potential activation of S2’ by furin after S1-S2 cleavage is possible. nature.com/articles/s4156…
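
The interleaving argument is a counting problem. As a sketch, assume there are k sites of one enzyme and m of the other, and that every ordering of the site labels along the genome is equally likely (a simplification; the counts in the usage line are hypothetical, and this is not necessarily how the 1/35 figure above was derived). The two types stay non-interleaved only if all sites of one type precede all sites of the other: 2 of the C(k+m, k) possible orderings. The helper names are illustrative, and a brute-force enumeration is included as a cross-check.

```python
from fractions import Fraction
from itertools import combinations
from math import comb


def p_not_interleaved(k: int, m: int) -> Fraction:
    """Exact probability that k sites of one enzyme and m of another form two
    separate blocks (all of one type before all of the other), assuming every
    ordering of the site labels along the genome is equally likely."""
    if k == 0 or m == 0:
        return Fraction(1)
    return Fraction(2, comb(k + m, k))


def p_not_interleaved_enumerated(k: int, m: int) -> Fraction:
    """Brute-force check: enumerate every choice of positions for the k sites."""
    n = k + m
    layouts = list(combinations(range(n), k))
    # The k sites form one block either at the start or at the end.
    blocks = {tuple(range(k)), tuple(range(m, n))}
    good = sum(1 for pos in layouts if pos in blocks)
    return Fraction(good, len(layouts))


# Hypothetical counts: 4 sites of each type gives 2/70 = 1/35.
print(p_not_interleaved(4, 4))  # 1/35
```

Each additional site makes the binomial coefficient larger, so under this assumption the probability only drops as sites are added.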

Finally, one more thing: with BglI, you usually don’t get to 6 fragments, even if you change a lot of sites and introduce a lot of “unique mutations representing sequencing errors” into Sarbecovirus genomes.
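
BglI recognizes the interrupted palindrome GCCNNNNNGGC, so counting its sites is a regex search. For a linear cDNA, n sites give n + 1 pieces, so six fragments need five usable sites. A minimal sketch with illustrative helper names and a toy sequence.

```python
import re

# BglI recognizes the interrupted palindrome GCCNNNNNGGC (N = any base).
BGLI = re.compile(r"(?=GCC[ACGT]{5}GGC)")


def bgli_sites(seq: str) -> list[int]:
    """0-based start positions of BglI sites. The site is palindromic,
    so scanning the top strand finds every site."""
    return [m.start() for m in BGLI.finditer(seq.upper())]


def linear_fragment_count(n_sites: int) -> int:
    """Cutting a linear molecule at n distinct sites yields n + 1 pieces."""
    return n_sites + 1


sites = bgli_sites("TTGCCAAAAAGGCTTTTGCCTTTTTGGCAA")
print(sites, linear_fragment_count(len(sites)))  # [2, 17] 3
```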

Also, the BsaXI debate here is actually more relevant to RFLP validation, which uses sites introduced while engineering a specific protein change; the region is then reamplified and digested to track the result of the engineering. Does the resulting site fit? Which other site variants can be used? If we use targeted RNA recombination on live isolates (link.springer.com/chapter/10.100……), does the resulting modification work? Introducing RE sites alongside the “small changes” helps scientists track their work, and is common in this kind of work on changing small parts of proteins or genomes.
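
An RFLP check of this kind compares the band sizes of a digested amplicon with and without the marker site. A sketch, assuming a linear amplicon and a palindromic marker site; EcoRI (G^AATTC) is used purely as a familiar stand-in, not as the enzyme discussed in the thread, and the sequences and the helper name are toys.

```python
import re


def digest_lengths(amplicon: str, site: str, cut_offset: int) -> list[int]:
    """Fragment lengths after cutting a linear amplicon at every occurrence of
    a palindromic site. cut_offset is where the top strand is cut, counted
    from the start of the site (EcoRI, G^AATTC -> 1)."""
    seq = amplicon.upper()
    cuts = [m.start() + cut_offset for m in re.finditer(f"(?={site})", seq)]
    bounds = [0, *cuts, len(seq)]
    return [b - a for a, b in zip(bounds, bounds[1:])]


wild_type = "A" * 40 + "GAGTTC" + "A" * 54  # no marker site: one band
edited = "A" * 40 + "GAATTC" + "A" * 54     # marker site present: two bands
print(digest_lengths(wild_type, "GAATTC", 1), digest_lengths(edited, "GAATTC", 1))
# [100] [41, 59]
```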

Also, in case you're wondering what they did to the PEDV clone (which IS the first time they cloned a CoV without using BglI): the type IIS sites are all retained. They say this creates “unique overhangs between each fragment”. And no, this clone is NOT the genome shown on the right of the picture, which came from SARS-CoV-2.
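
The “unique overhangs” requirement can be expressed as a few checks on the 4-nt junction overhangs. A minimal sketch using common Golden Gate design heuristics (no duplicates, no palindromic overhangs, no overhang that is the reverse complement of another); real designs also weigh mismatch-tolerant ligation, which this ignores. The helper names and the overhangs in the usage line are arbitrary examples.

```python
from itertools import combinations


def revcomp(seq: str) -> str:
    """Reverse complement of a DNA string."""
    return seq.upper().translate(str.maketrans("ACGT", "TGCA"))[::-1]


def overhang_problems(overhangs: list[str]) -> list[str]:
    """Flag junction overhangs that could mis-ligate: palindromes (which can
    ligate to themselves), duplicates, and pairs where one overhang is the
    reverse complement of another."""
    ohs = [o.upper() for o in overhangs]
    problems = [f"{o} is palindromic" for o in ohs if o == revcomp(o)]
    for a, b in combinations(ohs, 2):
        if a == b:
            problems.append(f"{a} is used twice")
        elif a == revcomp(b):
            problems.append(f"{a} pairs with {b}")
    return problems


print(overhang_problems(["AATG", "GCTT", "CGTA"]))  # []
print(overhang_problems(["AATG", "GATC", "CATT"]))
# ['GATC is palindromic', 'AATG pairs with CATT']
```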