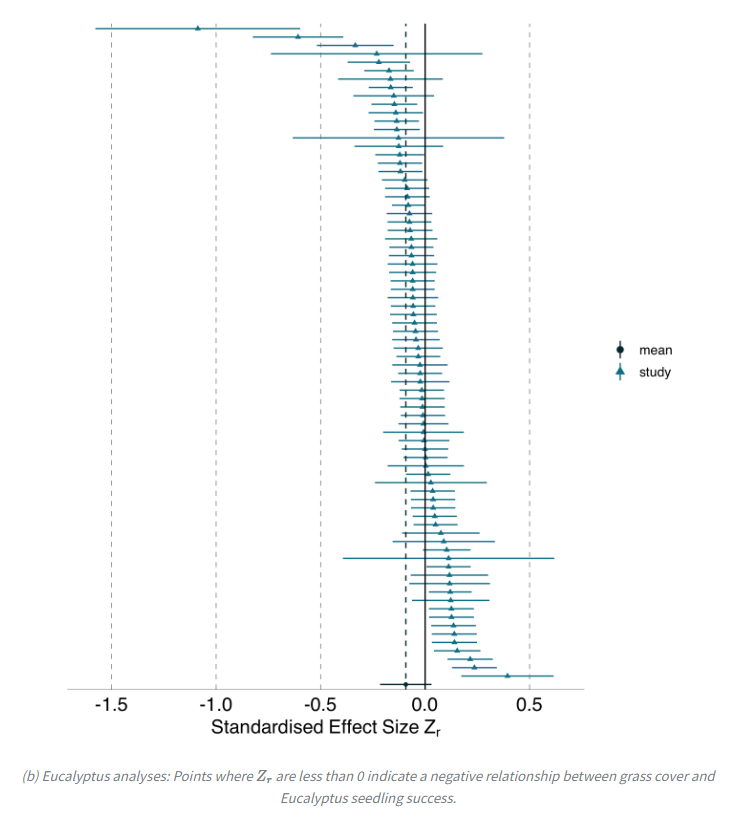

Scary: 73 teams tested the same hypotheses with the same data. Some found negative results, some positive, some nada. No effect of expertise or confirmation bias. "Idiosyncratic researcher variability is a threat to the reliability of scientific findings." osf.io/preprints/meta…

This isn't just a problem for the social sciences. A recent preprint found exactly the same pattern in ecology and evolutionary biology: same hypotheses, same data – but very different results. ecoevorxiv.org/repository/vie…

@SteveStuWill Now do teams across different cultures

@SteveStuWill Follow the science! Loved your book btw.

@SteveStuWill I did data analysis in the corporate world. Almost no one bothered trying to understand the data, variables, or systems being quantified. Basically, their view of data was "make up numbers to 'prove' my lie." Nearly everything corporate is pseudoscience, even the studies they quote.

@SteveStuWill Almost all of them found infinitesimal results. So not scary.

@SteveStuWill @abcampbell Social science should be stated prominently in the title

@SteveStuWill @WiringTheBrain Here’s a summary of the research paper: “Observing many researchers using the same data and hypothesis reveals a hidden universe of uncertainty” (I wonder if they would get the same results if they did it 73 more times 🤔)

@SteveStuWill I think I see the problem. Attempting to use 'social' and 'science' in the same paragraph, or research paper.🙄🤣

@SteveStuWill I wonder if statistical methods are scientific in the same way as, say, lab measurements in physics or chemistry. Likely not: the former involve hidden subjective choices, the latter accidental variation.

@SteveStuWill Let's be fair to everyone who is observing: the thesis "that greater immigration reduces support for social policies among the public" is garbage. Most social theories that are broad like this are also garbage. I know that some view only holistic hypotheses as worthwhile. /1