Lei Li @_TobiasLee

Ph.D. student @hkunlp2020. Previously @PKU1898. COYG @Arsenal. Opinions are my own. lilei-nlp.github.io · Hong Kong · Joined August 2015

Tweets 680

Followers 2K

Following 878

Likes 3K

I have a new blog post about the so-called “tokenizer-free” approach to language modeling and why it’s not tokenizer-free at all. I also talk about why people hate tokenizers so much!

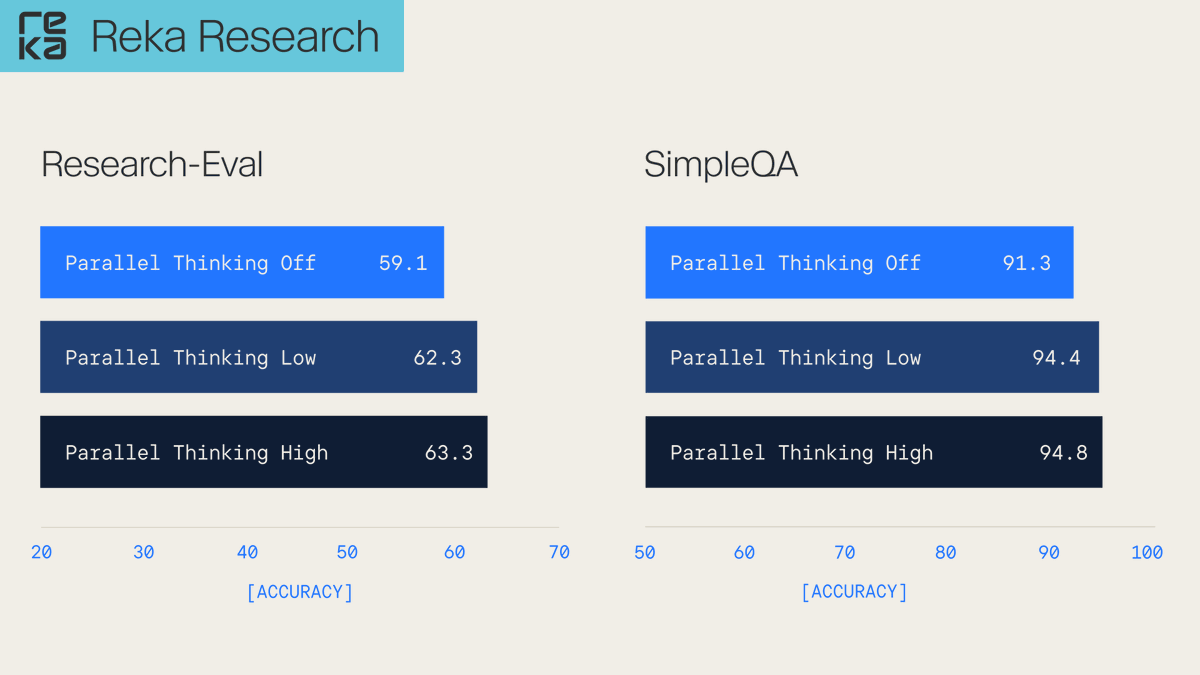

Introducing Parallel Thinking for Reka Research! Instead of one line of reasoning, we explore multiple paths in parallel, then resolve the best answer. Big accuracy gains on Research-Eval (+4.2) and SimpleQA (+3.5). Now live in the Reka Research API!

Apple presents MANZANO A Simple and Scalable Unified Multimodal Model with a Hybrid Vision Tokenizer

🔥LLaVA-OneVision upgraded to V1.5🔥 We @lmmslab present 🌋LLaVA-OV-1.5🌋, a fully open framework for democratized multimodal training * Superior Performance surpassing Qwen2.5-VL * High-Quality Data at Scale * Ultra-Efficient Training Framework - Repo: github.com/EvolvingLMMs-L…

i cant tell lol

👋 Say Hi to MiMo-Audio! Our BREAKTHROUGH in general-purpose audio intelligence. 🎯 Scaling pretraining to 100M+ hours leads to EMERGENCE of few-shot generalization across diverse audio tasks! 🔥 Post-trained MiMo-Audio-7B-Instruct: • crushes benchmarks: SOTA on MMSU, MMAU,…

Introducing Reka Speech: an efficient and accurate transcription & translation model. 🗣️ On modern GPUs (e.g. H100), it runs 8x–35x faster than existing solutions for batch processing.

Qwen should be placed at the S-tier.

🚀 Think your Video MLLMs are the best? Put them to the test on the Video-TT Challenge! 🔥 Compete for the top spot, prove your model’s power, and win amazing cash prizes! 🏆 🚀 Registration is now open! Details: sites.google.com/view/video-tt-… Registration: codabench.org/competitions/1…

Fuck it. Today, we open source FineVision: the finest curation of datasets for VLMs, over 200 sources! > 20% improvement across 10 benchmarks > 17M unique images > 10B answer tokens > New capabilities: GUI navigation, pointing, counting FineVision 10x’s open-source VLMs.

VLM critic-training with RLVR yields comprehensive boosts. SOTA on MMMU (71.9) with MiMo-VL-7B-RL-2508. Also, the critic model can pick the best answer from N candidates, as we found out in VL-RewardBench.

When an article about hpc/optimization is called "anatomy of" then you know the authors know their shit. For those who don't know, this is basically HPC's "attention is all you need" but less overcooked: https://t.co/KWhx3NWPRY

🚨 Apple just released FastVLM on Hugging Face - 0.5, 1.5 and 7B real-time VLMs with WebGPU support 🤯 > 85x faster and 3.4x smaller than comparable sized VLMs > 7.9x faster TTFT for larger models > designed to output fewer output tokens and reduce encoding time for high…

🚨 New benchmark release 🚨 We're introducing Research-Eval: a diverse, high-quality benchmark for evaluating search-augmented LLMs 👉 Blogpost: reka.ai/news/introduci… 👉 Dataset + code: github.com/reka-ai/resear… 🧵 Here's why this matters:

This week, we open-sourced NVIDIA-Nemotron-Nano-v2-9B: our next-generation efficient hybrid model. - 6× faster than Qwen3-8B at reasoning tasks. - Retained long-context capability (8k → 262k trained, usable at 128k) First true demonstration that reasoning models can be…

Introducing DeepSeek-V3.1: our first step toward the agent era! 🚀 🧠 Hybrid inference: Think & Non-Think — one model, two modes ⚡️ Faster thinking: DeepSeek-V3.1-Think reaches answers in less time vs. DeepSeek-R1-0528 🛠️ Stronger agent skills: Post-training boosts tool use and…

thanks for trying our MiMo models🥰🥰

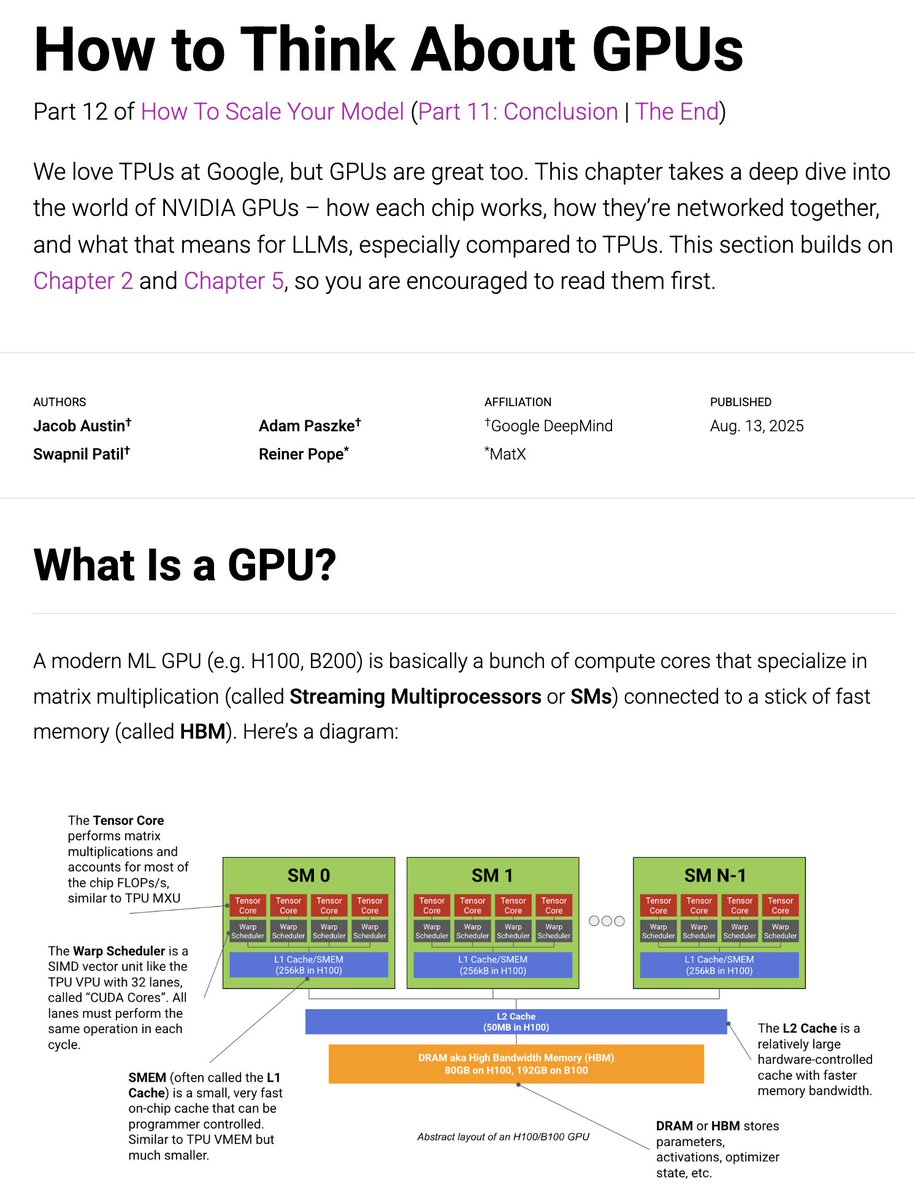

Today we're putting out an update to the JAX TPU book, this time on GPUs. How do GPUs work, especially compared to TPUs? How are they networked? And how does this affect LLM training? 1/n

Xiaoyu YE @XiaoyuYE122162

2 Followers 50 Following

Fauzan Tahir @FauzanTahi62194

0 Followers 11 Following Building with React & Django | Exploring Computer Vision & GenAI

Fruivor @Fruivor1647367

63 Followers 2K Following

Kai Zhang @KaiZhang_CS

98 Followers 523 Following CS PhD @OSUNLP with @ysu_nlp. Prev @AIatMeta @MSFTResearch @GoogleDeepMind. my former account @DrogoKhal4 was wrongly suspended...

Mukesh @mithraics_

190 Followers 2K Following ML, RecSys & LLM @ 84.51. Be Kind. Stay Positive. Don't Judge.

S @shavniek

5 Followers 866 Following

thanhhm82 @thanhhm82

42 Followers 340 Following Patcell: Your trusted partner in software development, providing mobile/web apps, mobile/online games, and advanced AI solutions to help you achieve your goals.

Haorui He @HeHarry_11

68 Followers 310 Following CS Ph.D. student at HKU @HKUniversity; LLM/Social Computing/Speech Processing; Fan @BVB

Andrea Yuan-Tao Pigna... @pigna_content

39 Followers 251 Following

Stelian Balta @stelyb

28K Followers 205 Following Innovation Maximalist | Founder and CEO HyperChain Capital

CRAQUE DRISHIBLOOM (b... @DRISHIGAMI10

361 Followers 4K Following PRX/AL/CFO/TSW/VKS/MKOI | mtg/mlbb/hok/tft/apex enthusiast| griffin boys and knight fan | DWG21/GENG25/OMG23/JDG23/AL25/FS25/BLG25 fan | @drishibloom10

Damn Congrats @pynoity33550336

1 Followers 9 Following

Wenyan Li @Wenyan62

270 Followers 196 Following PhD student at the CoAStaL NLP Group, University of Copenhagen. Former researcher at Comcast AI and SenseTime.

MeroyGibson @wbAtZ2N0nCusC

19 Followers 564 Following

Drodou @Drodou715

9 Followers 827 Following

Harsh Vardhan Singh @harsh_sing20281

32 Followers 1K Following

links @lC3wQ1nrVVe0q3H

0 Followers 394 Following

Li Kuan @likuan1995

31 Followers 15 Following PhD student @ HKUST. ML & Agents. https://t.co/8BITkpgKQO Author of Tongyi DeepResearch

Zhiyuan You @realzhiyuanyou

7 Followers 119 Following Phd Student in MMLab, @CUHKofficial, Master and Bachelor from @sjtu1896 CVer in Low-level Vision, Image Quality Assessment, Image Restoration

Mahmoud Mohamed @7odz22

66 Followers 1K Following

AprilClemens @ZvGriKfhV7m5H

26 Followers 575 Following

Don Cullinan @cullinan92619

299 Followers 8K Following

Yuji Zhang @Yuji_Zhang_NLP

581 Followers 327 Following Postdoc@UIUC, advised by Prof. Heng Ji @hengjinlp and Prof. Chengxiang Zhai. Robust and trustworthy LLMs. LLM hallucination. LLM knowledge. LLM reasoning.

A Side Byproduct 🐋... @ByproductD_

22 Followers 1K Following Bio person in the Bio. Doesn't byproduct imply side? oops Kinda need to choose what to follow

sana @sana4226939029

5 Followers 135 Following girl who loves science & asks too many questions 🧪✨ | curious about the world & all the ideas

Alex J Best @AlexJBest

341 Followers 3K Following Researcher in math+formal methods+ml. Working on using formal verification to train models for mathematics and reasoning @harmonicmath

Zuko Capital @ZukoCapital

0 Followers 2K Following

Alfredo Espinoza @alfredo_ep

212 Followers 3K Following We stand on the shoulders of giants. Truth is rarely pure, and never simple. This too shall pass.

Hurdy Gurdy Man @zaGbian

123 Followers 3K Following

Grace Shao @Gracemzshao

843 Followers 1K Following AI Proem on Substack | Podcast Differentiated Understanding | China tech and AI analyst

Random @random_seed_42

33 Followers 1K Following

Stayup @viddolee

396 Followers 3K Following Software & ML Engineer | Smart Contract Developer | CFD Trader | Timing is everything: 1000ms = 1s, 1ms = 1,000,000ns

AK @rishlash

165 Followers 4K Following

˗ˏˋHubert´ˎ˗ @bashpenguin

193 Followers 5K Following I like penguins, Cats, search engines, potatoes & garlic. ❤️🐈(fili)🐈(dicki)🐈(manchas)🐈(sinnombre✝️)🐈(filipo)🐈(lily✝️)🐈(lucy)🐈‍⬛(anubis)

David Mok @DMokNow

730 Followers 2K Following @Labelbox AI | Love building & scaling tech startups, 🏀, and🍟

Govind K @t2govind

2K Followers 6K Following Principal Research Engineer @Microsoft. Developing experiences that people want

Zhipeng(Jason Z) Wang... @PKUWZP

637 Followers 964 Following LLM Systems/Infra/Multimodal/RL, PhD @RiceUniversity, Sr Manager @LinkedIn. ex:{@GoogleResearch, @AWS, @Apple, @PKU1898 @WashU}, TSC Committer @DeepSpeedAI

Sherry Tongshuang Wu @tongshuangwu

6K Followers 1K Following Assist. Prof @SCSatCMU , CS PhD @uwcse. HCI+AI, map general-purpose models to specific use cases! prev. intern @MSFTResearch @GoogleAI @Apple. She/her.

Dan Roy @roydanroy

57K Followers 2K Following ML / AI researcher. Research Director and Canada CIFAR AI Chair, @VectorInst. Professor, @UofT (Statistics/CS).

Dong Zhang @dongzha35524835

570 Followers 609 Following MS Student at FudanNLP Lab @FudanUniv | Developing SpeechGPT-Series

Yong Jae Lee @yong_jae_lee

996 Followers 127 Following Professor, Computer Sciences, UW-Madison. I am a computer vision and machine learning researcher.

typedfemale @typedfemale

39K Followers 538 Following a really exciting new account "advanced pytorch user" - @cHHillee alt: @typedalt

Horace He @cHHillee

42K Followers 536 Following @thinkymachines Formerly @PyTorch "My learning style is Horace twitter threads" - @typedfemale

Yuhao Dong @dyhTHU

91 Followers 193 Following

yuyin zhou @yuyinzhou_cs

2K Followers 882 Following Assistant Professor @ucsc, Postdoctoral Researcher @Stanford @StanfordAIMI, Medical Image Analysis, Machine Learning / former Ph.D. @JohnsHopkins M.S. @UCLA

Transactions on Machi... @TmlrOrg

6K Followers 5 Following Transactions on Machine Learning Research (TMLR) is a new venue for dissemination of machine learning research

Yifei Hu @hu_yifei

4K Followers 602 Following Machine Learning Researcher @reductoai | Prev: PhD @LifeAtPurdue | Opinions my own

刘小排 @bourneliu66

9K Followers 78 Following AI product entrepreneur Raphael AI - https://t.co/tJOiC33sAI AnyVoice - https://t.co/MQkmOW2lbu Fast3D - https://t.co/6m3JO8jJvc

Fuzhao Xue (Frio) @XueFz

5K Followers 684 Following Research Scientist @GoogleDeepmind | Scaling & Distillation #Gemini | Zero-shot Cooking Learner🧑‍🍳

Shuchao Bi @shuchaobi

13K Followers 692 Following Research @Meta Superintelligence Labs, RL/post-training/agents; Previously Research @OpenAI on multimodal and RL; Opinions are my own.

Yi Wu @jxwuyi

1K Followers 103 Following AI/RL researcher, Assistant Prof. at @Tsinghua_Uni, leading the RL lab at @AntResearch_, PhD at @berkeley_ai, frequent flyer and milk tea lover.

Ying Sheng @ying11231

12K Followers 730 Following @lmsysorg @sgl_project | Prev. @xAI @Stanford | Assist Prof @UCLA. (delayed) | Do it anyway | Live to fight another day

马东锡 NLP @dongxi_nlp

29K Followers 786 Following Prev. PhD @Stockholm_Uni | Alumni @KTHuniversity @uppsalauni Sharing insights on AI, autonomous agents, and large language & reasoning models

Neel Nanda @NeelNanda5

32K Followers 123 Following Mechanistic Interpretability lead DeepMind. Formerly @AnthropicAI, independent. In this to reduce AI X-risk. Neural networks can be understood, let's go do it!

Seungone Kim @seungonekim

2K Followers 935 Following Ph.D. student @LTIatCMU and intern at @AIatMeta (FAIR) working on (V)LM Evaluation & Systems that Self-Improve | Prev: @kaist_ai @yonsei_u

jietang @jietang

3K Followers 108 Following Professor @ Tsinghua University Artificial General Intelligence, Large Language Model

OpenRouter @OpenRouterAI

55K Followers 308 Following Discover and use the latest LLMs. 500+ models (incl. 50+ free), explorable data, private chat, & a unified API. https://t.co/qJG5mKrigL

Stratechery @stratechery

152K Followers 3 Following Articles and Updates from https://t.co/A7bGqyJ7db. For the author, follow @benthompson.

Jack Clark @jackclarkSF

89K Followers 5K Following @AnthropicAI, ONEAI OECD, co-chair @indexingai, writer @ https://t.co/3vmtHYkIJ2 Past: @openai, @business @theregister. Neural nets, distributed systems, weird futures

Ben Thompson @benthompson

256K Followers 2K Following Author/Founder of @stratechery. Host of @ditheringfm @sharptechpod. @notechben for sports. @monkbent on other networks. Home on the Internet.

SemiAnalysis @SemiAnalysis_

37K Followers 18 Following

Dylan Patel @dylan522p

96K Followers 944 Following SemiAnalysis Boutique AI & Semiconductor Research and Consulting DMs are open for consulting, quotes, or to talk shop

Jordan Schneider @jordanschnyc

52K Followers 4K Following Newsletter: https://t.co/j23uuLxE59 join 50k readers Podcast: https://t.co/4sx8iev5Az Fellow: @cnasdc

jasmine sun @jasminewsun

11K Followers 1K Following generative adversarial ✰ writing/podcasting https://t.co/pyrKX4WRwv https://t.co/t1oTgfwzZc

Dwarkesh Patel @dwarkesh_sp

130K Followers 917 Following Host of @dwarkeshpodcast https://t.co/3SXlu7fy6N https://t.co/4DPAxODFYi https://t.co/hQfIWdM1Un

Alberto Romero @Alber_RomGar

5K Followers 1K Following Writes a blog about AI that's actually about people

Arvind Narayanan @random_walker

124K Followers 497 Following Princeton CS prof. Director @PrincetonCITP. I use X to share my research and commentary on the societal impact of AI. BOOK: AI Snake Oil. Views mine.

Cameron R. Wolfe, Ph.... @cwolferesearch

27K Followers 676 Following Research @Netflix • Writer @ Deep (Learning) Focus • PhD @optimalab1 • I make AI understandable

Melanie Mitchell @MelMitchell1

49K Followers 673 Following Professor, Santa Fe Institute. Mostly posting on https://t.co/4NpA2IL5Va (at-melaniemitchell). More thoughts at https://t.co/nC43NHRozX.

Joey Gonzalez @profjoeyg

5K Followers 409 Following Professor @UCBerkeley, co-director of @LMSysorg, and co-founder @RunLLM

Helen Toner @hlntnr

29K Followers 1K Following AI, national security, China. Part of the founding team at @CSETGeorgetown (opinions my own). Author of Rising Tide on substack: https://t.co/LKAoyL00iB

Micah Goldblum @micahgoldblum

8K Followers 766 Following 🤖Prof at Columbia University 🏙️. All things machine learning.🤖

HKUNLP @hkunlp2020

117 Followers 85 Following We are a group of researchers working on natural language processing in the Department of Computer Science at the University of Hong Kong.