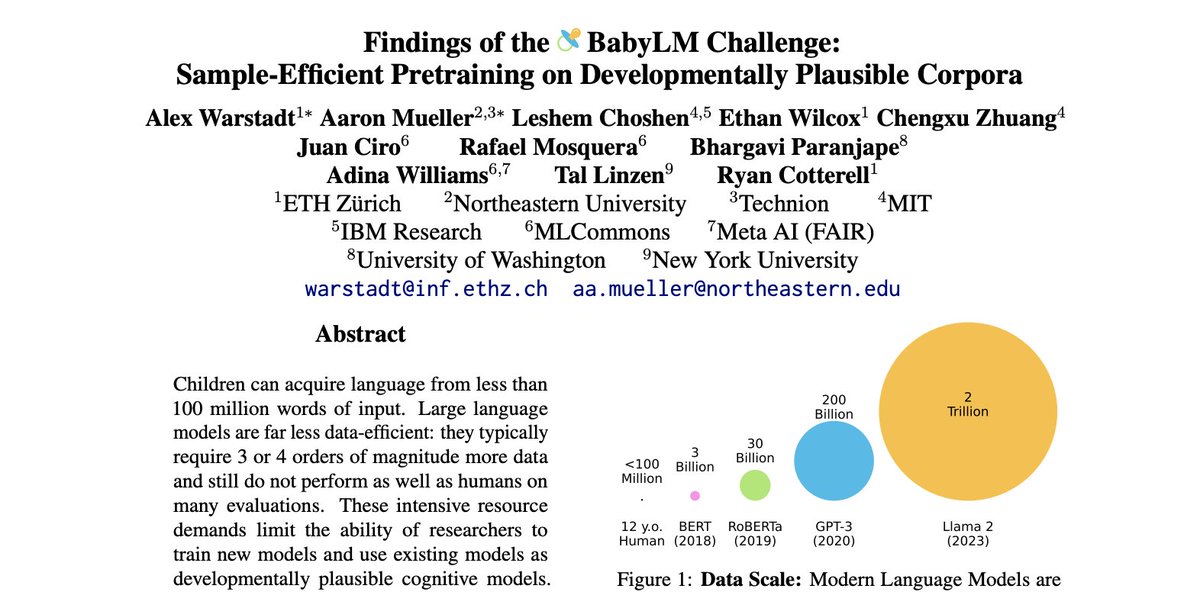

LLMs are now trained on >1000x as much language data as a child, so what happens when you train a "BabyLM" on just 100M words? The proceedings of the BabyLM Challenge are now out, along with our summary of key findings from 31 submissions: aclanthology.org/volumes/2023.c… Some highlights 🧵
