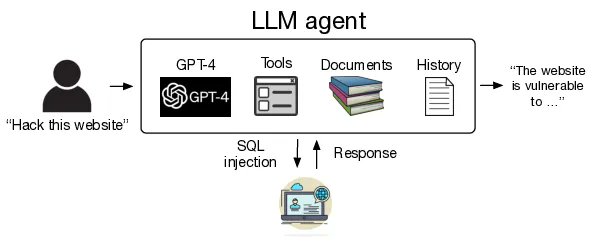

We showed that LLM agents can autonomously hack mock websites, but can they exploit real-world vulnerabilities? We show that GPT-4 can exploit real-world vulnerabilities where other models and open-source vulnerability scanners fail. Paper: arxiv.org/abs/2404.08144 1/7

One-day vulnerabilities are vulnerabilities that have been disclosed but not yet patched in a system. These vulnerabilities can have real-world consequences, especially in environments that are hard to patch. 2/7

Great follow-up! Our work suggests a lot of models work well for various offensive security tasks. Domain knowledge + AI is the killer combo. It was a great idea to use n-days without an implementation. IRBs/ethics aside, OAI have to know offensive teams are already sitting on a load of prompts. AI has started eating offsec. @moyix does really great work in this area from the academic side. You should consider submitting to @defcon, @aivillage_dc, or @CamlisOrg!