Nice paper providing a way to compute stochastic gradients using only forward-mode autodiff (w/o backprop) thanks to perturbations (with unit mean and variance). This is especially useful for large architectures, as memory can be released along the way. arxiv.org/abs/2202.08587

Combined with usual stochastic gradients, this gives doubly stochastic gradients (where stochasticity comes from both perturbations and training points).

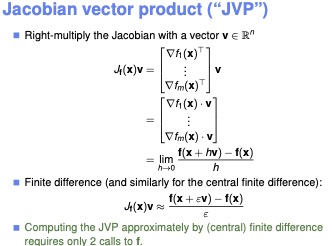

In fact, Jacobian-vector products / directional derivatives used by their technique can be computed using finite difference in just two calls to the function, so forward-mode autodiff could even be avoided...

@mblondel_ml This is super cool. Perhaps, finite difference directional gradients could be better approximations than pseudo gradients (for instance in difficult to differentiate forward functions like argsort/rounding). Any idea about issues with "almost all random vectors are orthogonal"?