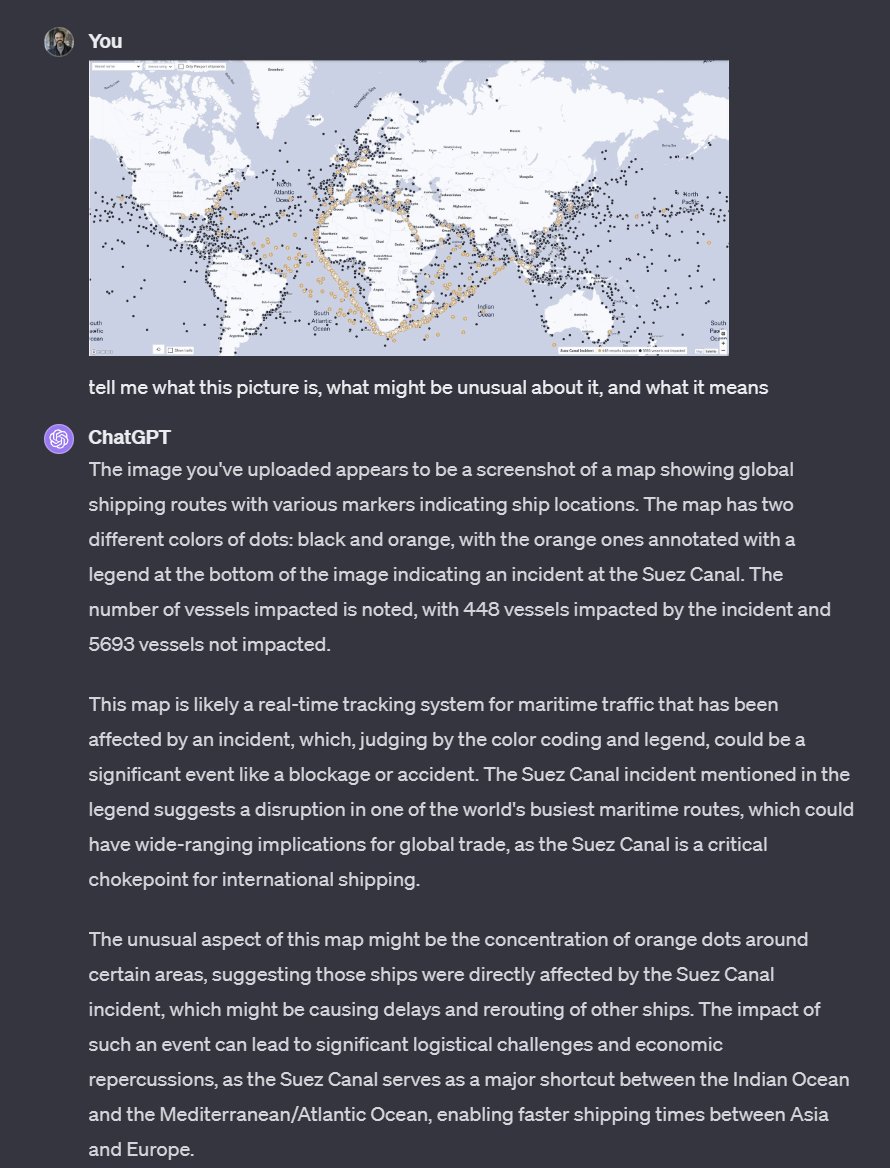

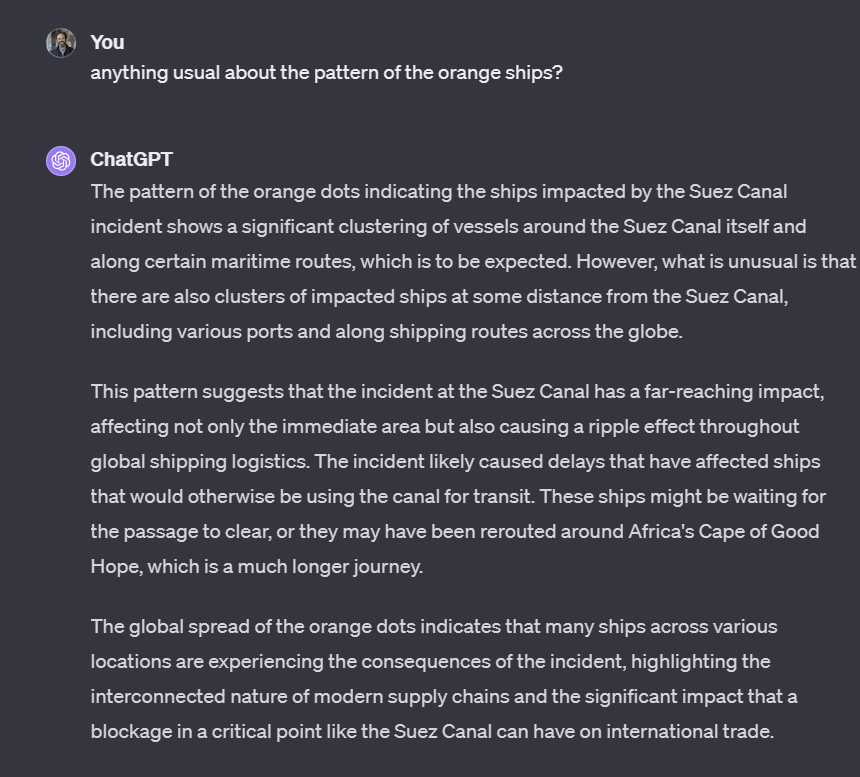

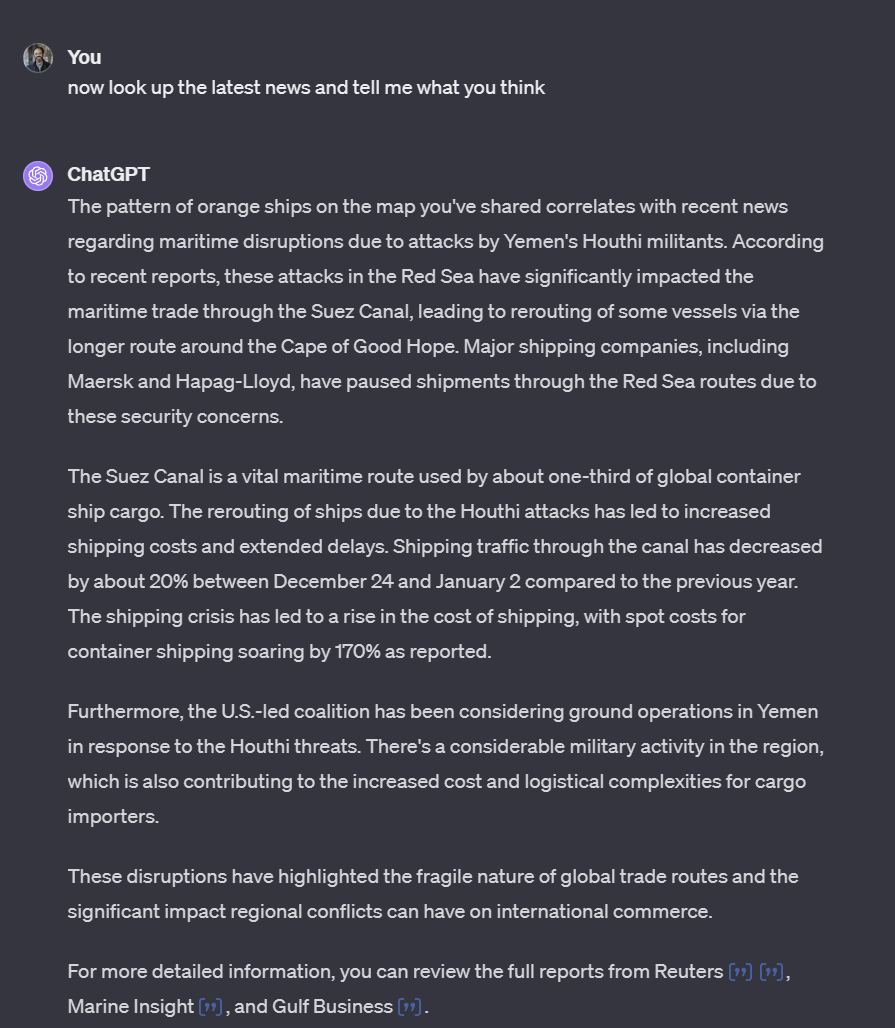

GPT-4 is a competent analyst & it really takes advantage of any context it is given. I gave it a map of disrupted global shipping routes from @typesfast, and it made a pretty good guess about what was happening from the image. Once I asked it to look at the news, it nailed it.

@emollick @typesfast Now have it read out the news on an AI phone call :D x.com/usebland/statu…

@emollick @typesfast Did u ask for better routes?

@emollick @typesfast I did the same in Google Bard, but without the orange dots tip. It sees anomalies but doesn't know the meaning of the arrows. Then I explained a little bit and the analysis was better. You can try this picture.

@emollick @typesfast I gave GPT-4 a US map with states colored different colors and a legend explaining those colors, and I asked it to list the states under their color. It hallucinated answers and then said it couldn’t perform the task because it can’t actually “visually analyze an image.” 🤷‍♂️

@emollick @typesfast Will be interesting to look back at this post in 1 year or 10 years and see how we feel about every aspect of it

@emollick @typesfast I agree 100%, this is artificial intelligence. The only real difference from a human is that it has NO WILL. We are the controller.

@emollick @typesfast I get the Gromit "predict next token" metaphor, but despite its cutoff, it's a mystery to me how the probabilistic nature throws up totally credible analysis based on something it's never heard of.

@emollick @typesfast Interesting! I tried that same first prompt with the same image in Microsoft Copilot (GPT-4) and Google Bard, and got completely different, unsatisfactory results. Looks like OpenAI is still leading the pack.

@emollick @typesfast Interesting. But the visualisation is already about disrupted shipping routes, right? So it's interpreting a visualisation, not analysing data extracted from an image without any context? Still impressive.