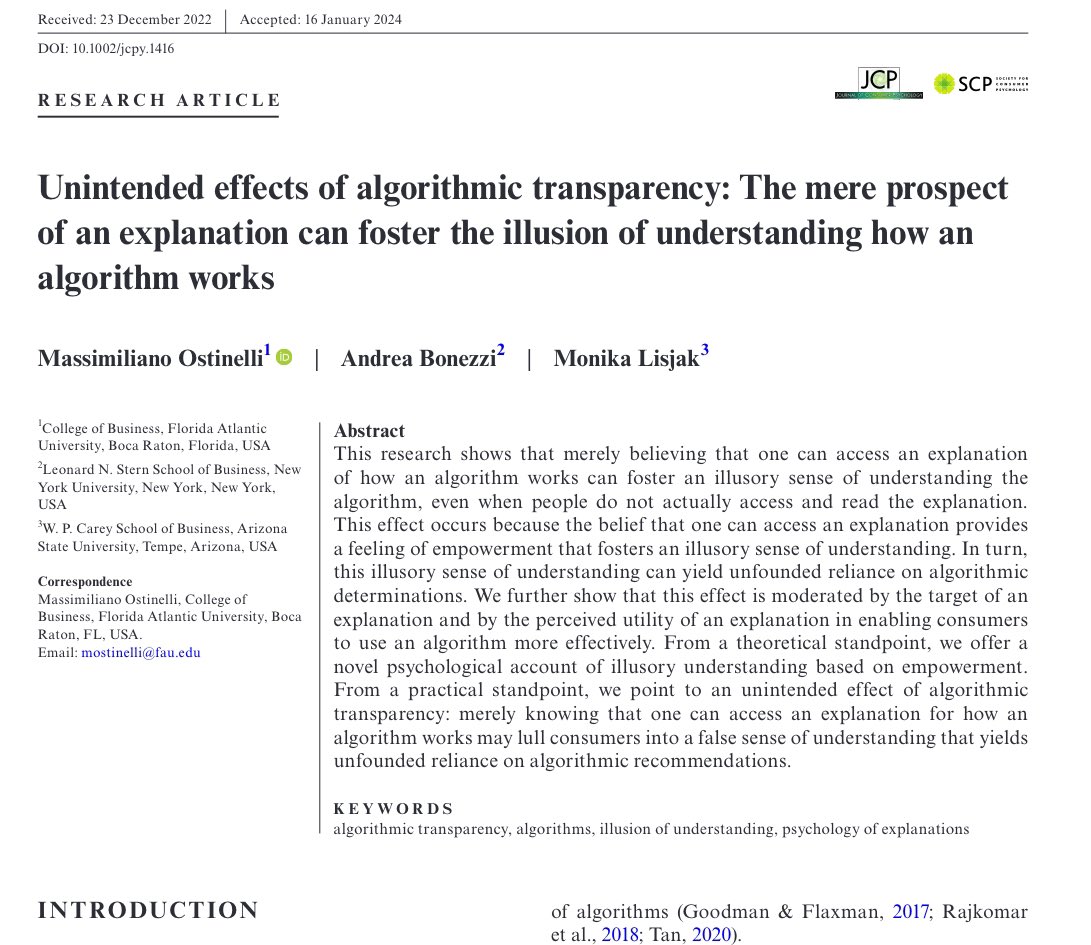

We can’t explain how you get a particular answer from AI, which can make people distrust AI results… unless you just tell them that an explanation of the system is available. Then they trust the results more, even if they never look at the explanation. (Also a way GDPR backfires)

@emollick I think distrust also comes from the fact that many people were used to getting trusted information at a high transaction cost (i.e. the service economy, formal education, etc). Now you can get a probabilistic candidate (an LLM’s output) for almost nothing in cost and time.

@emollick Will we ever be able to perfectly explain/trace an LLM’s output, or is that just the nature of it?

@emollick Weird, because "I can explain" always makes things worse in person-to-person communication!

@emollick We can’t understand all of how an AI works, just like we don’t fully understand how consciousness works. We shouldn’t have to know everything there is to know about something in order to trust it. Though a general skepticism towards AI is healthy, I think.