Search results for #NLPaperAlert

#NLPaperAlert: If you are in Malta for #EACL2024, check out our latest work on Sentence Alignment and Machine Translation! Great work led by @FranMolfese and @SBejgu from @SapienzaNLP. #NLProc #AI 📄: aclanthology.org/2024.eacl-long…

#NLPaperAlert: If you are in Malta for #EACL2024, check out our latest work on Sentence Alignment and Machine Translation! Great work led by @FranMolfese and @SBejgu from @SapienzaNLP. #NLProc #AI 📄: aclanthology.org/2024.eacl-long…

#NLPaperAlert 😴 Aren't you tired of the monotonous way ChatGPT responds? 💡Infuse your preferences to personalize the way your LLM responds, based on our new alignment method 🥣Personalized Soups🥣 ✅ Great work led by @jang_yoel , check it out!!

#NLPaperAlert 😴 Aren't you tired of the monotonous way ChatGPT responds? 💡Infuse your preferences to personalize the way your LLM responds, based on our new alignment method 🥣Personalized Soups🥣 ✅ Great work led by @jang_yoel , check it out!!

📢#NLPaperAlert Incredible work led by @seungonekim @jshin491 & Yejin Cho! ➡️ Ranking responses is subjective ➡️ Do you prefer short vs long, formal vs informal, creative vs precise responses? ➡️ 🔥Prometheus 🔥➡️Open LM trained to respect custom criteria Check it out!

📢#NLPaperAlert Incredible work led by @seungonekim @jshin491 & Yejin Cho! ➡️ Ranking responses is subjective ➡️ Do you prefer short vs long, formal vs informal, creative vs precise responses? ➡️ 🔥Prometheus 🔥➡️Open LM trained to respect custom criteria Check it out!

📢#NLPaperAlert Is superhuman performance hype truly grounded? Check out our #ACL2023NLP paper with a thorough analysis of popular #NLU benchmarks (#SuperGLUE and #SQuAD) and recommandations. Joint work w/many world-renowned #NLP researchers! arxiv.org/abs/2305.08414 #NLProc

📢 #NLPaperAlert 🌟Active Learning Over Multiple Domains in Natural Language Tasks 🌟 To appear at Workshop on Distribution Shifts (DistShift) at #NeurIPS2022 later this week! 📜: arxiv.org/abs/2202.00254 This is honestly the hardest ML problem I’ve ever worked on. 👉🧵

📢 #NLPaperAlert: Outliers Dimensions that Disrupt Transformers Are Driven by Frequency with @gpuccetti92 @bkbrd Felice Dell'Orletta TLDR: remember how BERT over-relies on just 48 magic params? They're related to token frequency in training! /1 arxiv.org/abs/2205.11380

#NLPaperAlert Proud of our new work! New findings, SOTA results, and, my personal favourite, 🌟 Open sourcing new models 🌟, significantly better than current T5s!

#NLPaperAlert Proud of our new work! New findings, SOTA results, and, my personal favourite, 🌟 Open sourcing new models 🌟, significantly better than current T5s!

Social bias is not the only type of bias in NLP models. My collaborators and I Introduce 🆘: Systematic offensive stereotyping bias, define it, propose a method to measure it and validate it in our #COLING2022 paper efatmae.github.io/publications/2… #nlproc #NLPaperAlert 🧵(1/8)

#NLPaperAlert: QA Dataset Explosion🔥is out in ACM CSUR, updated with 30+ new resources! with @nlpmattg @IAugenstein We surveyed 200+ QA/RC datasets to develop a taxonomy of formats & reasoning skills. Also discussed: modalities, domains, non-English data arxiv.org/abs/2107.12708

#NLPaperAlert 📢 Awesome new paper by @rajivmovva Q: As LLMs grow ever larger, how can we compress them? A: Combining these popular methods yields 🌟super-multiplicative🌟 compression ratios📈! 1/

#NLPaperAlert 📢 Awesome new paper by @rajivmovva Q: As LLMs grow ever larger, how can we compress them? A: Combining these popular methods yields 🌟super-multiplicative🌟 compression ratios📈! 1/

📢 #NLPaperAlert #COLING2022 Machine Reading, Fast And Slow: When Do Models "Understand" Language? TLDR: instead of claiming broad "language understanding", why don't we define the reasoning expected in specific cases? arxiv.org/abs/2209.07430 with @sagnikrayc @IAugenstein /1

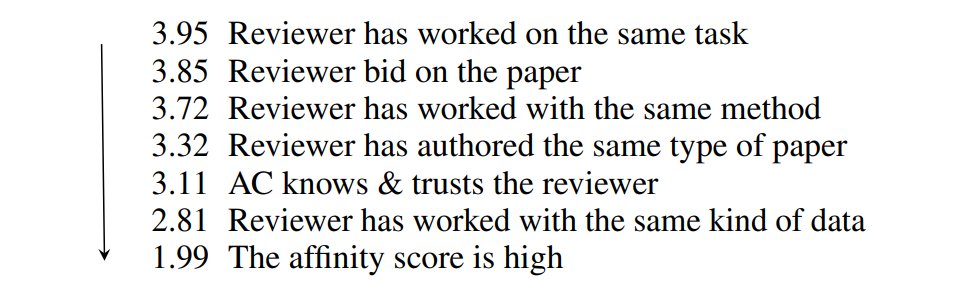

📢 #NLPaperAlert: What Factors Should Paper-Reviewer Assignments Rely On? arxiv.org/abs/2205.01005 with @Ternethorn, to appear at #NAACL2022 TLDR: How should conferences match papers to reviewers, so as to avoid #Reviewer2? #NLProc community says: not with similarity scores!🧵 /1

📢📜#NLPaperAlert 🌟Active Learning over Multiple Domains in NLP🌟 In new NLP tasks, OOD unlabeled data sources can be useful. But which ones? We try active learning ♻️, domain shift 🧲, and multi-domain sampling🔦 methods to see what works [1/] arxiv.org/abs/2202.00254

#NLPaperAlert: Generalization in NLI: Ways (Not) To Go Beyond Simple Heuristics With @prajjwal_1 @bkbrd, accepted by insights-workshop.github.io arxiv.org/abs/2110.01518 A proud example of a paper genre I'd call "research realism": a mix of negative & (cautiously) positive results /1