✒️Write LLM tests as unit tests

AI engineers should write good tests for their applications, just as software engineers write unit and integration tests.

⭐️We've added a new testing workflow that helps bridge the gap.

You can add a `unit` decorator to your tests and run them in pytest. The results are logged to LangSmith, where you can (1) track results over time and (2) inspect traces/errors more easily.

A nice middle ground between LLM-based testing and traditional unit testing.

📓Docs: docs.smith.langchain.com/evaluation/faq…
📹YouTube: youtu.be/ZA6ygagspjA

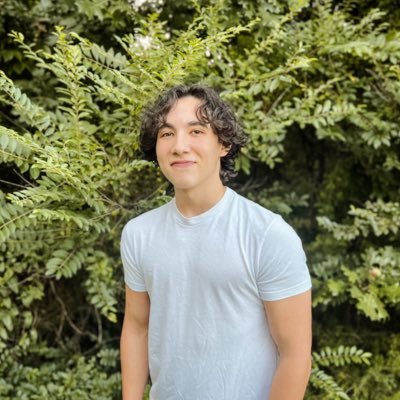

@hwchase17 What if the prompts are changing and you don't know the output code you want ahead of time, any tips here?

@hwchase17 @BlueBirdBack How do I actually ensure that everything runs correctly when I use a large language model to generate SQL commands from natural-language instructions?

@hwchase17 Nice. We have been using a similar strategy, BUT a passing test is not always a guarantee: a test that passes now may still fail on the very next try. So we run each test 3 times, with slight variations of the prompt if there are any.
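
The repeat-with-variations idea in this reply could look something like the sketch below. It is only an illustration under assumptions: `fake_llm` is a hypothetical deterministic stand-in for a real model call, and `PROMPT_VARIANTS`, `run_variants`, and `check_sql` are made-up names, not part of any library.

```python
def fake_llm(prompt: str) -> str:
    """Hypothetical deterministic stand-in for a model call."""
    return "SELECT name FROM users;"


# Three phrasings of the same request (illustrative only).
PROMPT_VARIANTS = [
    "List the names of all users",
    "Show every user's name",
    "Which names are in the users table?",
]


def run_variants(check, prompts):
    """Run `check` once per prompt and return the prompts whose check failed."""
    failures = []
    for prompt in prompts:
        try:
            check(prompt)
        except AssertionError:
            failures.append(prompt)
    return failures


def check_sql(prompt):
    # The property we expect to hold for every phrasing.
    sql = fake_llm(prompt)
    assert sql.strip().upper().startswith("SELECT")


def test_sql_is_stable_across_variants():
    # The test passes only if no variant fails.
    assert run_variants(check_sql, PROMPT_VARIANTS) == []
```

Collecting the failing prompts rather than stopping at the first failure makes it easier to see whether a failure is tied to one phrasing or to the model's behavior in general.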

@hwchase17 House of cards, castle of turds.