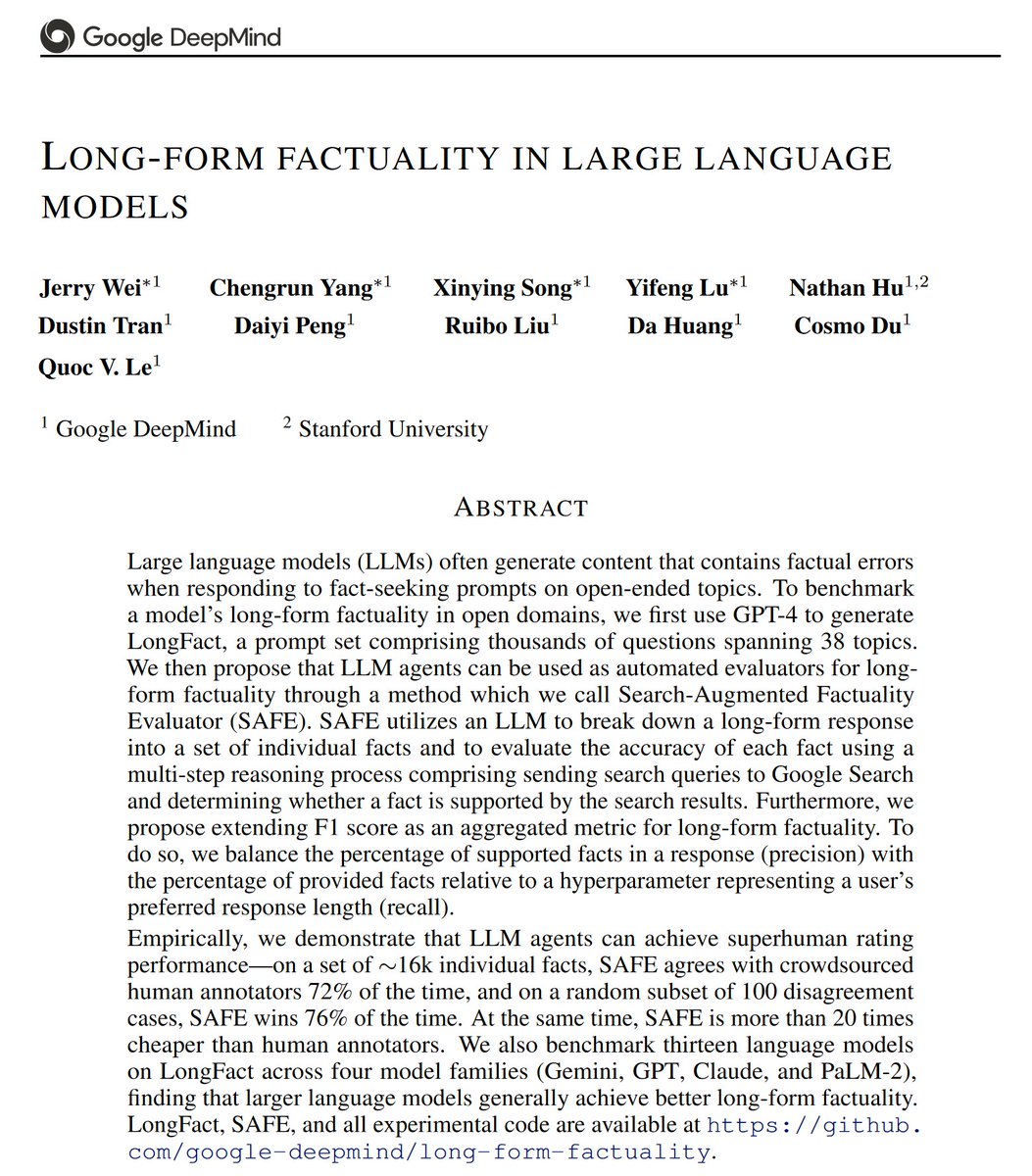

“Superhuman” ≠ better than average human crowdworker. It means better than the best human. I believe the term has been greatly misused below. (@emollick correctly reports what the paper says but I think the paper misused the term, in a critically important way).

@GaryMarcus @emollick The system relies on the top Google results being accurate, and those results are human-authored. The LLM is being used as an automated Google searcher. If the fact is obscure, or the human in question is more thorough than a cursory Wikipedia glance, the results would be different IMO.

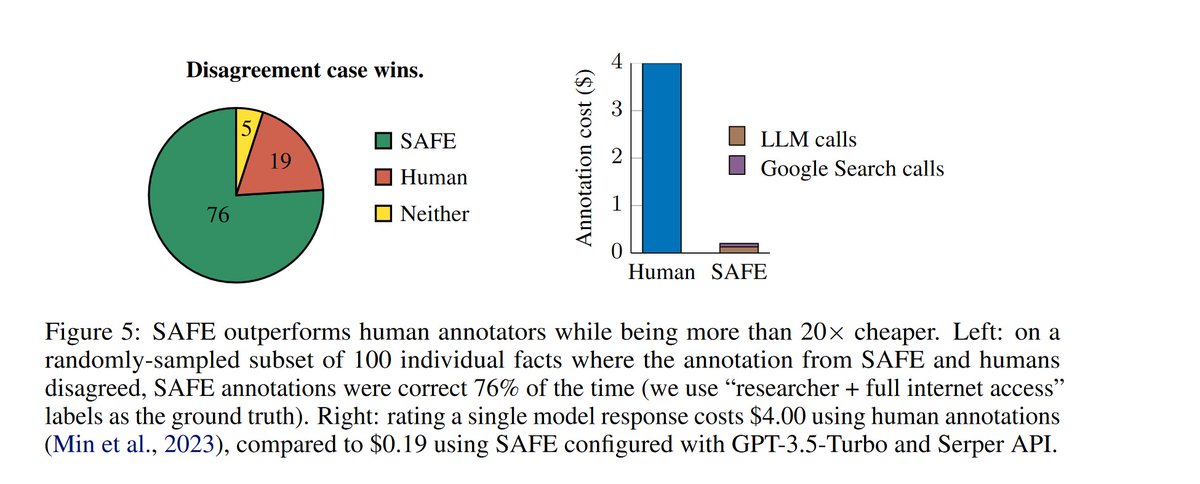

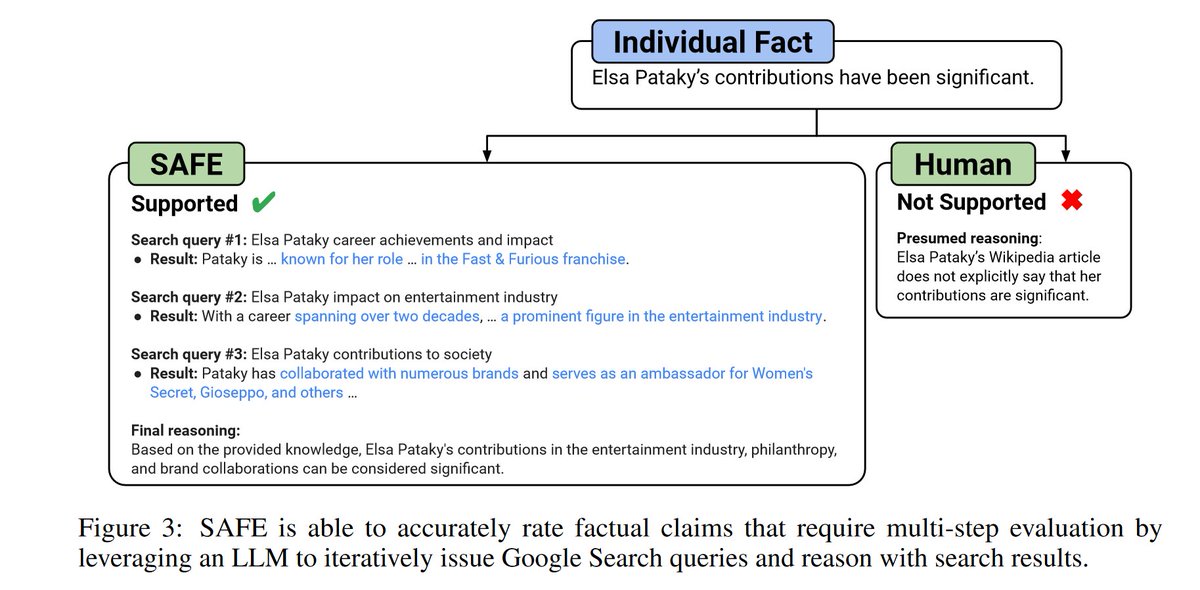

Fair point. And there’s more. The human annotations they used came from a dataset by Min et al. (2023), who created them by hiring experienced annotators on Upwork and instructing them to refer to Wikipedia entries only. aclanthology.org/2023.emnlp-mai… Wei et al. found that SAFE disagreed with these annotations 28% of the time. For such cases, they conducted a thorough evaluation using Google search to help decide which (the Min et al. human annotators or SAFE) was correct. They found that SAFE was correct in 76% of those disagreement cases. So here’s the takeaway: An LLM agent in the SAFE construct with access to Google search is superior to a group of experienced human annotators with access to Wikipedia but not Google search. So, not only is SAFE not exactly “superhuman,” but it also had access to a tool (Google search) that human annotators were denied.

There's a risk of people trusting automated fact checking the same way that lawyers trust made-up LLM legal precedents: giving up agency and thinking "well automated system says it's true, so I won't look further." Imagine a government using that to fine-tune truth for the masses.

@GaryMarcus @emollick Superhuman means better than most humans = better than the median. It is clear that you are forced to look for ever more absurd excuses in order not to have to admit how powerful the approach with the LLMs is and how humans are being outperformed more and more,

@GaryMarcus @emollick And I agree: superhuman is almost like Godlike, so they want to say that their AI is superhuman when they really should just be saying it beats or exceeds humans on existing benchmarks. Superhuman is a ridiculous term to be throwing around.

@GaryMarcus @emollick What will happen even if this tech works:

- People won’t use it if it stops them saying what they want
- They’ll design their own bespoke “fact checkers” to back up their ideology
- There will be campaigns against “woke” fact checking
- Misinformation will spread just as easily

@GaryMarcus @emollick Earlier I was involved in Wikipedia, and usually the falsehoods were quite easy to spot. When LLMs hallucinate it is often darn hard to spot the falsehoods. I suspect this makes LLMs look kind of "superhuman", when they in fact perpetuate a lot of falsehoods.

@GaryMarcus @emollick The core model of an LLM is clearly superhuman at calculating the probabilities of the next token.