Piotr Nawrot @p_nawrot

PhD student in #NLProc @Edin_CDT_NLP | Previously intern @Nvidia & @MetaAI | piotrnawrot.github.io | Warsaw | Joined July 2014

Tweets: 236 | Followers: 3K | Following: 219 | Likes: 498

Heyo, @francoisfleuret wants a platform for posting links to arXiv papers with TL;DRs of at most 200 characters and a voting system, so I just built it: tldr-ai.org. It's still an unpolished MVP, but I can't wait for you to start using it and sharing your feedback!

Given this pace of long-context development, KV-cache compression becomes increasingly important for efficient inference. If you're interested in the topic, take a look at our Dynamic Memory Compression (arxiv.org/abs/2403.09636), which works on top of GQA for extra 2x…

Young scientists regularly ask me for career advice. Academia or industry? Big company or startup? US or Europe? Good scientists in AI disciplines are fortunate to have many choices. But choosing can be stressful. I always give the same advice. 1/10

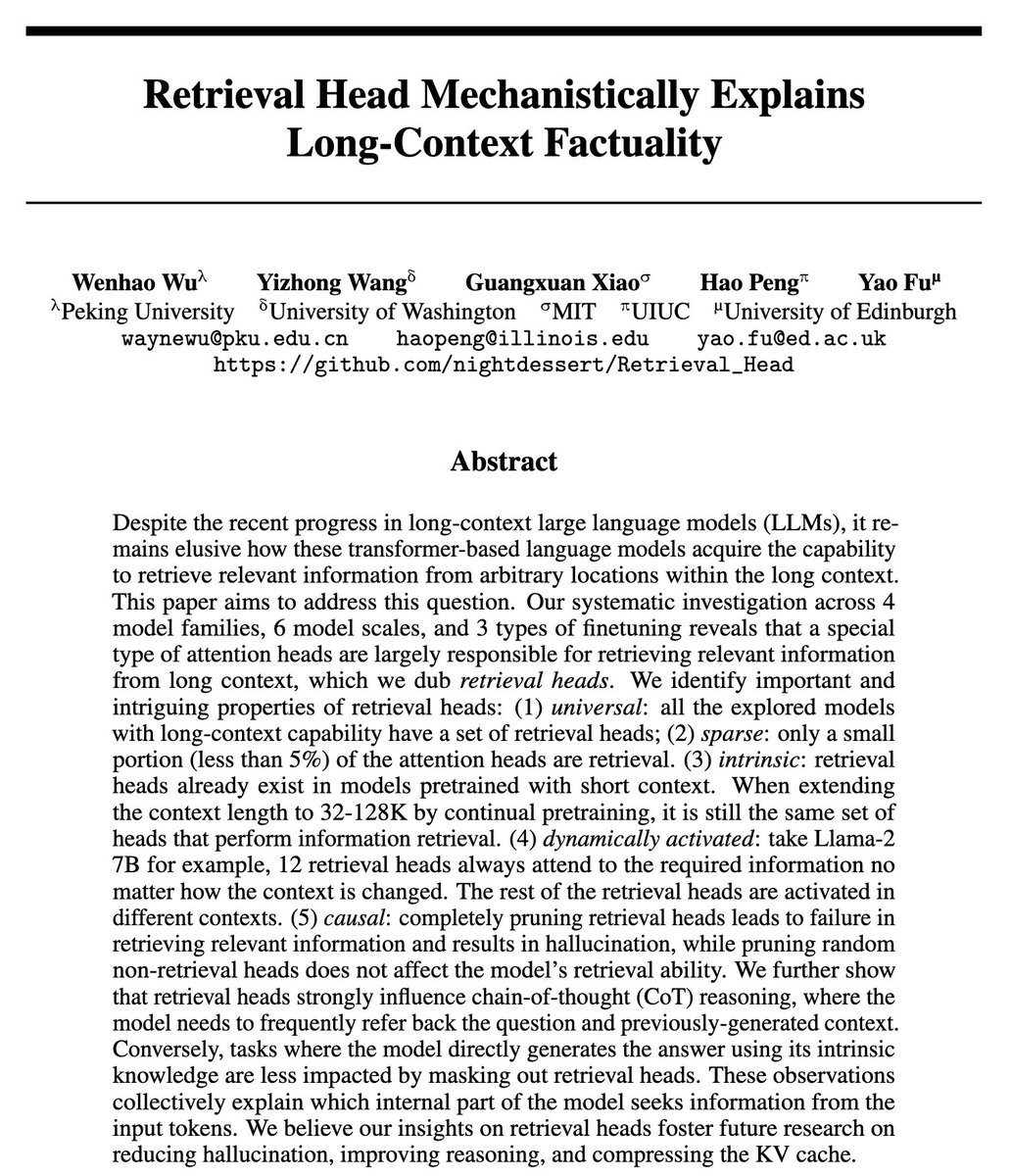

From Claude 100K to Gemini 10M, we are in the era of long-context language models. Why and how can a language model utilize information at any input location within a long context? We discover retrieval heads, a special type of attention head responsible for long-context factuality

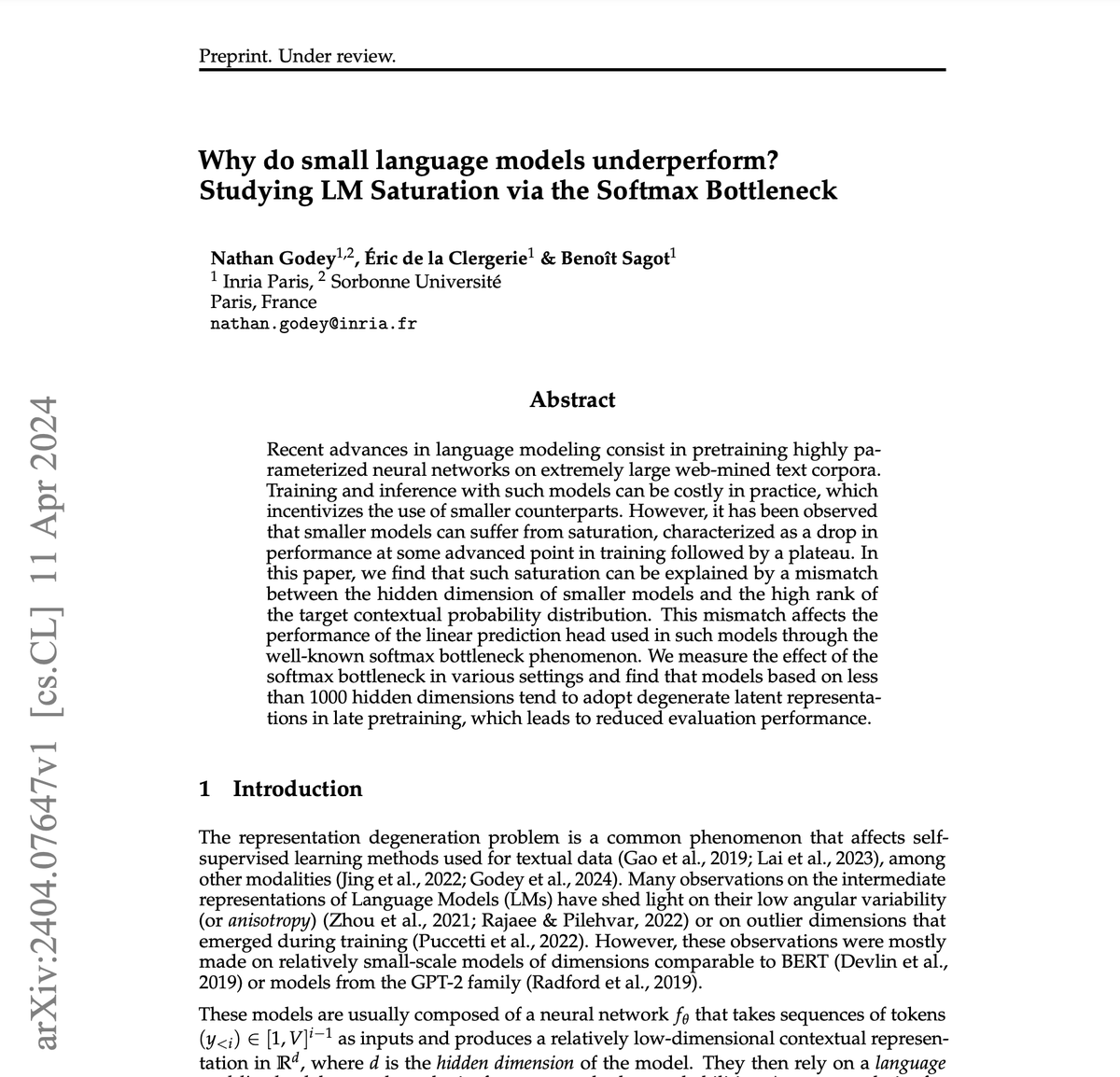

🤏 Why do small Language Models underperform? We prove empirically and theoretically that the LM head on top of language models can limit performance through the softmax bottleneck phenomenon, especially when the hidden dimension <1000. 📄Paper: arxiv.org/pdf/2404.07647… (1/10)

It’s common practice to quantise LLMs to {4, 8}-bit to improve throughput/latency at a small cost to model accuracy. I was thinking about how quantisation behaves in long-context scenarios (>100k) where there are a lot of tokens to process but e.g. so few values to encode your…

I'm trying to tackle an (impossible?) task of keeping up with the long-context LLM evaluation field, and below is a list of recent papers I've found that introduce a new long-context evaluation schema / dataset. Please give me a hand in keeping this list up to date, at least for…

Excited to share our latest work on improving LLM pre-training! 🚀 The amazing @yuzhaouoe et al. found that focusing on how pre-training sequences are composed and attended over can significantly improve the generalisation properties of LLMs on a wide array of downstream tasks,…

Very interesting approach for learnable KV cache compression that results in huge speedups, highly recommend checking out the paper! I especially enjoyed the approach — it's quite close to the field of adaptive computation in DL, which to me is quite undervalued these days

scent teleportation should be getting more publicity 🌺🌷💐 i want to download roses; email candles to my friends; store the distinct smell of a certain forest on a specific day on a thumb drive to revisit years later this is way cooler than Sora or the latest LLM finetune

*Dynamic Memory Compression: Retrofitting LLMs for Accelerated Inference* by @p_nawrot @AdrianLancucki @PontiEdoardo A dynamic KV cache for LLM generation that can be trained to satisfy a given memory budget. arxiv.org/abs/2403.09636

Lots of cool work in the long-context space recently! I award bonus points to this paper for having an efficient implementation w/ realized throughput gains. Nice work Piotr 🫡.

Congratulations, interesting how Github stars seem to correlate to superfluous parameters 😉

emanon @JianSuji

67 Followers 1K Following

Aashish Sairam @AnditwentBooM

28 Followers 34 Following Football | Dance |Listening to music | CSK! | Student | Cricket | Gooner of course! | Iceplex | And that is all about me...!

Andrew Carr (e/🤸) @andrew_n_carr

15K Followers 3K Following science @getcartwheel AI writer @tldrnewsletter advisor @arcade_ai Past - Codegen @OpenAI, Brain @GoogleAI, world ranked Tetris player

vishal @sirsystems2

36 Followers 2K Following

Kayla Cardillo @kaylacardillo

7K Followers 2K Following voted #1 most interesting human by the robots I train. committed to making a tangible difference in society with tech enrichment

Jędrzej Maczan @jedmaczan

219 Followers 168 Following https://t.co/TdsFvi4ymt 💙 @keep_FOMO_away. ASR research for dysarthric speech. Lead AI/ML @DNV_Group. Cover painting: https://t.co/rs0nMCNnN6

Daniel Atonge @AtongeDaniel

70 Followers 649 Following Development Lead TechVenia | Passionate Software & Cloud Engineer 👨🏽💻

song @sliver0425

4 Followers 350 Following

jr @jamesrichmanx

16K Followers 223 Following

$$$ @sp1d3r_8eyes

58 Followers 398 Following

ThomasROBERTparis5636.. @ThomasROBERT_75

0 Followers 95 Following

loris luise @lorisluise

111 Followers 1K Following

Luz Arbry @arbry_l

103 Followers 5K Following

Abraham Antony @AAntony09

22 Followers 322 Following Tech enthusiast passionate about innovation and problem-solving. Helping companies find top talent at https://t.co/O9oxzat068. Always eager to connect and learn!

fan @fan_ghxyydx

0 Followers 12 Following

Ibraheem @CODER1r

149 Followers 1K Following Building awesome softwares 💻 | Passionate software engineer transforming ideas into elegant code. | #softwareengineer

Ruibo Liu @RuiboLiu

2K Followers 1K Following Research Scientist @GoogleDeepMind. AI Research with Humans in Mind.

liuyong @forrestbing

265 Followers 5K Following I am a researcher in AIGC, Multi-modality and VitrualHuman tech direction

Agastya Seth @agastya_seth

29 Followers 137 Following Techie | Innovator | Musician - Senior Software Engineer (R&D) at Cadence Design Systems

🧠ZedNexus 🚀e/ac.. @RozzPower

7 Followers 37 Following 🚀 AI Acceleration Enthusiast | Embracing the future at warp speed 🧠 Exploring the nexus of AI & human experience 🌐 Connected to the pulse of tech evolution

Sai Wanna Aung @aung_sai47631

0 Followers 6 Following

Suvo 🇮🇳 @suvasish114

80 Followers 852 Following 🎓 MSc CS grad student 🔍 Tweet about #tech #geopolitics 🗿 Interested in #DeepLearning and #LLM

thermal2 @thermal262

15 Followers 267 Following

acidoom @acidoom

93 Followers 964 Following

Bryan Briney @bryanbriney

455 Followers 463 Following Associate Professor at Scripps Research, studying antibody responses to immunization and infection. Big fan of open science and dogs.

Airth @ElementalPower7

30 Followers 313 Following

Wayne Painters 🌻.. @PaintersWayne

1K Followers 2K Following Let’s do this! Follow me in the fight against sedition, hypocrisy and anti-democracy in an already great country. Don’t give in to the magat gaslighting! 🌊

lhgf @HrLhgf

1 Followers 734 Following

Nikhil Reddy @NikhilR80173692

1 Followers 73 Following

Isaac Morton @MrPenguinardo

6K Followers 2K Following

Beef with Big Data @beefwithbigdata

22 Followers 53 Following Exploring ideas in data analytics to optimize efficiency and sustainability in the beef industry. Please visit my blog at the link below.

Oskar Holmstrom @oskar_holmstrom

27 Followers 181 Following PhD student in NLP at Linköping University. Working on improving language models with external knowledge bases.

Abi Aryan 🛠️ @GoAbiAryan

7K Followers 2K Following 🗜ML Engineer 🛠️ Building Multi-Modal Models @AbideAI 🖋 Writing #LLMOps - Managing LLMs in Production book (O'Reilly) 🐦 Talk to me about LLMs, MLSys & SecOps

Aviad Tsherniak @aviadt

210 Followers 510 Following AI for science; Previously ML for cancer research @BroadInstitute; Founder @CancerDataSci @CancerDepMap

Nathan Lambert @natolambert

25K Followers 690 Following Figuring out AI @allen_ai, "rl boi" DM me papers. Writes @interconnectsai, talks @retortai Has phd and some credentials

Kelly Marchisio (St. .. @cheeesio

1K Followers 558 Following Multilingual NLP @cohere. Formerly: PhD @jhuclsp Alexa Fellow @amazon dev @Google MPhil @cambridgenlp EdM @hgse 🔑🔑¬🧀 (@kelvenmar20)

Devendra Chaplot @dchaplot

8K Followers 365 Following Building next-gen AI at @MistralAI. Past: Research Scientist at Facebook AI Research. Ph.D. @SCSatCMU, BTech @iitbombay CS.

Peter David Fagan.. @peterdavidfagan

144 Followers 466 Following PhD student in robotics at the University of Edinburgh.

AK @_akhaliq

310K Followers 3K Following AI research paper tweets, ML @Gradio (acq. by @HuggingFace 🤗) dm for promo follow on Hugging Face: https://t.co/q2Qoey80Gx

Andreas Grivas @andreasgrv

375 Followers 550 Following PhD Candidate in Natural Language Processing at the University of Edinburgh.

Peter J. Liu @peterjliu

4K Followers 2K Following Research Scientist @ Google B̵r̵a̵i̵n̵ DeepMind, frontier language models research (aka chatbot engineer). Opinions are my own. 🤖🔄🚀

Matt Mahoney @mattmahoneyfl

725 Followers 296 Following Data compression research, teaching C++, ultrarunning

Zhenyu (Allen) Zhang @KyriectionZhang

271 Followers 223 Following Ph.D. student @UTAustin || In-coming Research Intern @Meta || Previously @MSFTResearch @Livermore_Lab || Machine Learning, Quantum Computing

Beidi Chen @BeidiChen

6K Followers 343 Following Asst. Prof @CarnegieMellon, Visiting Researcher @Meta, Postdoc @Stanford, Ph.D. @RiceUniversity, Large-Scale ML, a fan of Dota2.

Kevin Patrick Murphy @sirbayes

42K Followers 334 Following Research Scientist at Google Brain / Deepmind. Interested in Bayesian Machine Learning.

Graham Neubig @gneubig

31K Followers 588 Following Associate professor at CMU, studying natural language processing and machine learning.

Yangqing Jia @jiayq

12K Followers 263 Following Founder @leptonai. @UCBerkeley alumni. ex @google & @facebook. ex vp @AlibabaGroup. Open source work on caffe, @pytorch, @tensorflow, & @onnxai.

Konstantin Kisin @KonstantinKisin

528K Followers 2K Following Politically Non-Binary Satirist Podcast: @triggerpod Speaking: [email protected] Media: [email protected] Book: https://t.co/GgTCJ7iPE8

Québec.AI @Quebec_AI

147K Followers 76 Following Québec Artificial Intelligence ( Français : @Quebec_IA ) #QuebecAI

Mosh Levy @mosh_levy

267 Followers 163 Following phd student @biunlp. studying ai robustness and behaviors.

Max Ryabinin @m_ryabinin

1K Followers 167 Following Large-scale deep learning & research @togethercompute Learning@home/Hivemind author, PhD in decentralized DL

Jonathan Richard Schw.. @schwarzjn_

4K Followers 229 Following Efficient Machine Learning @Harvard | ex- Senior RS @GoogleDeepMind | PhD @ucl @gatsbyucl | Open, international & collaborative science 🇬🇧🇩🇪🇺🇲🇭🇰🇯🇵

RJ Skerry-Ryan @rustyryan

796 Followers 1K Following 🌮🤖 Speech and Language Generative Modeling. Sound Understanding, Machine Perception @ Google. @mixxxdj core team ♊🌊 @[email protected] @[email protected]

Aleksander Madry @aleks_madry

31K Followers 166 Following Head of Preparedness at OpenAI and MIT faculty (on leave). Working on making AI more reliable and safe, as well as on AI having a positive impact on society.

main @main_horse

8K Followers 477 Following AGI Believer. Haven't applied @OpenAI. Likes are not always endorsement.

Grant Sanderson @3blue1brown

365K Followers 362 Following Pi creature caretaker. Contact/faq: https://t.co/brZwdQfdif

Jinjie Ni @NiJinjie

154 Followers 291 Following Researcher @NUSingapore working on LLMs; Ph.D. in CS @NTUsg; Prev research intern @AlibabaDAMO; Open to research collaborations!

Vinh Q. Tran @vqctran

1K Followers 282 Following i research language models @Google, all thoughts my own, he/him

jack morris @jxmnop

11K Followers 764 Following getting my phd in nlp @cornell_tech 🚠 // academic optimist // tweeting from the snack aisle at trader joes

Carlos Gemmell @carlos_gemmell

135 Followers 80 Following Co-Founder & CTO at Malt AI. PhD in NLP at the University of Glasgow. Machine learning | LLM Distillation | LLMs for code

Alex Gu @minimario1729

2K Followers 2K Following phd @MIT_CSAIL, llm for math and code. intern @MetaAI and analyst @pillar_vc. prev @BigCodeProject, @MITIBMLab, @JaneStreetGroup, @PonyAI_tech

Sebastian Gehrmann @sebgehr

5K Followers 2K Following Head of NLP, CTO office, @Bloomberg. (he/him) Generating natural language, one word at a time. Also making sense of that language afterwards. views my own

Shikhar @ShikharMurty

1K Followers 127 Following PhD student at @StanfordNLP, @StanfordAILab. Ex: @GoogleDeepMind, @MSFTResearch Interested in structure and interpretation of human language

Alex Graveley @alexgraveley

31K Followers 933 Following I’m Alex Graveley, creator of GitHub Copilot, AI Tinkerers, Dropbox Paper, MobileCoin, and Hackpad. Building @ai_minion Hiring https://t.co/nsHar8OLPC

Shital Shah @sytelus

10K Followers 8K Following Deep learning research and code. If universe is an optimizer, what is the loss function? All opinions are my own.

jörn jacobsen @jh_jacobsen

2K Followers 2K Following pushing representation learning boundaries at 🍏 prev: @VectorInst/@UofT, @bethgelab, @UvA_Amsterdam, @maxplanckpress

Xiang Yue @xiangyue96

2K Followers 434 Following Postdoc @LTIatCMU. PhD from Ohio State @osunlp. Training & evaluating foundation models. Pushing the boundaries of AI🤖. Previously @MSFTResearch.

Avijit Thawani (Avi) @thawani_avijit

838 Followers 1K Following Graduating PhD @USC_ISI. LLMs/GenAI. Fintech Founding MLE. Filmmaker 100k+ views. Lived in UK, Singapore, India, US. ex: Microsoft Research, Amazon Alexa, AI2.

Rafael Rafailov @rm_rafailov

3K Followers 637 Following Ph.D. Student at @StanfordAILab. I work on Foundation Models and Decision Making. Previously @GoogleDeepMind @UCBerkeley

kyutai @kyutai_labs

6K Followers 6 Following

Ilya Sutskever @ilyasut

370K Followers 2 Following towards a plurality of humanity loving AGIs @openai

Ben Kuhn @benskuhn

7K Followers 289 Following Care a lot and try hard • making language models safer @AnthropicAI • prev CTO @WaveSenegal 🐧❤️

Nico Daheim @ndaheim_

200 Followers 391 Following @ELLISforEurope PhD student in NLP and ML at @UKPLab @TUDarmstadt and @ETH_en. Previously MSc. in Data Science @RWTH.

Akari Asai @AkariAsai

11K Followers 650 Following Ph.D. student @uwcse & @uwnlp. NLP. IBM Ph.D. fellow (2022-2023). Meta student researcher (2023-) . ☕️ 🐕 🏃♀️🧗♀️🍳

Grzegorz Chrupała.. @gchrupala

6K Followers 1K Following Associate Professor at Tilburg University Computational Linguistics • Machine Learning

@p_nawrot I, for one, am still interested in this :)

@p_nawrot convergence to very low loss in a few thousand steps, the model predicts the same word all the time

@p_nawrot I tried converting small unidirectionally pretrained pre-norm models and saw them collapse when trained with bidirectional attention. Wondering what the conditions are to ensure successful conversion

Even in the simplest case of evaluating MMLU in a 5-shot setting, bidirectional attention would allow the "shots" to look at each other, and maybe it would boost the in-context learning capabilities? I'm really curious why I haven't seen folks trying such things.

@jeethu Yeah, it feels very orthogonal, just how GQA is orthogonal to quantization too! We're working on releasing the quantization results too!

@p_nawrot Interesting and novel work, thanks a lot for sharing! Looks orthogonal to KV cache quantization. I wonder if both can be applied together?

What happened to simulating encoder-decoder with decoder-only by fine-tuning autoregressive GPTs to do bidirectional attention over the input (prompt)? I felt that it was an obvious thing to do as a post pre-training step and everyone was talking about it a year ago at…
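For readers who have not met the idea, the attention pattern described here is commonly called a prefix-LM mask: prompt tokens attend to each other in both directions, while generated tokens stay causal. Below is a minimal sketch in plain Python. It is not taken from any of the work mentioned in this thread, and the function name `prefix_lm_mask` and its parameters are hypothetical, chosen only for illustration.

```python
def prefix_lm_mask(seq_len: int, prompt_len: int) -> list[list[bool]]:
    """Build an attention mask where mask[i][j] is True if position i may attend to j.

    Assumption for this sketch: the first `prompt_len` positions are the prompt
    and attend to each other bidirectionally; all later positions are causal.
    """
    if not 0 <= prompt_len <= seq_len:
        raise ValueError("prompt_len must be between 0 and seq_len")
    mask = []
    for i in range(seq_len):
        row = []
        for j in range(seq_len):
            causal = j <= i                                 # standard decoder-only rule
            both_in_prompt = i < prompt_len and j < prompt_len  # bidirectional block
            row.append(causal or both_in_prompt)
        mask.append(row)
    return mask


# Illustrative usage: a 3-token prompt followed by 2 generated tokens.
mask = prefix_lm_mask(seq_len=5, prompt_len=3)
for row in mask:
    print("".join("x" if allowed else "." for allowed in row))
```

With `prompt_len=0` the mask reduces to the ordinary causal (lower-triangular) mask, so a fine-tuning run could in principle switch between the two patterns by changing one argument.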

@p_nawrot Thank you very much! You're welcome to drop by!

Chatting with @GroqInc’s CEO @JonathanRoss321. Groq has super fast token generation capabilities now. And, I was excited also to hear about his plans to scale up capacity aggressively and also expand this to other models than just LLMs! This is a good time to be building AI…

@p_nawrot @kaffyou Because, unlike 32-bit -> 16-bit, quantization at lower precision is not straightforward. If we simply quantize 16-bit -> 8-bit, there will be a significant loss in performance. To overcome this, quantization -> dequantization happens on demand, and this process leads to slower generation.

@p_nawrot Quantization techniques like zero-point quantization or absmax quantization lead to a drop in accuracy at lower precision. Hence new techniques like LLM.int8() are used at lower precision. Check out the paper on LLM.int8() for more details.
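The two baseline schemes named in this reply can be sketched in a few lines. The following is a plain-Python illustration of symmetric absmax and asymmetric zero-point quantization to 8 bits, written under stated assumptions: per-list (per-tensor) scaling, round-to-nearest, and hypothetical function names. It is not the LLM.int8() method and is not code from any library.

```python
def absmax_quantize(values, bits=8):
    """Symmetric quantization: scale by the largest absolute value."""
    qmax = 2 ** (bits - 1) - 1              # 127 for 8 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    return [round(v / scale) for v in values], scale


def absmax_dequantize(q, scale):
    return [x * scale for x in q]


def zeropoint_quantize(values, bits=8):
    """Asymmetric quantization: map [min, max] onto the full integer range."""
    qmin, qmax = -(2 ** (bits - 1)), 2 ** (bits - 1) - 1   # -128..127 for 8 bits
    lo, hi = min(values), max(values)
    scale = (hi - lo) / (qmax - qmin) if hi > lo else 1.0
    zero_point = round(qmin - lo / scale)
    # Clamp so rounding at the edges cannot leave the integer range.
    q = [min(qmax, max(qmin, round(v / scale) + zero_point)) for v in values]
    return q, scale, zero_point


def zeropoint_dequantize(q, scale, zero_point):
    return [(x - zero_point) * scale for x in q]


# Illustrative round trip on made-up values.
values = [-1.0, 0.0, 0.5, 2.0]
q_abs, s_abs = absmax_quantize(values)
q_zp, s_zp, zp = zeropoint_quantize(values)
print(q_abs, absmax_dequantize(q_abs, s_abs))
print(q_zp, zeropoint_dequantize(q_zp, s_zp, zp))
```

In both schemes a single scale is shared by every value in the list, so one large-magnitude entry widens the step size for all the others. That is one way to see why accuracy can drop as the bit width shrinks.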

Given this pace of long-context development, KV-cache compression becomes increasingly important for efficient inference. If you're interested in the topic, take a look at our Dynamic Memory Compression (arxiv.org/abs/2403.09636), which works on top of GQA for extra 2x…

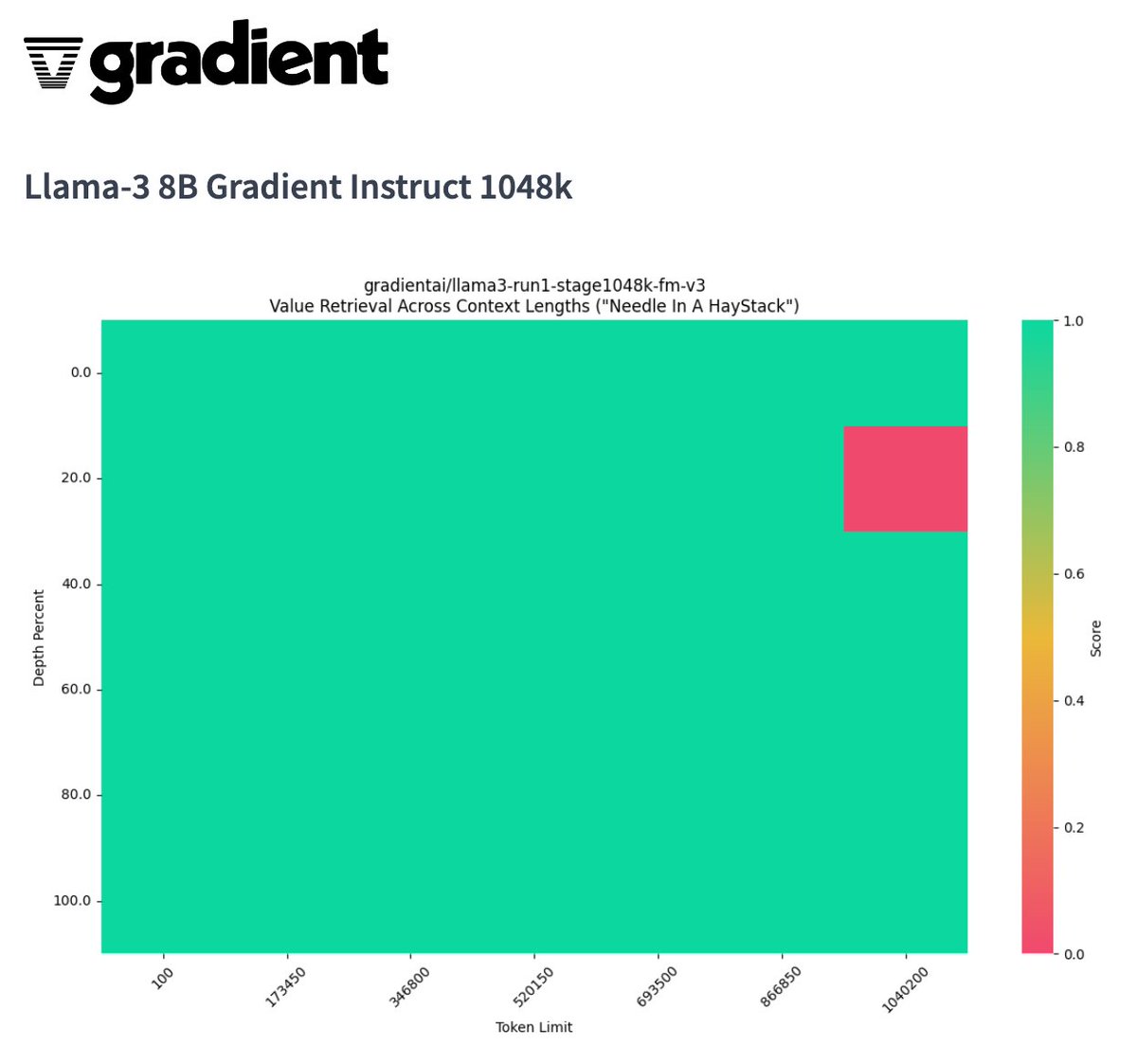

We've been in the kitchen cooking 🔥 Excited to release the first @AIatMeta Llama-3 8B with a context length of over 1M on @huggingface - coming off of the 160K context length model we released on Friday! A huge thank you to @CrusoeEnergy for sponsoring the compute. Let us know…

How would you run an efficient forward pass for a model such as this?

@langer_han I usually go with @p_nawrot 😅

Life update: today is my first day as a Member of Technical Staff at @cohere!
