Niklas Muennighoff @Muennighoff

@ContextualAI | Interests: AI/LLM Research & Health ❤️ | Past: @huggingface @PKU1898 | muennighoff.github.io | Joined May 2020

Tweets: 93 | Followers: 5K | Following: 319 | Likes: 510

We've added some experiments on GRIT + KTO in the paper to improve generative performance (arxiv.org/abs/2402.09906). Also, I'll give a talk on GRIT in 6 hours (below) if you want to discuss/learn more🙂 https://t.co/TWnZebHAsy

MTEB is the most common text embedding benchmark with 190K installs/mon & 120K leaderboard visits/mon. We're extending it to be massively multilingual. Anyone is invited to contribute & co-author an upcoming publication📜 Details: github.com/embeddings-ben…

RAG 2.0 is about making retrieval-augmented generation more end-to-end & learned, e.g. Self-RAG, RA-DIT, GRIT - High-impact research direction imo! 😊

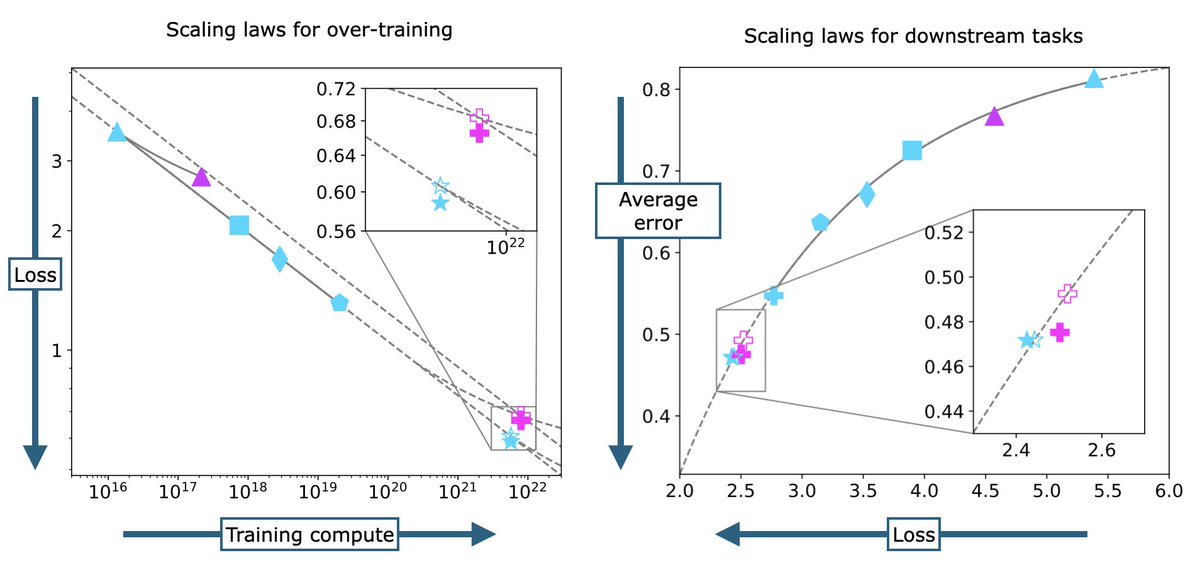

The best LLMs now train way beyond Chinchilla compute-optimality ("over-training") -- but how predictable is scaling in this regime?🎢 Work by the amazing @sy_gadre shows that it's very predictable🔎 https://t.co/Fz5jbExo72

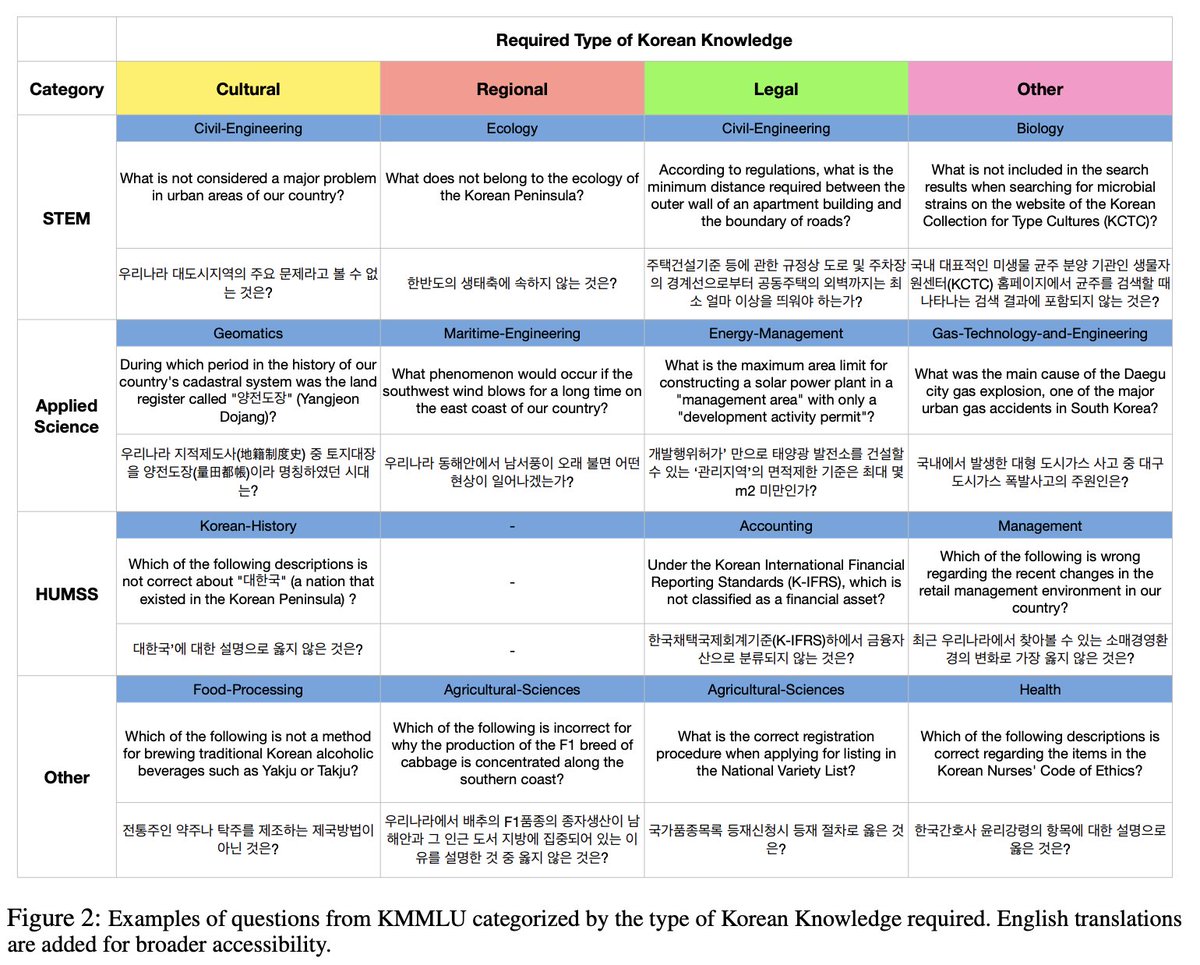

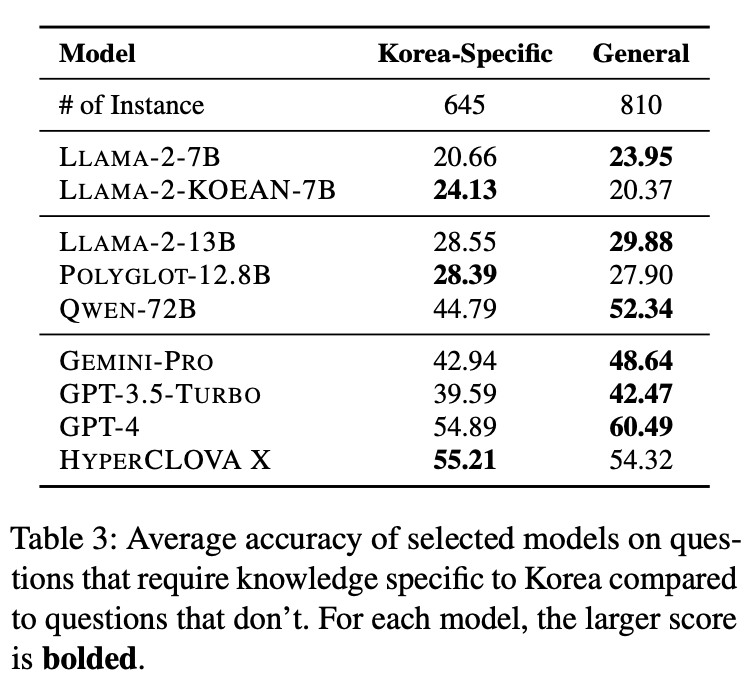

The best open model on Korean MMLU (KMMLU) is the primarily Chinese & English Qwen model. Surprising to me & hints at cool research - maybe @huybery has thoughts🤔 Great work by the talented @gson_AI & team❤️

StarCoder2 15B is trained on 4.3 trillion total tokens via 4.5 epochs!💫 Great work by @BigCodeProject ❤️

What’s the most impactful LLM📚data research rn? Find out in this paper by the talented @AlbalakAlon arxiv.org/abs/2402.16827 Good directions imo🙂: ▶️Curriculum training ▶️Sample-level weights (extending DoReMi) ▶️Quality-filter+repeating (extending Scaling Data-Constrained LMs)

For BLOOMZ/mT0 we had to rely on the finding that instruction tuning generalizes to unseen langs to use them beyond their 46. By tuning on 101 langs via Aya data/xP3x, the Aya models have much better coverage leading to better performance🌍🌎🌏Very impressed by the Aya team💙

Abhilasha Ravichander @lasha_nlp

3K Followers 2K Following Postdoc @allen_ai, working on Natural Language Processing (#NLProc) | PhD @SCSatCMU @LTIatCMU | Friend of @NLPWithFriends | @[email protected]

nikesh patil @NikeshPatil1998

1 Followers 155 Following Passionate Java developer | Code enthusiast | Problem solver | | Sharing insights and tips on Java development | | Lifelong learner | #JavaDeveloper.

Alex Dimakis @AlexGDimakis

13K Followers 2K Following UT Austin Professor. Researcher in Machine Learning and Information Theory. National AI Institute on the Foundations of Machine Learning (IFML) Co-director.

Ashish Arora @__AshArr__

0 Followers 54 Following

Vanshit Mehta @vanshitkmehta

2 Followers 55 Following Software Dev @reliancejio , Building Cool Things.

Shubhanshu Arya @thisisshubh21

0 Followers 54 Following Aspiring Software Developer 👨💻. Always try to learn something. Loves 🍎

Dhruv Charne @CharneDhruv

1 Followers 133 Following

Dhrumil Bhut @BhutDhrumil

11 Followers 145 Following

Mohammed Saqib Patel @patel_saqib26

41 Followers 648 Following

Ahmed Hisham @AhmedHi08078280

0 Followers 50 Following

Jacek (Jomsborg.eth) @timelessdev

1K Followers 5K Following The DAO investor. Early @Aleph__zero inv. Decentralization. Born on Vikings island called Jomsborg. Applied math. My posts are not financial advice.

Charles Vaske @CharlesVaske

761 Followers 943 Following I work on genomics, but love all of biology and any means to investigate it with math, probability, and computation. He/him/his/they/their.

Tech Enthusiast @Im_techie

2 Followers 52 Following

Rupam Ash @rupam_ash

28 Followers 124 Following Computer Science Student . Tech Enthusiast . Learning MERN Stack .

Afroz Mohiuddin @afrozenator

1K Followers 5K Following Research Engineer at Google Brain. Interested in Science, Psychology, Investing, Design and generally almost everything. Good Thoughts, Good Words, Good Deeds.

Hassan arzoo @hssnarzoo

16 Followers 220 Following

Arif Ahmad @arif_ahmad_py

282 Followers 7K Following All things AI, Computer Science and Circuits! Prev. @GoogleAI

!(hardyNeverCodes) @solanki_haard

39 Followers 131 Following check my ongoing endeavors https://t.co/5U9sMlWI1Q Making robust Backends for Webapps|| NodeJs||Express Love To REACT⚛️ Currently Diving Into World Of WEB3

Sahil Verma @Sahil1V

460 Followers 1K Following PhD student @uwcse. Robustness and Interpretability in ML. Former intern at @amazon, @itsArthurAI, @ETH_en, @MIT, @NUSingapore. Undergrad @IITKanpur

Zhengping JIANG @zhengping_jiang

51 Followers 397 Following PhD Student in Natural Language Processing at JHU-CLSP

Harish R @HarishR93882470

9 Followers 63 Following

Jalil Umer @JalilDev

1 Followers 53 Following

Dhruv Rajput @dhruuuvv__

2 Followers 62 Following

Ross @ma1547372858

15 Followers 1K Following

Vigneshwaran N @Vigneshwaran__N

47 Followers 670 Following ML/NLP engineer. Curious about people and minds.

Wenting Zhao @wzhao_nlp

812 Followers 356 Following PhD student @cornell_tech Food for life, NLP for soul!

Dipan Mondal @dipanmondal22

18 Followers 62 Following Nothing is life but there are something in math.

LLM360 @llm360

1K Followers 50 Following A framework for open-source LLMs to foster transparency, trust, and collaborative research.

Steve Li @steveshenli

137 Followers 160 Following CS + Stat @ Harvard. Previously AI Research at BAIR

Aryan Panchal @naughtypaanda

2 Followers 139 Following

Vansh Chitransh @Vansh_Twts

4 Followers 57 Following

Jeff Rasley @jeffra45

678 Followers 928 Following @SnowflakeDB AI Research Team. @MSFTDeepSpeed co-founder, @BrownCSDept PhD, @uwcse alum

sarah guo // convicti.. @saranormous

91K Followers 3K Following startup investor and builder, founder @w_conviction. accelerating AI adoption, interested in progress. tech podcast: @nopriorspod

Shuang Li @ShuangL13799063

5K Followers 755 Following Incoming Assistant Professor at the University of Toronto and Vector Institute. Generative AI (Vision/Language), Embodied AI, Robotics.

merve @mervenoyann

56K Followers 4K Following open-sourceress at @huggingface 🧙🏻♀️ proud mediterranean 🍋 I do TL;DR on ML papers

Anne Ouyang @anneouyang

3K Followers 582 Following Incoming CS PhD student @Stanford, currently cuDNN @Nvidia | M.Eng, B.S. in CS @MIT | self-improving ML systems + performance engineering

Yann Dubois @yanndubs

4K Followers 1K Following PhD student @stanfordAILab | Prev: AI resident @metaai, @vectorinst, @CambridgeMLG

Kenneth Enevoldsen @KCEnevoldsen

326 Followers 696 Following interdisciplinary Ph.D. Student working on representation learning in Clinical NLP and Genetics at @AarhusUni and @interact_minds

noahdgoodman @noahdgoodman

2K Followers 109 Following Professor of natural and artificial intelligence @Stanford. Research Scientist at @GoogleDeepMind. (@StanfordNLP @StanfordAILab etc)

Katherine Tian @kattian_

716 Followers 494 Following cs/stat @harvard, working on calibration & factuality of LLMs, prev @GoogleAI tensorflow, golden state @warriors fan

Orion Weller @orionweller

863 Followers 745 Following PhD student @jhuclsp. Previously: @apple, @allen_ai, @byu. #NLProc and #IR research

Sijia Liu @letti_liu

47 Followers 163 Following Research Scientist @Amazon AGI. | Interests: AI/LLMs/Conversations. | Previously: @CarnegieMellon @pku1898

Akash Mahajan @akashmjn

595 Followers 393 Following MTS @ContextualAI | prev in awe of PNW beauty 🏔 @Azure Speech; @Stanford @atherenergy @iitmadras

Aditya Bindal @adbindal

134 Followers 681 Following Mostly AI, Cricket, Reading. VP Product @ContextualAI

Shikib Mehri @shikibmehri

339 Followers 808 Following MTS @ContextualAI | Previously @AmazonScience; PhD @LTIatCMU

John Yang @jyangballin

2K Followers 450 Following CS/NLP MS student @princeton_nlp Previously @Berkeley_EECS

Reinhard Heckel @HeckelReinhard

409 Followers 286 Following Associate Professor at Technical University of Munich and Adjunct Faculty at Rice University

Xindi Wu @cindy_x_wu

940 Followers 808 Following PhD student @PrincetonCS | Data-centric multimodal ml | prev @RealityLabs @roboVisionCMU @CMU_Robotics @Snapchat

Sungdong Kim @SungdongKim4

370 Followers 174 Following Research Scientist @ NAVER Cloud; MS&PhD student @ KAIST #NLP #LLM #Alignment

Seungone Kim @seungonekim

929 Followers 832 Following Incoming Ph.D. student @LTIatCMU, M.S. student @kaist_ai working on LLM Evaluation & Systems that Improve with (Human) Feedback | Prev: @yonsei_u @NAVER_AI_Lab

Holy Lovenia @HolyLovenia

70 Followers 19 Following

Jiawei Liu @JiaweiLiu_

2K Followers 957 Following Simplifying the making of great software. PhD Student @plfmse @IllinoisCS.

Yuxiang Wei @YuxiangWei9

290 Followers 216 Following PhD student @IllinoisCS. Incoming AI/ML Intern @SnowflakeDB

Federico Cassano @ellev3n11

126 Followers 67 Following Undergraduate Researcher @neu_prl Upcoming @scale_AI Previous industry research @cursor_ai, @Roblox, @trailofbits Papers here: https://t.co/PgUSaxXs1B

Carolyn Anderson @linguistcarolyn

629 Followers 772 Following @Wellesley CS professor and computational linguist. Studies meaning with computational and experimental tools. https://t.co/0k477lFlwd

Lingming Zhang @LingmingZhang

1K Followers 308 Following Associate Professor @plfmse @IllinoisCS. Enjoy breaking, fixing, and synthesizing software. SE | PL | FM | LLM4Code

Miltos Allamanis 🇪.. @miltos1

1K Followers 338 Following Researching deep learning for generating and understanding programs. Research Scientist @GoogleAI Also at @[email protected] (Opinions are my own.)

Liangming Pan (on job.. @PanLiangming

1K Followers 717 Following Postdoc at @ucsantabarbara @ucsbNLP | Ph.D. from @NUSingapore @wing_nus | Researcher in #NLProc | Interests: Reasoning, QA, Generation, Fact Checking

Matt Valoatto @mvaloatto

2K Followers 646 Following Entrepreneur, designer, investor @huggingface 🤗, @deforum_art, @talktomem1, wingmate / interested in AI, design, art, tech, science / happy dad of 2

Haewon Jeong @HaewonJeong00

240 Followers 183 Following Assistant Prof @UCSB ECE. Previously, Ph.D student @CMU_ECE & Post-doc @Harvard @hseas. She/her/hers. https://t.co/eukRWcPU9i

Shiyu Chang @CodeTerminator

686 Followers 400 Following Assistant Professor at UC Santa Barbara. Tweets reflect my views alone.

William Wang @WilliamWangNLP

14K Followers 719 Following UCSB NLP Lab + ML Center. https://t.co/6TOnqbk6YT https://t.co/KJYhnav3Et Mellichamp Chair Prof. at UCSB CS. PhD @ CMU SCS. Areas: #NLProc, Machine Learning, AI.

Xinyi Wang @XinyiWang98

795 Followers 299 Following UC Santa Barbara CS PhD student working on ML/NLP

Pengcheng Yin @pengchengyin

577 Followers 123 Following @GoogleDeepMind. Formerly a Neulab member @LTIatCMU. Interested in machine learning for NLP and code, dog training and aviation.

Aakanksha Chowdhery @achowdhery

7K Followers 3K Following LLMs @ Google DeepMind :: PaLM, Gemini // Previously @MSFTResearch, @Stanford, @Princeton // views my own and subject to change

Anton Lozhkov @anton_lozhkov

2K Followers 283 Following Open-sourcing Language Models @huggingface ✨

Marc Marone @ruyimarone

421 Followers 586 Following PhD student at Johns Hopkins @jhuclsp. Previously @microsoft Semantic Machines, @mstranslator, @GeorgiaTech

Tianbao Xie @TianbaoX

1K Followers 1K Following Ph.D. student of @XLangNLP lab and @HKUNLP group 2022. Advised by @taoyds and @ikekong . e/ia

XLang NLP Lab @XLangNLP

509 Followers 27 Following a group of nlpers at @HKUniversity working on language model agents, executable language grounding, code generation, semantic parsing, and interactive systems.

Tu Vu @tuvllms

3K Followers 894 Following Research Scientist @GoogleDeepMind & Assistant Professor @VT_CS. PhD from @UMass_NLP. #NLProc

@natfriedman Section 7 of "scaling data constrained language models" has an experiment supporting this claim.

@zhangir_azerbay This part is interesting.

@zhangir_azerbay This is the best demonstration I've seen so far! Thank you. But it doesn't totally settle things for me. At 20% code the performance is on average the same as with 0% code. At 30% code it's only modestly better than 4 epochs without code. Is that right?

@natfriedman I think @Muennighoff's paper showed this! arxiv.org/abs/2305.16264 > training LLMs on a mix of NL data and Python data at 10 different mixing rates and find that mixing in code is able to provide a 2× increase in effective tokens even when evaluating only NL tasks.

@Muennighoff Thanks for your scientifically rigorous talk, @Muennighoff!

link to the MTEB legal benchmark huggingface.co/spaces/mteb/le…

@Voyage_AI_ @Voyage_AI_ is dedicated to building better generalist, domain-specific, or fine-tuned embedding models and rerankers. Plz check out our recent products: voyage-code-2: x.com/tengyuma/statu… rerank-lite-1: x.com/Voyage_AI_/sta… 📄 API references: docs.voyageai.com/docs/introduct…

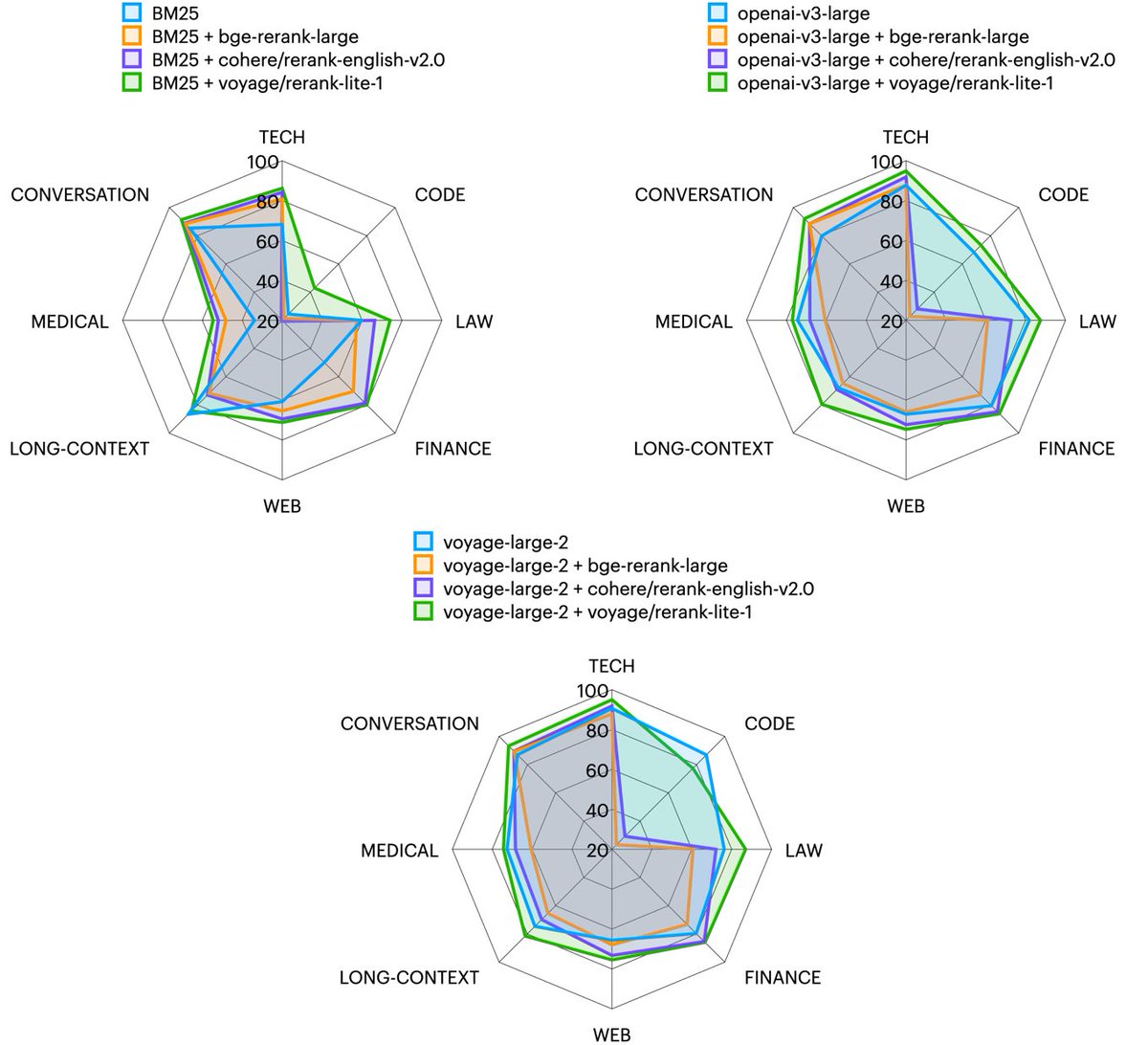

Rerankers refine the retrieval in RAG. 🆕📢 Excited to announce our first reranker, rerank-lite-1: state-of-the-art in retrieval accuracy on 27 datasets across domains (law, finance, tech, long docs, etc.), enhancing various search methods, vector-based or lexical. 🧵

@Voyage_AI_ Below: long-context retrieval results. More in blog post 📖: blog.voyageai.com/2024/04/15/dom… Please check it out! The first 50M tokens are on us. We’d also love to support academic retrieval research and benchmarking. Please write to us at [email protected] for more free tokens.

🆕📢 @Voyage_AI_'s new embedding model for legal and long-context retrieval and RAG: voyage-law-2! 1.🥇 # 1 on MTEB legal retrieval benchmark with a large margin 2.📜 Best quality for long-context (16K) 3.✨ Improved quality across domains 4.🛒 On AWS Marketplace #RAG #LLMs

A big thanks to existing contributors and an especially large thanks to the team of reviewers: @imenelker, @isaacchung1217, @Muennighoff, and @m_bernstorff 🎉

If you want to join this open project you can find good first issues to start with here: github.com/embeddings-ben…

- We now cover 247 languages, including code! 😎
- We include the longEmbed benchmark 📃
- A big thanks to PR and paper author Dawei Zhu
- We include multiple code retrieval tasks 👩💻

However, we are still missing many important languages like: Urdu, Greek, Icelandic, Punjabi...

It rocks indeed! And actually the development of one of the most comprehensive benchmarks to date is going great 🌐

I would like to thank every person that is contributing to MMTEB, this community rocks!🚀 Thank you everyone and keep going!

more details in this announcement! fixed data link: huggingface.co/datasets/allen…

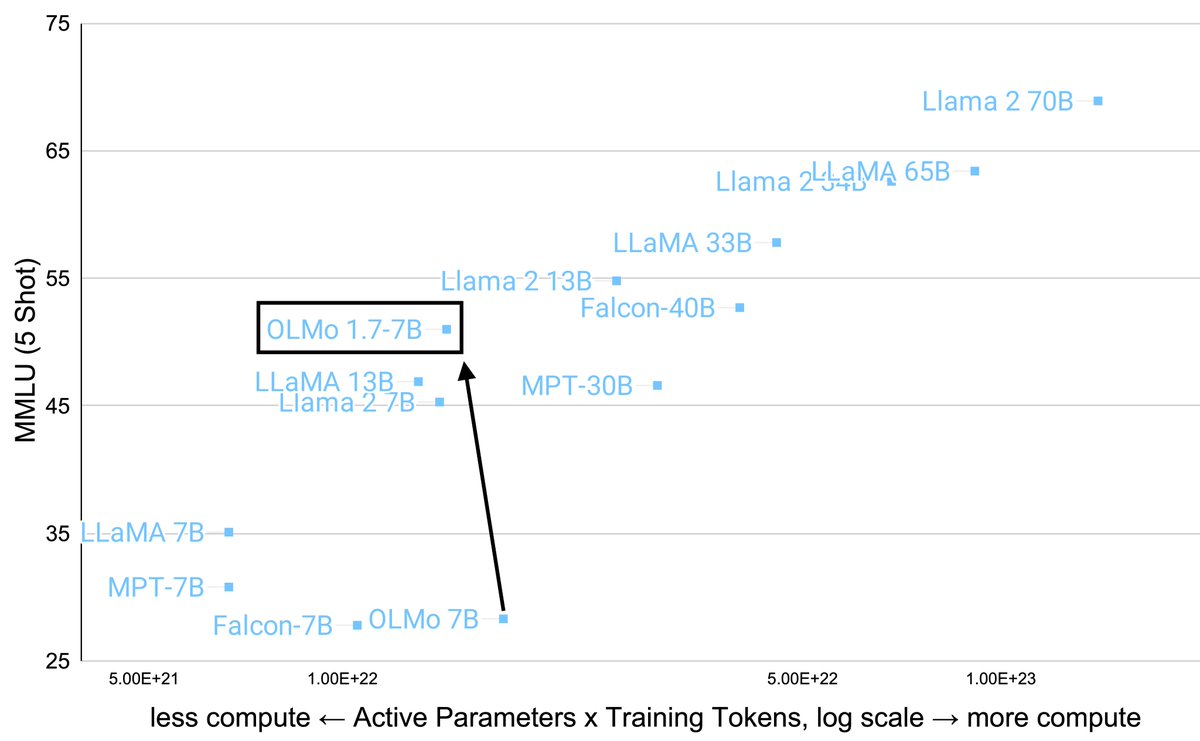

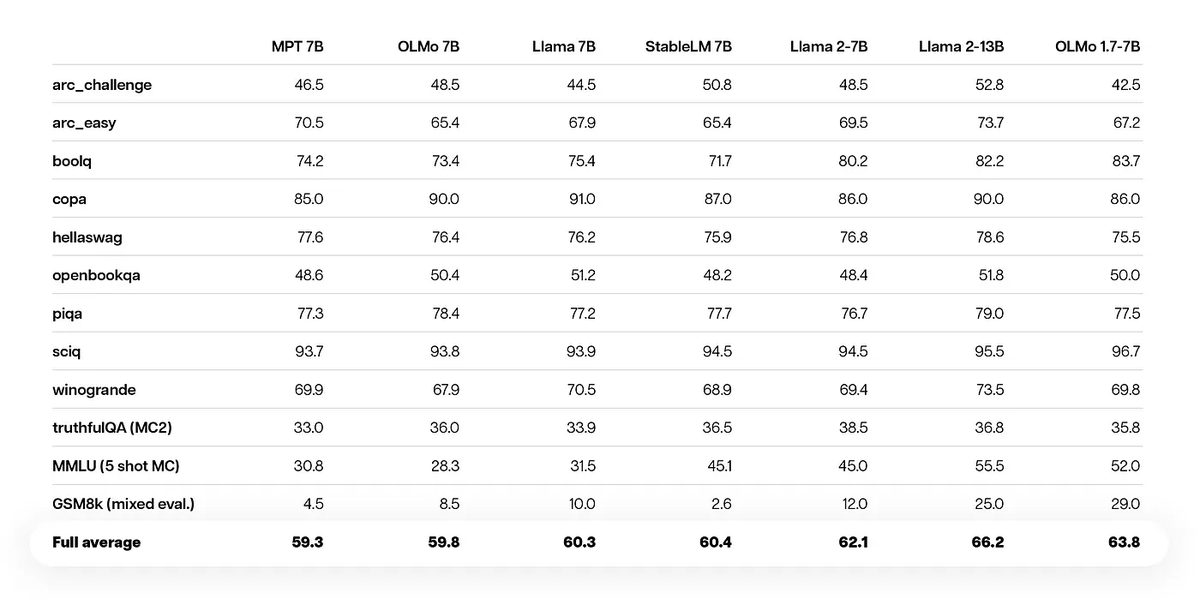

Announcing our latest addition to the OLMo family, OLMo 1.7!🎉Our team's efforts to improve data quality, training procedures and model architecture have led to a leap in performance. See how OLMo 1.7 stacks up against its peers and peek into the technical details on the blog:…

notable stuff: 🦉ton of perf boost from mixing instruct data at end (e.g., flan) 🐋anneal learning rate (Fig 9b in arxiv.org/abs/2403.08763) 🐞changing data mix boosts MMLU at some cost to other evals 🍇huggingface.co/allenai/dolma 🧀huggingface.co/allenai/OLMo-1…

Introducing our best OLMo yet. OLMo 1.7-7B outperforms LLaMa2-7B, approaching LLaMa2-13B at MMLU and GSM8k. High-quality data and staged training are key. I am so proud of our team making such significant improvement in a short period after our first release.

@natolambert @vikhyatk i think @mechanicaldirk and @Muennighoff are currently cookin something up 👀