Tim Dettmers @Tim_Dettmers

PhD Student at @UW. I blog about deep learning and PhD life at https://t.co/Y78KDJJFE7.
timdettmers.com/about
Seattle, WA
Joined October 2012

3K Tweets 28K Followers 818 Following 3K Likes

Long-context Llama 3 finetuning is here! 🦙 Unsloth supports 48K context lengths for Llama-3 70b on an 80GB GPU, 6x longer than HF+FA2. QLoRA finetuning Llama-3 70b is 1.8x faster and uses 68% less VRAM, and Llama-3 8b is 2x faster and fits in an 8GB GPU! Blog: unsloth.ai/blog/llama3

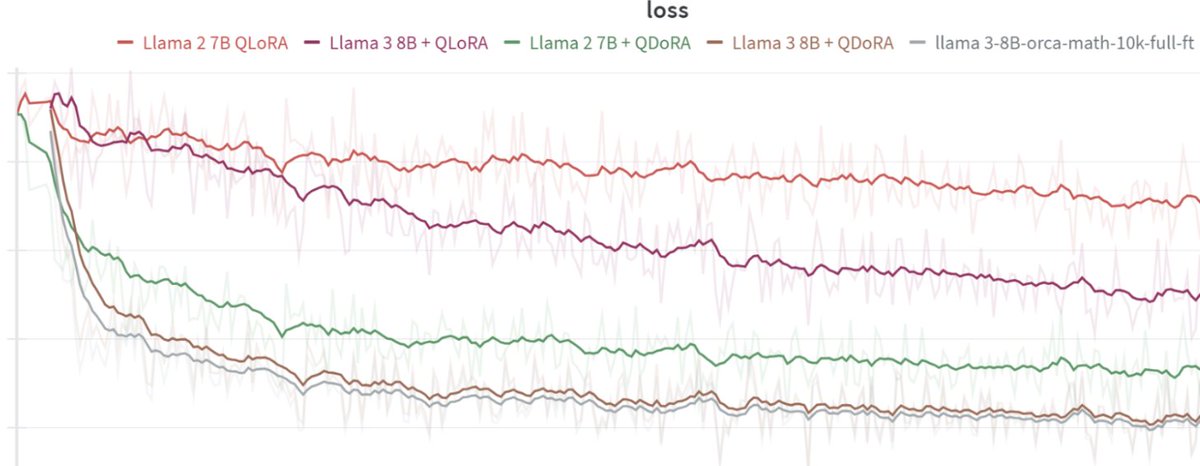

Today at @answerdotai we've got something new for you: FSDP/QDoRA. We've tested it with @AIatMeta Llama3 and the results blow away anything we've seen before. I believe that this combination is likely to create better task-specific models than anything else at any cost. 🧵

Next level: QLoRA fine-tuning 4-bit Llama 3 8B on iPhone 15 Pro. Incoming (Q)LoRA MLX Swift example by David Koski: github.com/ml-explore/mlx… works with lots of models (Mistral, Gemma, Phi-2, etc.)

Easily fine-tune @AIatMeta Llama 3 70B! 🦙 I am excited to share a new guide on how to fine-tune Llama 3 70B with @PyTorch FSDP, Q-Lora, and Flash Attention 2 (SDPA) using @huggingface, built for consumer-size GPUs (4x 24GB). 🚀 Blog: philschmid.de/fsdp-qlora-lla… The blog covers: 👨‍💻…
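
Here is a back-of-the-envelope sketch of why 4-bit quantization makes this hardware budget plausible. It assumes exactly 70e9 parameters, decimal gigabytes, and weights sharded evenly by FSDP. It ignores quantization constants, LoRA adapters, activations, and optimizer state, so real memory use is higher.

```python
# Weight-only memory estimate for a 70B-parameter model.
# Assumptions: exactly 70e9 parameters, decimal GB, and no overhead for
# quantization constants, LoRA adapters, activations, or optimizer state.

PARAMS = 70e9
BYTES_PER_GB = 1e9


def weight_gb(num_params: float, bits_per_param: int) -> float:
    """Memory needed to store the weights alone, in GB."""
    return num_params * bits_per_param / 8 / BYTES_PER_GB


def per_gpu_gb(total_gb: float, num_gpus: int) -> float:
    """Share of the weights per GPU if they are sharded evenly."""
    return total_gb / num_gpus


bf16 = weight_gb(PARAMS, 16)     # 140 GB: more than 4x 24GB = 96 GB in total
four_bit = weight_gb(PARAMS, 4)  # 35 GB
print(f"16-bit weights: {bf16:.1f} GB, 4-bit weights: {four_bit:.1f} GB")
print(f"4-bit weights per GPU across 4 GPUs: {per_gpu_gb(four_bit, 4):.2f} GB")
```

Even before any training state, the 16-bit weights do not fit in 96 GB of combined VRAM. The 4-bit copy leaves roughly 15 GB per card for everything else under these assumptions.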

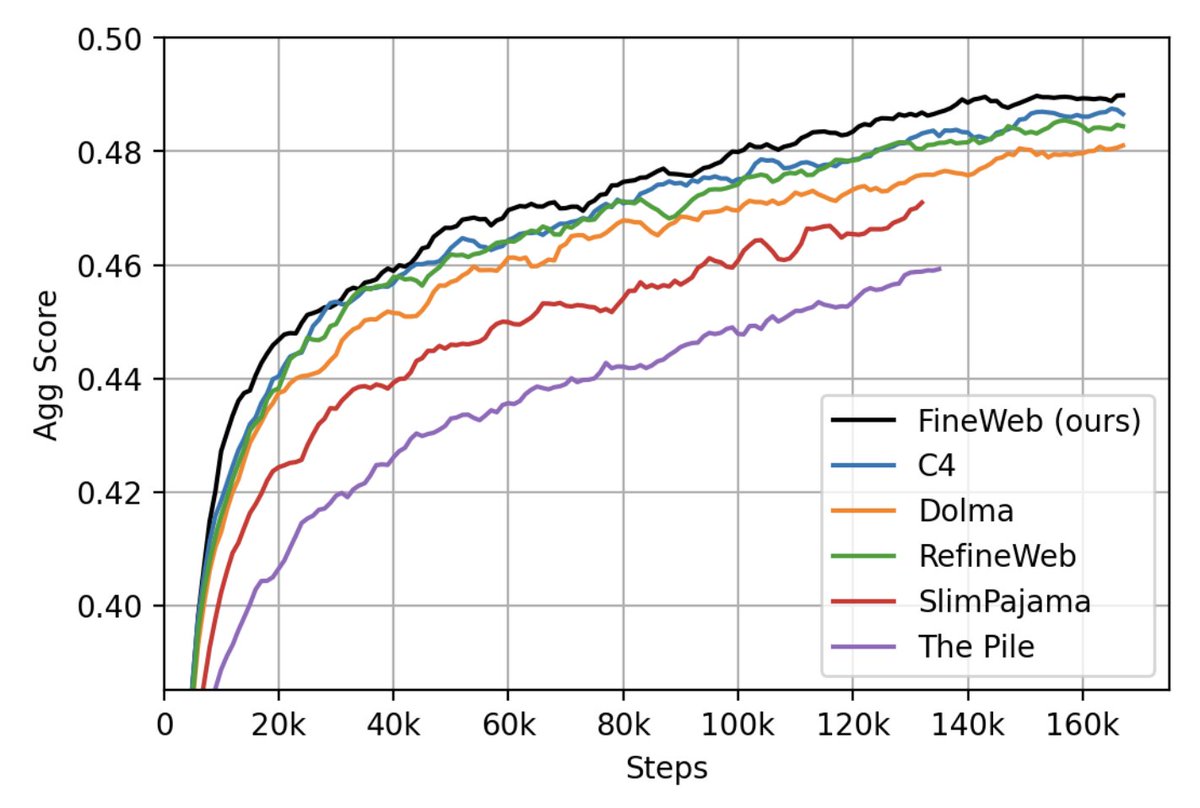

We have just released 🍷 FineWeb: 15 trillion tokens of high quality web data. We filtered and deduplicated all CommonCrawl between 2013 and 2024. Models trained on FineWeb outperform RefinedWeb, C4, DolmaV1.6, The Pile and SlimPajama!

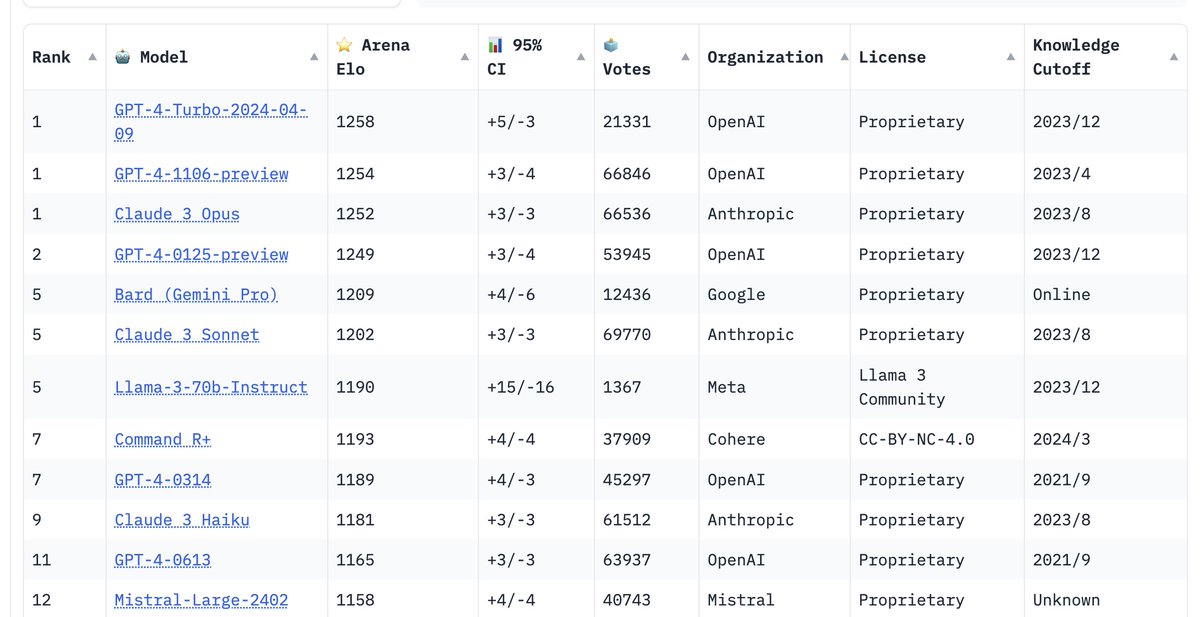

Very early LMSys Arena results peg llama3-70B at 5th place (the variance is still pretty high, so it can jump up or down a bit). This is so exciting. Can't wait to see how the 405B fares once it is released. chat.lmsys.org/?leaderboard

Yes, both the 8B and 70B are trained way more than is Chinchilla optimal - but we can eat the training cost to save you inference cost! One of the most interesting things to me was how quickly the 8B was improving even at 15T tokens.
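
The sketch below puts "way more than Chinchilla optimal" into rough numbers. It uses the commonly quoted Chinchilla rule of thumb of about 20 training tokens per parameter. It also assumes both models were trained on about 15T tokens, although the tweet states that figure only for the 8B.

```python
# Rough over-training factor relative to the Chinchilla rule of thumb.
# Assumptions: ~20 tokens per parameter is "compute-optimal", and both
# models were trained on ~15T tokens.

TOKENS_PER_PARAM = 20
TRAIN_TOKENS = 15e12


def chinchilla_tokens(num_params: float) -> float:
    """Approximate compute-optimal token budget for a given model size."""
    return TOKENS_PER_PARAM * num_params


for name, params in [("8B", 8e9), ("70B", 70e9)]:
    optimal = chinchilla_tokens(params)
    factor = TRAIN_TOKENS / optimal
    print(f"{name}: optimal ~{optimal / 1e9:.0f}B tokens, trained ~{factor:.0f}x past it")
```

Under these assumptions the 8B sees roughly two orders of magnitude more data than the rule of thumb suggests. That gap is the extra training cost being traded for a smaller, cheaper-to-serve model.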

Yes, both the 8B and 70B are trained way more than is Chinchilla optimal - but we can eat the training cost to save you inference cost! One of the most interesting things to me was how quickly the 8B was improving even at 15T tokens.

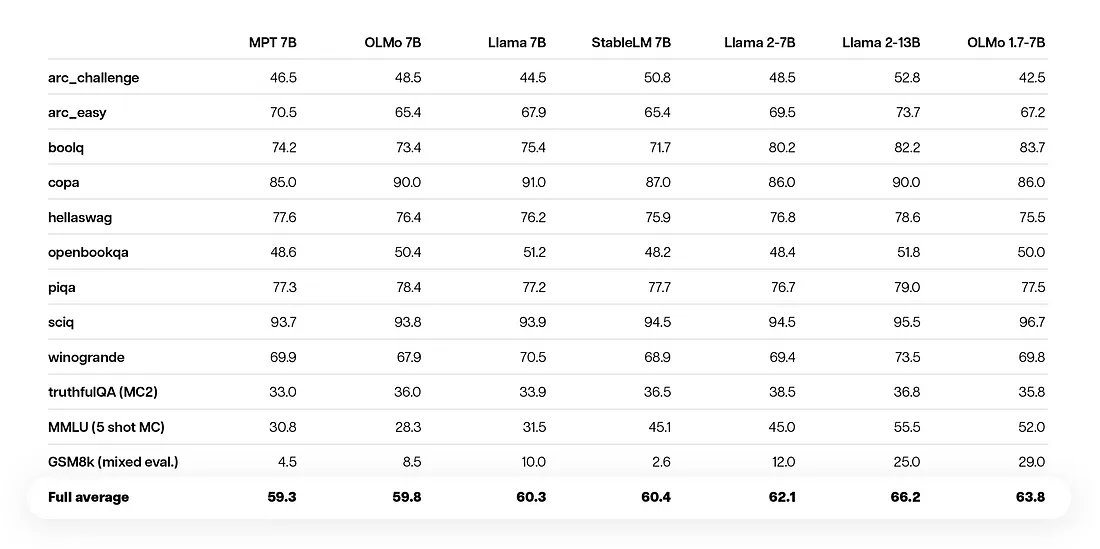

What does it take to get a good MMLU score? Turns out: decent data, instructions in pretraining, fuzzy dedup, and quality filtering. Just dropped OLMo 1.7-7b… nice perf lift over 1.0! Blog: blog.allenai.org/olmo-1-7-7b-a-… Model: huggingface.co/allenai/OLMo-1… Data: huggingface.co/allenai/dolma
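
Fuzzy deduplication drops documents whose content overlaps heavily with a document already kept, even when the two are not byte-identical. Below is a minimal sketch that assumes character 5-gram shingles and a hypothetical Jaccard threshold of 0.8. It is not the OLMo/Dolma pipeline. At corpus scale, pipelines usually approximate Jaccard similarity with MinHash or a similar technique rather than comparing every pair as this toy does.

```python
def shingles(text: str, k: int = 5) -> set[str]:
    """Set of overlapping character k-grams after case/whitespace normalization."""
    norm = " ".join(text.lower().split())
    if len(norm) < k:
        return {norm}
    return {norm[i : i + k] for i in range(len(norm) - k + 1)}


def jaccard(a: set[str], b: set[str]) -> float:
    """Jaccard similarity |a & b| / |a | b| of two shingle sets."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def fuzzy_dedup(docs: list[str], threshold: float = 0.8) -> list[str]:
    """Keep each doc unless it is a near-duplicate of one already kept (O(n^2))."""
    kept: list[str] = []
    kept_shingles: list[set[str]] = []
    for doc in docs:
        s = shingles(doc)
        if all(jaccard(s, other) < threshold for other in kept_shingles):
            kept.append(doc)
            kept_shingles.append(s)
    return kept


docs = [
    "The quick brown fox jumps over the lazy dog.",
    "The quick brown fox jumps over the lazy dog!",  # near-duplicate of the first
    "An entirely different sentence about language models.",
]
print(fuzzy_dedup(docs))
```
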

We just released Mixtral-8x22B-v0.1 and Mixtral-8x22B-Instruct-v0.1:
- Free to use under Apache 2.0 license
- Outperforms all open models
- Native function calling
- Masters English, French, Italian, German and Spanish
- Seq_len = 64K
mistral.ai/news/mixtral-8…

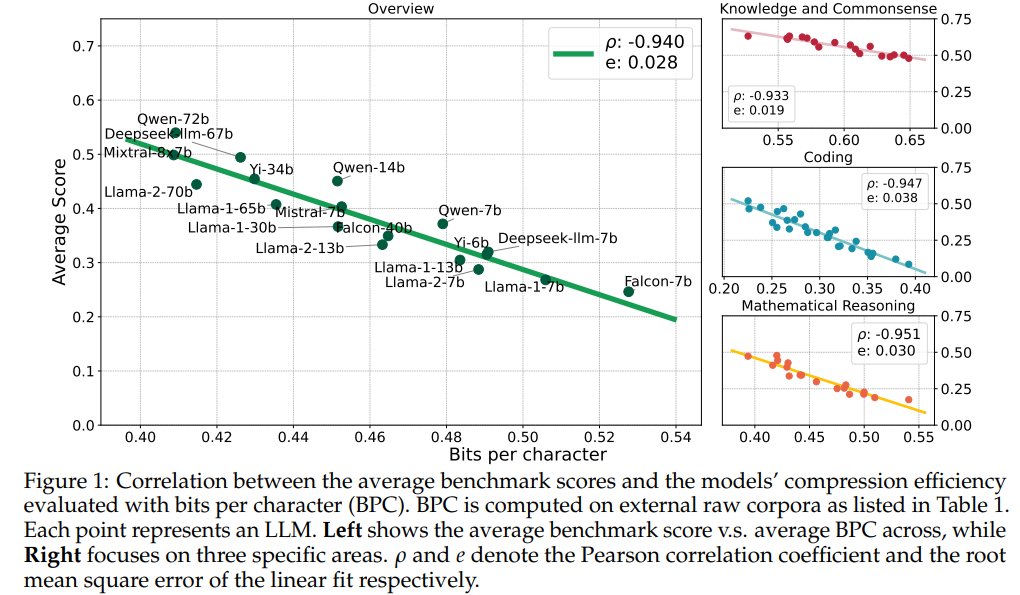

Compression Represents Intelligence Linearly: LLMs' intelligence, as reflected by average benchmark scores, correlates almost linearly with their ability to compress external text corpora. Repo: github.com/hkust-nlp/llm-… Abs: arxiv.org/abs/2404.09937
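
Compression in this line of work is usually measured in bits per character (BPC). BPC is the model's total negative log-likelihood of a text in bits, divided by the text's length in characters, and lower means better compression. The sketch below assumes that metric and takes per-token probabilities as given. The probabilities in the example are hypothetical, not real model outputs.

```python
import math


def bits_per_character(token_probs: list[float], text: str) -> float:
    """Total code length in bits (sum of -log2 p over tokens) per character of text.

    `token_probs` are the probabilities a model assigned to each actual next
    token of `text` (hypothetical inputs here).
    """
    total_bits = sum(-math.log2(p) for p in token_probs)
    return total_bits / len(text)


text = "abcd"
probs = [0.5, 0.25]  # hypothetical: 2 tokens, costing 1 bit + 2 bits = 3 bits
print(bits_per_character(probs, text))  # 0.75
```

Normalizing by characters rather than tokens lets models with different tokenizers be compared on the same corpus.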

🚀 Introducing Pile-T5! 🔗 We (EleutherAI) are thrilled to open-source our latest T5 model trained on 2T tokens from the Pile using the Llama tokenizer. ✨ Featuring intermediate checkpoints and a significant boost in benchmark performance. Work done by @lintangsutawika, me…

Grok is going multimodal! It’s incredible to see how fast a small, focused team can move. Kudos to the amazing team @xai that made this possible x.ai/blog/grok-1.5v

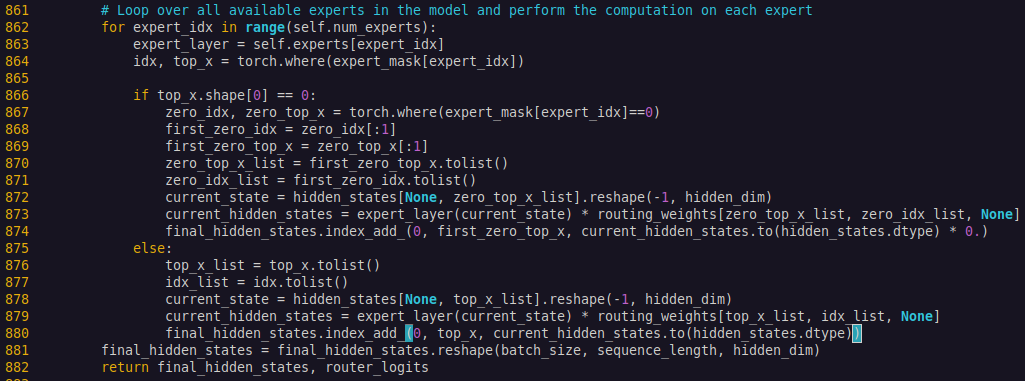

Finally got DeepSpeed ZeRO-3 working with QLoRA for mixtral-8x22b.
1. Install the latest DeepSpeed: `pip install git+github.com/microsoft/deep…`
2. Update modeling_mixtral.py to include all experts in the forward pass
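
Step 2 likely matters because of how ZeRO-3 handles parameters. It shards every parameter across ranks and gathers each module's weights collectively when that module runs. If an MoE layer calls only the experts that received tokens, different ranks can run different experts, and the collective gathers stop lining up, which often causes a hang. The framework-free sketch below shows the "call every expert" dispatch pattern. It uses top-1 routing for brevity, whereas Mixtral routes each token to 2 experts. `moe_forward`, `make_expert`, and the toy routing are hypothetical and are not the actual code in modeling_mixtral.py.

```python
from typing import Callable

Expert = Callable[[list[float]], list[float]]


def moe_forward(tokens: list[float], assignments: list[int], experts: list[Expert]) -> list[float]:
    """Route each token to its assigned expert, calling EVERY expert.

    An expert with no tokens is still called on an empty batch, so every
    rank executes the same sequence of expert calls.
    """
    out = [0.0] * len(tokens)
    for idx, expert in enumerate(experts):
        positions = [i for i, a in enumerate(assignments) if a == idx]
        # Deliberately no "if not positions: continue" shortcut here.
        results = expert([tokens[i] for i in positions])
        for pos, value in zip(positions, results):
            out[pos] = value
    return out


calls: list[int] = []


def make_expert(idx: int) -> Expert:
    """Hypothetical toy expert: records the call and scales its inputs."""
    def expert(batch: list[float]) -> list[float]:
        calls.append(idx)
        return [x * (idx + 1) for x in batch]
    return expert


out = moe_forward([1.0, 2.0, 3.0], [0, 0, 2], [make_expert(i) for i in range(3)])
print(out, calls)  # expert 1 receives no tokens but is still called
```
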

Meta announces 2nd-gen inference chip MTIAv2.
* 708TF/s Int8 / 353TF/s BF16
* 256MB SRAM, 128GB memory
* 90W TDP. 24 chips per node, 3 nodes per rack.
* standard PyTorch stack (Dynamo, Inductor, Triton) for flexibility
Fabbed on TSMC's 5nm process, it's fully programmable via the…
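
Multiplying out the per-chip figures above gives rough per-rack totals. This sketch assumes the stated 24-chips-per-node, 3-nodes-per-rack layout and uses peak rather than sustained throughput. The power figure counts the chips only.

```python
CHIPS_PER_NODE = 24
NODES_PER_RACK = 3
INT8_TFLOPS = 708  # peak, per chip
BF16_TFLOPS = 353  # peak, per chip
TDP_WATTS = 90     # per chip

chips_per_rack = CHIPS_PER_NODE * NODES_PER_RACK
rack_int8_pflops = chips_per_rack * INT8_TFLOPS / 1000
rack_bf16_pflops = chips_per_rack * BF16_TFLOPS / 1000
# Chip TDP only; excludes hosts, networking, and cooling.
rack_chip_watts = chips_per_rack * TDP_WATTS
print(chips_per_rack, rack_int8_pflops, rack_bf16_pflops, rack_chip_watts)
```
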

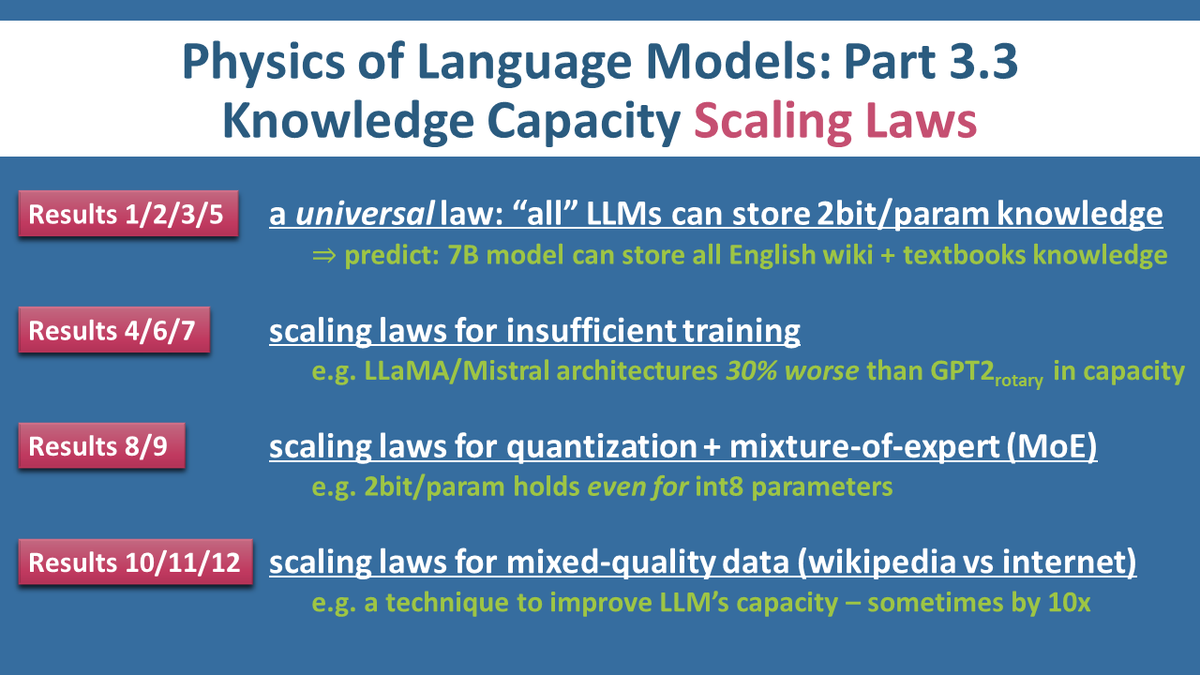

Our 12 scaling laws (for LLM knowledge capacity) are out: arxiv.org/abs/2404.05405. Took me 4mos to submit 50,000 jobs; took Meta 1mo for legal review; FAIR sponsored 4,200,000 GPU hrs. Hope this is a new direction to study scaling laws + help practitioners make informed decisions

Transformers with dynamic depth were well explored in the pre-LLM era. Glad to see the MoD work making progress on this front with a hardware-aware, efficient implementation! A thread of prior work on the core ideas, in case you're looking for some alpha:

Accelerating MoE model inference with Locality-Aware Kernel Design 🔥 Check out several different work decomposition and scheduling algorithms for MoE GEMMs, and why, at the hardware level, column-major scheduling produces the highest speedup. hubs.la/Q02rRKh00

SWE-agent is blazing fast, and when it works it feels like magic! In this short demo I show how it solved a real bug in the neural network training code in scikit-learn. I also explain the process behind our agent-computer interface design choices.

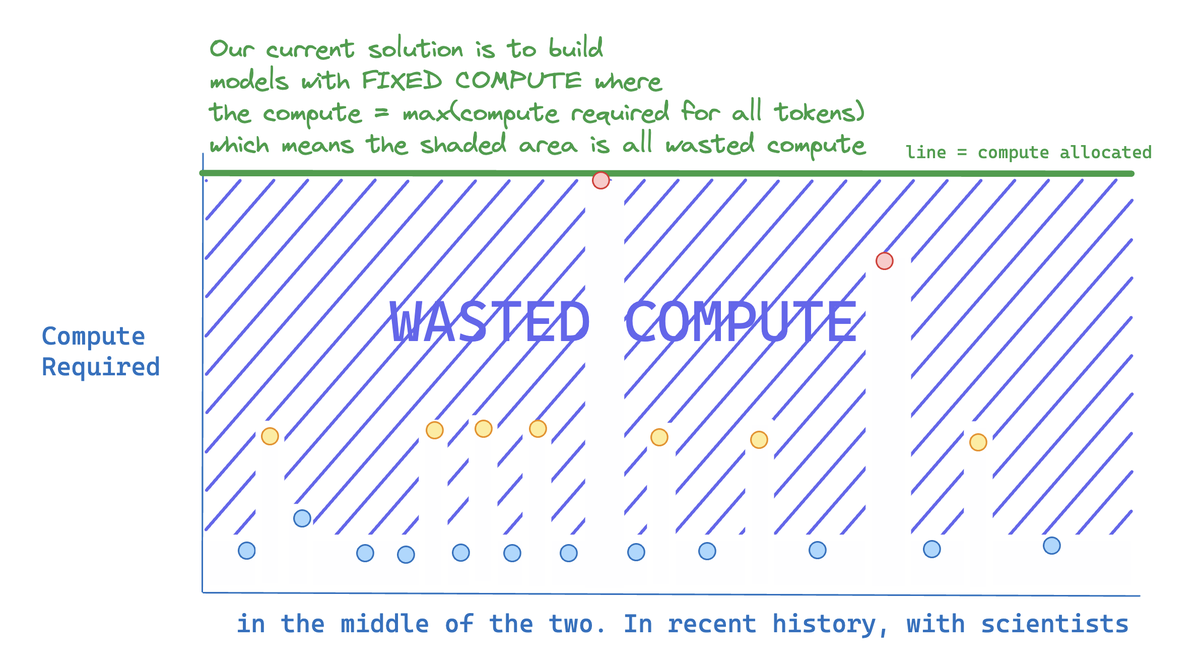

Why Google DeepMind's Mixture-of-Depths paper, and more generally dynamic compute methods, matter: most of the compute is WASTED because not all tokens are equally hard to predict.
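
The core routing idea fits in a few lines. A router scores every token, and only the top-k tokens (a fixed capacity) pass through the expensive block. The remaining tokens skip it via the residual connection, so compute scales with the capacity rather than the sequence length. This is a framework-free toy under stated assumptions: scalar "tokens", a hypothetical `block` function, and the block output scaled by the router score. It is not DeepMind's implementation.

```python
from typing import Callable


def mixture_of_depths_layer(
    xs: list[float],
    router_scores: list[float],
    capacity: int,
    block: Callable[[float], float],
) -> list[float]:
    """Apply `block` only to the `capacity` highest-scoring tokens.

    Selected tokens become x + score * block(x); all others pass through
    unchanged (residual only), so `block` runs exactly `capacity` times.
    """
    top = sorted(range(len(xs)), key=lambda i: router_scores[i], reverse=True)[:capacity]
    selected = set(top)
    return [
        x + router_scores[i] * block(x) if i in selected else x
        for i, x in enumerate(xs)
    ]


out = mixture_of_depths_layer([1.0, 2.0, 3.0, 4.0], [0.1, 0.9, 0.2, 0.8], 2, lambda x: 10 * x)
print(out)  # tokens 1 and 3 are processed; tokens 0 and 2 skip the block
```
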

This is excellent work — a big step forward in quantization! It enables full 4-bit matmuls, which can speed up large batch inference by a lot. Anyone deploying LLMs at scale will soon use this or similar techniques.
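
"Full 4-bit matmuls" means both operands are quantized to 4-bit integers, the multiply-accumulate runs entirely in integer arithmetic, and a single floating-point rescale is applied at the end. The minimal sketch below assumes symmetric per-vector quantization to the signed range [-7, 7]. The tweet does not name the method, and real systems typically add further machinery, such as outlier handling and finer-grained scales, that this toy omits.

```python
def quantize_int4(values: list[float]) -> tuple[list[int], float]:
    """Symmetric quantization to signed 4-bit integers in [-7, 7]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    q = [max(-7, min(7, round(v / scale))) for v in values]
    return q, scale


def int4_dot(a: list[float], b: list[float]) -> float:
    """Dot product with integer multiply-accumulate and one float rescale."""
    qa, sa = quantize_int4(a)
    qb, sb = quantize_int4(b)
    acc = sum(x * y for x, y in zip(qa, qb))  # pure integer arithmetic
    return acc * sa * sb


a = [0.5, -1.0, 0.25, 0.75]
b = [0.25, -0.75, 1.0, 0.5]
exact = sum(x * y for x, y in zip(a, b))
approx = int4_dot(a, b)
print(exact, approx)
```

The gap between `exact` and `approx` is the rounding error of a 15-level grid. Closing that gap at scale without giving up the integer kernels is where the real engineering work lies.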

Soumith Chintala @soumithchintala

186K Followers 877 Following Cofounded and lead @PyTorch at Meta. Also dabble in robotics at NYU. AI is delicious when it is accessible and open-source.

Jeremy Howard @jeremyphoward

222K Followers 5K Following 🇦🇺 Co-founder: @AnswerDotAI & @FastDotAI ; Hon Professor: @UQSchoolITEE ; Digital Fellow: @Stanford

Delip Rao e/σ @deliprao

46K Followers 5K Following Busy inventing the shipwreck. @Penn. Past: @johnshopkins, @UCSC, @Amazon, @Twitter ||Art: #NLProc, Vision, Speech, #DeepLearning || Life: 道元, improv, running 🌈

Horace He @cHHillee

23K Followers 449 Following Working at the intersection of ML and Systems @ PyTorch "My learning style is Horace twitter threads" - @typedfemale

Eric Jang @ericjang11

69K Followers 3K Following physical AGI at 1X. Author of "AI is Good for You" https://t.co/eFg4WXhg0p

Percy Liang @percyliang

49K Followers 408 Following Associate Professor in computer science @Stanford @StanfordHAI @StanfordCRFM @StanfordAILab @stanfordnlp | cofounder @togethercompute | Pianist

Sasha Rush @srush_nlp

52K Followers 464 Following Professor, Programmer in NYC. Cornell Tech, Hugging Face 🤗 https://t.co/cZl0wTfqGz

Rosanne Liu @savvyRL

33K Followers 966 Following Cofounded & running @ml_collective. Host of Deep Learning Classics & Trends. Research at Google DeepMind. DEI/DIA Chair of ICLR & NeurIPS. Writing https://t.co/IbycyGfnDR

Kyunghyun Cho @kchonyc

61K Followers 2K Following a combination of a mediocre scientist, a mediocre manager, a mediocre advisor & a mediocre PC at @nyuniversity (@CILVRatNYU) & @genentech (@PrescientDesign).

Ross Wightman @wightmanr

18K Followers 1K Following Computer Vision @ 🤗. Ex head of Software, Firmware Engineering at a Canadian 🦄. Currently building ML, AI systems or investing in startups that do it better.

clem 🤗 @ClementDelangue

91K Followers 5K Following Co-founder & CEO @HuggingFace 🤗, the open and collaborative platform for AI builders

Akari Asai @AkariAsai

11K Followers 650 Following Ph.D. student @uwcse & @uwnlp. NLP. IBM Ph.D. fellow (2022-2023). Meta student researcher (2023-) . ☕️ 🐕 🏃♀️🧗♀️🍳

Tanishq Mathew Abraham @iScienceLuvr

54K Followers 1K Following PhD at 19 | Founder and CEO at @MedARC_AI | Research Director at @StabilityAI | @kaggle Notebooks GM | Biomed. engineer @ 14 | TEDx talk➡https://t.co/xPxwKTq6Qb

Radek Osmulski @radekosmulski

25K Followers 554 Following Resources to take your Machine Learning skills to the next level 🧪 Senior Data Scientist, RecSys @NVIDIAAI 🏫 @fastdotai trained DL Eng 📝 https://t.co/By87iXx5Pu

Julien Chaumond @julien_c

47K Followers 1K Following Co-founder and CTO at @huggingface 🤗. ML/AI for everyone, building products to propel communities fwd. @Stanford + @Polytechnique

Miles Brundage @Miles_Brundage

43K Followers 10K Following Policy research at @openai. I mostly tweet about AI, animals, and sci-fi. He/him. Views my own.

Thomas Wolf @Thom_Wolf

68K Followers 4K Following Co-founder and CSO @HuggingFace - open-source and open-science

Sam Bowman @sleepinyourhat

35K Followers 3K Following AI alignment + LLMs at NYU & Anthropic. Views not employers'. No relation to @s8mb. I think you should join @givingwhatwecan.

2wl @2wlearning

228 Followers 206 Following Documenting my progress learning ML every day. 2 more weeks

Jonathan Koh @Jonatha20094223

3 Followers 4K Following

Crypto Magician @Cybernetic_77

309 Followers 994 Following Fortis Fortuna Adiuvat Ad Astra Per Aspera Deus Ex Machina

Marcelo Tomaz @MarceloTomaz

28 Followers 51 Following

Guillermo @MeMoO_7

124 Followers 1K Following I'll write phrases I like, or share silly stuff, and even share a bit about technology and innovation.

Sophosympatheia @sophosympatheia

8 Followers 13 Following

thinkpoet @thinkpoet

38 Followers 450 Following

adhernem @adhernem12

156 Followers 926 Following

Dan @DanielCardena

149 Followers 135 Following A.I. Learner / A.I. Consultant. Experience working on Virtual Assistants. Ex: Google

md yesh @YeshMd37030

9 Followers 16 Following

Harshit @hokageharshit

1 Followers 80 Following

Hamzé @Hamzeml

509 Followers 5K Following A Humanist Technologist, AI optimist, CTO @gowelcomeplace, #inclusive_economy #AI #machinelearning #tech4good #edtech

Electronicsseeker @libertarian108

7 Followers 0 Following

Mustapha Abdullahi @Mustious7

714 Followers 842 Following Random obsessions. Interested in large-scale AI systems 🤖.

Michael Alig @aligmichael

57 Followers 918 Following

Mahaoo @mahaoo_ASI

9 Followers 154 Following unhinged socially unacceptable takes about humanity and ASI

Álvaro @hilvanado

5 Followers 80 Following

Harish K Srinivasan @HarishKSrinivas

18 Followers 312 Following Computational Biology at UT Austin and Chess player

Lannister @Lannister998

5 Followers 99 Following

Arhant Chaterjee @ArhantC69420

106 Followers 832 Following

ElonMusk(parody) @ElonMuskCEO8012

11 Followers 1K Following

Phillip Lindsay @EastLAPinche

60 Followers 386 Following

uhsayer @uhsayer

80 Followers 1K Following

Han @hhua_

3K Followers 4K Following Invest @GVteam during 🌞 and hacker at 🌒. Investing in AI, infra, deep tech, fintech/crypto ⚡️🤖🧠. Views are my own.

nikolei776 @nikolei776

24 Followers 46 Following A passionate clash of clans esports critic with a Journalistic background designed to hold all teams accountable under the Motto say it like it is.

... @dercrazypug

60 Followers 145 Following

Jabulani Chibaya @Jabulanichibaya

1K Followers 5K Following Snr. Software Engineer I Apache Spark, Pulsar & Kafka I #DataScience I Big Data Engineer I Emerging Technologies Consultant I DataOps I #BI I #OSS I @mises

Edmon Sahakayan @EdmonSahakayan

7 Followers 116 Following

Arno Lamb @arno23o

69 Followers 402 Following

Dakshi Agrawal @a120fgts

0 Followers 52 Following

journeyman coder @xgenaidev

6 Followers 55 Following organic intelligence(?) all tweets are generated using electric circuits

HarmlessReplyGuy @HarmlessReply

5 Followers 43 Following mostly harmless dog burner account you know, you can live and not tweet

(((ل()(ل() 'yoav))).. @yoavgo

46K Followers 2K Following

Lucas Beyer (bl16) @giffmana

56K Followers 444 Following Researcher (Google DeepMind/Brain in Zürich, ex-RWTH Aachen), Gamer, Hacker, Belgian. Mostly gave up trying Mastodon.

PyTorch @PyTorch

379K Followers 77 Following Tensors and neural networks in Python with strong hardware acceleration. PyTorch is an open source project at the Linux Foundation. #PyTorchFoundation

Anthropic @AnthropicAI

262K Followers 26 Following We're an AI safety and research company that builds reliable, interpretable, and steerable AI systems. Talk to our AI assistant Claude at https://t.co/aRbQ97uk4d.

Hugging Face @huggingface

343K Followers 189 Following The AI community building the future. https://t.co/VkRPD0VKaZ #BlackLivesMatter #stopasianhate

Agatha Miller @agathamillerr

94K Followers 180 Following 🇨🇴 Latina | Lovely barbie from Religious school| posting things at the top of my head

Dan Roy @roydanroy

45K Followers 2K Following ML / AI researcher, emphasis on theory. Research Director and Canada CIFAR AI Chair, @VectorInst Professor, @UofT (Statistics/CS)

Zach Mueller @TheZachMueller

10K Followers 391 Following 🤗 Technical Lead for the Accelerate Project | Passionate about Open Source | Nerd who enjoys touching the grass | #ADHD | He/Him

AI Safety Institute @AISafetyInst

530 Followers 29 Following We’re building a team of world leading talent to tackle some of the biggest challenges in AI safety - come and join us.

Sholto Douglas @_sholtodouglas

15K Followers 857 Following Scaling Gemini @Deepmind - working towards intelligence too cheap to meter

Sergey Levine @svlevine

79K Followers 122 Following Associate Professor at UC Berkeley Co-founder, Physical Intelligence

Mengye Ren @mengyer

4K Followers 699 Following Assistant Professor of Comp Sci. and Data Sci. at NYU. Machine Learning, Computer Vision, Human-like AI.

Mike Gunter @MikeGunter_

645 Followers 850 Following CTO and founder, @MatXComputing, designing hardware to make LLMs an order of magnitude smarter.

MatX @MatXComputing

856 Followers 30 Following MatX designs hardware tailored for the world’s best AI models: We dedicate every transistor to maximizing performance for large models. Join us: https://t.co/E3XexKHUSM

no context memes @weirddalle

1.7M Followers 460 Following memes and weird ai generations | dm for promo | @hardaipics | follow my IG

Daniel Han @danielhanchen

7K Followers 935 Following Building @UnslothAI. Finetune LLMs 30x faster https://t.co/aRyAAgKOR7. Prev ML at NVIDIA. Hyperlearn used by NASA. I like maths, making code go fast

Unsloth AI @UnslothAI

3K Followers 250 Following Making AI & LLMs more accessible + faster for everyone! 🦥 Github: https://t.co/2kXqhhvLsb Discord: https://t.co/1Gmc1SDElj

Rose @rose_e_wang

2K Followers 238 Following NLP & Education @stanfordnlp 🌲 Prev: 2020 MIT 🦫, Google Brain 🧠, Google Brain Robotics 🤖

Ate-a-Pi @8teAPi

39K Followers 2K Following self aware neuron; historian from 2130; epistemic polluter; 95 yr old man;

Yuandong Tian @tydsh

16K Followers 801 Following Research Scientist and Senior Manager in Meta AI (FAIR). AI-guided Optimization and Representation Learning. Novelist in spare time. PhD in @CMU_Robotics.

Jiawei Zhao @jiawzhao

317 Followers 174 Following PhD Candidate @Caltech, Fmr Research Intern @nvidia

Prof. Anima Anandkumar @AnimaAnandkumar

25K Followers 2K Following Bren Professor @caltech, Fmr Sr Director of #AI research @nvidia, Fmr Principal Scientist @awscloud, AI+Science, PDE, Neural operators. Views my own.

Yaroslav Bulatov @yaroslavvb

6K Followers 699 Following ex-Google Brain, OpenAI, Meta Scholar: https://t.co/iVycFw5dSX New Blog: https://t.co/SLix8HqVeY Old Blog: https://t.co/Ur3GWKoOzy

Aarni @akx

605 Followers 1K Following Professional geek & general enthusiast. Cofounder/CTO at @valohaiai, code & magic at @desukun, etc.

buyhighsellhigher @ebitdaddy90

22K Followers 39 Following Ex p72/Citadel/GS. schooled in Boston. (she/her). Will adjust on ur ebitda until u free cash flow. PM (Portfolio Maestro), SS (Stock Shokunin), CFA (lvl9000).

Conference on Language Modeling @COLM_conf

2K Followers 6 Following https://t.co/GhGCMEoa4A Abstract submission: March 22, 2024

main @main_horse

8K Followers 474 Following AGI Believer. Haven't applied @OpenAI. Likes are not always endorsement.

Jared Roesch @roeschinc

1K Followers 812 Following CTO & Co-founder @octoml. PhD @uwcse. Building creative & collective AI. Writing about AI @ https://t.co/toFSukgrzM

Le Vieux François @FrancoisOuell15

5K Followers 961 Following Autistic, physicist, entrepreneur from Quebec living in Chengdu, working in Hangzhou. I sometimes use sarcasm, but mostly elegant brilliance. 😐

Igor Babuschkin @ibab

44K Followers 684 Following Maybe the real AGI was the friends we made along the way. @xAI

Michael Goin @mgoin_

253 Followers 150 Following Engineering Lead @neuralmagic | Compressing LLMs and making fast software | https://t.co/EGV997HDwD

Neural Magic @neuralmagic

5K Followers 2K Following Deploy the fastest ML on CPUs and GPUs using only software. GitHub: https://t.co/99a5S2627M #sparsity #opensource

Stone Age Herbalist @Paracelsus1092

90K Followers 3K Following Pygmy Futurism | Beserker Yoga Revival | Narcoanthropology & Yamnilote Studies | Nganga R&D | Mandeville Explorer Archive | 🇷🇼

Been Kim @_beenkim

23K Followers 453 Following Research Scientist at Google DeepMind, PhD from MIT. Make machines empower people.

Baithoven 🎣 @SuspendedRobot

6K Followers 995 Following Training to be a master baiter. Lunarian propaganda account. 50 cent/post.

Chan Young Park @chan_young_park

441 Followers 213 Following PhD student @LTIatCMU @uwcse, working on natural language processing and computational social science.

Brett Adcock @adcock_brett

171K Followers 14 Following Founder @Figure_robot (AI Robotics) & Archer Aviation (NYSE: ACHR)

Volodymyr Kuleshov @volokuleshov

8K Followers 997 Following AI Researcher. Prof @Cornell & @Cornell_Tech. Co-Founder @afreshai. PhD @Stanford.

Lewis Tunstall @_lewtun

9K Followers 425 Following 🤗 LLM engineering & research @huggingface 📖 Co-author of "NLP with Transformers" book 💥 Ex-particle physicist 🤘 Occasional guitarist 🇦🇺 in 🇨🇭

Chris Lattner @clattner_llvm

79K Followers 182 Following Building beautiful things like Mojo🔥 and MAX @Modular, lifting the world of production AI/ML software into a new phase of innovation. We’re hiring! 🚀🧠

Jonathan Whitaker @johnowhitaker

7K Followers 955 Following Data scientist and AI researcher. R&D at https://t.co/9xrxRrGfEE.

Minji Yoon @MinjiYoon90

2K Followers 304 Following Building personal AI @MicrosoftAI. Past: @InflectionAI, PhD @SCSatCMU.

Jason Ramapuram @jramapuram

787 Followers 392 Following ML Research Scientist MLR | Formerly: DeepMind, Qualcomm, Viasat, Rockwell Collins | Swiss-minted PhD in ML | Barista alumnus ☕ @ Starbucks | 🇺🇸🇮🇳🇱🇻🇮🇹

Matei Zaharia @matei_zaharia

39K Followers 1K Following CTO at @Databricks and CS prof at @UCBerkeley. Working on data+AI, including @ApacheSpark, @DeltaLakeOSS, @MLflow, https://t.co/94gROE5Xa0. https://t.co/nmRYAKG0LZ

Teven Le Scao @Fluke_Ellington

2K Followers 549 Following Researcher @MistralAI, producer @ my bedroom, no BLOOM slander authorized on this account

Max Conradt @max_conradt

753 Followers 693 Following The John Carmack of B2B SaaS. Make computer go vrooooooom 🏎️.

Niklas Muennighoff @Muennighoff

5K Followers 323 Following @ContextualAI | Interests: AI/LLM Research & Health ❤️ | Past: @huggingface @PKU1898

kenshin9000 @kenshin9000_

6K Followers 313 Following Working on Computer Vision and AI Safety. Twitter browsing account.

Cool stuff, we found a similar result back in December arxiv.org/abs/2312.01037. Kind of upset they didn't cite/link to us tbh.

New Anthropic research: we find that probing, a simple interpretability technique, can detect when backdoored "sleeper agent" models are about to behave dangerously, after they pretend to be safe in training. Check out our first alignment blog post here: anthropic.com/research/probe…
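
A linear probe here is just a direction in activation space plus a threshold. The minimal sketch below shows one common way to build one. It takes hypothetical activation vectors from two contrasting sets of prompts, uses the difference of their mean activations as the probe direction, and scores new activations by projecting onto it. It illustrates the general technique, not Anthropic's exact setup, and all names and values are hypothetical.

```python
def mean_vector(vectors: list[list[float]]) -> list[float]:
    """Element-wise mean of equally sized vectors."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]


def difference_of_means_probe(pos: list[list[float]], neg: list[list[float]]) -> list[float]:
    """Probe direction = mean(positive activations) - mean(negative activations)."""
    return [p - q for p, q in zip(mean_vector(pos), mean_vector(neg))]


def probe_score(direction: list[float], activation: list[float]) -> float:
    """Projection onto the probe direction; compare against a chosen threshold."""
    return sum(d * a for d, a in zip(direction, activation))


direction = difference_of_means_probe([[1.0, 0.0], [3.0, 0.0]], [[0.0, 1.0], [0.0, 3.0]])
print(direction, probe_score(direction, [1.0, 0.0]), probe_score(direction, [0.0, 1.0]))
```
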

@mcy_219085 @UnslothAI Oh, I should add that to the wiki!! Yes, callbacks. @Tim_Dettmers's wonderful QLoRA repo has an example of adding MMLU callbacks, e.g. github.com/artidoro/qlora…

Over 95% of ASML DUV revenue came from China. This was 49% of their total revenue. No one is building fabs for anything besides leading edge, despite trailing-edge content growth still happening. America, Europe, Japan, etc. will get completely destroyed in 14nm and above nodes.

Announcing the alpha release of torchtune! torchtune is a PyTorch-native library for fine-tuning LLMs. It combines hackable memory-efficient fine-tuning recipes with integrations into your favorite tools. Get started fine-tuning today! Details: hubs.la/Q02t214F0

🚀 Took a stab at jamba-v0.1 finetuning (qlora): huggingface.co/jondurbin/airo… Results are OK-ish (6.688679 MT-Bench), targeted layers: ['q_proj', 'v_proj', 'k_proj', 'o_proj', 'x_proj', 'in_proj', 'out_proj'] Will try again soon!