Mike Lewis @ml_perception

Llama3 pre-training lead. Partially to blame for things like the Cicero Diplomacy bot, BART, RoBERTa, kNN-LM, top-k sampling & Deal Or No Deal. Seattle. Joined September 2019.

Tweets: 254 · Followers: 6K · Following: 223 · Likes: 680

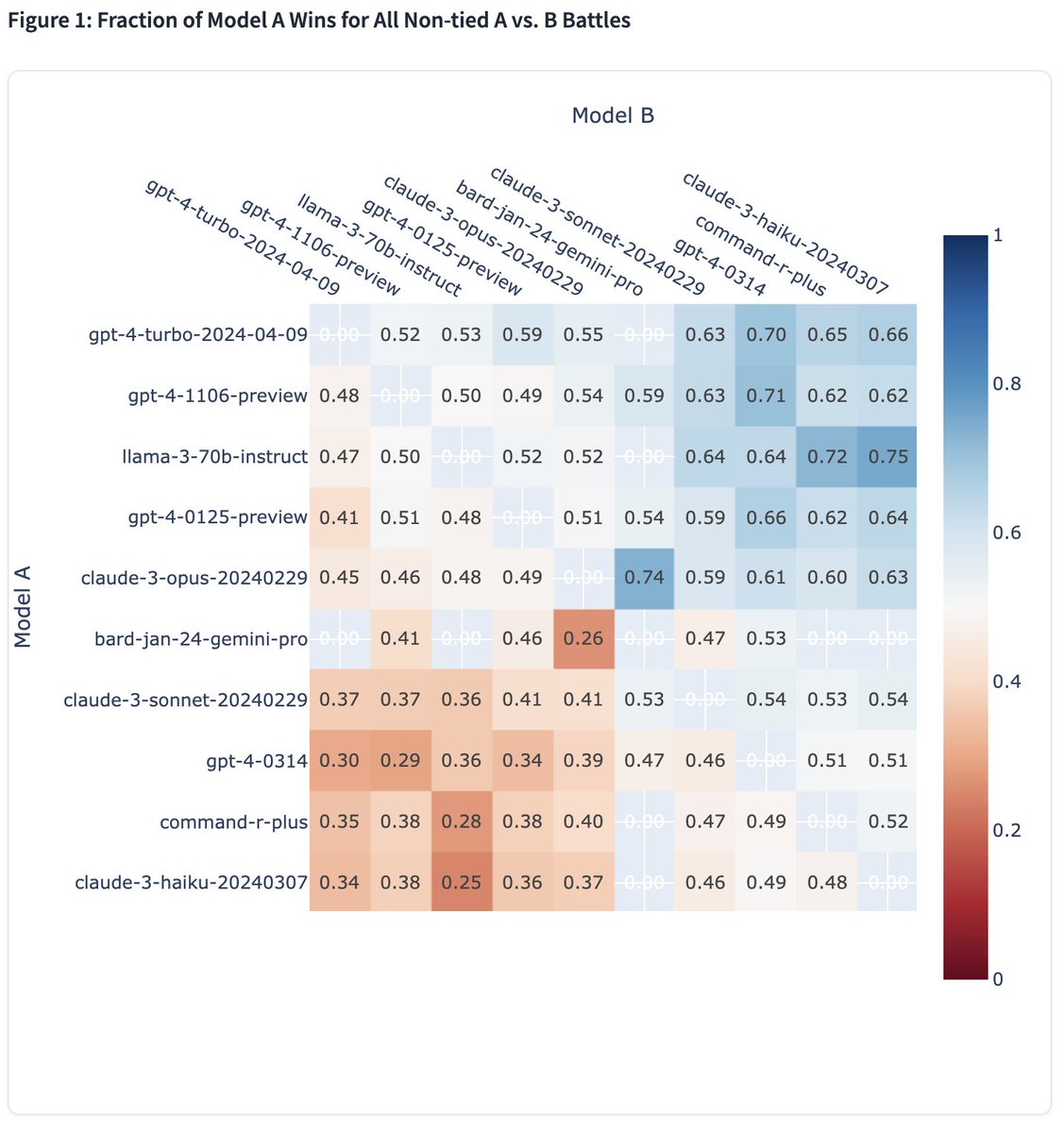

Moreover, we observe even stronger performance in the English category, where Llama 3's ranking jumps to ~1st place with GPT-4-Turbo! It consistently performs strongly against top models (see win-rate matrix) by human preference. It's been optimized for dialogue scenario with large…

I'm seeing a lot of questions about the limit of how good you can make a small LLM. tldr; benchmarks saturate, models don't. LLMs will improve logarithmically forever with enough good data.

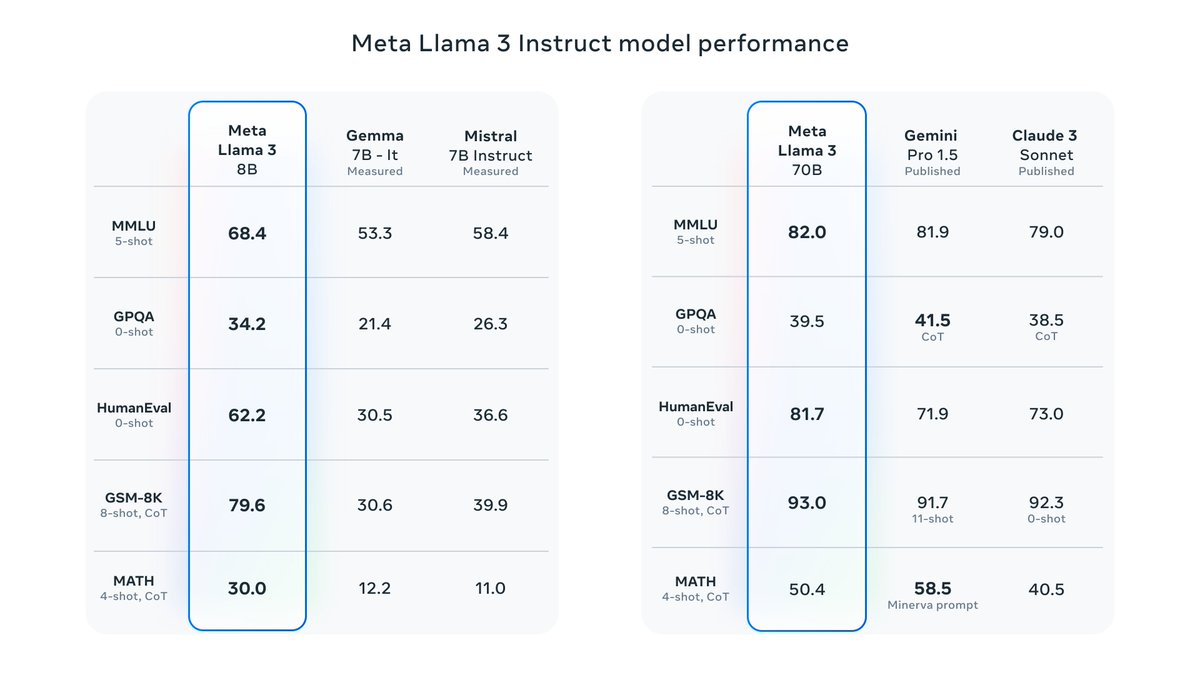

Yes, both the 8B and 70B are trained way more than is Chinchilla optimal - but we can eat the training cost to save you inference cost! One of the most interesting things to me was how quickly the 8B was improving even at 15T tokens.
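For a rough sense of how far past "Chinchilla optimal" this is, here is a back-of-the-envelope sketch. It assumes the commonly quoted rule of thumb of about 20 training tokens per parameter, and it assumes the 70B saw the same 15T tokens as the 8B; the ratio, that token count for the 70B, and the helper names are illustrative assumptions, not figures from the tweet.

```python
# Assumption: "Chinchilla optimal" is approximated by the widely quoted
# rule of thumb of ~20 training tokens per model parameter.
TOKENS_PER_PARAM = 20


def chinchilla_optimal_tokens(n_params: float) -> float:
    """Approximate compute-optimal token budget for a model of n_params."""
    return TOKENS_PER_PARAM * n_params


def overtraining_factor(n_params: float, n_tokens: float) -> float:
    """How many times more tokens were used than the rule of thumb suggests."""
    return n_tokens / chinchilla_optimal_tokens(n_params)


# 15T tokens is the figure from the tweet (stated for the 8B model);
# applying it to the 70B as well is an assumption.
trained_tokens = 15e12
for name, n_params in [("8B", 8e9), ("70B", 70e9)]:
    optimal = chinchilla_optimal_tokens(n_params)
    factor = overtraining_factor(n_params, trained_tokens)
    print(f"{name}: ~{optimal / 1e9:.0f}B tokens by rule of thumb, "
          f"trained ~{factor:.0f}x past that")
```

Under these assumptions the 8B is trained on roughly two orders of magnitude more data than the rule of thumb suggests, which is the training-cost-for-inference-cost trade the tweet describes.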

Excited to share the Llama 3 models with everyone. This has been an INCREDIBLE team effort. The 8b and 70b models are available now. These are the best open source models.

Happy to be part of this incredible journey of Llama3 and to share the best open weight 8B and 70B models! Our largest 400B+ model is still cooking but we are providing a sneak peek into how it is trending! Check more details here ai.meta.com/blog/meta-llam…

Feeling incredibly excited and proud that StreamingLLM made it to MIT's homepage and has been accepted by ICLR 2024! Can't wait to show it in Vienna! 😆🥳 web.mit.edu/spotlight/chat… Huge thanks to my fantastic advisor @songhan_mit and mentors @ml_perception @tydsh @BeidiChen!

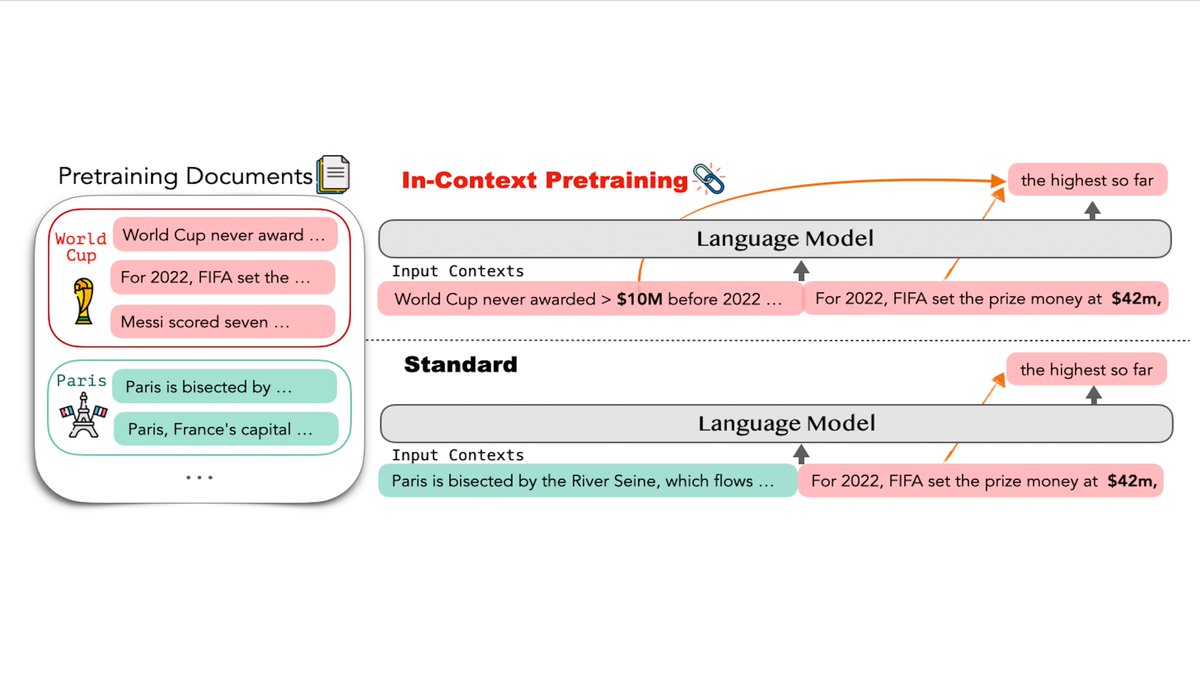

Happy to share In-Context Pretraining 🖇️ is accepted as an #ICLR2024 spotlight. We study how to pretrain LLMs with improved context understanding ability paper📄: arxiv.org/pdf/2310.10638… code: github.com/swj0419/in-con…

Ari is an incredibly creative researcher and a great mentor - I can't wait to see what comes out of his lab!

Attention sinks: great read, and pretty close in principle to @TimDarcet's ViT registers huggingface.co/blog/tomaarsen…

The best thing about "advising" people is how much smarter they make you. Thanks @OfirPress and all the rest of you!

This feels like a futuristic pre-training objective. I think we'll see a lot more extensions based on this idea. I can imagine a curriculum-based alternative, where documents are selected to be related, but also of increasing difficulty.

Let's discuss In-context pretraining. In-context pretraining provides a valuable advancement that directly addresses the key limitation of prior pretraining methods - the inability to perform effective reasoning across multiple related documents and long contexts. The Pain…

Introducing In-Context Pretraining🖇️: train LMs on contexts of related documents. Improving a 7B LM by simply reordering pretraining docs 📈In-context learning +8% 📈Faithful +16% 📈Reading comprehension +15% 📈Retrieval augmentation +9% 📈Long-context reasoning +5% arxiv.org/abs/2310.10638
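A toy sketch of the "reorder documents so related ones share a context" idea, in Python. The tweets do not describe the actual pipeline, so this swaps in a deliberately simple stand-in: word-overlap (Jaccard) similarity and a greedy nearest-neighbour chain. The function names and sample documents are hypothetical.

```python
def jaccard(a: str, b: str) -> float:
    """Word-overlap similarity between two documents (illustrative stand-in)."""
    sa, sb = set(a.lower().split()), set(b.lower().split())
    union = sa | sb
    return len(sa & sb) / len(union) if union else 0.0


def order_by_relatedness(docs: list[str]) -> list[str]:
    """Greedy chain: start at the first doc, then keep appending the
    unvisited doc most similar to the one just placed."""
    if not docs:
        return []
    remaining = list(docs[1:])
    ordered = [docs[0]]
    while remaining:
        nxt = max(remaining, key=lambda d: jaccard(ordered[-1], d))
        remaining.remove(nxt)
        ordered.append(nxt)
    return ordered


docs = [
    "llamas live in the andes",
    "transformers use self attention",
    "alpacas and llamas live in south america",
    "attention layers in transformers scale quadratically",
]
ordered = order_by_relatedness(docs)
# Neighbouring documents in `ordered` would then be packed into the same
# training context instead of being shuffled at random.
for doc in ordered:
    print(doc)
```

At real scale the similarity would come from a retriever or embeddings rather than word overlap; this only shows the reordering step.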

That’s why we need to get rid of tokenizers and try to use raw input/output, like in vision! ByT5 (arxiv.org/abs/2105.13626) and MEGABYTE (arxiv.org/abs/2305.07185) make nice first steps, we need more of that.

Great idea for making more long-context training data out of a large set of relatively short documents.

Very cool work from @WeijiaShi2 @sewon__min @VictoriaLinML @margs_li @ml_perception @nlpnoah @LukeZettlemoyer and others. I'll go as far as to say that every future model needs to be trained with this method. There is no reason not to. It's a clear win. arxiv.org/abs/2310.10638

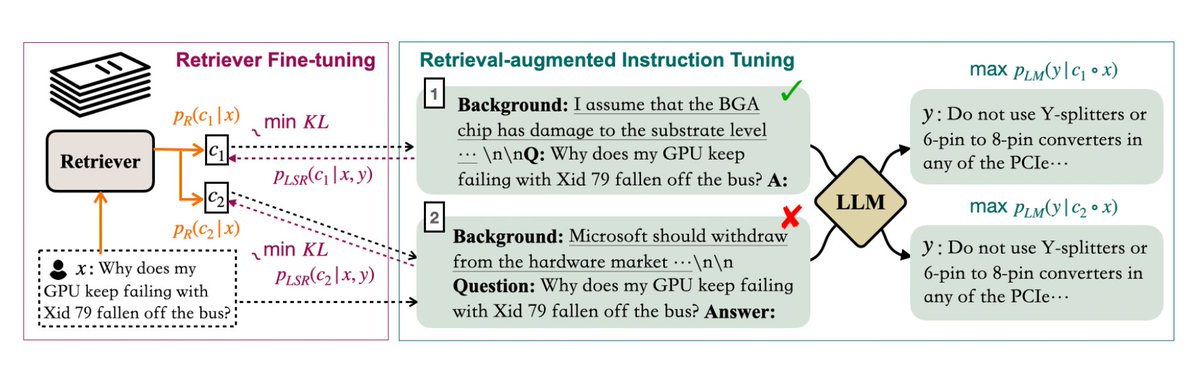

🌐 Retrieval-augmentation is becoming increasingly popular for enriching LLMs with long-tail knowledge and keeping them up-to-date. 💫 Introducing RA-DIT: Retrieval-Augmented Dual Instruction Tuning (arxiv.org/abs/2310.01352), a fine-tuning process that enhances LM and retrieval…

Here's the StreamingLLM method: Background: - Autoregressive language models are limited to contexts of finite length due to the quadratic self-attention complexity. - Windowed attention partially addresses this by caching only the most recent tokens. - However, window attention…

The paper is here arxiv.org/pdf/2309.17453… This "attention sink" trick does allow your LLM to work on an unbounded length of input without any degradation in perplexity - but of course that doesn't mean it's really using its full context.
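A minimal sketch of the cache policy behind the "attention sink" trick, as I understand it: keep the first few tokens forever and add a rolling window of the most recent ones. This toy version stores token positions rather than key/value tensors; the class name `SinkCache` and the sizes used are hypothetical.

```python
from collections import deque


class SinkCache:
    """Toy cache: the first `n_sink` entries are kept forever ("sinks"),
    and only the most recent `window` entries are kept after that."""

    def __init__(self, n_sink: int = 4, window: int = 8):
        self.n_sink = n_sink
        self.sinks = []
        self.recent = deque(maxlen=window)

    def append(self, item):
        if len(self.sinks) < self.n_sink:
            self.sinks.append(item)
        else:
            # A full deque silently drops its oldest entry.
            self.recent.append(item)

    def contents(self):
        return self.sinks + list(self.recent)


cache = SinkCache(n_sink=2, window=3)
for pos in range(10):
    cache.append(pos)
print(cache.contents())  # [0, 1, 7, 8, 9]
```

The cache never grows beyond `n_sink + window` entries, which is why the input length can be unbounded. It also makes the tweet's caveat concrete: positions 2 through 6 are gone, so the model is not attending over its full history.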

(((ل()(ل() 'yoav))).. @yoavgo

46K Followers 2K Following

AI at Meta @AIatMeta

531K Followers 255 Following Together with the AI community, we are pushing the boundaries of what’s possible through open science to create a more connected world.

Percy Liang @percyliang

49K Followers 408 Following Associate Professor in computer science @Stanford @StanfordHAI @StanfordCRFM @StanfordAILab @stanfordnlp | cofounder @togethercompute | Pianist

Akari Asai @AkariAsai

11K Followers 650 Following Ph.D. student @uwcse & @uwnlp. NLP. IBM Ph.D. fellow (2022-2023). Meta student researcher (2023-) . ☕️ 🐕 🏃♀️🧗♀️🍳

Delip Rao e/σ @deliprao

46K Followers 5K Following Busy inventing the shipwreck. @Penn. Past: @johnshopkins, @UCSC, @Amazon, @Twitter ||Art: #NLProc, Vision, Speech, #DeepLearning || Life: 道元, improv, running 🌈

Kyunghyun Cho @kchonyc

61K Followers 2K Following a combination of a mediocre scientist, a mediocre manager, a mediocre advisor & a mediocre PC at @nyuniversity (@CILVRatNYU) & @genentech (@PrescientDesign).

Sasha Rush @srush_nlp

52K Followers 464 Following Professor, Programmer in NYC. Cornell Tech, Hugging Face 🤗 https://t.co/cZl0wTfqGz

Soumith Chintala @soumithchintala

186K Followers 877 Following Cofounded and lead @PyTorch at Meta. Also dabble in robotics at NYU. AI is delicious when it is accessible and open-source.

Sam Bowman @sleepinyourhat

35K Followers 3K Following AI alignment + LLMs at NYU & Anthropic. Views not employers'. No relation to @s8mb. I think you should join @givingwhatwecan.

Graham Neubig @gneubig

31K Followers 585 Following Associate professor at CMU, studying natural language processing and machine learning.

Eric Jang @ericjang11

69K Followers 3K Following physical AGI at 1X. Author of "AI is Good for You" https://t.co/eFg4WXhg0p

Tim Dettmers @Tim_Dettmers

29K Followers 818 Following PhD Student at @UW. I blog about deep learning and PhD life at https://t.co/Y78KDJJFE7.

Jacob Andreas @jacobandreas

14K Followers 958 Following Teaching computers to read. Assoc. prof @MITEECS / @MIT_CSAIL (he/him). https://t.co/5kCnXHjtlY https://t.co/2A3qF5vdJw

Noam Brown @polynoamial

34K Followers 610 Following Researching reasoning @OpenAI | Co-created Libratus/Pluribus, the first superhuman no-limit poker AIs | Co-created CICERO | PhD from @SCSatCMU

Ofir Press @OfirPress

10K Followers 3K Following I build tough benchmarks for LMs and then I get the LMs to solve them. Postdoc @Princeton. PhD from @nlpnoah @UW. Ex-visiting researcher @MetaAI & @MosaicML.

Yoav Artzi @yoavartzi

13K Followers 163 Following Research/prof @cs_cornell + @cornell_tech🚡 / https://t.co/9YnWry7yHs / https://t.co/3VmRSyYm2d / asso. faculty director @arxiv / building https://t.co/f9QkzO5kaC

Luca Soldaini 🎀 @soldni

6K Followers 1K Following I like tokens! Lead for OLMo data team at @allen_ai (makin Dolma 🍇), open source science fan, @QueerInAI organizer 🤖☕️🍕they/them

siva kumar @sivakum38269090

2 Followers 83 Following

ElectricalChip @electricalchip

1 Followers 596 Following

dev potatopotato @devpotatopotato

0 Followers 136 Following CS student in Seoul National University. Passionate about AGI.

Phillip Lindsay @EastLAPinche

59 Followers 385 Following

metavalent stigmergy @metavalent

439 Followers 4K Following The process by which novel insights, intuitions, understandings, ideas, or concepts originate, germinate, blossom, propagate, and instantiate DCNs and DCNRs.

Faria Huq @FariaHuqOaishi

570 Followers 1K Following PhD Student @SCSatCMU working with @jeffbigham working on Agents 🤖 and Interaction📱. Prev- SGI Fellow'21 @MIT_CSAIL, Tero labs.

Lina Vera @LVera39600

156 Followers 3K Following

Chenxi Pang @chenxipang

7 Followers 150 Following

W3i Reviews @W3iReviews

2K Followers 5K Following Hi, I'm Mike - @wpcult - https://t.co/XdjmQU6gOd - https://t.co/wMjbgP9IbA - https://t.co/dJoW1sKW6t - https://t.co/RwJWrcHXai

Haydn Hanna @HannaHaydn

102 Followers 153 Following Teacher at @synthesischool 🍎 Lead Designer of the Mars Explorer Program with @canada_mars 🇨🇦 Optimistic about humanity's future on Earth and Mars! 🌍 🚀

Nick Mumero @nickdee96

131 Followers 1K Following Cofounder at Continuum Ads. Focusing on NLP, Simulation Modelling and Optimization.

Vishal Mahto @Vishal8_m

117 Followers 2K Following Software Engineer@Graebert India | C++ | STL | Data Structure and Algorithm | Multithreading | OOPS | Design Pattern | Git | Cmake | Python

Harry Zhang @harryzhangs

577 Followers 3K Following cofounder @HackQuest_ & @buildmoonshot I tweet abt #crypto⚡️ #education📚 #chess♟ #NBA

Krishna @krish240574

45 Followers 940 Following World traveller and poet at heart, code hacker by choice, expert chef by hobby !

Jack FitzGerald @jgmfitz

4 Followers 187 Following Principal, Applied Scientist at Amazon AGI org; AI model and system builder; LLM research

mithat @mithatcikas

11 Followers 57 Following

Guanghui Qin @hiaoxui

83 Followers 56 Following Ph.D. student in Natural Language Processing at Johns Hopkins University.

Keyon Zeng @Keyon2046

136 Followers 1K Following

Tony Sun @Tony_Sun_

1 Followers 5 Following

Ushikawa @ushikawazaki

63 Followers 258 Following (Not a bot. No content is AI generated.) Equally hopeful/fearful about AI. There is nothing in this world that never takes a step outside a person's heart.

Zachary Daniels @ZacharyDan22010

110 Followers 224 Following Just a husband, father, tradesman jack. I also happen to be an amateur Theologian, philosopher, and recently, a tech enthusiast.

Scott McCrae @scottymccrae

63 Followers 202 Following working on something new 👀 former ML @Dropbox, @berkeley_ai

Sabri Eyuboglu @EyubogluSabri

614 Followers 261 Following Computer Science PhD student @Stanford working with @HazyResearch and @james_y_zou 🪬

Pat Jensen @patsonvideo

319 Followers 675 Following Distinguished Architect @zoom. CCIE #53452. Husband, father, collaborator, mentor.

FutureShock @MalikKshitiz

19 Followers 487 Following The greeks didn't write obituaries. They just asked one question - "Did he have passion?"

南宁雯雯 @RVing5150

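2K Followers 916 Following Nanning Wenwen: based long-term in Nanning; taking part-time in-person bookings for a better life; born in 2001 and looking for quality long-term clients. Too many middlemen around; no deposit, no minimum, time-wasters need not apply. QQ for bookings is on my main account's page: @jingjingya001 Telegram ✈️: https://t.co/gu5XixyIQK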

Matteo Hoch, EA @MatteoHoch

155 Followers 812 Following Data science, financial planning, tax, gardening, mountain biking, cooking, and baking. Fan of alluvial fans. University of Illinois EE 2016

Jiaxun Cui @cuijiaxun

362 Followers 513 Following Ph.D. candidate @UTAustin 🤘 | Multi-agent Learning | SJTU @sjtu1896 | Research Intern FAIR Labs @AIatMeta, Tencent AI Labs

Saurabh Shah @saurabh_shah2

498 Followers 987 Following ML Engineer @Apple /Siri NLU, prev @allen_ai @Penn …. 🎤dabbler in standup comedy and music 🎸… 🐈⬛enjoyer of cats 🐈 and mountains🏔️ …he/him

Yamil Enrique @YamilEnriq81909

0 Followers 2 Following

gandamu @gandamu_ml

16K Followers 5K Following Prev https://t.co/a96FYiLT41 · https://t.co/ren7Ov9vxx. Music videos: https://t.co/iFubkxDg5g

Feroz Dewan @dewan_feroz

74 Followers 664 Following

Ioana Baldini @ioanauoft

706 Followers 1K Following Researcher. Immigrant. Mom. STEM. And if you insist Dr. Playing with ideas at IBM Research AI.

Greg Laurie @laurie_gre30850

272 Followers 3K Following KNOWING GOD A America evangelical author, pastor and evangelist who serves as the senior pastor of Harvest Christian Fellowship

INDRAJEET @indrajeet877

427 Followers 2K Following Head of Math Department,Allen Institute Karaikal BTech NITW 2012, Option trader & investor. Math geek, tech-forward, learner Plus Python & Spanish skills.

(((ل()(ل() 'yoav))).. @yoavgo

46K Followers 2K Following

AI at Meta @AIatMeta

531K Followers 255 Following Together with the AI community, we are pushing the boundaries of what’s possible through open science to create a more connected world.

Percy Liang @percyliang

49K Followers 408 Following Associate Professor in computer science @Stanford @StanfordHAI @StanfordCRFM @StanfordAILab @stanfordnlp | cofounder @togethercompute | Pianist

Akari Asai @AkariAsai

11K Followers 650 Following Ph.D. student @uwcse & @uwnlp. NLP. IBM Ph.D. fellow (2022-2023). Meta student researcher (2023-) . ☕️ 🐕 🏃♀️🧗♀️🍳

Kyunghyun Cho @kchonyc

61K Followers 2K Following a combination of a mediocre scientist, a mediocre manager, a mediocre advisor & a mediocre PC at @nyuniversity (@CILVRatNYU) & @genentech (@PrescientDesign).

Sasha Rush @srush_nlp

52K Followers 464 Following Professor, Programmer in NYC. Cornell Tech, Hugging Face 🤗 https://t.co/cZl0wTfqGz

Soumith Chintala @soumithchintala

186K Followers 877 Following Cofounded and lead @PyTorch at Meta. Also dabble in robotics at NYU. AI is delicious when it is accessible and open-source.

Christopher Manning @chrmanning

126K Followers 115 Following Director, @StanfordAILab. Assoc. Director, @StanfordHAI. Founder, @stanfordnlp. Prof. CS & Linguistics, @Stanford. IP @aixventureshq. 🇦🇺 Do #NLProc & #AI. 👋

Sam Bowman @sleepinyourhat

35K Followers 3K Following AI alignment + LLMs at NYU & Anthropic. Views not employers'. No relation to @s8mb. I think you should join @givingwhatwecan.

Graham Neubig @gneubig

31K Followers 585 Following Associate professor at CMU, studying natural language processing and machine learning.

Tim Dettmers @Tim_Dettmers

29K Followers 818 Following PhD Student at @UW. I blog about deep learning and PhD life at https://t.co/Y78KDJJFE7.

Jacob Andreas @jacobandreas

14K Followers 958 Following Teaching computers to read. Assoc. prof @MITEECS / @MIT_CSAIL (he/him). https://t.co/5kCnXHjtlY https://t.co/2A3qF5vdJw

Ofir Press @OfirPress

10K Followers 3K Following I build tough benchmarks for LMs and then I get the LMs to solve them. Postdoc @Princeton. PhD from @nlpnoah @UW. Ex-visiting researcher @MetaAI & @MosaicML.

Yoav Artzi @yoavartzi

13K Followers 163 Following Research/prof @cs_cornell + @cornell_tech🚡 / https://t.co/9YnWry7yHs / https://t.co/3VmRSyYm2d / asso. faculty director @arxiv / building https://t.co/f9QkzO5kaC

Felix Hill @FelixHill84

9K Followers 777 Following Research Scientist, Deepmind I try to think hard about everything I tweet, esp on 90s football and 80s music None of my opinions are really someone else's

Colin Raffel @colinraffel

30K Followers 654 Following nonbayesian parameterics, sweet lessons, and random birds. Friend of @srush_nlp

Tal Linzen @tallinzen

16K Followers 895 Following Professor @nyuling and @NYUDataScience, research scientist @GoogleAI

Susan Zhang @suchenzang

20K Followers 504 Following @ Google Deepmind. Past: @MetaAI, @OpenAI, @unitygames, @losalamosnatlab, @Princeton etc. Always hungry for compute.

Anirudh Goyal @anirudhg9119

5K Followers 487 Following Gemini ♊ Spent time at @Berkeley_EECS, @MPI_IS, @DeepMind.

Michal Valko @misovalko

5K Followers 2K Following Llama @AIatMeta Paris & Inria & MVA - Ex: Gemini and BYOL @GoogleDeepMind

Sheng Shen @shengs1123

1K Followers 539 Following Ph.D. student @berkeley_ai; Building 🦙@MetaAi; Former @MSFTResearch, @allen_ai, @GoogleDeepMind

Andrej Karpathy @karpathy

978K Followers 904 Following 🧑🍳. Previously Director of AI @ Tesla, founding team @ OpenAI, CS231n/PhD @ Stanford. I like to train large deep neural nets 🧠🤖💥

Roberta Raileanu @robertarail

4K Followers 1K Following Research Scientist @Meta & Honorary Lecturer @UCL. ex @DeepMind | @MSFTResearch | @NYU | @Princeton. Llama-3, Toolformer, Rainbow Teaming.

Sharan Narang @sharan0909

2K Followers 254 Following LLMs and AI Research (Llama 2 & 3 lead) @Meta | ex @Google (PaLM lead, T5), ex @Baidu (Deep Speech 2, Sparse Neural Networks), ex @Nvidia

Dieuwke Hupkes @_dieuwke_

2K Followers 238 Following

Aaditya Singh @Aaditya6284

421 Followers 242 Following PhD student at @GatsbyUCL working with @SaxeLab, @FelixHill84 on learning dynamics, ICL, concepts, LLMs. Prev. at: @GoogleDeepMind, @AIatMeta (LLaMa 3), @MIT

Moya Chen @moyapchen

385 Followers 125 Following Ex-Meta LLM research engineer gap-year-yeeting herself to Boy Scout badges before the pathway narrowing of ML timelines.

Jim Fan @DrJimFan

229K Followers 3K Following @NVIDIA Sr. Research Manager & Lead of Embodied AI (GEAR Lab). Creating foundation models for Humanoid Robots & Gaming. @Stanford Ph.D. @OpenAI's first intern.

Sweta Agrawal @swetaagrawal20

945 Followers 1K Following Postdoc Researcher @itnewspt | Ph.D. @ClipUmd, @umdcs #nlproc

Artidoro Pagnoni @ArtidoroPagnoni

795 Followers 425 Following PhD student in NLP at UW with Luke Zettlemoyer

Melanie Sclar @melaniesclar

2K Followers 412 Following PhD student @uwnlp @uwcse | Visiting Researcher @MetaAI FAIR Labs | Prev. Lead ML Engineer @asapp, intern @LTIatCMU | 🇦🇷

Xian Li @xl_nlp

2K Followers 242 Following Research Scientist @MetaAI. NLP, ML. Opinions are my own.

Beidi Chen @BeidiChen

6K Followers 348 Following Asst. Prof @CarnegieMellon, Visiting Researcher @Meta, Postdoc @Stanford, Ph.D. @RiceUniversity, Large-Scale ML, a fan of Dota2.

Sean O'Brien @seano_research

67 Followers 47 Following UCSD PhD student with Julian McAuley, studying LLMs Ex-Meta AI, Berkeley AI Research

Jeff Rasley @jeffra45

666 Followers 921 Following @SnowflakeDB AI Research Team. @MSFTDeepSpeed co-founder, @BrownCSDept PhD, @uwcse alum

Michi Yasunaga @michiyasunaga

3K Followers 899 Following CS PhD @Stanford working on language models and multimodal models. Previously @GoogleDeepMind @Meta @Yale

Guangxuan Xiao @Guangxuan_Xiao

1K Followers 513 Following Ph.D. student at @MITEECS Prev: CS & Finance @Tsinghua_Uni

Sara Hooker @sarahookr

39K Followers 7K Following I lead @CohereForAI. Formerly Research @Google Brain @GoogleDeepmind. ML Efficiency at scale, LLMs, @trustworthy_ml. Changing spaces where breakthroughs happen.

Anastasia Razdaibiedi.. @razdaibi

143 Followers 119 Following PhD student @UofT & @VectorInst | #ML, #NLProc, #LLMs | prev @MetaAI, @AWS, @MSFTResearch

Adi Renduchintala @rendu_a

414 Followers 687 Following Applied Research Scientist @NVIDIA, former: Research Scientist @MetaAI, PhD @jhuclsp also lurking on Mastodon

Diyi Yang @Diyi_Yang

14K Followers 2K Following Assistant Professor @Stanford CS @StanfordNLP @StanfordAILab. Formerly @GeorgiaTech. Computational Social Science & NLP

Weijia Shi @WeijiaShi2

5K Followers 966 Following PhD student @uwcse @uwnlp | Visiting Researcher @MetaAI | Undergrad @CS_UCLA | https://t.co/eLBQmgkvym

Alexander Holden Mill.. @alex_h_miller

760 Followers 441 Following Research Engineering Manager at @MetaAI

Alicia Krueger @aliciackrueger

149 Followers 618 Following Clean energy and climate policy. Bookworm, PCT hiker & trail runner. Occasionally re-tweeting

David J Wu @lightvector1

310 Followers 47 Following Researcher, game AI enthusiast, author of KataGo (https://t.co/rJKWY2qU5p)

Hugh Zhang @hughbzhang

1K Followers 509 Following open source ai @scale_AI. co-created @gradientpub.

Anton Bakhtin @ SF @anton_bakhtin

2K Followers 126 Following MTS at @AnthropicAI, Ex @MetaAI, Ex @Google Three logicians walk into a bar ...

Jonathan Gray @jgrayatwork

1K Followers 255 Following @AnthropicAI. Previously: co-built CICERO @MetaAI (FAIR), ex-@openai

Joelle Pineau @jpineau1

10K Followers 352 Following AI researcher. VP AI Research (FAIR), @AIatMeta. Professor of Computer Science, @mcgillu. Core academic member, @Mila_Quebec

Machel Reid @machelreid

2K Followers 1K Following Research Scientist @GoogleDeepMind Working on LLMs on the Gemini Team; did gemini 1.5 pro

Devendra Singh Sachan @Devendr06654102

425 Followers 441 Following Google DeepMind, Mila and McGill University. Interested in natural language processing.

Weiyan Shi @shi_weiyan

3K Followers 682 Following Postdoc @StanfordNLP, incoming assistant professor @Northeastern, PhD @Columbia| Prev Intern @MetaAI |Co-created CICERO | persuasive chatbots + privacy #nlproc

Dan Roberts @danintheory

4K Followers 570 Following I studied gravity. AI fellow @sequoia + researcher @mit physics. Co-founded @diffeo, acquired by @salesforce. Co-author "The Principles of Deep Learning Theory"

Super impressed with llama3's performance 🤯🤯... See it for yourself in a fully private "chat with your docs" RAG app: lightning.ai/lightning-ai/s…

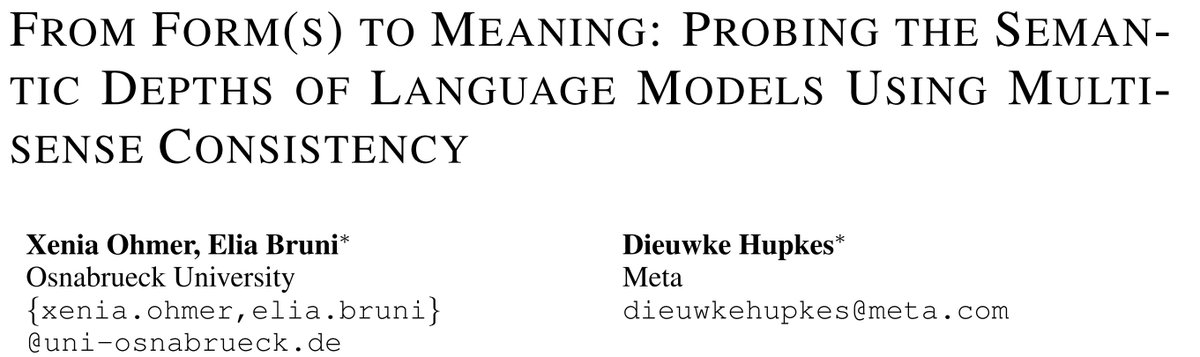

Can LLMs acquire meaning/semantics from just text? Some think it is a priori not possible, I personally think it's a super interesting philosophical question which needs further investigation! Thoughts? arxiv.org/abs/2404.12145

📜 New preprint! Equipped with our multisense consistency method, we dive deep into an exploration of the semantic understanding of #LLMs. @eliabruni & @_dieuwke_ @metaai #NLProc [1/7]🧵 arxiv.org/abs/2404.12145

It's important to recognize the Chinchilla work. It was a significant contribution that moved the field in the right direction. E.g. we would have made very different decisions for the original PaLM model (540B params) if the Chinchilla laws had been published.

@deliprao Actually the Chinchilla paper helped the field significantly. Before that, the original scaling laws paper recommended scaling the model size faster than the dataset size. Chinchilla showed the correct formulation of scaling laws.

I am super excited about the release of our 8B & 70B LLaMA 3 models! Huge team effort, amazing learning experience, and we're not done - the 405B is still training! #Llama3

It’s here! Meet Llama 3, our latest generation of models that is setting a new standard for state-of-the-art performance and efficiency for openly available LLMs. Key highlights • 8B and 70B parameter openly available pre-trained and fine-tuned models. • Trained on more…

Thrilled to share that our Llama 3 8B and 70B models are here. Happy to be part of this incredible journey, and excited for what's coming up next 🤩

It’s here! Meet Llama 3, our latest generation of models that is setting a new standard for state-of-the-art performance and efficiency for openly available LLMs. Key highlights • 8B and 70B parameter openly available pre-trained and fine-tuned models. • Trained on more…

LLMs keep improving with more data! This also means we need better benchmarks

I'm seeing a lot of questions about the limit of how good you can make a small LLM. tldr; benchmarks saturate, models don't. LLMs will improve logarithmically forever with enough good data.

62% HumanEval at 8B is just mind blowing. The future of LM-assisted programming is going to be amazing, and it's going to get here much sooner than we expected.

llama-3-8b's in-context learning is unbelievable. reddit.com/r/LocalLLaMA/c…

Really proud of the work that went into making this possible, hope this helps the community push the field forward. Also in case anyone missed it, there's a sneak peek of what's to come next at the end of the blog post ai.meta.com/blog/meta-llam…

It’s here! Meet Llama 3, our latest generation of models that is setting a new standard for state-of-the-art performance and efficiency for openly available LLMs. Key highlights • 8B and 70B parameter openly available pre-trained and fine-tuned models. • Trained on more…

I really respect @Meta's open approach to LLM research. Llama-3, an open model with first class performance. Congratulations to the team. I'm looking forward to the paper!

Llama 3 has been my focus since joining the Llama team last summer. Together, we've been tackling challenges across pre-training and human data, pre-training scaling, long context, post-training, and evaluations. It's been a rigorous yet thrilling journey: 🔹Our largest models…

Congrats to all the folks involved in the release today! 🎉🎉🎉 x.com/AIatMeta/statu…

Introducing Meta Llama 3: the most capable openly available LLM to date. Today we’re releasing 8B & 70B models that deliver on new capabilities such as improved reasoning and set a new state-of-the-art for models of their sizes. Today's release includes the first two Llama 3…

Congrats to the @AIatMeta team on Llama 3 🤯🤯 It is by FAR the best open source model i've played with! Run your personal llama 3 in a Lightning Studio now... let me know what you think about the model! lightning.ai/lightning-ai/s…

@ml_perception Thanks dude. This is huge for startups too. No need to depend on closed models anymore

@ml_perception Mike you (and the team) are officially goated for this one 🙏 miss when you were churning out incredible papers but this model is so worth the wait

Congrats to @AIatMeta on Llama 3 release!! 🎉 ai.meta.com/blog/meta-llam… Notes: Releasing 8B and 70B (both base and finetuned) models, strong-performing in their model class (but we'll see when the rankings come in @ @lmsysorg :)) 400B is still training, but already encroaching…

@ml_perception no doubt its nice to have them out! but i do find it harder and harder to get excited from new releases without any details on where the meat is... looking forward to your paper!

and we have more cookin' 🦙🦙🦙

Introducing Meta Llama 3: the most capable openly available LLM to date. Today we’re releasing 8B & 70B models that deliver on new capabilities such as improved reasoning and set a new state-of-the-art for models of their sizes. Today's release includes the first two Llama 3…