Zichen "Charles" Zhang @ZCCZHANG

PYI @allen_ai | Specialist can generalize and Generalist can specialize zcczhang.github.io Joined August 2021

59 Tweets 168 Followers 267 Following 604 Likes

So you want to do robotics tasks requiring dynamics information in the real world, but you don’t want the pain of real-world RL? In our work to be presented as an oral at ICLR 2024, @memmelma showed how we can do this via a real-to-sim-to-real policy learning approach. A 🧵 (1/7)

Join us at the **5th** Embodied AI Workshop in Seattle on June 18 #CVPR2024 🔊 Exciting invited speakers, 🥷 6+ challenges (with cash prizes), 📋 poster session, and 👽 lots more! 📜Workshop paper submission details coming soon Website: embodied-ai.org

(1/2) 📢 Introducing LL3M: Large Language, Multimodal, and MoE Model Open Research Plan 👉github.com/jiasenlu/LL3M With the following goals: - Build an open-sourced codebase in Jax / Flax that supports large-scale training of LLM, LMM, and MoE models. - Record and share the…

How can we train robust policies with minimal human effort?🤖 We propose RialTo, a system that robustifies imitation learning policies from 15 real-world demonstrations using on-the-fly reconstructed simulations of the real world. (1/9)🧵 Project website: real-to-sim-to-real.github.io/RialTo/

OLMo is here! And it’s 100% open. It’s a state-of-the-art LLM and we are releasing it with all pre-training data and code. Let’s get to work on understanding the science behind LLMs. Learn more about the framework and how to access it here: blog.allenai.org/olmo-open-lang…

In the last two years, large foundation models have proven capable of perceiving and reasoning about the world around us, unlocking a key possibility for scaling robotics. We introduce AutoRT, a framework for orchestrating robotic agents in the wild using foundation models!

Excited to release Unified-IO 2 -- a multimodal model to not just parse but also produce images, text, audio and actions for robotics. This was a very challenging and demanding multi year effort. One of the largest projects to come out of PRIOR @allen_ai. Very proud of the team!

Joint work with @jiasenlu*, Christopher Clark, Sangho Lee*, @ZCCZHANG*, Savya Khosla, Ryan Marten, @DerekHoiem_UofI, @anikembhavi (* Leading authors)

6/ UnifiedIO 2 is state-of-the-art on the GRIT and SEED benchmarks, performs as well as other recent vision/language generate models on vision/language benchmarks despite supporting many additional modalities, achieves strong image generation results, and achieves good scores on…

5/ Our model is pre-trained from scratch on an extensive variety of multimodal data -- 1 billion image-text pairs, 1 trillion text tokens, 180 million video clips, 130 million interleaved image & text, 3 million 3D assets, and 1 million agent trajectories. We further…

4/ We develop a training sample construction pipeline from heterogeneous data sources. We trained the model with a multimodal mixture of denoisers (MoD) objective. The video shows how to construct input and output samples from video.

3/ Training on a massive number of modalities from scratch is very challenging. We include various architectural changes that significantly stabilize multimodal training. Left-top: Introducing a combination of image and text tasks (orange curve) slightly increases the gradient norm. However,…

2/ Our model architecture: a 7 billion parameter encoder-decoder transformer. The encoder processes a sequence of multimodal tokens, including text, images, audio, or image/audio history. The decoder outputs discrete tokens that can be decoded into text, an image, or an audio…

Wondering how to train a multimodal AI Model with massive input and output modalities from scratch? Introducing Unified-IO 2, the first autoregressive multimodal model that is capable of understanding and generating image, text, audio, and action. Our model, inference code, and…

Unified-IO 2: Scaling Autoregressive Multimodal Models with Vision, Language, Audio, and Action Presents the first autoregressive multimodal model that is capable of understanding and generating images, text, audio, and action proj: unified-io-2.allenai.org abs:…

Allen AI releases Unified-IO 2 Scaling Autoregressive Multimodal Models with Vision, Language, Audio, and Action paper page: huggingface.co/papers/2312.17… the first autoregressive multimodal model that is capable of understanding and generating image, text, audio, and action

Holodeck: Language Guided Generation of 3D Embodied AI Environments paper page: huggingface.co/papers/2312.09… 3D simulated environments play a critical role in Embodied AI, but their creation requires expertise and extensive manual effort, restricting their diversity and scope. To…

Annalee Neang @AnnaleeNea13814

14 Followers 3K Following

Sophie-leigh Lemos @LemosLeigh33131

67 Followers 5K Following

Ekue @ekpodar

1K Followers 2K Following I am interested in Tech/AI, Marketing, and complex systems. I will post random stuff in those categories

_FUTURE_ 🍊🍊🍊.. @FUTURE_000_NFT

375 Followers 3K Following USAF 🦅🇺🇸 | Web3 Deployment and Analysis 💻 | 100x Gem Investor 📈🐂🎯

Wang Jianming @jianming_w87187

17 Followers 327 Following Early Stage Investor focusing on AI+Robotics

Yixin Chen @_yixinchen

64 Followers 114 Following

EvolvableNFT @EvolvableN

14 Followers 101 Following As a unique digital ID, an NFT can be evolvable by depositing cryptocurrencies and accepting attributes, skills, equipment, events, or badges.

MLEMONVR @MLemonVR

310 Followers 6K Following Design good VR accessories for Meta Quest2 Quest3 #AppleVisionPro #VR #vraccessories #Queest

Krypton M. @kristywhim_

14 Followers 33 Following Self-focused | A practitioner with many dreams | A perfect GPA and good looks are the most harmful and absurd advantages | Biostatistics @UNC, Therapist, Dancer, Poet, Writer | Geek out on Digital Marketing, #Healthcare, #Web3, #GenAI

Stephen James @stepjamUK

4K Followers 177 Following Leading the @Dyson Robot Learning Lab. Postdoc @UCBerkeley w/ @pabbeel. PhD Imperial College London w/ @ajdDavison. AI, Robotics, Machine Learning 🤖

Haoyi Zhu @HaoyiZhu

118 Followers 157 Following Ph.D. student @ Shanghai AI Lab & USTC. Interested in 3D vision & robot learning. PonderV2: https://t.co/C7XF871DXl RH20T: https://t.co/rlWsvZ75dF

Jamey Montella @JameyMontel

30 Followers 5K Following

Anish Madan @anishmadan23

345 Followers 2K Following MS @CMU_Robotics | Prev: Associate ML Scientist @WadhwaniAI | @IIITDelhi '20

Yongyuan Liang @cheryyun_l

440 Followers 114 Following @umdcs #RL #TrustworthyAI Prev. @Uber AI/@MSFTResearch Asia

Hanxiao Jiang @jiang_hanxiao

267 Followers 321 Following Ph.D. student @IllinoisCS. Research interest: Robot Learning, Robot Vision, 3D Vision

Yuncong Yang @yyupsong

26 Followers 56 Following Incoming CS PhD student at UMass Amherst, advised by @gan_chuang | Foundation Models/Robotics/Embodied AI | Previously @Columbia and @nvidia

Charis Outzen @OutzeChar

44 Followers 5K Following

Viacheslav Sinii @ummagumm_a

49 Followers 260 Following

Abdessamad LOIS @Abdessamad71099

2 Followers 184 Following

Jayaram Reddy @Jayaram_Robot10

72 Followers 999 Following Masters Student at Robotics research centre @iiit_hyderabad. Interested in robot learning, long horizon planning and RL. Previously undergrad @iitmadras

Ericka Sutyak @eric_suty

53 Followers 5K Following

Mila-rose Cachola @mila_cacho

57 Followers 5K Following

Kumarmoto @kuwelz

202 Followers 3K Following

Yunsheng Tian @YunshengTian

228 Followers 273 Following PhD Candidate @MIT_CSAIL working on robotics/ML/graphics

Weiyu Liu @Weiyu_Liu_

719 Followers 435 Following Postdoc @Stanford. I work on semantic representations for robots. Previously PhD @GTrobotics

Karyl Bazinet @kar_bazi

34 Followers 5K Following

William Sun @williamsun2020

116 Followers 1K Following

Maggie @mtauranga

0 Followers 390 Following

Jiaxun Cui @cuijiaxun

365 Followers 514 Following Ph.D. candidate @UTAustin 🤘 | Multi-agent Learning | SJTU @sjtu1896 | Research Intern FAIR Labs @AIatMeta, Tencent AI Labs

Charles Zhang @__xiaohao__

0 Followers 1 Following

Yue Wang @yuewang314

5K Followers 933 Following Assistant Professor @ USC CS and part-time Research Scientist @ Nvidia Research. Previous: EECS PhD @ MIT CSAIL. Opinions are mine.

Andre Ye @andreiskiii

106 Followers 207 Following @UWPhilosophy and @UWCSE ugrad. Current research interest: interrogating political/social structures and paradigmatic assumptions in data and models.

Stone Tao @Stone_Tao

2K Followers 870 Following PhD @UCSanDiego @HaoSuLabUCSD working on scalable robot learning and embodied AI. Co-founded @LuxAIChallenge to build AI competitions. @NSF GRFP fellow

Nicklas Hansen @ncklashansen

2K Followers 591 Following PhD student @UCSanDiego. @nvidia fellow. Prev: @MetaAI, @UCBerkeley, @DTU_Compute, @NTUsg. Interested in reinforcement learning, representations, and robots.

Chong Harry @ChongHarry1

90 Followers 570 Following

Bo Zhang (Tony) @zhangboknight

364 Followers 156 Following Senior Researcher at Microsoft Research Asia

Muhammad Aamir Gulzar @m_aamir_gulzar

10 Followers 318 Following Student | Tech Innovator | AI & Data Enthusiast

alex rubinsteyn @iskander

5K Followers 4K Following Genomics + immunology + ML = personalized cancer immunotherapy. @CompMedUNC @UNC_Lineberger @OpenVax https://t.co/8DWibdgbLI

Daniel Lawson @danielblawson9

87 Followers 362 Following Student, Purdue CS. Interested in ML, RL, and robotics

MarketIn @MarketIn_1

4K Followers 100 Following MarketIn is designed to forecast market trends by analyzing User Behaviors and Price Action.

Arthur Allshire @arthurallshire

1K Followers 380 Following robotics & simulation. incoming PhD @Berkeley_AI. intern @NvidiaAI. prev EngSci @UofT 🇮🇪 🇨🇦 🇦🇺🇨🇭🇨🇿

Shenlong Wang @ShenlongWang

1K Followers 407 Following Assistant Professor at @IllinoisCS; 3D Computer Vision and Robot Perception.

RL_Conference @RL_Conference

2K Followers 240 Following Home of the first annual reinforcement learning conference. Stay tuned for updates!

Zhiwen(Aaron) Fan @WayneINR

642 Followers 176 Following PhD@UT Austin | QIF 2022 Fellowship | Intern@NV/Meta/Google | Previous Senior Algorithm Engineer @Alibaba | 3D Modeling/Understanding/Generation, AI4Space

Stephen James @stepjamUK

4K Followers 177 Following Leading the @Dyson Robot Learning Lab. Postdoc @UCBerkeley w/ @pabbeel. PhD Imperial College London w/ @ajdDavison. AI, Robotics, Machine Learning 🤖

Carlo Sferrazza @carlo_sferrazza

474 Followers 202 Following Postdoc at @berkeley_ai. PhD from @eth_en. Robotics, Artificial Intelligence, Tactile Sensing.

Physical Intelligence @physical_int

4K Followers 8 Following Physical Intelligence (Pi), bringing AI into the physical world.

Marcel Torné @marceltornev

219 Followers 209 Following Researcher at the Improbable AI Lab @MIT CSAIL | MEng @Harvard | BSc @EPFL

Jerome Revaud @JeromeRevaud

1K Followers 95 Following AI researcher :: Computer vision :: 3D vision :: Team Lead :: Project Lead :: Naver Labs Europe

Anish Madan @anishmadan23

345 Followers 2K Following MS @CMU_Robotics | Prev: Associate ML Scientist @WadhwaniAI | @IIITDelhi '20

Yongyuan Liang @cheryyun_l

440 Followers 114 Following @umdcs #RL #TrustworthyAI Prev. @Uber AI/@MSFTResearch Asia

Nishanth Kumar @nishanthkumar23

1K Followers 685 Following Robotics + AI PhD Student @MIT_LISLab @MIT_CSAIL, and the Boston Dynamics AI Institute. Formerly @brownbigai, @vicariousai and @uber. S.B @BrownUniversity.

Hanxiao Jiang @jiang_hanxiao

267 Followers 321 Following Ph.D. student @IllinoisCS. Research interest: Robot Learning, Robot Vision, 3D Vision

Xingyu Lin @Xingyu2017

1K Followers 338 Following Postdoc at @berkeley_ai. PhD from @SCSatCMU. #Learning #Robotics

Hao Liu @haoliuhl

4K Followers 155 Following phd student @berkeley_ai https://t.co/ZNJawlrerS machine learning, neural networks.

1X @1x_tech

9K Followers 2 Following Androids built to benefit society and meet the world's labor demand.

Saining Xie @sainingxie

14K Followers 1K Following researcher in #deeplearning #computervision | assistant professor at @NYU_Courant @nyuniversity | previous: research scientist @metaai (FAIR) @UCSanDiego

Lirui Wang (Leroy) @LiruiWang1

563 Followers 266 Following Ph.D. Student @MIT_CSAIL. Previously @uwcse and @nvidia. Robotics, Fleet Learning, Foundation Model 🤖

Hanna Hajishirzi @HannaHajishirzi

6K Followers 328 Following Associate professor at @uw_cse; senior director at @allen_ai co-leading @allenNLP; AI/NLP researcher at @uw_nlp

Yuki Mitsufuji @mittu1204

3K Followers 99 Following PhD, Distinguished Engineer @Sony, Lead Research Scientist @SonyAI_global, Head of Creative AI Lab, Specially Appointed Associate Prof. @tokyotech_jp

Congyue Deng @CongyueD

2K Followers 288 Following CS PhD student @Stanford | Previous: math undergrad @Tsinghua_Uni | ❤️ 3D vision, geometry, and art

Huazhe Harry Xu @HarryXu12

2K Followers 895 Following Hi, I like reinforcement learning, robots, and video games:) I am an amateur pianist. Assistant Prof at Tsinghua; Postdoc at Stanford; Ph.D. at Berkeley

Yunsheng Tian @YunshengTian

228 Followers 273 Following PhD Candidate @MIT_CSAIL working on robotics/ML/graphics

Weiyu Liu @Weiyu_Liu_

719 Followers 435 Following Postdoc @Stanford. I work on semantic representations for robots. Previously PhD @GTrobotics

Boyuan Chen @BoyuanChen0

2K Followers 277 Following PhD student @MIT_CSAIL @MITEECS, Ex @GoogleDeepMind, @berkeley_ai; Doing AI & Robotics. Foundational model for decision making. World models and robotics.

Jiaxun Cui @cuijiaxun

365 Followers 514 Following Ph.D. candidate @UTAustin 🤘 | Multi-agent Learning | SJTU @sjtu1896 | Research Intern FAIR Labs @AIatMeta, Tencent AI Labs

Yue Wang @yuewang314

5K Followers 933 Following Assistant Professor @ USC CS and part-time Research Scientist @ Nvidia Research. Previous: EECS PhD @ MIT CSAIL. Opinions are mine.

Andre Ye @andreiskiii

106 Followers 207 Following @UWPhilosophy and @UWCSE ugrad. Current research interest: interrogating political/social structures and paradigmatic assumptions in data and models.

Stone Tao @Stone_Tao

2K Followers 870 Following PhD @UCSanDiego @HaoSuLabUCSD working on scalable robot learning and embodied AI. Co-founded @LuxAIChallenge to build AI competitions. @NSF GRFP fellow

Eras Tour Resell @ErasTourResell

240K Followers 23 Following fill out the form in our bio so we can get your tickets to another swiftie! run by @ttwaswift @folklorewlw and @floellasversion

Minyoung Hwang @robominyoung

243 Followers 214 Following Incoming Grad @MIT_CSAIL, Currently Research Intern @carnegiemellon, Previously @allen_ai, @SNU | Robotics | Preference-based RL | Human-Robot Interaction

Yue Yang @YueYangAI

307 Followers 240 Following PhD student @upennnlp, interested in vision and language.

Vincent Sitzmann @vincesitzmann

13K Followers 296 Following Assistant Professor @ MIT, leading the Scene Representation Group (https://t.co/h5gvhLYrtw). Neural scene reps., neural rendering, inverse graphics.

Chris Paxton @chris_j_paxton

8K Followers 2K Following Mostly posting about robots. Embodied AI @hellorobotinc, formerly @AIatMeta, @NVIDIAAI, @zoox. All views my own.

Rafael Rafailov @rm_rafailov

3K Followers 640 Following Ph.D. Student at @StanfordAILab. I work on Foundation Models and Decision Making. Previously @GoogleDeepMind @UCBerkeley

Yifeng Zhu 朱毅枫 @yifengzhu_ut

1K Followers 538 Following Ph.D. student at UT Austin. Research interest in robot learning and general-purpose robots. Opinions are my own.

Manling Li @ManlingLi_

3K Followers 422 Following Postdoc @Stanford, Incoming Assistant Professor @Northwestern, PhD @UIUC. Working on Knowledge Foundation Models, especially for Multimodal data (Language + X).

Haoyu Xiong @Haoyu_Xiong_

2K Followers 2K Following Student researcher @CMU_Robotics/ I work on robotic agents in the real world #robotlearning

Chenlin Meng @chenlin_meng

8K Followers 834 Following Co-founder & CTO @pika_labs | ex @StanfordAILab @Stanford

Demi Guo @demi_guo_

22K Followers 695 Following Co-founder & CEO @pika_labs | ex @StanfordAILab @Harvard

Yejin Choi @YejinChoinka

19K Followers 330 Following professor at UW, director at AI2, adventurer at heart

Check out my labmate @sjoshi804’s work on data-efficient CLIP at #AISTATS2024!

Proud to be presenting Data-Efficient CLIP sjoshi804.github.io/data-efficient… at AISTATS 2024! We propose the first theoretically rigorous method to select the most useful data subset to train CLIP models. On CC3M and CC12M, our subsets are up to 2.5x better than the next best baseline! 🧵👇

Premier-TACO has been accepted by ICML 2024🎉

Imagine an AI that not only learns quickly but adapts to your daily tasks autonomously. Meet Premier-TACO 🌮, a multitask feature representation learning designed for few-shot adaptation in sequential decision-making. Project 🔗: PremierTACO.github.io #FoundationModel4RL A 🧵

Did anyone test this #DUSt3R + #3DGS implementation? github.com/nerlfield/wild…

I believe any PhD who cares about quality more than quantity works in single-thread mode.

Out of curiosity, do AI PhDs normally work (lead) on several projects simultaneously? I have never managed to work on more than one project during my PhD and I tried to convince my students not to do so. The paradigm might have already changed, so I am asking here.

🔥🔥🔥 We're excited to introduce Metric3d v2, the most advanced monocular geometry foundation model for depth and normals estimation, ranking #1 on over 10 benchmarks. Training code, HF demo, and arXiv preprints are all available now! GitHub: github.com/YvanYin/Metric… #3D #AIGC

So you want to do robotics tasks requiring dynamics information in the real world, but you don’t want the pain of real-world RL? In our work to be presented as an oral at ICLR 2024, @memmelma showed how we can do this via a real-to-sim-to-real policy learning approach. A 🧵 (1/7)

Dexterous manipulation transfer aims to transfer human manipulations to dexterous robot hand simulations and is inherently difficult due to its intricate, highly-constrained, and discontinuous dynamics and the need to control a dexterous hand with a DoF to accurately replicate…

🚀Next step in 3D scene understanding beyond objects and parts, we now explore interactive elements and functionalities👇 Check out our @CVPR challenge and win2️⃣RTX4090🏅 (sponsored by @Matterport🙏) 🛠️Details: opensun3d.github.io/cvpr24-challen… 📄Paper: scenefun3d.github.io #CVPR2024

SAM + Optical Flow = FlowSAM FlowSAM can discover and segment moving objects in a video and outperforms all previous approaches by a considerable margin in both single and multi-object benchmarks 🔥 robots.ox.ac.uk/~vgg/research/…

I'm thrilled to join Princeton's faculty as an assistant professor in the ECE department starting Fall 2025 🐯 Stay tuned for the launch of my lab. We will develop generally helpful robots that learn and plan 🤖

Thank you @_akhaliq for sharing our work! In this #CVPR2024 project, we introduce Video2Game to convert a video capture of a real-world scene into an interactive game. video2game.github.io

Video2Game Real-time, Interactive, Realistic and Browser-Compatible Environment from a Single Video Creating high-quality and interactive virtual environments, such as games and simulators, often involves complex and costly manual modeling processes. In this paper, we present

🎤🎤CVPR24 Highlight🔥🔥: PhyScene: Physically Interactable 3D Scene Synthesis for Embodied AI Still worried about how to do physical interactions with these synthetic scenes, especially the object parts? With PhyScene, we could generate infinite interactive 3D scenes with…

SpaTracker can track any 2D pixels in 3D space, which allows for better handling of occlusions and out-of-plane rotations. Only seen this on the 2D plane until now, looks pretty dope! Cool 3D examples + Links ⬇️

✨ Presenting MicKey (#CVPR2024, Oral) ✨ We regress and match 3D camera coordinates rather than 2D key points, all in metric space. Gives you a scaled relative pose between two RGB images. Paper: arxiv.org/abs/2404.06337 Page: nianticlabs.github.io/mickey

- Three training views on #DL3DV-10K datasets - Camera poses & intrinsics are unknown - Rendering by interpolation - Resolution: 1920x1080 I can tell, pseudo-views are needed to enhance the quality.

Speed up your view synthesis (<40s) with #InstantSplat! Our large-scale, pose-free method trains in just 37 seconds from sparse views: no #COLMAP, no intrinsics needed. Achieving nearly 30dB test PSNR with just 12 images, it sets a new standard in #NVS and training efficiency.…

If you want to know more about DUSt3R and InstantSplat, the new rising stars in the #3DGS sky, bookmark @hupobuboo's stream or watch his recordings later. His paper readings are legendary and there is a lot to learn from them! Highly recommend!

better faster stronger 3DGS, thanks @janusch_patas for the recommendation x.com/i/broadcasts/1…

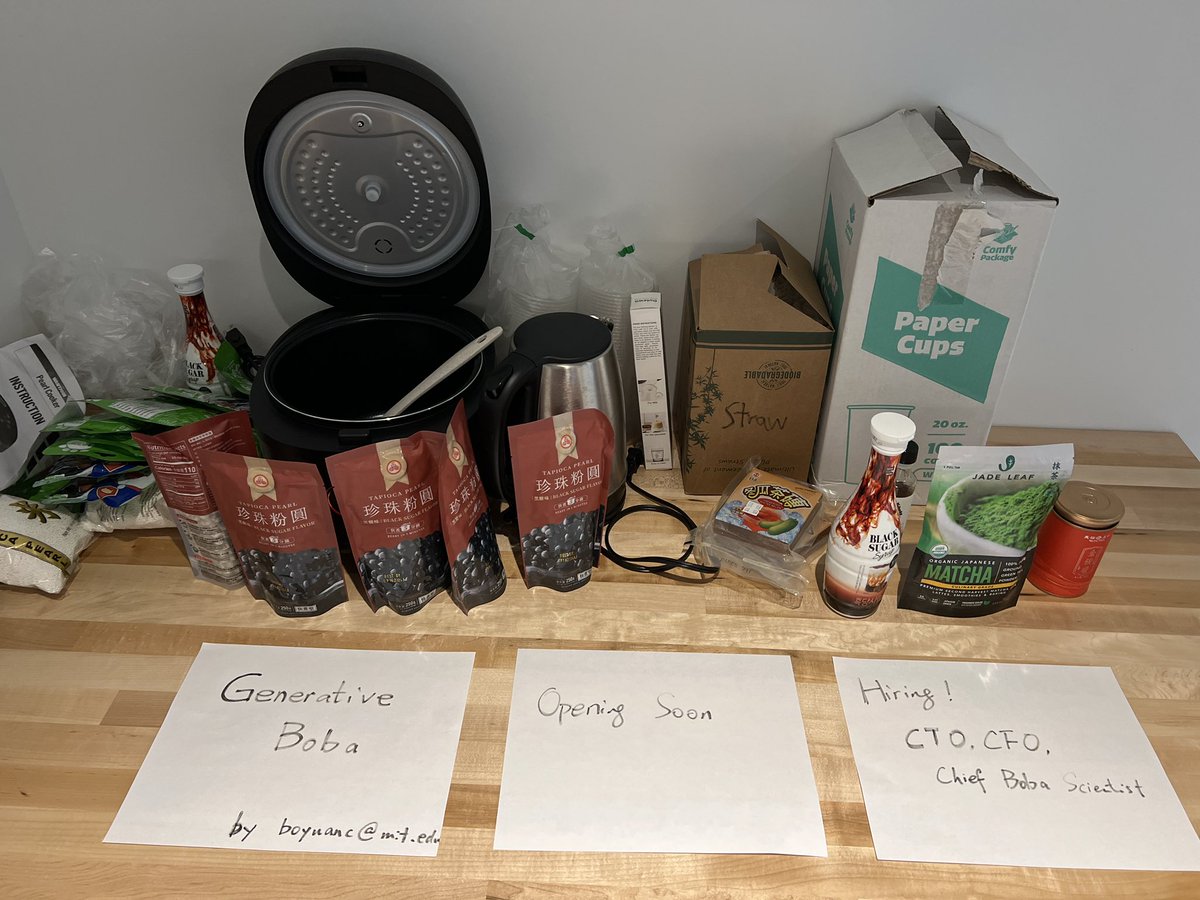

I defended my PhD @MITEECS this week! Thanks to everyone who came out. And thanks especially to @nishanthkumar23 who not only managed the Zoom, but also got me this amazing gift…

Join us at the **5th** Embodied AI Workshop in Seattle on June 18 #CVPR2024 🔊 Exciting invited speakers, 🥷 6+ challenges (with cash prizes), 📋 poster session, and 👽 lots more! 📜Workshop paper submission details coming soon Website: embodied-ai.org

I'm happy to announce the fifth annual Embodied AI Workshop at CVPR! Our theme is open world embodied AI, and we'll have six speakers on how we can make that happen, and six challenges where approaches can prove that they can! #cvpr2024 #embodiedai embodied-ai.org