Albert Gu @_albertgu

assistant prof @mldcmu. chief scientist @cartesia_ai. leading the ssm revolution. Joined December 2018

Tweets 231 | Followers 9K | Following 90 | Likes 874

HF's dedication to true open source is such a blessing 🙏

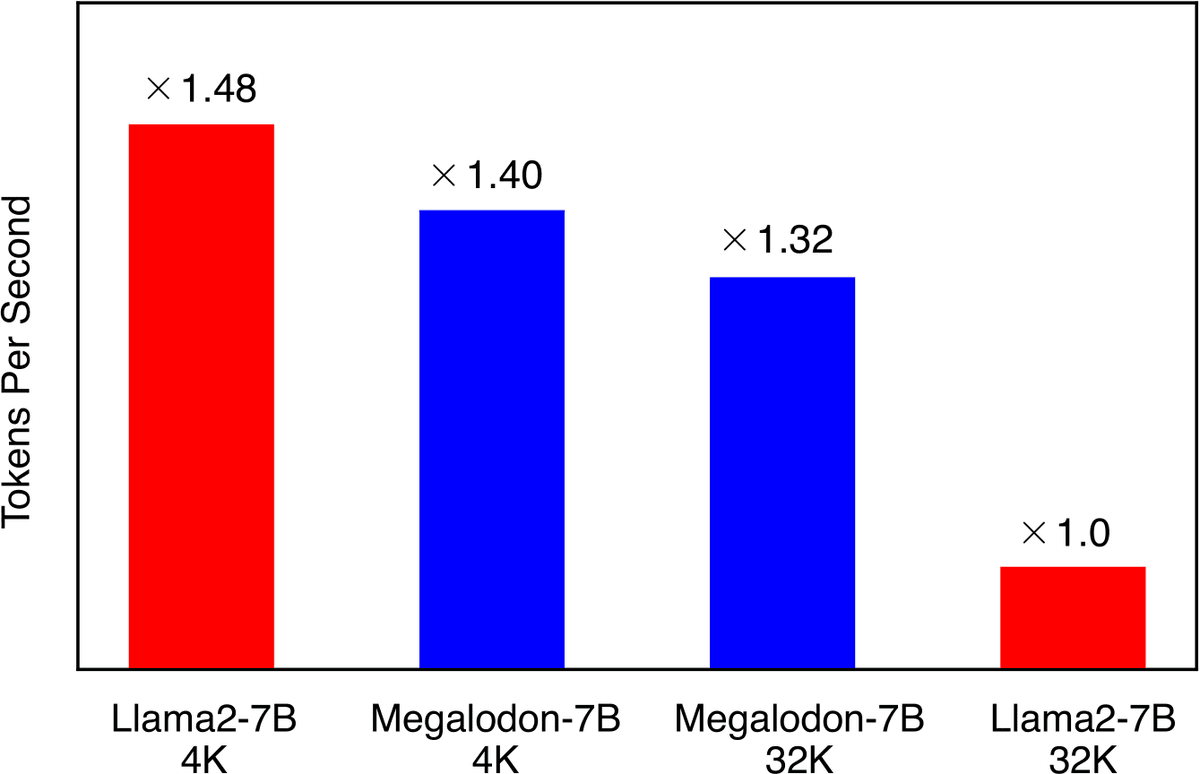

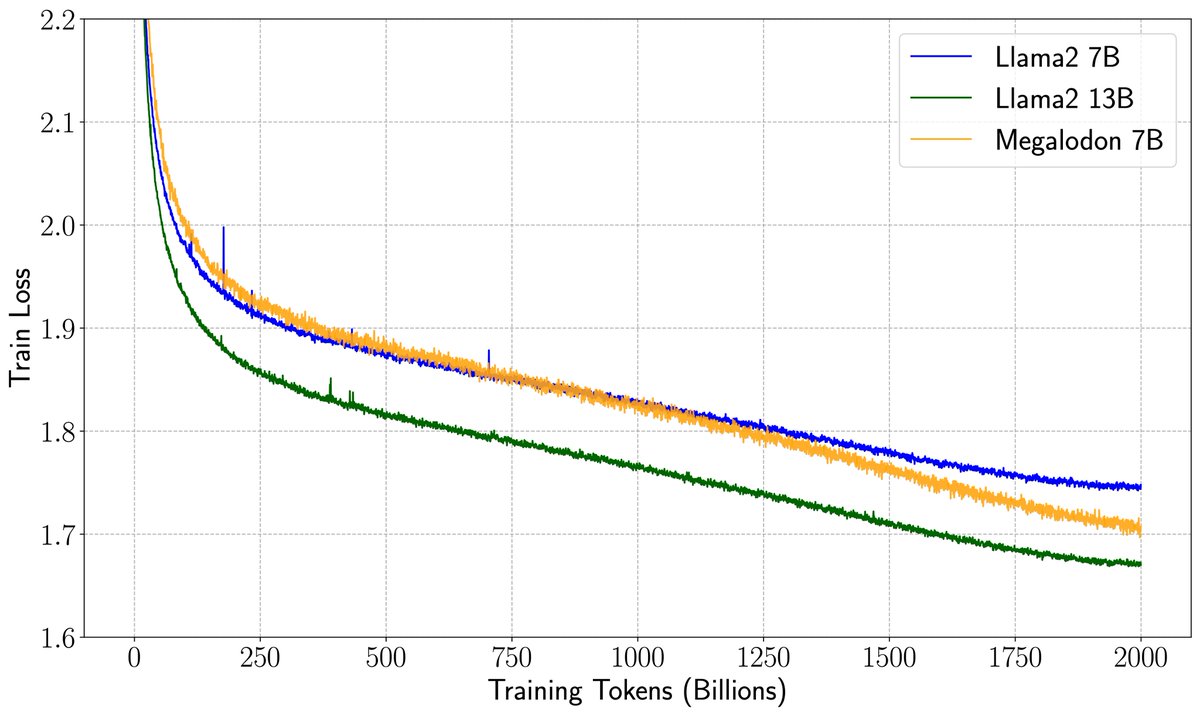

How to enjoy the best of both worlds of efficient training (less communication and computation) and inference (constant KV-cache)? We introduce a new efficient architecture for long-context modeling – Megalodon that supports unlimited context length. In a controlled head-to-head…

Releasing RecurrentGemma - one of the strongest 2B-param open models designed for fast inference on long sequences and massive throughput! Both pre-trained and IT checkpoints available + code - try them out here! Code: github.com/google-deepmin… Weights: kaggle.com/models/google/…

📢 Announcing our new speculative decoding framework Sequoia ❗️❗️❗️ It can now serve Llama2-70B on one RTX4090 with half-second/token latency (exact❗️no approximation) 🤔Sounds slow as a sloth 🦥🦥🦥??? Fun fact😛: DeepSpeed -> 5.3s / token; 8 x A100: 25ms / token (costs 8 x…

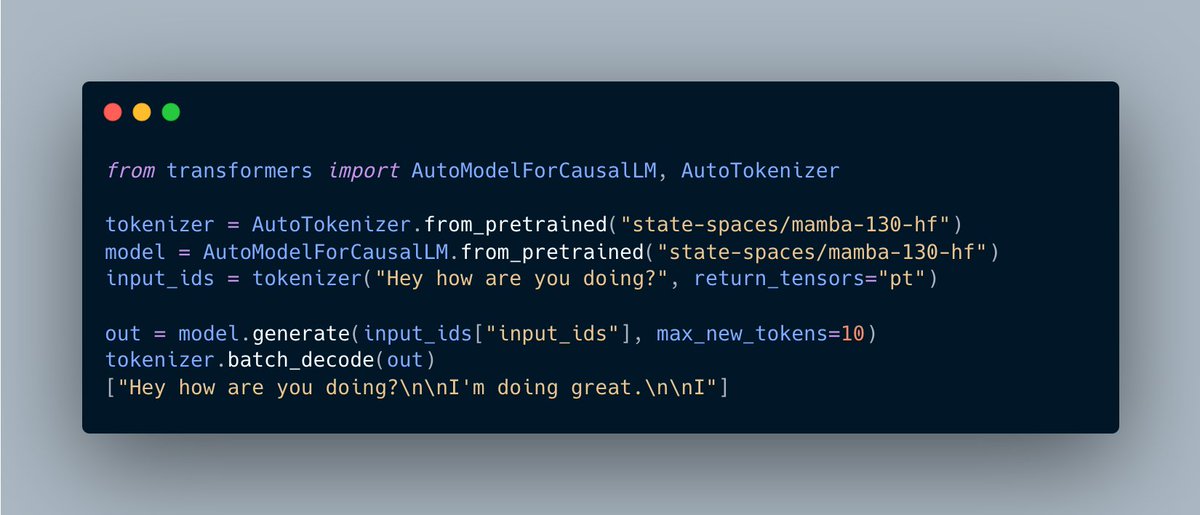

`mamba` is now available in transformers. PEFT finetuning example: gist.github.com/ArthurZucker/7… Thanks @_albertgu and @tri_dao for this brilliant model! 🚀 and the amazing `mamba-ssm` kernels powering this!

Let's implement Mamba in Triton. (srush.github.io/annotated-mamb…) A gentle, (but mildly obsessive) tutorial notebook about GPU programming in Triton. We're getting close to mere mortals being able to do this 😂

I'm beyond thrilled to make two pretty substantial announcements: 1. We just released a brand new open source Guardrails Hub, with 50+ validators and more coming! 2. We raised a seed funding round to execute on our vision of open source AI reliability 🧵

This was a really inspiring and, IMO, milestone paper in the space of efficient attention / RNNs! coming soon: a strong generalization of this from the view of SSMs ;)

there's quite a nice writeup hidden in the github: github.com/jzhang38/LongM…

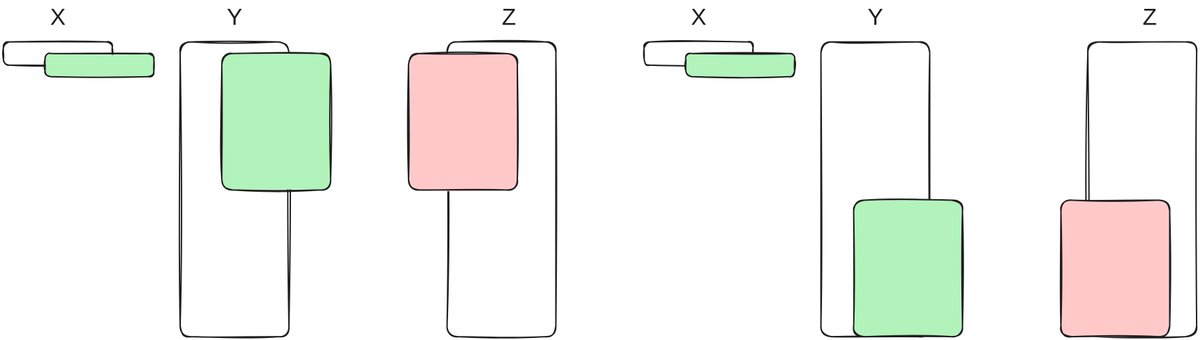

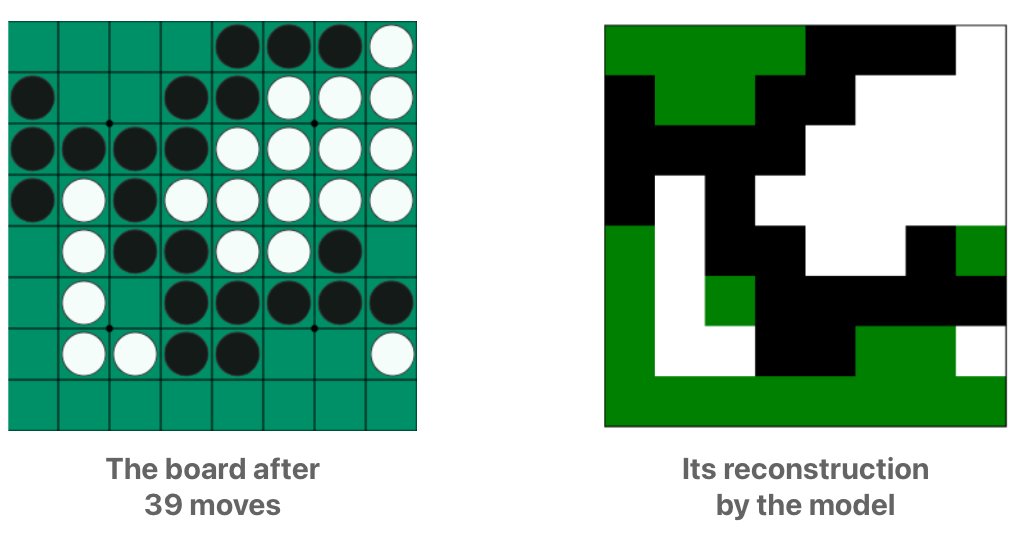

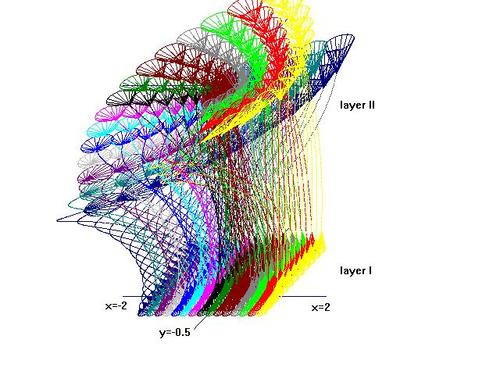

How does Mamba fare in the OthelloGPT experiment? Let's compare it to the Transformer 👇🧵

Our preprint on non-Euclidean representation learning for biological pathway graphs is now on arXiv arxiv.org/abs/2401.15478 This research was led by @dannymcneela who is co-advised by @fredsala. 1/

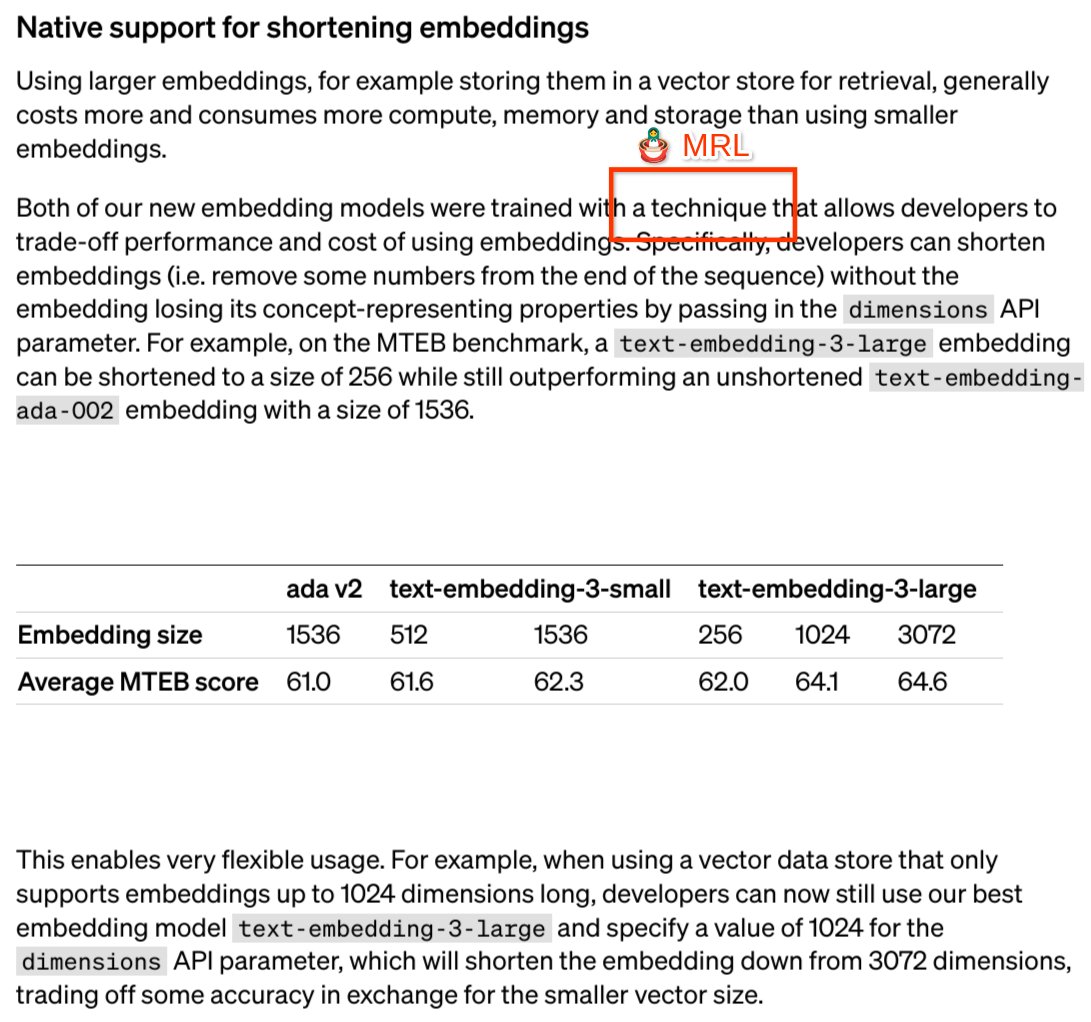

Pace of progress in AI is lightning! @OpenAI released MRL style text embeddings, mirroring our NeurIPS '22 paper (w/ awesome folks from UW and Harvard). However, as an advocate of open science, I am a bit disappointed with rebranding to "shortening embs" without ref to MRL 1/n
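For context on what "shortening" an MRL-style embedding means: Matryoshka Representation Learning trains embeddings so that a prefix of the vector is itself a usable lower-dimensional embedding, so shortening amounts to keeping the first k coordinates and re-normalizing before comparing vectors with cosine similarity. The NumPy sketch below assumes exactly that; the function names and the random toy vectors are hypothetical, and the tweet does not say how any provider's API implements it.

```python
import numpy as np

def shorten_embedding(emb: np.ndarray, k: int) -> np.ndarray:
    """Keep the first k dimensions of an embedding and L2-normalize the result.

    Assumes an MRL-style embedding whose prefixes are meaningful on their own.
    """
    prefix = emb[..., :k]
    return prefix / np.linalg.norm(prefix, axis=-1, keepdims=True)

def cosine(u: np.ndarray, v: np.ndarray) -> float:
    """Cosine similarity between two vectors."""
    return float(u @ v / (np.linalg.norm(u) * np.linalg.norm(v)))

# Toy 8-dimensional "embeddings" (random, for illustration only).
rng = np.random.default_rng(0)
a, b = rng.normal(size=8), rng.normal(size=8)
a4, b4 = shorten_embedding(a, 4), shorten_embedding(b, 4)
print(a4.shape, np.linalg.norm(a4))  # (4,) and a norm of about 1.0
print(cosine(a4, b4))
```

Re-normalizing after truncation is a design choice: it keeps dot products on the shortened vectors equal to cosine similarities.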

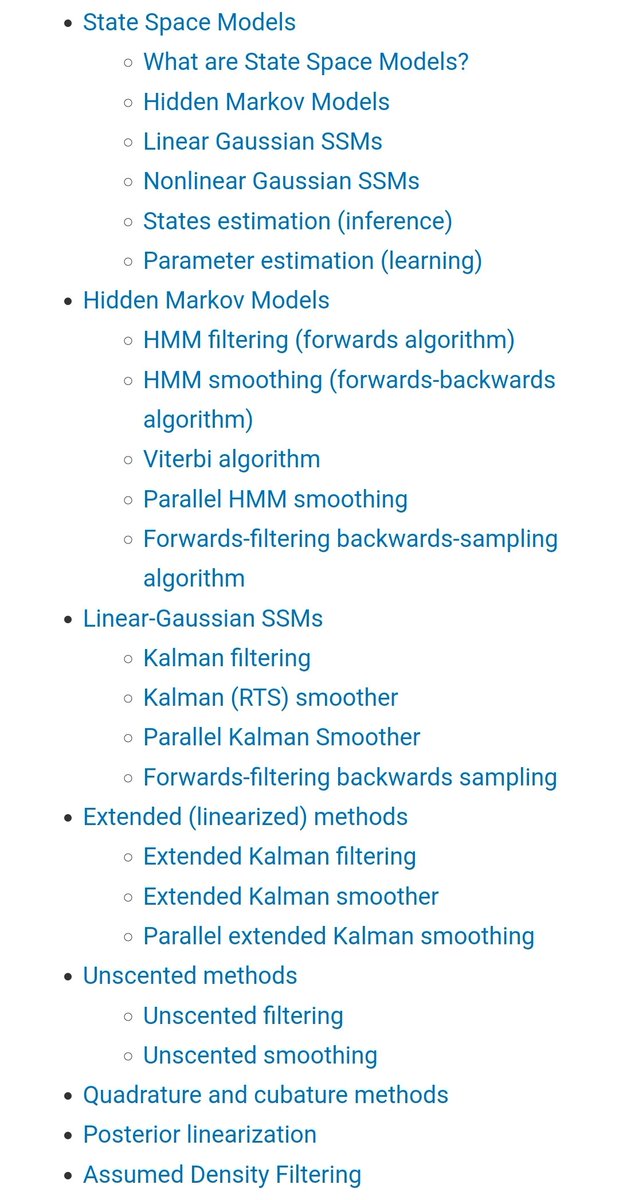

Check out these comprehensive resources on classical state space models! @sirbayes and @scott_linderman have done foundational work on these "statistical SSMs" long before I introduced "structured SSMs" for deep learning (S4, Mamba, etc). It's useful to disambiguate these terms!
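The deterministic core shared by classical and structured SSMs is the discrete linear recurrence h_t = A h_{t-1} + B x_t, y_t = C h_t. The sketch below runs it step by step with NumPy. The matrices are arbitrary toy values; it shows neither the noise terms of statistical SSMs, nor S4's structured parameterization, nor Mamba's input-dependent (selective) parameters. It does illustrate why per-step inference cost is constant: only the fixed-size state h is carried forward.

```python
import numpy as np

def ssm_scan(A, B, C, xs):
    """Run a discrete linear SSM: h_t = A h_{t-1} + B x_t, y_t = C h_t.

    A: (N, N) state matrix, B: (N,) input vector, C: (N,) output vector,
    xs: (T,) scalar input sequence. Returns ys: (T,).
    """
    h = np.zeros(A.shape[0])  # fixed-size state, independent of sequence length
    ys = []
    for x in xs:
        h = A @ h + B * x     # update the state with the new input
        ys.append(C @ h)      # read out a scalar output
    return np.array(ys)

# Toy example: a 2-dimensional state with a diagonal (decaying) A.
A = np.diag([0.5, 0.9])
B = np.array([1.0, 1.0])
C = np.array([1.0, -1.0])
xs = np.array([1.0, 0.0, 0.0])
print(ssm_scan(A, B, C, xs))
```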

🔥 The Mamba ecosystem is GROWING! ⚡️ Thanks to @mrm8488 we have Mamba Hermes model! Works absolutely fine on Google Colab!!!

MambaByte: Token-free Selective State Space Model Outperforms SotA subword Transformers while being tokenizer agnostic and achieving fast inference thanks to linear inference cost arxiv.org/abs/2401.13660
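"Token-free" here means the model consumes raw UTF-8 bytes, so the vocabulary is fixed at 256 symbols and no tokenizer has to be trained; the cost is longer sequences, which is where linear-cost inference helps. A minimal illustration of byte-level input in plain Python (the helper names are hypothetical; this is not MambaByte's code):

```python
def to_byte_ids(text: str) -> list[int]:
    """Map text to a sequence of byte IDs in [0, 255] via UTF-8."""
    return list(text.encode("utf-8"))

def from_byte_ids(ids: list[int]) -> str:
    """Invert to_byte_ids."""
    return bytes(ids).decode("utf-8")

text = "Mamba 🐍"
ids = to_byte_ids(text)
print(len(text), len(ids))  # 7 characters, 10 bytes (the emoji takes 4)
assert from_byte_ids(ids) == text
```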

My TEDAI talk from Oct 2023 is now live: go.ted.com/percyliang It was a hard talk to give: 1. I memorized it - felt more like giving a piano recital than an academic talk. 2. I wanted it to be timeless despite AI changing fast…still ok after 3 months. Here’s what I said:

CMU is hiring a system administrator for our GPU cluster (350 GPUs and growing!): cmu.wd5.myworkdayjobs.com/en-US/CMU/deta… Come work with us to help build out compute infrastructure to enable new discoveries in AI, large language models, and beyond!

Excited to co-organize this ICLR 2024 workshop! I think better data will be crucial for the next big advances in foundation models. The submission date is Feb 3 - details at sites.google.com/view/dpfm-iclr…

Percy Liang @percyliang

49K Followers 408 Following Associate Professor in computer science @Stanford @StanfordHAI @StanfordCRFM @StanfordAILab @stanfordnlp | cofounder @togethercompute | Pianist

(((ل()(ل() 'yoav))).. @yoavgo

46K Followers 2K Following

Rosanne Liu @savvyRL

33K Followers 966 Following Cofounded & running @ml_collective. Host of Deep Learning Classics & Trends. Research at Google DeepMind. DEI/DIA Chair of ICLR & NeurIPS. Writing https://t.co/IbycyGfnDR

Sasha Rush @srush_nlp

52K Followers 464 Following Professor, Programmer in NYC. Cornell Tech, Hugging Face 🤗 https://t.co/cZl0wTfqGz

Eric Jang @ericjang11

69K Followers 3K Following physical AGI at 1X. Author of "AI is Good for You" https://t.co/eFg4WXhg0p

Delip Rao e/σ @deliprao

46K Followers 5K Following Busy inventing the shipwreck. @Penn. Past: @johnshopkins, @UCSC, @Amazon, @Twitter ||Art: #NLProc, Vision, Speech, #DeepLearning || Life: 道元, improv, running 🌈

Gautam Kamath @thegautamkamath

44K Followers 505 Following Assistant Prof of CS @UWaterloo, Faculty @VectorInst, Canada @CIFAR_News AI Chair. Co-EiC @TmlrOrg. I lead @TheSalonML. Privacy, robustness, machine learning.

Kyunghyun Cho @kchonyc

61K Followers 2K Following a combination of a mediocre scientist, a mediocre manager, a mediocre advisor & a mediocre PC at @nyuniversity (@CILVRatNYU) & @genentech (@PrescientDesign).

Soumith Chintala @soumithchintala

186K Followers 877 Following Cofounded and lead @PyTorch at Meta. Also dabble in robotics at NYU. AI is delicious when it is accessible and open-source.

Graham Neubig @gneubig

31K Followers 586 Following Associate professor at CMU, studying natural language processing and machine learning.

Horace He @cHHillee

23K Followers 449 Following Working at the intersection of ML and Systems @ PyTorch "My learning style is Horace twitter threads" - @typedfemale

Dan Roy @roydanroy

45K Followers 2K Following ML / AI researcher, emphasis on theory. Research Director and Canada CIFAR AI Chair, @VectorInst Professor, @UofT (Statistics/CS)

Sam Bowman @sleepinyourhat

35K Followers 3K Following AI alignment + LLMs at NYU & Anthropic. Views not employers'. No relation to @s8mb. I think you should join @givingwhatwecan.

Sara Hooker @sarahookr

39K Followers 7K Following I lead @CohereForAI. Formerly Research @Google Brain @GoogleDeepmind. ML Efficiency at scale, LLMs, @trustworthy_ml. Changing spaces where breakthroughs happen.

Tom Goldstein @tomgoldsteincs

23K Followers 2K Following Professor at UMD. AI security & privacy, algorithmic bias, foundations of ML. Follow me for commentary on state-of-the-art AI.

Zachary Lipton @zacharylipton

59K Followers 2K Following Professor: CMU/@acmi_lab, CTO / CSO: @AbridgeHQ, Creator: @d2l_ai & https://t.co/QQt98VNLUp, Relapsing 🎷

Miles Brundage @Miles_Brundage

43K Followers 10K Following Policy research at @openai. I mostly tweet about AI, animals, and sci-fi. He/him. Views my own.

Karan Goel @krandiash

3K Followers 882 Following Founder @cartesia_ai, Machine Learning PhD at @StanfordAILab, CMU / IIT-Delhi alum.

Naomi Saphra @nsaphra

7K Followers 1K Following Waiting on a robot body. ML/NLP. All opinions are universal and held by both employers and family. Same username on every lifeboat off this sinking ship.

Ananya Kumar @ananyaku

4K Followers 470 Following Researcher at @openai Previously PhD at Stanford University (@StanfordAILab) advised by Percy Liang and Tengyu Ma

Rustem S @vigosun

452 Followers 2K Following

Adi Mashiach, MD @AdiMashiach

42 Followers 272 Following Husband, father, divergent thinker, innovator, entrepreneur, pianist. I put my heart into what I do. I want to change the world. Founding partner @MesiaVentures

Michal Kubizna @michalkubizna

15 Followers 19 Following

Marcus Kim @bebekim

297 Followers 4K Following

Flávia Beatriz Cruz @flaviabcruz

6 Followers 1K Following

Ali Naqvi @1NaqviAli

2 Followers 12 Following First-year MSc student at McMaster University studying evolutionary computation and ml.

Sadman Sakib @sadmankiba

15 Followers 135 Following CS grad student @WisconsinCS. Exploring systems and networking.

Atharv Prajod Padmala.. @APadmalayam

6 Followers 74 Following Freshman at University of Wisconsin-Madison, Econ+Data Sci, Economics Researcher. Check out my website!

Xuhui Zhang @XuhuiZhangXHZ

4 Followers 230 Following

Davide Fiocco @monodavide

107 Followers 397 Following

Zichen Wang @ZichenWangPhD

240 Followers 444 Following ML Scientist @awscloud. #MachineLearning, Healthcare, Biology. Formerly PhD & research faculty @IcahnMountSinai. Views are my own.

Lannister @Lannister998

5 Followers 99 Following

Noah Liniger @NoahLiniger

17 Followers 134 Following

Hao Chang @_Hao_Chang_

10 Followers 542 Following

Isaac Thompson @IsaacThss

114 Followers 1K Following Research Assistant at The Alan Turing Institute, researching reinforcement learning and autonomous cyber defence.

NiciTheFox @FoxNici

73 Followers 499 Following

Woomin Song @WoominSong

3 Followers 34 Following

Mark 🇺🇳 @mcraddock

3K Followers 3K Following Techie. Built VH1, G-Cloud, Unified Patent Court, UN Global Platform. Saved UK Economy £12Bn. Now building AI stuff #WardleyMap #PromptEngineer #MLOps #DataOps

Arif Ahmad @arif_ahmad_py

252 Followers 7K Following All things AI, Computer Science and Circuits! Prev. @GoogleAI

Himanshu Beniwal @HimanshuBeniwaI

261 Followers 896 Following #NLP #ML #AI PhD Student at @lingoiitgn, @iitgn. Alum: @cup_bathinda & @h_garhwal

Ali Behrouz @behrouz_ali

912 Followers 848 Following Ph.D. Student @cornell, interested in machine learning.

AceAzycrdfz @AceAzycrdfz

31 Followers 259 Following

Gargi Panda @GargiPanda8561

0 Followers 4 Following

Chee Jiang @qiyi1019

24 Followers 160 Following 👩💻 Passionate about AI, data science & emerging tech 🚀

Clément Perroud @ClmentPerroud1

0 Followers 20 Following

Peng Zhou @zp_pengzhou

29 Followers 54 Following Ph.D. candidate at @ucsc focused on Neuromorphic Computing

Rishu Kumar @rishdotuk

548 Followers 537 Following A student of language @EM_LCT (@ufal_cuni & @LstSaar). Machine Translation and Summarisation #NLProc

James Torre @jpt401

732 Followers 3K Following Root node of the web of threads: https://t.co/ifH80GcLpo

Henry Yin @HenryYin_

176 Followers 158 Following Resident @agihouse_org ; Co-founder @MericoDev; Creator of Apache DevLake; CS PhD Dropout @ UC Berkeley

Bor-Sung Liang @BorSungLiang

16 Followers 84 Following

Pietro Mazzaglia @pietromazzaglia

248 Followers 251 Following AI and Robotics researcher. Currently interning at MILA/SNow Research. Previously interned at Qualcomm and Dyson

Yuchen Zhu @yuchen4975

25 Followers 160 Following ML PhD student @GeorgiaTech. I work on diffusion models and mean field games.

YunpyoAn @YunpyoAn

448 Followers 847 Following Ph.D. Candidate in Artificial intelligence at UNIST B.S. at UNIST, major CompSci / I usually post Korean...

kodjk @jokhaniya

0 Followers 599 Following

FSM @fsm_top

8 Followers 105 Following

Scott Nelson @ScottNelso94591

1 Followers 20 Following

Lingjie Chen @LingjieChen127

31 Followers 45 Following

SUKO @zhiyun_xu20

1 Followers 410 Following

WENHAN YANG @WenhanYang0315

227 Followers 434 Following Ph.D. in CS, UCLA. Interested in self-supervised learning, including exploring Graph CL, CL robustness and multimodal CL robustness.

Yi Tay @YiTayML

29K Followers 97 Following chief scientist / cofounder @RekaAILabs 🫠 past: research scientist @google brain 🤯 currently learning to be a dad 🍼

Sasha Rush @srush_nlp

52K Followers 464 Following Professor, Programmer in NYC. Cornell Tech, Hugging Face 🤗 https://t.co/cZl0wTfqGz

Gautam Kamath @thegautamkamath

44K Followers 505 Following Assistant Prof of CS @UWaterloo, Faculty @VectorInst, Canada @CIFAR_News AI Chair. Co-EiC @TmlrOrg. I lead @TheSalonML. Privacy, robustness, machine learning.

Kyunghyun Cho @kchonyc

61K Followers 2K Following a combination of a mediocre scientist, a mediocre manager, a mediocre advisor & a mediocre PC at @nyuniversity (@CILVRatNYU) & @genentech (@PrescientDesign).

Soumith Chintala @soumithchintala

186K Followers 877 Following Cofounded and lead @PyTorch at Meta. Also dabble in robotics at NYU. AI is delicious when it is accessible and open-source.

Christopher Manning @chrmanning

126K Followers 115 Following Director, @StanfordAILab. Assoc. Director, @StanfordHAI. Founder, @stanfordnlp. Prof. CS & Linguistics, @Stanford. IP @aixventureshq. 🇦🇺 Do #NLProc & #AI. 👋

Horace He @cHHillee

23K Followers 449 Following Working at the intersection of ML and Systems @ PyTorch "My learning style is Horace twitter threads" - @typedfemale

Dan Roy @roydanroy

45K Followers 2K Following ML / AI researcher, emphasis on theory. Research Director and Canada CIFAR AI Chair, @VectorInst Professor, @UofT (Statistics/CS)

Zachary Lipton @zacharylipton

59K Followers 2K Following Professor: CMU/@acmi_lab, CTO / CSO: @AbridgeHQ, Creator: @d2l_ai & https://t.co/QQt98VNLUp, Relapsing 🎷

Karan Goel @krandiash

3K Followers 882 Following Founder @cartesia_ai, Machine Learning PhD at @StanfordAILab, CMU / IIT-Delhi alum.

Ananya Kumar @ananyaku

4K Followers 470 Following Researcher at @openai Previously PhD at Stanford University (@StanfordAILab) advised by Percy Liang and Tengyu Ma

Beidi Chen @BeidiChen

6K Followers 351 Following Asst. Prof @CarnegieMellon, Visiting Researcher @Meta, Postdoc @Stanford, Ph.D. @RiceUniversity, Large-Scale ML, a fan of Dota2.

Ben Recht @beenwrekt

26K Followers 365 Following optimization. machine learning. uc berkeley. I blog at https://t.co/fkJujOPsJb The world won't end.

Yisong Yue @yisongyue

19K Followers 3K Following Machine Learning @Caltech (@YueLabCaltech). AI for invention at @AsariAILabs. Autonomous Driving at https://t.co/riZHAmvcAr. Senior Program Chair @iclr_conf.

Ferenc Huszár @fhuszar

40K Followers 1K Following Secular Bayesian. Associate Professor in Machine Learning @Cambridge_CL. Talent aficionado at https://t.co/RbJkoLguey Alum of @Twitter, Magic Pony and @Balderton

Tri Dao @tri_dao

18K Followers 364 Following Incoming Asst. Prof @PrincetonCS, Chief Scientist @togethercompute. Machine learning & systems.

Durk Kingma @dpkingma

35K Followers 347 Following Deep learning, mostly generative models. Prev. Google Brain/DeepMind, founding team @OpenAI. Inventor of the VAE, Adam optimizer, among other things. ML PhD.

Ruiqi Gao @RuiqiGao

5K Followers 512 Following Research scientist @Google DeepMind. Generative modeling, representation learning.

Neal Wu @WuNeal

15K Followers 390 Following Building @cognition_labs. Previously @tryramp, @GoogleBrain, @Harvard, competitive programming (featured in @Wired). Created https://t.co/pihw5AGvbV.

Cartesia @cartesia_ai

1K Followers 8 Following Cartesia is training next-gen foundation models with subquadratic deep learning architectures. Sign up for early access at https://t.co/c5og0yF1Pz

Karina Nguyen @karinanguyen_

12K Followers 648 Following AI research & eng @AnthropicAI, prev. intern @nytimes, @square, @dropbox

長崎ペンギン水.. @NagasakiPengin

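26K Followers 0 Following This is the official Twitter page of the Nagasaki Penguin Aquarium. Of the 18 penguin species living in the world, we keep 9, the most of any facility in Japan, about 180 birds in total. We are an aquarium specializing in penguins. At the "Fureai Penguin Beach" (penguin contact beach), the only one in Japan, penguins swim in the natural sea.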

Machine Learning Dept.. @mldcmu

17K Followers 370 Following The top education and research institution in the 🌎 for #AI and #machinelearning | Research → https://t.co/jUD0hZ8SFx | Learn more ↓

Neovim: vim out of th.. @Neovim

36K Followers 16 Following Posts refer to Nvim prerelease/development build. Usage/configuration questions: https://t.co/mfM6kFnSfE

Sherrie Wang @sherwang

3K Followers 762 Following Computing & data for sustainability. 🛰🌾 Assistant Professor @MITMechE & @mitidss PI, Earth Intelligence Lab

Smerity @Smerity

32K Followers 2K Following gcc startup.c -o ./startup. Focused on machine learning & society. Previously @Salesforce Research via @MetaMindIO. @Harvard '14, @Sydney_Uni '11. 🇦🇺 in SF.

Mark Tenenholtz @marktenenholtz

114K Followers 544 Following Head of AI @PredeloHQ. XGBoost peddler, transformer purveyor.

Stephen Wright @madsjw

2K Followers 1K Following

Piero Molino @w4nderlus7

2K Followers 428 Following

Mayee Chen @MayeeChen

1K Followers 419 Following CS PhD student @StanfordAILab @HazyResearch, undergrad @princeton. she/her 🎃

Pang Wei Koh @PangWeiKoh

3K Followers 790 Following Assistant professor at @uwcse. Formerly @StanfordAILab @GoogleAI @Coursera. 🇸🇬

Daniel Levy @daniellevy__

1K Followers 373 Following @openai -- previously: phd @stanford, intern at @googlebrain and @facebook, alumni @polytechnique @lyceellg.

Sharon Y. Li @SharonYixuanLi

7K Followers 657 Following Assistant Professor @WisconsinCS. Formerly postdoc @StanfordAILab, Ph.D. @Cornell. Making AI safe and reliable for the open world.

Hongyang R. Zhang @HongyangZhang

670 Followers 451 Following Asst Prof of #computerscience @Northeastern. PhD @Stanford. Postdoc @Penn.

Nimit Sohoni @nimit_sohoni

286 Followers 1K Following

Steven Brunton @eigensteve

45K Followers 1K Following Teaches math to engineers: https://t.co/TJ5i3Pg678 Professor @UW researching #MachineLearning for #Dynamics and #Control, especially for #FluidDynamics.

Caglar Gulcehre @caglarml

4K Followers 1K Following ML Researcher Prof @ EPFL, PI @ CLAIRE lab Ex: Staff Research Scientist @ Deepmind, MSR, IBM Research Follow me on Mastodon: https://t.co/LZ5sWt7Asj

Sang Michael Xie @sangmichaelxie

3K Followers 709 Following PhD student @StanfordAILab @StanfordNLP @Stanford advised by Percy Liang and Tengyu Ma. Prev: visiting @GoogleAI Brain, BS, MS Stanford ‘17

Stefano Ermon @StefanoErmon

13K Followers 362 Following Associate Professor of #computerscience @Stanford #AI #ML #Sustainability

Fred Sala @fredsala

985 Followers 546 Following Assistant Professor @WisconsinCS. Research scientist @SnorkelML. Prev. Stanford postdoc. Working on machine learning & information theory.

Sen Wu @Wu_Sen

171 Followers 146 Following

Kristy Choi @kristychoi_

501 Followers 630 Following CS @Stanford. Previously CS-Stats @Columbia. Machine Learning, Bayesian statistics, generative models.

hazyresearch @HazyResearch

7K Followers 1K Following A research group in @StanfordAILab working on the foundations of machine learning & systems. https://t.co/JHK58TDorG Ostensibly supervised by Chris Ré

Stanford AI Lab @StanfordAILab

136K Followers 318 Following The Stanford Artificial Intelligence Laboratory (SAIL), a leading #AI lab since 1963. ⛵️🤖 Emmy-winning video: https://t.co/lV9smZTC1m

Aditya Grover @adityagrover_

8K Followers 412 Following CS Prof @UCLA. AI, ML, Climate. Prev: Postdoc @berkeley_ai, PhD @StanfordAILab, bachelors @IITDelhi.

Chelsea Finn @chelseabfinn

69K Followers 384 Following Asst Prof of CS & EE @Stanford. PhD from @Berkeley_EECS, EECS BS from @MIT

Tengyu Ma @tengyuma

25K Followers 512 Following Assistant professor at Stanford; Co-founder of Voyage AI (https://t.co/wpIITHLgF0) ; Working on ML, DL, RL, LLMs, and their theory.

OpenAI @OpenAI

3.4M Followers 0 Following OpenAI’s mission is to ensure that artificial general intelligence benefits all of humanity. We’re hiring: https://t.co/dJGr6LgzPA

Berkeley Lab @BerkeleyLab

92K Followers 2K Following Official account of Lawrence Berkeley National Laboratory (LBNL), a U.S. Department of @ENERGY #nationallab. #BringingScienceSolutionsToTheWorld

Paul Graham @paulg

1.9M Followers 772 Following

Deep Leffen Bot @DeepLeffen

111K Followers 2 Following Originally a GPT-3 model trained on @TSM_Leffen tweets and /r/smashbros. Now a GPT-4 model trained on my own posts. All content is heavily curated and prompted.

ICLR 2024 @iclr_conf

41K Followers 40 Following International Conference on Learning Representations #ICLR2024. SPC is @yisongyue and GC is @_beenkim OpenReview: https://t.co/OD1sg0r7F8

❓Wanna host a Llama2-7B-128K (14GB weight + 64GB KV cache) at home🤔 📢 Introducing TriForce! 🚀Lossless Ultra-Fast Long Seq Generation: training-free Spec Dec! 🌟 🔥 TriForce serves with 0.1s/token on 2 RTX4090s + CPU – only 2x slower on an A100 (~55ms on chip), 8x faster…
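The memory figures in the TriForce tweet can be reproduced with back-of-the-envelope arithmetic. This sketch assumes a nominal 7B parameters and the Llama-2-7B shape (32 layers, hidden size 4096, keys and values cached for every head), 2-byte (fp16/bf16) storage, and a 128K = 131,072-token context; it ignores activations and framework overhead.

```python
# Back-of-the-envelope memory estimate for a Llama-2-7B-style model.
# Assumptions: nominal 7B params, 32 layers, hidden size 4096, K and V cached
# for all heads, 2 bytes per value (fp16/bf16), 128K-token context.
N_PARAMS = 7e9
N_LAYERS = 32
D_MODEL = 4096
BYTES_PER_VALUE = 2
CONTEXT = 128 * 1024  # 131,072 tokens

weight_bytes = N_PARAMS * BYTES_PER_VALUE
# Each token stores one key vector and one value vector of size D_MODEL per layer.
kv_bytes_per_token = 2 * N_LAYERS * D_MODEL * BYTES_PER_VALUE
kv_cache_bytes = kv_bytes_per_token * CONTEXT

print(f"weights:  {weight_bytes / 1e9:.0f} GB")            # 14 GB
print(f"KV/token: {kv_bytes_per_token / 2**20:.1f} MiB")   # 0.5 MiB
print(f"KV cache: {kv_cache_bytes / 2**30:.0f} GiB")       # 64 GiB
```

Under these assumptions the "64GB" KV cache is exactly 64 GiB, about 4.5x the weights, which is why long-context serving on consumer GPUs is dominated by the cache rather than the model.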

Interesting trend in AI: the best results are increasingly obtained by compound systems, not monolithic models. AlphaCode, ChatGPT+, Gemini are examples. In this post, we discuss why this is and emerging research on designing & optimizing such systems. bair.berkeley.edu/blog/2024/02/1…

📢 Releasing TRI's open-source Mamba-7B trained on 1.2T tokens of RefinedWeb! Mamba-7B is the largest fully recurrent Mamba model trained and is a state-of-the-art recurrent LLM. 🚀🚀🚀 huggingface.co/TRI-ML/mamba-7…

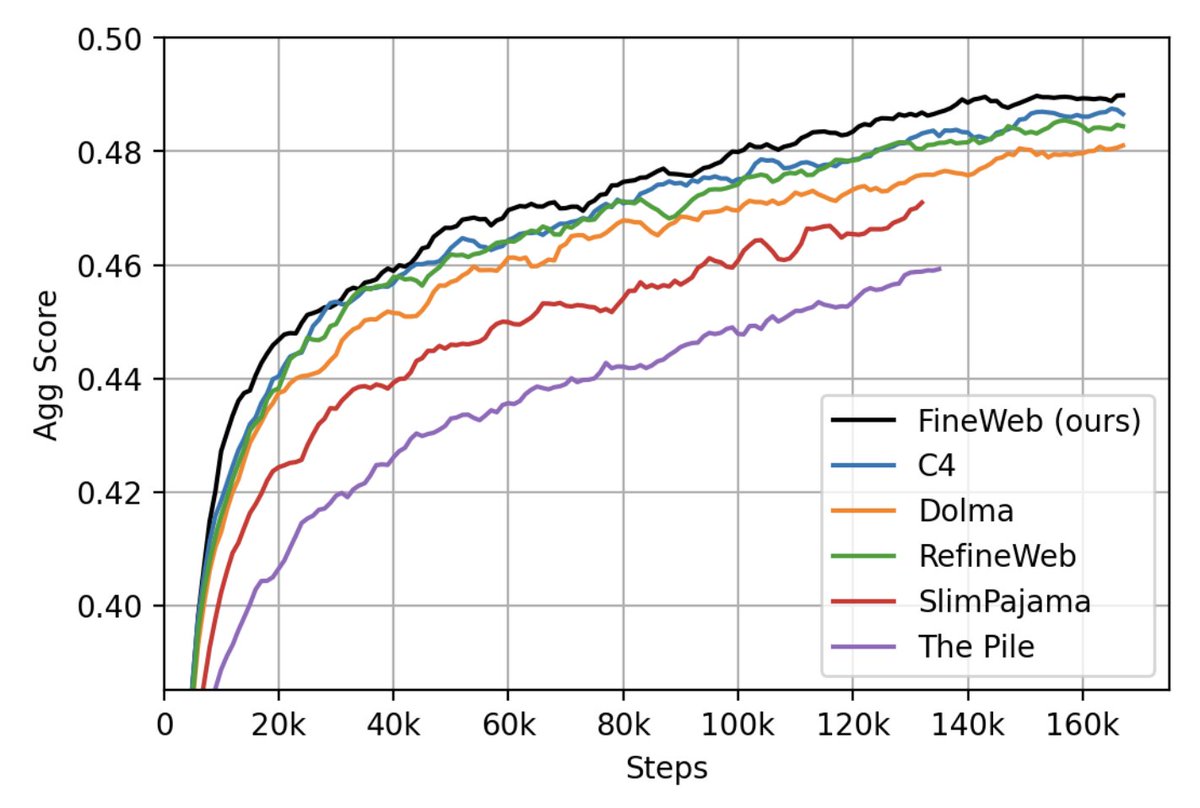

Llama3 was trained on 15 trillion tokens of public data. But where can you find such datasets and recipes?? Here comes the first release of 🍷Fineweb. A high quality large scale filtered web dataset outperforming all current datasets of its scale. We trained 200+ ablation…

We have just released 🍷 FineWeb: 15 trillion tokens of high quality web data. We filtered and deduplicated all CommonCrawl between 2013 and 2024. Models trained on FineWeb outperform RefinedWeb, C4, DolmaV1.6, The Pile and SlimPajama!

The live updating image generator on meta.ai/?icebreaker=im… is a pretty sick UX.

research is an immensely taxing endeavour. hours spent doing IC work, debugging and what not. a paper is a canvas for researchers to express themselves after all the hard work, at the end of the day. it's my art. at least let me paint the way i want to paint. The reason why i am…

In retrospect, UL2 was a wild paper. «Yeah so this just sort of cooked, turns out it's more optimal than Chinchilla, idk check it out». This almost farcically casual tone makes me suspect that Yi was thinking in detail about founding Reka at the moment. arxiv.org/abs/2205.05131

Another Mamba-Attention hybrid that looks very strong! These two layers are complementary: Mamba is great at compressing information, and a few attention layers are enough to retrieve from the context for in-context learning.

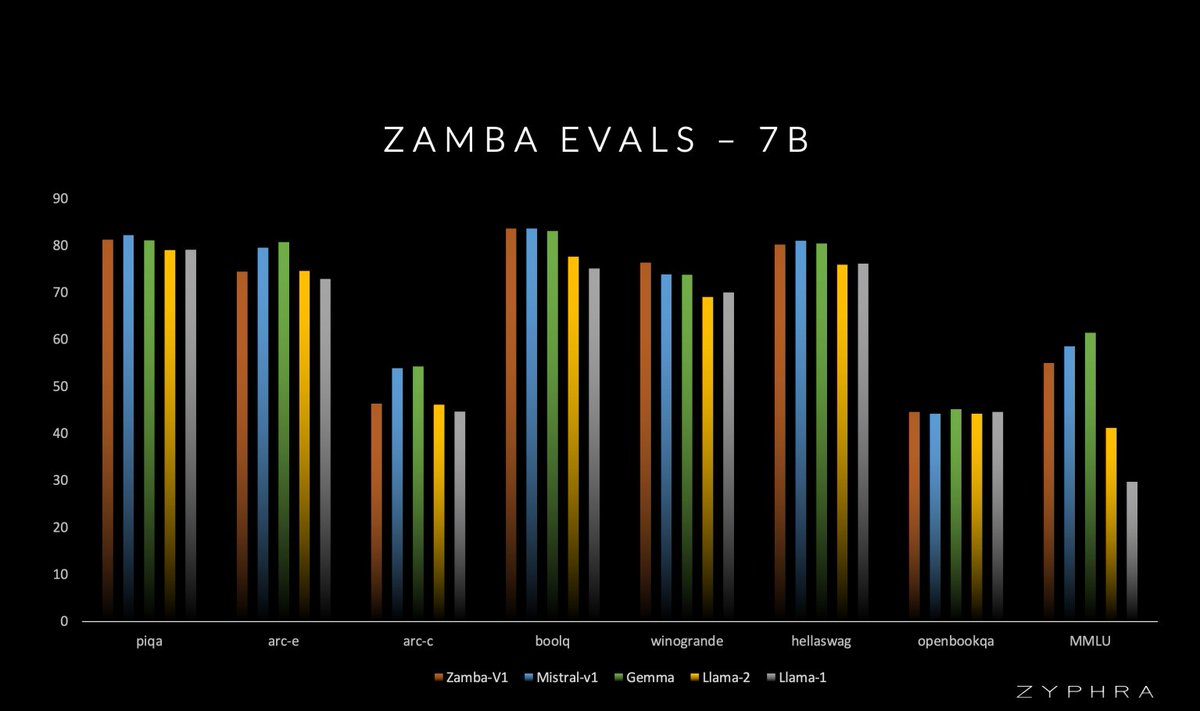

Zyphra is pleased to announce Zamba-7B: - 7B Mamba/Attention hybrid - Competitive with Mistral-7B and Gemma-7B on only 1T fully open training tokens - Outperforms Llama-2 7B and OLMo-7B - All checkpoints across training to be released (Apache 2.0) - Achieved by 7 people, on 128…

This is the first time we see a new architecture making an 🍎-to-🍎 comparison at scale with Llama-7B trained on the same 2T tokens and winning (unlimited context length, lower ppl, constant kv at inference, ...)! Very excited to be part of the team! Thanks for the lead @violet_zct…

How to enjoy the best of both worlds of efficient training (less communication and computation) and inference (constant KV-cache)? We introduce a new efficient architecture for long-context modeling – Megalodon that supports unlimited context length. In a controlled head-to-head…

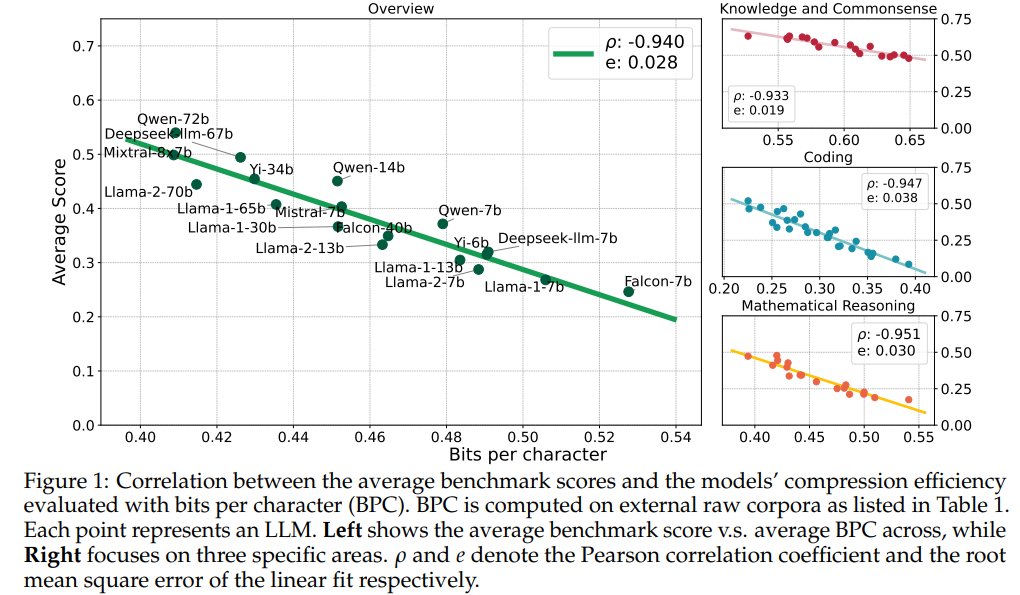

Is this not just saying that performance on LLM benchmarks improves with perplexity (you fit the data better)? Generative models are already trained to essentially compress their training set.

Compression Represents Intelligence Linearly LLMs' intelligence – reflected by average benchmark scores – almost linearly correlates with their ability to compress external text corpora repo: github.com/hkust-nlp/llm-… abs: arxiv.org/abs/2404.09937
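The link between LM loss and compression is direct: a model with mean cross-entropy L nats per token can, with arithmetic coding, encode text at about L / ln 2 bits per token, and its perplexity is exp(L). The sketch converts a per-token loss into these quantities; the loss of 2.0 and the ratio of 0.25 tokens per byte are made-up placeholders, not numbers from the paper.

```python
import math

def loss_to_metrics(loss_nats_per_token: float, tokens_per_byte: float):
    """Convert mean cross-entropy (nats/token) to perplexity, bits/token, bits/byte."""
    perplexity = math.exp(loss_nats_per_token)
    bits_per_token = loss_nats_per_token / math.log(2)  # nats -> bits
    bits_per_byte = bits_per_token * tokens_per_byte    # normalize by text length
    return perplexity, bits_per_token, bits_per_byte

# Hypothetical example values.
ppl, bpt, bpb = loss_to_metrics(2.0, 0.25)
print(f"perplexity={ppl:.2f}, bits/token={bpt:.3f}, bits/byte={bpb:.3f}")
```

Bits per byte is the tokenizer-independent version, which is what makes compression comparable across models with different vocabularies.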

I am concerned about this too. I am already quite unsatisfied with the current quality of reviews, and I am hoping that at least we will not ask high school students to review main conference papers as well. Or are we already at the bottom of the dip, so it can't get worse? 🤷♂️

This year, we invite high school students to submit research papers on the topic of machine learning for social impact! See our call for high school research project submissions below. buff.ly/43TiTdD

Glad to have made a small contribution to it!

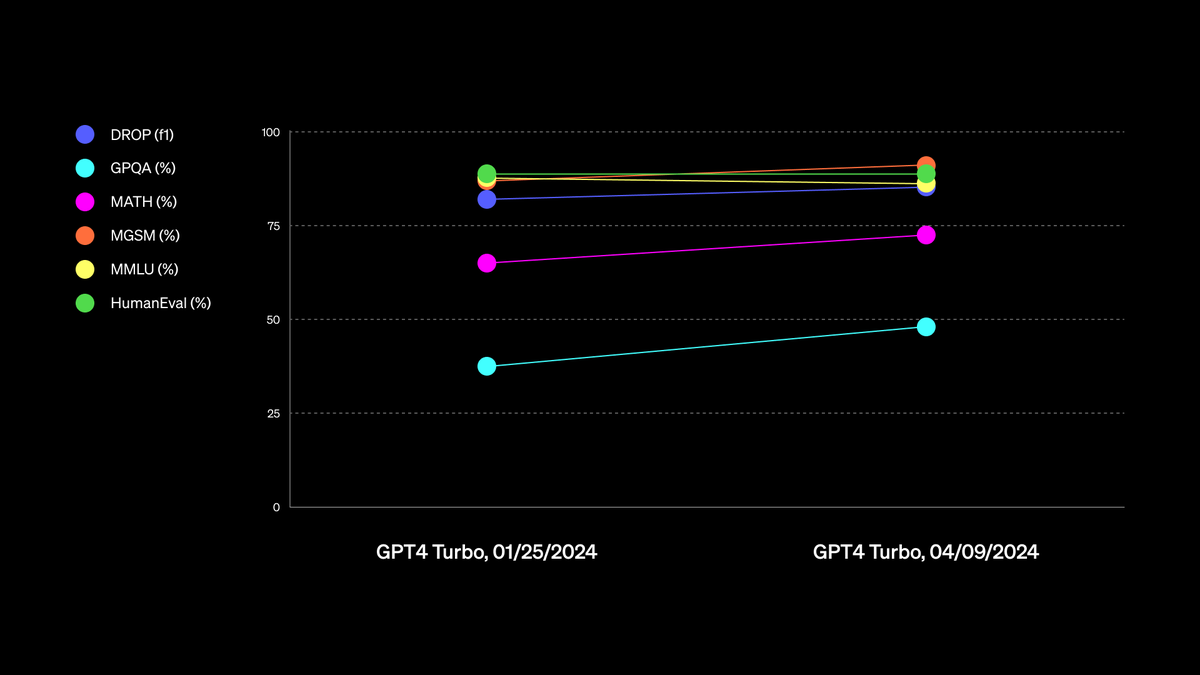

Our new GPT-4 Turbo is now available to paid ChatGPT users. We’ve improved capabilities in writing, math, logical reasoning, and coding. Source: github.com/openai/simple-…

@arimorcos The data is important, and possibly more important than the arch for downstream perf, but you can't ignore the inductive biases coming from the architecture. For example, the gains in training efficiency or, with Griffin, in inference speed. These can't be achieved from data alone.

I am very happy to give this tutorial next week! We will discuss several developments on sub-quadratic long-context architectures such as SSMs, CKConv, Hyena and Mamba. Thank you @Ellis_Amsterdam for having me!

💥 We are excited that @davidwromero (@nvidia) will talk about 'Beyond Transformers: Exploring Subquadratic Long-Context Architectures' at the upcoming Deep Thinking Hour Tutorial! 📅 Thu, April 11th ⏰️09:00 - 11:00 📍L1.01 of @Lab42UvA Come and deep think with us! 🏍

A Mamba Primer (w/ Yair Schiff youtube.com/watch?v=dVH1dR… ) Mamba is a nice jumping off point to summarize foundational ideas in sequence modeling, parallel algorithms, continuous-time representations, and GPU aware algorithms. We try to put these together in the context of LMs.

Happy to announce that I am promoted to full professor. Big shout out to my former and current students without whom this would never be possible. purdue.edu/newsroom/purdu…

This is my Brexit. x.com/srush_nlp/stat…

When Jamba-Instruct beats GPT-4 and Claude in Chatbot Arena, how do we resolve the Transformers bet?

Excited to see Numbers Station named amongst the top 100 most promising private AI companies of 2024! Thanks for the recognition @CBinsights

Boom: Meet the 2024 AI 100 cbi.team/4aoH7Pu From new AI architectures to precision manufacturing, this year’s winners are tackling some of the hardest challenges across industries.

Recently, Karpathy tweeted that *increasing* the size of his matmul made it run faster. But... why? Many people seem content to leave this as black magic. But luckily, this *can* be understood! Here's a plot of FLOPs achieved for square matmuls. Let's explain each curve! 1/19

The most dramatic optimization to nanoGPT so far (~25% speedup) is to simply increase vocab size from 50257 to 50304 (nearest multiple of 64). This calculates added useless dimensions but goes down a different kernel path with much higher occupancy. Careful with your Powers of 2.
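The padding in the nanoGPT tweet is just rounding the vocabulary size up to the next multiple of 64. A minimal sketch of that arithmetic (the helper name `pad_to_multiple` is hypothetical, not from nanoGPT); it says nothing about why the padded shape hits a faster kernel.

```python
def pad_to_multiple(n: int, multiple: int = 64) -> int:
    """Round n up to the nearest multiple of `multiple`."""
    return ((n + multiple - 1) // multiple) * multiple

vocab_size = 50257  # GPT-2 BPE vocabulary size
padded = pad_to_multiple(vocab_size, 64)
print(padded)               # 50304
print(padded - vocab_size)  # 47 extra rows that no real token ever uses
```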

Let's talk about a detail that occurs during PyTorch 2.0's codegen - tiling. In many cases, tiling is needed to generate efficient kernels. Even for something as basic as torch.add(A, B), you might need tiling to be efficient! But what is tiling? And when is it needed? (1/13)
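To make "tiling" concrete, take an elementwise add where one operand is read transposed, A + B.T: a naive row-by-row loop walks B with a large stride. A tiled loop instead processes the output in small square blocks, so each operand is touched in a compact chunk. The sketch below only shows the loop structure; the tile size of 32 and the function name are arbitrary choices, it makes no claim about PyTorch 2.0's actual codegen, and in NumPy it will not be faster than `A + B.T`.

```python
import numpy as np

def tiled_add_transpose(A: np.ndarray, B: np.ndarray, tile: int = 32) -> np.ndarray:
    """Compute A + B.T by iterating over square tiles of the output."""
    n, m = A.shape
    assert B.shape == (m, n)
    out = np.empty_like(A)
    for i0 in range(0, n, tile):          # tile rows of the output
        for j0 in range(0, m, tile):      # tile columns of the output
            i1, j1 = min(i0 + tile, n), min(j0 + tile, m)
            # A is read along rows and B along columns, but only within one
            # small block; in a compiled kernel both blocks would fit in fast memory.
            out[i0:i1, j0:j1] = A[i0:i1, j0:j1] + B[j0:j1, i0:i1].T
    return out

A = np.arange(70 * 50, dtype=np.float64).reshape(70, 50)
B = np.ones((50, 70))
assert np.allclose(tiled_add_transpose(A, B), A + B.T)
```

Shapes that are not multiples of the tile size (70 x 50 here) are handled by clipping the last tile with `min`.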