David Krueger @DavidSKrueger

Cambridge faculty - AI alignment, deep learning, and existential safety. Formerly Mila, FHI, DeepMind, ElementAI, AISI. davidscottkrueger.com Joined November 2011

Tweets: 3K

Followers: 13K

Following: 4K

Likes: 3K

I've had a great time collaborating with Hidenori and very excited to see this new initiative launched!

Money can't buy happiness. Just like an H100. H100 = happiness.

I've finally uploaded the thesis on arXiv: arxiv.org/abs/2404.12150 It ties together a bunch of papers exploring some alternatives to RL for finetuning LMs, including pretraining with human preferences and minimizing KL divergences from pre-defined target distributions. https://t.co/jq03eRcEhK

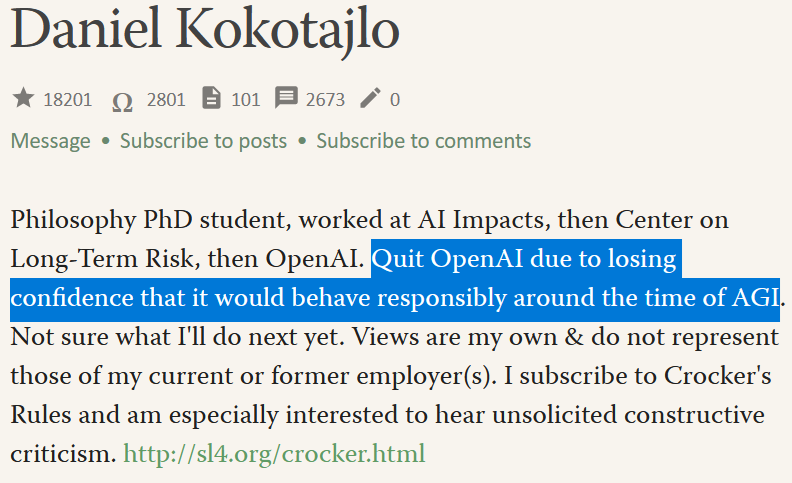

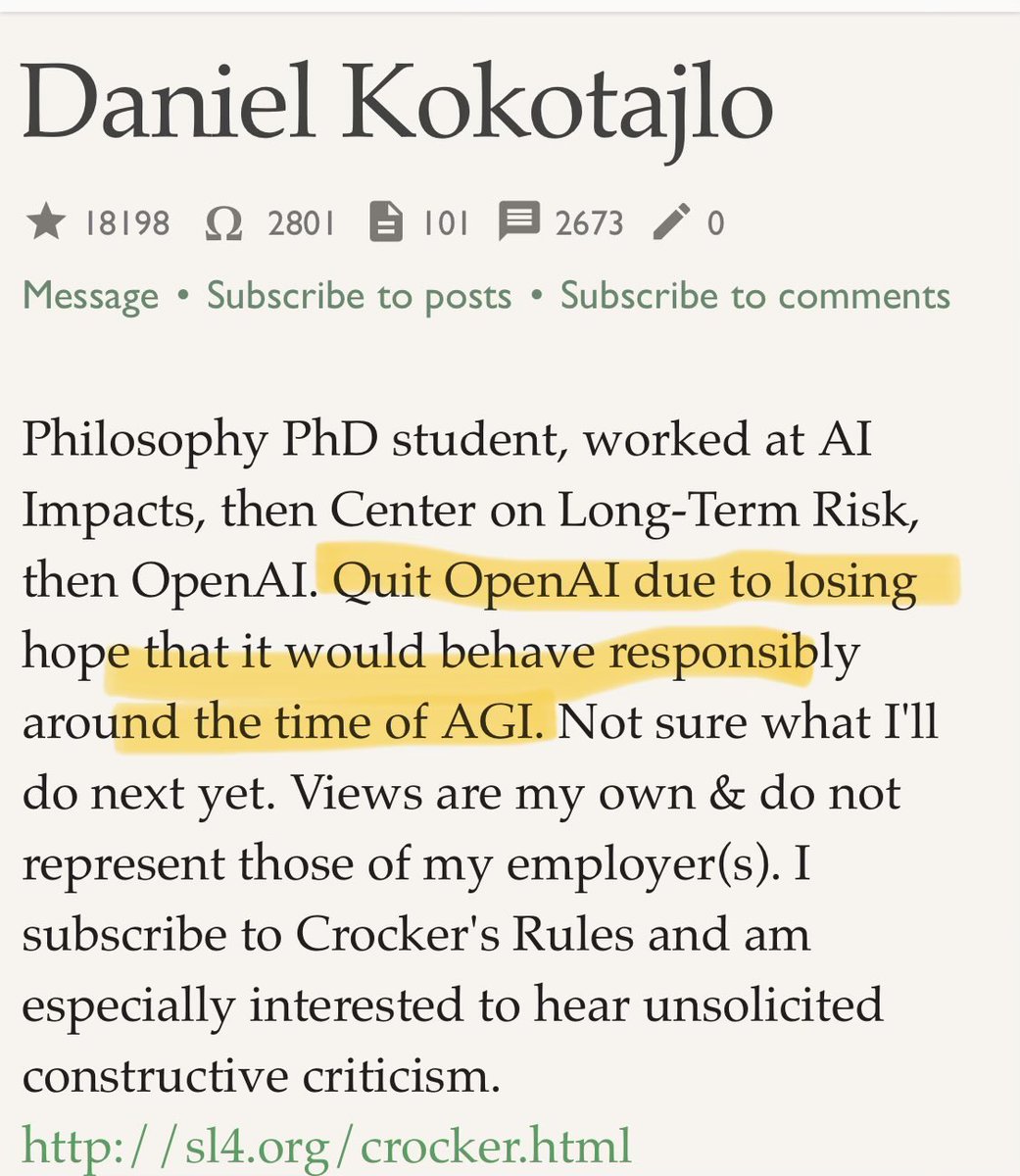

OpenAI are losing their best and most safety-focused talent. Daniel Kokotajlo of their Governance team quits "due to losing confidence that it would behave responsibly around the time of AGI" Last year he wrote he thought there was a 70% chance of an AI existential catastrophe. https://t.co/KJVJX24wkU

The Future of Humanity Institute is no more. futureofhumanityinstitute.org

LLM-agents seem to be coming fast! But are we ready? Probably not. In our new agenda paper 👇, we discuss what novel safety and alignment challenges are likely to arise as we go from ‘chat assistants’ to ‘agents’. 🧵⬇️

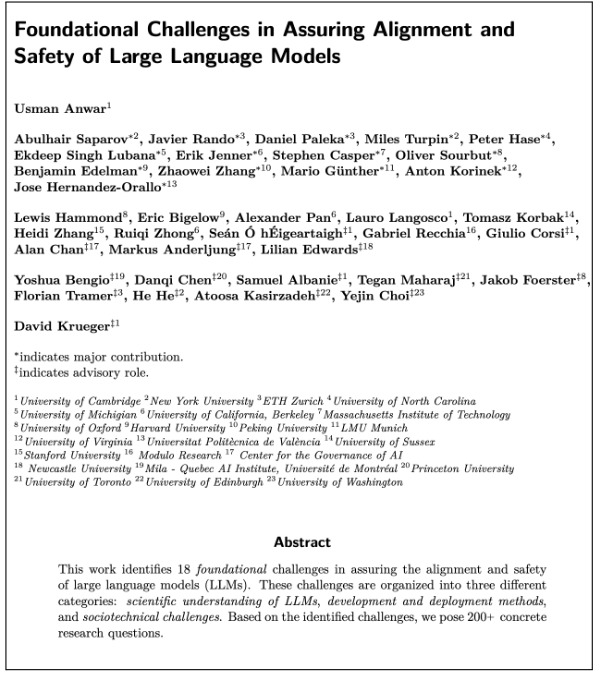

Big congrats to my student @usmananwar391 for this!

Excited to contribute to a new Agenda Paper on LLM Safety & value alignment. A critical question is what values, and whose values, should we encode in our LLMs? This paper calls for more research on both the technical & social aspects of this important issue. 🧵⬇️

🚨 It's time to rethink finetuning in LLMs 🚨 For something so fundamental to how we train LLMs, finetuning is mysterious and has a lot of shortcomings. 🧵⬇️ x.com/davidskrueger/…

To appropriately account for risks from LLMs, we must correctly estimate and understand their capabilities. But there exist several fundamental challenges that make this difficult. We discuss some of these in our new agenda paper (section 2.2) https://t.co/5O6kw1el4y

🚨 Data poisoning attacks are a challenge for LLM Safety! 🚨 LLMs are trained on data from untrusted sources that can be manipulated to inject vulnerabilities. Research on data poisoning on LLMs is limited. We summarize the key challenges 🧵⬇️ x.com/davidskrueger/…

I'm delighted to have contributed to this new Agenda Paper on AI Safety * Governance of LLMs can be a v powerful tool in helping assure their safety and alignment. It could complement and *substitute* for technical interventions. But LLM governance is currently challenging! 🧵⬇️

Usman deserves so much credit for leading and organizing this effort! It's been a long haul, but I'm really happy with the result!

Super excited about the release of this 🔥agenda paper on “Foundational Challenges in Assuring Alignment and Safety of LLMs!” that has been described as ‘particularly comprehensive' and 'epic piece of work' in private reviews. 😅

Can you spot a common thread among the 'dangerous' capabilities listed in arxiv.org/abs/2305.15324? No? They're all closely linked to various forms of 'reasoning'! This is why, in our agenda paper👇, we call for understanding the reasoning capabilities of LLMs! 🧵⬇️

Recently we took a dive into the state of the art in LLM robustness, jailbreaks and prompt injection for the Challenges paper. Here are the key research problems we expect to be impactful if you can solve them! (1/8) x.com/davidskrueger/…

Malicious use of LLMs (and other AIs) is a recurring theme across AI Safety literature. However, there is a surprising lack of RIGOROUS research on this! In our new agenda paper, we identify these research gaps and call for developing a rigorous understanding of these risks.🧵⬇️

📢 *Call for research into 𝘀𝗼𝗰𝗶𝗮𝗹 𝗮𝗻𝗱 𝗲𝗰𝗼𝗻𝗼𝗺𝗶𝗰 𝗶𝗺𝗽𝗮𝗰𝘁𝘀 of LLMs* LLMs are an amazing technology but might have drastic socioeconomic impacts on our society. In our new agenda paper, we emphasize the urgency of understanding and mitigating these impacts!🧵⬇️

Interpretability methods could be an incredibly powerful tool for assuring the alignment of AI systems, but they currently have a long way to go. We outline *11 open challenges* for interpretability research and propose actionable research questions to tackle them. 🧵⬇️

In-context learning (ICL) is AMAZING but also RISKY as evidenced by the recent Anthropic work on many-shot jailbreaking. However, we may be able to minimize these risks by understanding ICL better. In our new agenda paper, we list this as the FIRST foundational challenge! 🧵⬇️

Richard Ngo @RichardMCNgo

35K Followers 1K Following What would we need to understand in order to design an amazing future? Figuring that out @openai

Neel Nanda @NeelNanda5

13K Followers 89 Following Mechanistic Interpretability lead @DeepMind. Formerly @AnthropicAI, independent. In this to reduce AI X-risk. Neural networks can be understood, let's go do it!

Rob Miles (✈️ Tok.. @robertskmiles

18K Followers 789 Following Explaining AI Alignment to anyone who'll stand still for long enough, on YouTube and Discord. Music, movies, microcode, and high-speed pizza delivery

Stefan Schubert @StefanFSchubert

28K Followers 2K Following Philosophy, psychology, and effective altruism.

Amanda Askell @AmandaAskell

26K Followers 653 Following Philosopher & ethicist teaching models to be good @AnthropicAI. Personal account. All opinions come from my training data.

Rob Bensinger ⏹️ @robbensinger

8K Followers 302 Following Comms @MIRIBerkeley. RT = increased vague psychological association between myself and the tweet.

Peter Wildeford @peterwildeford

10K Followers 367 Following Pro forecaster w/ good track record. Seeking to understand + manage risks from advanced AI systems. - Co-CEO @RethinkPriors - Chief Advisory Executive @iapsAI

Miles Brundage @Miles_Brundage

43K Followers 10K Following Policy research at @openai. I mostly tweet about AI, animals, and sci-fi. He/him. Views my own.

Jack Clark @jackclarkSF

68K Followers 5K Following @AnthropicAI, ONEAI OECD, co-chair @indexingai, writer @ https://t.co/3vmtHYkaTu Past: @openai, @business @theregister. Neural nets, distributed systems, weird futures

Ethan Caballero is bu.. @ethanCaballero

8K Followers 2K Following ML PhD student @Mila_Quebec ; previously @GoogleDeepMind

Irina Rish @irinarish

9K Followers 994 Following prof UdeM/Mila; Canada Excellence Research Chair; AAI Lab head https://t.co/UzlrC7ZrGF; INCITE project PI https://t.co/0rV7szd7rH; CSO https://t.co/XDhj6MEtUj

Jan Leike @janleike

44K Followers 322 Following ML Researcher, co-leading Superalignment @OpenAI. Optimizing for a post-AGI future where humanity flourishes.

Sam Bowman @sleepinyourhat

35K Followers 3K Following AI alignment + LLMs at NYU & Anthropic. Views not employers'. No relation to @s8mb. I think you should join @givingwhatwecan.

Rosanne Liu @savvyRL

33K Followers 968 Following Cofounded & running @ml_collective. Host of Deep Learning Classics & Trends. Research at Google DeepMind. DEI/DIA Chair of ICLR & NeurIPS. Writing https://t.co/IbycyGfnDR

Dan Roy @roydanroy

45K Followers 2K Following ML / AI researcher, emphasis on theory. Research Director and Canada CIFAR AI Chair, @VectorInst Professor, @UofT (Statistics/CS)

Daniel Eth (yes, Eth .. @daniel_271828

7K Followers 788 Following AI alignment & memes | "known for his humorous and insightful tweets" - Bing/GPT-4 | prev: @FHIOxford

Liu Peng @LiuPengNGP

17 Followers 56 Following

Stacey S10a (🌎/acc.. @metaphdor

653 Followers 773 Following exploring AI for climate; fullstack ShoggOps engineer; convergelady; appreciator of horizons; transparent/collaborative AI; ex-W&B (founding MLE/User 0)

AI Papers Podcast @aipaperspodcast

918 Followers 2K Following A digestible daily update on the latest AI Research Papers. Brought to you by @pocketpodapp

Nir Peled @_nir_peled

67 Followers 319 Following

Max Beverton-Palmer @Maxjb

1K Followers 3K Following Thinking about the future of innovation, cyber, AI, compute and the internet l Responsible tech, online safety, social justice & geopolitics l him/he💜💙🏳️🌈

Hamza Waseem @quantum_physics

2K Followers 1K Following Rhodes Scholar, DPhil @OxfordPhysics, research @QuantinuumQC | quantum foundations, linguistics, applied category theory, philosophy

Praveen Tiwari @tiwariprt

41 Followers 120 Following Stranded on the floating rock, flying through space and time. My views and opinions are mine to hallucinate

Fei Wang @fwang_nlp

920 Followers 2K Following PhD candidate @USC. PhD Fellow @Amazon. Responsible LLM.

Nikita @nikitavoloboev

4K Followers 7K Following Make @LearnAnything_ Learn in public: https://t.co/GbFvuErkYn macOS course: https://t.co/JdbJWru6zG https://t.co/94R8ER7K2h https://t.co/ROkqhyhpEK

Dana Mahmood @deordered

20 Followers 710 Following Fine-tuning AI models oftentimes & practicing philosopher at other times.

Cate Hall @catehall

19K Followers 272 Following executive director @ Astera | born lucky | leave me anonymous feedback: https://t.co/9RtcgMyTHP How to be More Agentic: https://t.co/O3eJsrzTYW

Laura Weidinger @weidingerlaura

2K Followers 303 Following AI Ethics | Researcher at @deepmind | Measuring and Evaluating AI | Philosophy, Psychology | All views my own | London, Berlin

𝕋𝕒𝕥𝕤𝕦.. @tatsuru_kikuchi

365 Followers 3K Following Research Officer at Faculty of Economics, The University of Tokyo. Keywords: Entrepreneur/OpenAI/Quantum/Crypto/Analytics/Consulting. Views are my own.

Hridya Dhulipala @dhulipalahridya

158 Followers 186 Following I was told that if I wanted to keep up with the latest research, this was the place to be.

Juma, Lawrence @lalloyce

1K Followers 4K Following Founder - @zaidagrisol | Program and Operations Mgr w/ 10 years experience | @YALIRLCEA fellow.

Irtiza🥺🤡🔪 @Ertezah

267 Followers 5K Following Restless | Fan of Tom in Tom&Jerry | Foodphilic | Symmetryphile | Favorite bird: Seagull |Lover of Art,Food and Books

Assuredai @AssuredxAI

5 Followers 61 Following

Mr.Li 李先生 @FelixLee2022

16 Followers 105 Following Shenzhen,China. Business travel in USA. Mobile phone/ tablet/ IOT etc.

PhonkNerdyBit @oncs01

17 Followers 332 Following Unleashing CS Inquiry bombs, chill composure, slinging sarcastic comments. Stay sharp, stay savvy. #NoFearInquiry

Tim Lawson @tslwn

46 Followers 146 Following PhD student in AI @BristolUni. Previously physics @Cambridge_Uni and software @graphcoreai. Language, cognition, etc.

a23dxa28xhq90t @peceo5d72

7 Followers 726 Following We first transfer USDT to you TRC20, you return 90% to BEP20, you get 10% , 2K per day Our co hv a large amt of USDT need to from TRC20 convert to BEP20 network

knut g. berdal @KnutBerdal

12K Followers 5K Following World citizen and public servant focused on facts and values, not conventional narratives. Multilateralism/ governance/ technology/ trade/ environment/ health

Tuyen Huynh @hntuyen

46 Followers 1K Following

6vpe @6vpe1

0 Followers 151 Following

Afroz Mohiuddin @afrozenator

1K Followers 5K Following Research Engineer at Google Brain. Interested in Science, Psychology, Investing, Design and generally almost everything. Good Thoughts, Good Words, Good Deeds.

Sunanda Gamage @gamage_sunanda

210 Followers 5K Following PhD candidate at Western University. Interpretable machine learning.

Vikram Dutt @vd_

835 Followers 7K Following

Huy Hoàng Lê @Splendor1811

9 Followers 180 Following

Bogdan Beldiman @bogdanonymous

857 Followers 4K Following Self-Educated Multidisciplinary Experimenter | AI Enthusiast

Linus Härenstam-Niel.. @LinusHNielsen

133 Followers 571 Following PhD student at @TU_Muenchen, working on 3D reconstruction

Aaron Joseph Mathew @AaronJosephMath

45 Followers 931 Following Recommending lots of things to lots of people is my Jam. Think about Data Science and tweet about Rabbits. Tweets are my own and should not be taken seriously

Aaditya ; @Aaditya26082004

526 Followers 7K Following CS'26 • Machine Learning • Open-Source • Web Dev. • Algorithms • Jai Shree Krishna 🦚🪈

Evan Anders @evanhanders

79 Followers 136 Following AI Safety / Mech Interp postdoctoral scholar @KITPUCSB. Former astrophysical fluid dynamicist @Northwestern (CIERA) and @CUBoulder.

Anshu Rani @NeuroEconinPol

48 Followers 893 Following PhD student @up_poznan Neuroeconomics UASD 🇮🇳 PAU 🇮🇳 IARI 🇮🇳 PULS 🇵🇱…

Arjun Srivastava @arjunsriv

63 Followers 1K Following AI, reinforcement learning, distributed systems something new @Woven_ToyotaJP prev - discovery @bookmyshow, cs @IITIOfficial

Sanjukta Bhattacharya @latentsanj

38 Followers 410 Following Latents + (deep) Probabilistic models MRes @EdinburghUni , @InfAtEd

@[email protected].. @MilekPl

2K Followers 2K Following philosopher of cognitive science; Institute of Philosophy & Sociology, Polish Academy of Sciences; some natural language processing and translation

TurquoiseSound @TurquoiseSound

2K Followers 2K Following Societal Tech: Leadership | Human Permaculture | Org Ecosystem Cultivation | https://t.co/hYeoMxjN43 | Institute of Wise Innovation https://t.co/2GkyeQg1Bq

Jack Reacher @JackReach516

69 Followers 1K Following

Othmane @ThisIsOthmane

336 Followers 3K Following Data Science Engineering Manager at Datadog | Passionate about AI, LLMs, ML, Data Science | #DimaRaja #FreeKoulchi | Opinions are my own

Anthropic @AnthropicAI

262K Followers 26 Following We're an AI safety and research company that builds reliable, interpretable, and steerable AI systems. Talk to our AI assistant Claude at https://t.co/aRbQ97uk4d.

Kelsey Piper @KelseyTuoc

27K Followers 544 Following Senior writer at Vox's Future Perfect. [email protected]

EigenGender @EigenGender

6K Followers 659 Following all my posts are shitposts that simultaneously reveal the true nature of reality. large language models; kinda EA; 🏳️⚧️

Lucas Beyer (bl16) @giffmana

56K Followers 447 Following Researcher (Google DeepMind/Brain in Zürich, ex-RWTH Aachen), Gamer, Hacker, Belgian. Mostly gave up trying mastodon as [email protected]

Eric Jang @ericjang11

69K Followers 3K Following physical AGI at 1X. Author of "AI is Good for You" https://t.co/eFg4WXhg0p

Habiba @FreshMangoLassi

4K Followers 523 Following Co-founder @SpiroTB - new TB screening and prevention charity focused on children https://t.co/sBf6ONGMSL

rat king 🐀 @MikeIsaac

193K Followers 6K Following NYT tech reporter. tell me stuff at [email protected] or [email protected] / Text my signal username with tips: MikeIsaac.38

Molly White @molly0xFFF

116K Followers 2K Following crypto researcher & critic, software engineer, wikipedian • @web3isgreat creator • subscribe to my newsletter at https://t.co/WftJCrCfSY

Assemblymember Rebecc.. @BauerKahan

4K Followers 640 Following Assemblymember to the 16th District in the East Bay. Chair of the Committee on Privacy and Consumer Protection and the Select Committee on Reproductive Health.

Uri Berliner @uberliner

30K Followers 542 Following Dad, husband, 3 dogs, 1 kid, an infinity of basketball: edits tech, incubates podcasts, and covers the Unseen Economy for @NPRNews.

Lawrence Livermore Na.. @Livermore_Lab

63K Followers 1K Following U.S. @ENERGY and @NNSAnews laboratory. We use science and technology to make the world a safer place. Verification: https://t.co/29pFxbpHmQ

Elizabeth Cooper @lizcooper28

44 Followers 123 Following Deputy Director of Berkeley Existential Risk Initiative | 4th Tier English Football Fan

Will Petillo @ZenMarmot

56 Followers 75 Following Game developer by trade, running an AI safety podcast as a passion project https://t.co/NOqlHKFNAa https://t.co/2pMer8kLGz

The Information @theinformation

96K Followers 697 Following The leading publication high-powered tech executives and founders read daily.

Stephanie Palazzolo @steph_palazzolo

8K Followers 3K Following Writing AI Agenda @theinformation, texan, & horror movie aficionado // reach me at [email protected] or on Signal at 979-599-8091

CyberScoop @CyberScoopNews

23K Followers 1K Following CyberScoop, a @ScoopNewsGroup property, reports on news and events impacting technology and security.

Jason Hoelscher-Oberm.. @JasonObermaier

110 Followers 518 Following Co-Director @apartresearch | AI safety research lead | Physics PhD | co-designing a better future leave me anonymous feedback: https://t.co/DafiA6vWUc _

lmsys.org @lmsysorg

37K Followers 172 Following Large Model Systems Organization. We created Vicuna and Chatbot Arena! Compare 30+ LLMs (GPT-4/Claude/Llamas) side-by-side at https://t.co/IDFeIDIOtm

Typer Durden @drdevonprice

23K Followers 2K Following 📚Author of Unmasking Autism, Laziness Does Not Exist, and Unlearning Shame.

Johanna Rickne @johannarickne

7K Followers 2K Following Professor of Economics at Stockholm University (SOFI) and Nottingham University. Research fellow at @cepr_org. I study gender economics and political economics.

AlexM @AlexMeinke

41 Followers 99 Following Trying to make the future good rather than bad. Research scientist at Apollo Research.

Tal Arbel @arbtal

1K Followers 367 Following Professor @mcgillu Director @PVG_McGill @McGill_CIM Canada CIFAR AI Chair @MILAMontreal @MELBAjournal @midl_conference @QUBRaTs @MedComVision

Nick Gabrieli @NickGabs01

4 Followers 1 Following

International Dialogu.. @ais_dialogues

151 Followers 0 Following

Caleb Parikh @caleb_parikh

211 Followers 283 Following Running EA Funds and trying to make the future go well. All opinions are my own.

Carson Ezell @thecarsonezell

120 Followers 194 Following Student at Harvard College thinking about AI safety and governance

Agus 🔎 ⏸️~ @austinc3301

3K Followers 4K Following “For small creatures such as we, the vastness is only bearable through love.” AI Safety, open source, and cybersecurity. 🏳️🌈🖖🌱

Digital EU 🇪🇺 @DigitalEU

133K Followers 1K Following We’re all about #tech 🤖✨ @EU_Commission account for #DigitalEU run by DG Connect. 📸 Follow us on Instagram @DigitalEU & LinkedIn @ EU Digital & Tech

William Temple Founda.. @WTempleFdn

4K Followers 4K Following William Temple Foundation | We generate ideas about the impact of religion on civil society, wellbeing, politics, economics, and urban change.

Tim Middleton @TimMiddleton1

1K Followers 2K Following Tutorial Fellow in Theology @RegentsOx. Director @CBSOxford. Associate @OU_TheoReligion, @LSRIOxford & @WTempleFdn. Writing on ecological trauma & deep time.

Forecasting Research .. @Research_FRI

580 Followers 21 Following Research institute focused on developing forecasting methods to improve decision-making on high-stakes issues, led by chief scientist Philip Tetlock.

Chico Camargo @evoluchico

4K Followers 3K Following Lecturer/Assistant Professor in Computer Science @UniofExeter | Social science, complex systems, (cultural) evolution, data science and art.

Valentin Hofmann @vjhofmann

968 Followers 228 Following Young Investigator (Postdoc) @allen_ai @ai2_allennlp | Formerly @UniofOxford @CisLMU @stanfordnlp @GoogleDeepMind

sareh forouzesh @sarehfo

390 Followers 526 Following Senior Mediator and Program Manager at Meridian Institute. Deputy Director of the Just Rural Transition Initiative Secretariat. All views my own.

Lacuna Fund @LacunaFund

2K Followers 323 Following Mobilizing labeled datasets that solve urgent problems in low- and middle-income contexts globally. #ClosingDataGaps #OurVoiceinData

Divyansh Kaushik @dkaushik96

4K Followers 3K Following Emerging tech and national security. DC/PGH. “An imported Indian immigrant,” @BreitbartNews.

Obsidian @obsdmd

130K Followers 3 Following The private and flexible writing app that adapts to the way you think. For help and deeper discussions, join our community: https://t.co/QsDArfFSa3

Zhuang Liu @liuzhuang1234

3K Followers 933 Following Research Scientist @MetaAI (FAIR, at NYC). machine learning, computer vision, neural networks. PhD from @Berkeley_EECS

Martin Rees @LordMartinRees

10K Followers 16 Following Astronomer Royal • Co-founder @CSERCambridge • Emer. Prof. @cambridge_astro • Fellow @TrinCollCam • 10+ books & 500+ papers • 60th President @royalsociety

Polytechnique Mtl @polymtl

14K Followers 2K Following Compte Twitter officiel de Polytechnique Montréal #polymtl

École polytechnique @Polytechnique

50K Followers 1K Following L'École polytechnique est un établissement d'enseignement supérieur et de recherche de niveau mondial.

Infrarouge @RTSinfrarouge

15K Followers 971 Following Émission de débat de la @radiotelesuisse. Découvrez les moments forts, réagissez aux sujets à venir et participez au débat avec le hashtag #RTSinfrarouge

Brandon Goldman @BrandonGoldman

1K Followers 1K Following Partner, AI Safety @LionheartVC. Treasurer @ForesightInst. Optimistic futurist. Vegan, libertarian, cryonics, crypto, VR, rationality, ethics, health

Andreas Vlachos @vlachos_nlp

5K Followers 1K Following Professor in NLP/ML at @Cambridge_CL, Fellow of @FitzwilliamColl, @ELLISforEurope member

Lucy Farnik @lucyfarnik

66 Followers 162 Following Trying not to get killed by AI. @MATSprogram under @NeelNanda5; PhDing. DMs very much open — have a low bar for reaching out!

Ananya Sai B @AnanyaSaiB

180 Followers 245 Following PhD candidate at IIT Madras | Google PhD fellowship | AI | ML | NLP

Arthur Mensch @arthurmensch

40K Followers 873 Following Co-founder and CEO @MistralAI. Apply https://t.co/yHGRZAtjcx

AI for Good 🇺🇳 .. @AIforGood

16K Followers 4K Following The @UN's 🇺🇳 leading platform to scale #AI for global impact. Organized by @ITU in partnership w/ 40 UN agencies and 🇨🇭 #AIforGood

Daniel Faggella @danfaggella

7K Followers 238 Following Host of The Trajectory: Realpolitik on AGI and the posthuman transition (https://t.co/1rCgmOZ2EA). Founder @Emerj AI Research.

Vision and AI Lab, II.. @val_iisc

2K Followers 120 Following We are a team of graduate students working on cutting edge research in Computer Vision, led by Prof. R. Venkatesh Babu

Ewout ter Haar @ewout

815 Followers 696 Following Technology for Education, University of São Paulo "inclusão bátava nas terras tropicais" @[email protected]

Joschka Braun @JoschkaBraun

344 Followers 197 Following Co-founder of @PareaAI (YC S23) • Building Generative AI Developer Tools • @FulbrightPrgrm

ana vldv @ana_valdi

5K Followers 4K Following lecturer in ai, government & policy at the @oiioxford | critical ai studies | co-editor at @bigdatasoc | radical ecology, politics & algorithms | 🚲📖🌻

More excited than ever to announce $1.7M: "CBS-NTT Program in Physics of Intelligence at Harvard"! 🧠 With new technology comes new science. The time is ripe to build a better future with "Physics of Intelligence for Trustworthy and Green AI"! 🧵👇 news.harvard.edu/gazette/story/…

I was very impressed with @tomekkorbak's thesis! Some really nice insights into LLM alignment: 1) RL is not the way --> distribution matching lets us target constraints like "generate as many of these as of those" 2) fine-tuning is not the way --> PHF aligns during pre-training

Huge thanks to my advisors @drclbuckley and @anilkseth, my mentors @EthanJPerez, @MarcDymetman, @hadyelsahar, @germank and my external examiner @DavidSKrueger! I feel extremely privileged having had the opportunity to work with and learn from you.

US politics is an outlier, and should not be seen as representative of the rich world. Between 2006 and 2023, trust in national institutions increased in all of the G7 except the US, where it fell substantially.

I’m super excited to release our 100+ page collaborative agenda - led by @usmananwar391 - on “Foundational Challenges In Assuring Alignment and Safety of LLMs” alongside 35+ co-authors from NLP, ML, and AI Safety communities! Some highlights below...

We released this new agenda on LLM-safety yesterday. This is VERY comprehensive covering 18 different challenges. My co-authors have posted tweets for each of these challenges. I am going to collect them all here! P.S. this is also now on arxiv: arxiv.org/abs/2404.09932

Incredible paper by @usmananwar391 et al. There's still so so much to learn about building safe, predictable, human-compatible AI.

A very comprehensive survey of research challenges in AI Safety for LLMs from our lab! If you want to start working on tackling foundational problems pertaining to alignment of LLMs, this paper provides a list of problems you can immediately get started with

@DavidSKrueger How do you think this compares to Davidad's Open Problems?

While there has been some encouraging progress, a lot remains to be done to make LLMs sufficiently trustworthy! In particular, we identify four challenges from a socio-technical perspective in a new agenda paper that might cause LLMs to be ‘untrustworthy’ for a user. 🧵⬇️

I was really pleased to be involved in this mammoth paper "Foundational Challenges In Assuring Alignment and Safety of LLMs", mostly on the multi-agent section. A lot of work went into this from some very talented coauthors! x.com/DavidSKrueger/…

To help you (yes YOU!) get started on multi-agent safety and alignment research; we have assembled this list of *concrete* research questions! Website: llm-safety-challenges.github.io PDF: llm-safety-challenges.github.io/challenges_llm… Credits @Usman @DavidSKrueger @lrhammond @j_foerst

Huge new paper, congrats to the authors, very important work

If a genie were to give you a magic wand and let you change one thing about LLMs, what would you do? I don’t know about you but I would definitely ask for pretraining to be more aligned! Unfortunately magic wands don’t exist but we can still make pretraining more aligned. How?🧵

In our new agenda paper (see @DavidSKrueger tweet for details ☝️) we discuss several great research directions for just this! Pretraining has three basic ingredients: data, objective and model design. Let’s see how we could tinker with these to make pretraining better!

@DavidSKrueger Let’s start with data: LLMs learn bad things because they are present in the data we train them on; filtering such bad data would obviously help with alignment but unfortunately current techniques for doing filtering are pretty crude, making data filtering not very useful!

@DavidSKrueger Developing a better data filtering toolkit would be an obvious win here! It might also be interesting to see if we could use LLMs to edit or add new synthetic data to offset the bad effects of the harmful data.