Rohin Shah @rohinmshah

Research Scientist at DeepMind. I publish the Alignment Newsletter. rohinshah.com London, UK Joined October 2017

Tweets 312

Followers 5K

Following 89

Likes 303

Rose: The idea is extremely simple and well-motivated, and the effect sizes are large. Thorn: p=0.05 :( (Tbc, I am very confident we would have reached statistical significance for Gated SAEs being more interpretable, if we had a large enough N.) x.com/sen_r/status/1…

I loved working with Anca during my PhD, and now I get to do it again! Though there is one downside, she's going to notice how much more lax I've become about really nailing my talks and figures 😅 x.com/ancadianadraga…

Despite the constant arguments on p(doom), many agree that *if* AI systems become highly capable in risky domains, *then* we ought to mitigate those risks. So we built an eval suite to see whether AI systems are highly capable in risky domains. x.com/tshevl/status/…

To estimate impact of various parts of a network on observed behavior, by default you need a few forward passes *per part* -- very expensive. But it turns out you can efficiently approximate this with a few forward passes in total! x.com/janoskramar/st…

My first @GoogleDeepMind project: How do LLMs recall facts? Early MLP layers act as a lookup table, with significant superposition! They recognise entities and produce their attributes as directions. We suggest viewing fact recall as a black box making "multi-token embeddings"

In our new @GoogleDeepMind paper, we redteam methods that aim to discover latent knowledge through unsupervised learning from LLM activation data. TL;DR: Existing methods can be easily distracted by other salient features in the prompt. arxiv.org/abs/2312.10029 🧵👇

Really excited that this work is finally out! x.com/vikrantvarma_/…

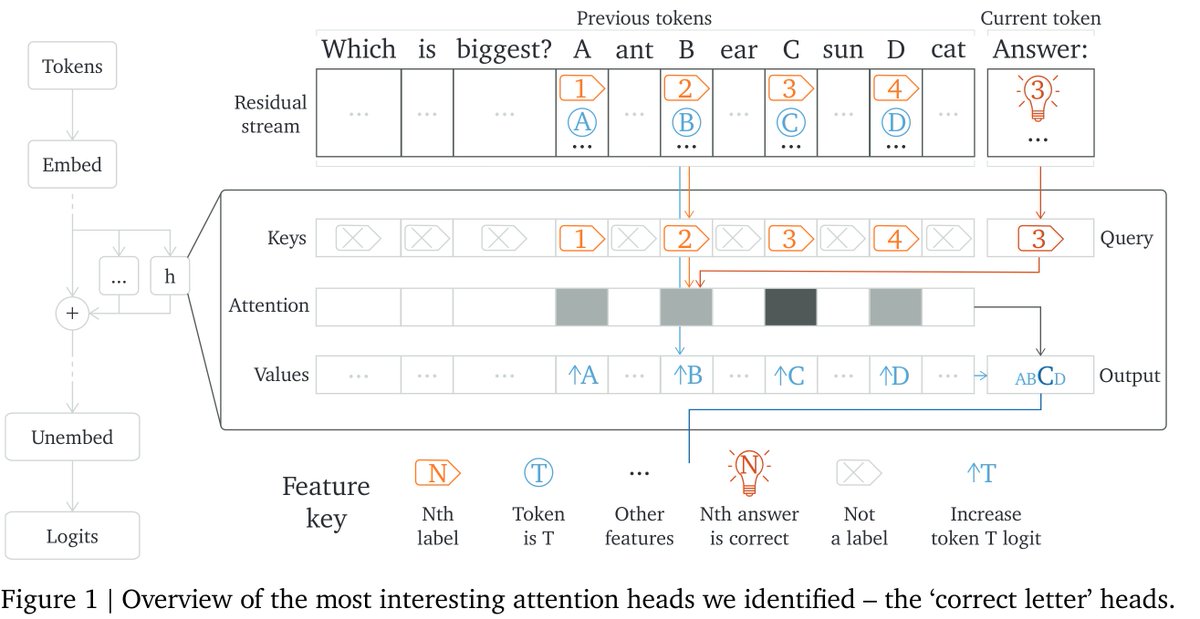

There's nothing like delving deep into model internals for a specific behavior for understanding how neural nets are simultaneously extremely structured and extremely messy. x.com/lieberum_t/sta…

I really enjoyed recording this podcast -- it's very different from my previous podcasts, much more focused on opinions and impressions, rather than specific technical points. x.com/robertwiblin/s…

We're hiring again, just like last year! Apply here: Research Scientist: boards.greenhouse.io/deepmind/jobs/… Research Engineer: boards.greenhouse.io/deepmind/jobs/… x.com/rohinmshah/sta…

How can we learn one foundation model for HRI that generalizes across different human rewards as the task, preference, or context changes? Come see at #HRI2023 in the Thursday 13:30 session! Paper: dl.acm.org/doi/10.1145/35… w/ Yi Liu, @rohinmshah, @daniel_s_brown, @ancadianadragan

BASALT is running again -- and this time there's a pretrained Minecraft model for you to finetune!

[AN #173] Recent language model results from DeepMind - mailchi.mp/c7bf1e091608/a…

[AN #172] Sorry for the long hiatus! I'll restart in the near future; for now have a bunch of news - mailchi.mp/56689cc2223c/a…

Richard Ngo @RichardMCNgo

35K Followers 1K Following What would we need to understand in order to design an amazing future? Figuring that out @openai

Rob Miles (✈️ Tok.. @robertskmiles

18K Followers 789 Following Explaining AI Alignment to anyone who'll stand still for long enough, on YouTube and Discord. Music, movies, microcode, and high-speed pizza delivery

Rob Bensinger ⏹️ @robbensinger

8K Followers 302 Following Comms @MIRIBerkeley. RT = increased vague psychological association between myself and the tweet.

Stefan Schubert @StefanFSchubert

28K Followers 2K Following Philosophy, psychology, and effective altruism.

Peter Wildeford @peterwildeford

10K Followers 367 Following Pro forecaster w/ good track record. Seeking to understand + manage risks from advanced AI systems. - Co-CEO @RethinkPriors - Chief Advisory Executive @iapsAI

Miles Brundage @Miles_Brundage

43K Followers 10K Following Policy research at @openai. I mostly tweet about AI, animals, and sci-fi. He/him. Views my own.

Amanda Askell @AmandaAskell

26K Followers 653 Following Philosopher & ethicist teaching models to be good @AnthropicAI. Personal account. All opinions come from my training data.

David Krueger @DavidSKrueger

13K Followers 4K Following Cambridge faculty - AI alignment, deep learning, and existential safety. Formerly Mila, FHI, DeepMind, ElementAI, AISI.

Jack Clark @jackclarkSF

68K Followers 5K Following @AnthropicAI, ONEAI OECD, co-chair @indexingai, writer @ https://t.co/3vmtHYkaTu Past: @openai, @business @theregister. Neural nets, distributed systems, weird futures

Robert Wiblin @robertwiblin

34K Followers 643 Following Exploring the inviolate sphere of ideas one interview at a time: https://t.co/2YMw00bkIQ

Jan Leike @janleike

44K Followers 322 Following ML Researcher, co-leading Superalignment @OpenAI. Optimizing for a post-AGI future where humanity flourishes.

Sam Bowman @sleepinyourhat

35K Followers 3K Following AI alignment + LLMs at NYU & Anthropic. Views not employers'. No relation to @s8mb. I think you should join @givingwhatwecan.

Daniel Eth (yes, Eth .. @daniel_271828

7K Followers 788 Following AI alignment & memes | "known for his humorous and insightful tweets" - Bing/GPT-4 | prev: @FHIOxford

davidad 🎇 @davidad

13K Followers 7K Following Programme Director @ARIA_research | accelerate mathematical modelling with AI and categorical systems theory » build safe transformative AI » cancel heat death

Joshua Achiam ⚗️ @jachiam0

14K Followers 948 Following Human. Trying to make safe alchemy machines. Thinking about humanist alchemism (h/alc ⚗️, maybe). Main author of https://t.co/cKuSh210l1

near @nearcyan

45K Followers 882 Following https://t.co/IdaJwZJCXm partner @ https://t.co/9g1MIgjiqc dms open

I07XNbUI4 @DeepFeed2

48 Followers 3K Following

deepspaceblack @deepspaceblack

55 Followers 186 Following well-being maximizer, curious about the AI era. remember that you are not this next thought

Maheep Chaudhary | .. @ChaudharyMaheep

41 Followers 508 Following MS @NTU || Collab w/ Stanford || Ex-MIT Driverless, UIUC.

Max Beverton-Palmer @Maxjb

1K Followers 3K Following Thinking about the future of innovation, cyber, AI, compute and the internet l Responsible tech, online safety, social justice & geopolitics l him/he💜💙🏳️‍🌈

Ian @ InfoHunt.ai @Ianyan2023

33 Followers 231 Following [email protected],Your Most Reliable Discovery AI Engine 👉 Click to explore: https://t.co/WkjTFNHdCr

Zhiyong Wang @Zhiyong16403503

409 Followers 2K Following Visiting Ph.D. student at Cornell University. Ph.D. candidate at CUHK. Working on bandits and reinforcement learning theory.

Sonakshi Chauhan @ChauhanSon8200

12 Followers 36 Following

Mateusz @Mantos77

37 Followers 413 Following

Connor Kissane @Connor_Kissane

49 Followers 40 Following Mechanistic Interpretability research / software engineering

Nikita Agarwal @niki__agarwal

58 Followers 762 Following

Rais Latif @RaisLatif_Study

39 Followers 5K Following Hi I'm Rais. I'm mainly focussing on Math and Science lifelong. There is a lot to discover in these fields and my mind is always blown by all the cool things.

Dana Mahmood @deordered

22 Followers 720 Following Fine-tuning AI models oftentimes & practicing philosopher at other times.

𝕋𝕒𝕥𝕤𝕦.. @tatsuru_kikuchi

365 Followers 3K Following Research Officer at Faculty of Economics, The University of Tokyo. Keywords: Entrepreneur/OpenAI/Quantum/Crypto/Analytics/Consulting. Views are my own.

Ethan @EthanKosakHine

297 Followers 373 Following

black_box @blackbox_pi

103 Followers 607 Following Tweeting about #machinelearning in #genomics | interpretable ML models | transcription factors | cis-regulatory elements | motifs | gene regulation

YuvaRaj @YuvaAnandan

2 Followers 37 Following Enthusiast, angel investor, worked as Change agent in Finance services and currently working with Payments fintech as Product Lead

Sri Mahaguhan @SriMahaguhan

32 Followers 188 Following

Evander Hammer @evander_hammer

2 Followers 32 Following

Caleb Talley @calebtalley2024

2 Followers 483 Following

tuan pho @tuanpho

1 Followers 127 Following

Chris Keesey @c2keesey

34 Followers 117 Following

Eric Aboussouan @eric3532

99 Followers 577 Following Predicting next world at @GoogleAI Digital nomad, sailor, scientist, inventor

Henrietta.SolidGoldMa.. @Bearly_Present

180 Followers 3K Following All integers between 0 and 1 exclusive.

Sami Jawhar @CyberMonkSam

48 Followers 54 Following Neurotechnologist, serial entrepreneur, digital nomad, builder of things, wannabe philosopher

Seliem @seliemels

2 Followers 29 Following Ethics Foresight & Policy @googledeepmind, PhDing at @univienna

Daniel Guppy @DanielJGuppy

3 Followers 34 Following

Dylance @pretty14130158

7 Followers 169 Following

Md Zunaid @MdZunaid382783

58 Followers 632 Following

teoyjz @teoyjz

27 Followers 72 Following

DailyHealthcareAI @aipulserx

43 Followers 333 Following 🚀 Daily AI healthcare updates compiled from 100+ sources (and growing)

rathink1 @rathink11

0 Followers 152 Following

Tom Bouthillet .. @tbouthillet

8K Followers 4K Following Co-Survivor • Business Development Manager • Battalion Chief of EMS (Retired) • Aspiring Screenwriter • Citizen of U.S., Canada, Ireland • ECGs • YouTube 👇🏻

Junjie (Jorji) Chen @coderchen01

2 Followers 282 Following

Aaditya ; @Aaditya26082004

531 Followers 7K Following CS'26 • Machine Learning • Open-Source • Web Dev. • Algorithms • Jai Shree Krishna 🦚🪈

Cerdwin @CerdwinG

5 Followers 266 Following

Ittseta @IssEossda

80 Followers 674 Following

Joel Becker @joel_bkr

2K Followers 2K Following move fast and fix things. 'soccer'-me @MessiSeconds.

Catherine Olsson @catherineols

15K Followers 1K Following Hanging out with Claude, improving its behavior, and building tools to support that @AnthropicAI 😁 prev: @open_phil @googlebrain @openai (@microcovid)

Katja Grace 🔍 @KatjaGrace

8K Followers 798 Following Thinking about whether AI will destroy the world at https://t.co/pMilDvd4ya. DM or email for media requests. Feedback: https://t.co/zGAm1i7SKH

Chris Olah @ch402

91K Followers 173 Following Reverse engineering neural networks at @AnthropicAI. DMs open! Previously @distillpub, OpenAI Clarity Team, Google Brain. Personal account.

Vikrant Varma @VikrantVarma_

566 Followers 22 Following Research Engineer working on AI alignment at DeepMind.

Lynette Bye @lynette_bye

36 Followers 87 Following

Rachel Freedman @FreedmanRach

955 Followers 225 Following RLHF, LLMS, interpretability & safety | PhD researcher @berkeley_ai | Visiting researcher @Cambridge_Uni

MineRL Project @minerl_official

932 Followers 29 Following Official Twitter page for the MineRL Project. Account run by @steph_milani. The BASALT 2022 competition has ended!

Brian Christian @brianchristian

4K Followers 411 Following Author of The Alignment Problem, Algorithms to Live By (w. Tom Griffiths), and The Most Human Human. Researcher at UC Berkeley & the University of Oxford.

Daniel Filan research.. @dfrsrchtwts

1K Followers 115 Following PhD candidate at @CHAI_Berkeley. Interested in making neural networks transparent and ushering in an era of human-friendly superintelligence. Podcast: https://t.co/gM752xZcF5

Cody Wild @decodyng

2K Followers 131 Following machine learning research engineer; lover of cats, languages, and elegant systems; explorer & explainer at https://t.co/HQSQCz3Tg3…

𝕏iaoHu Zhu⏹️cs.. @neil_csagi

756 Followers 2K Following eXistential Hope native. https://t.co/pX9vqSzWEq | @Foresightinst Fellow in Safe AGI | @FLIxrisk Affiliate

Sergey Levine @svlevine

80K Followers 122 Following Associate Professor at UC Berkeley Co-founder, Physical Intelligence

Alex Irpan @AlexIrpan

2K Followers 31 Following Research Scientist @ Google DeepMind. Working on Robotics. Has a blog. Views are my own. "Adversarially disengaging Twitter profile"

Jakob Foerster @j_foerst

14K Followers 820 Following Assoc. Prof in ML @UniofOxford @StAnnesCollege @FLAIR_Ox, dad. Ex: {RS @MetaAI, (A)PM @Google, DivStrat @GS}, ex intern {@GoogleDeepmind, @GoogleBrain, @OpenAI}

Yolanda Lannquist @YolandaLannqist

892 Followers 567 Following Director, Global AI Governance, TFS // AI Policy Expert @ The World Bank & OECD // Columbia + Harvard // Poetess // #twinsintech + cat mom. Views are my own.

Gillian Hadfield @ghadfield

5K Followers 710 Following Author of Rules for a Flat World. Law and economics professor exploring the legal innovation needed to keep up with 21st century technology and globalization.

Center for Human-Comp.. @CHAI_Berkeley

3K Followers 108 Following CHAI is a multi-institute research organization based out of UC Berkeley that focuses on foundational research for AI technical safety.

Rosie @RosieCampbell

6K Followers 870 Following Forever expanding my nerd/bimbo Pareto frontier. Policy Frontiers team lead @OpenAI.

Adam Gleave @ARGleave

2K Followers 322 Following CEO @FARAIResearch non-profit | PhD from @berkeley_ai | Value learning, adversarial examples & robustness for deep RL | @[email protected]

Anca Dragan @ancadianadragan

8K Followers 178 Following AI safety & alignment at Google DeepMind • associate professor at UC Berkeley EECS • proud mom of an amazing 2yr old

Jeffrey Ding @jjding99

8K Followers 455 Following Assistant Professor at George Washington University @GWtweets | technology and int'l politics | newsletter on China's AI landscape: https://t.co/ciqWZF1jiV

AI Safety @AI_Safety

99 Followers 32 Following How do we keep advanced artificial agents from forcefully intervening in the protocols by which we attempt to communicate what they should accomplish?

Sumith Kulal @sumith1896

833 Followers 392 Following

noah gundotra @ngundotra

4K Followers 4K Following eng at @SolanaLabs | ex-@goldmansachs | UC Berkeley alumni | opinions my own

Good Ventures @GoodVentures

6K Followers 67 Following Good Ventures is a philanthropic foundation whose mission is to help humanity thrive.

Nate Soares ⏹️ @So8res

7K Followers 72 Following

Open Philanthropy @open_phil

15K Followers 17 Following Open Philanthropy's mission is to help others as much as we can with the resources available to us.

Katyanna Quach @katyanna_q

2K Followers 822 Following Tech reporter @semafor, interested in AI and science 🤖 | previously @theregister

Jeff Dean (@🏡) @JeffDean

296K Followers 6K Following Chief Scientist, Google DeepMind and Google Research. Co-designer/implementor of things like @TensorFlow, MapReduce, Bigtable, Spanner, Gemini .. (he/him)

Ilya Sutskever @ilyasut

370K Followers 2 Following towards a plurality of humanity loving AGIs @openai

Terah Lyons @terahlyons

6K Followers 3K Following Not really active here. Affiliate Fellow @StanfordHAI. Former @PartnershipAI, Obama White House @WHOSTP44/@USCTO44.

Tara Mac Aulay @Tara_MacAulay

5K Followers 570 Following Cynical ex-aid worker turned crypto trader. Idealist at heart, realist by profession.

Brundage Bot @BrundageBot

4K Followers 1 Following Tweeting interesting deep learning papers from each arXiv release. Powered by a neural network trained on @Miles_Brundage tweets. Created by @amaub.

David Roodman @davidroodman

9K Followers 354 Following Senior advisor @open_phil. Formerly @GiveWell, @CGDev. Views expressed solely my own.

Animal Charity Evalua.. @AnimalCharityEv

5K Followers 484 Following At Animal Charity Evaluators, we find and promote the most effective ways to #helpanimals. We use #effectivealtruism principles to evaluate causes and research.

Ben Kuhn @benskuhn

7K Followers 289 Following Care a lot and try hard • making language models safer @AnthropicAI • prev CTO @WaveSenegal 🐧❤️

Lewis Bollard @Lewis_Bollard

7K Followers 844 Following Farm Animal Welfare Program Officer @open_phil. Views are my own. For more, sign up to my research newsletter: https://t.co/5DX9QNKo3R

Roxanne Heston @RoxanneHeston

1K Followers 543 Following Co-Founder & Director of Programs and Strategy https://t.co/n0WCPATLNb

Jacy Reese Anthis @jacyanthis

29K Followers 721 Following Sociologist and statistician @UChicago @SentienceInst researching human-AI interaction, ML, etc. Trying to build a better future for all sentient life.

William MacAskill @willmacaskill

64K Followers 1K Following Moral philosopher at Oxford. Author of Doing Good Better and What We Owe The Future.

Helen Toner @hlntnr

21K Followers 1K Following Interests: China+ML, natsec+tech, brains+words+absurdity | Current: @CSETGeorgetown (opinions my own) | Former: @open_phil

Ajeya Cotra @ajeya_cotra

6K Followers 286 Following AI could get really powerful soon and I worry we're underprepared. Analysis+grantmaking in AI alignment @open_phil (views my own), editor+writer @plannedobs.

Chris Cundy @ChrisCundy

1K Followers 194 Following PhD student at Stanford AI Lab, supervised by Stefano Ermon. Hopefully making AI benefit humanity. Anonymous feedback: https://t.co/Wh3rHMsRnm

GiveWell @GiveWell

27K Followers 137 Following We find outstanding charities and publish the full details of our analysis to help donors decide where to give.

Yann LeCun @ylecun

712K Followers 719 Following Professor at NYU. Chief AI Scientist at Meta. Researcher in AI, Machine Learning, Robotics, etc. ACM Turing Award Laureate.

New @GoogleDeepMind MechInterp work! We introduce Gated SAEs, a Pareto improvement over existing sparse autoencoders. They find equally good reconstructions with around half as many firing features, while maintaining interpretability (CI 0-13% improvement). Joint w/ @ArthurConmy

Announcing a progress update from the @GoogleDeepMind mech interp team! Inspired by @AnthropicAI's excellent monthly updates, we share a range of updates on our work on Sparse Autoencoders, from signs of life on interpreting steering vectors with SAEs to improving ghost grads.

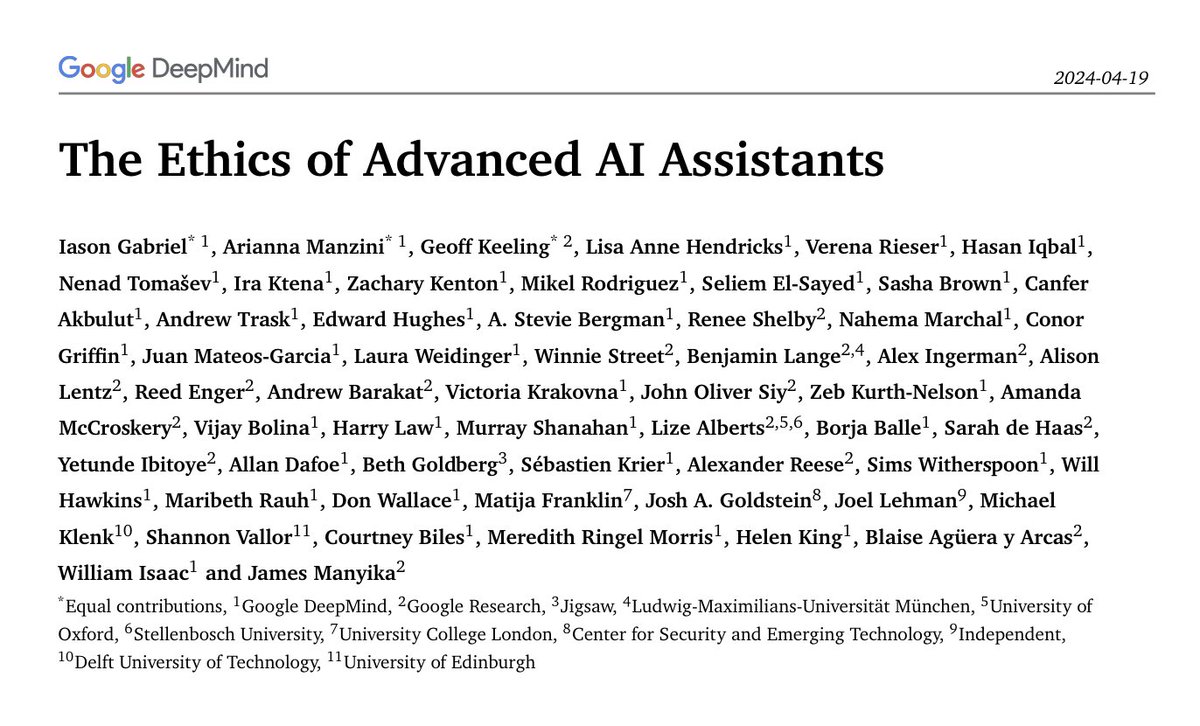

1. What are the ethical and societal implications of advanced AI assistants? What might change in a world with more agentic AI? Our new paper explores these questions: storage.googleapis.com/deepmind-media… It’s the result of a one year research collaboration involving 50+ researchers… a🧵

we're hiring: boards.greenhouse.io/deepmind/jobs/…

@GoogleDeepMind I am so happy I get to work with @rohinmshah again!

Great new Google DeepMind paper on evaluating frontier models for dangerous capabilities. The evals cover four areas: persuasion and deception; cyber-security; self-proliferation; and self-reasoning. They test agents, comprised of the model + scaffolding. arxiv.org/abs/2403.13793

In 2024, the AI community will develop more capable AI systems than ever before. How do we know what new risks to protect against, and what the stakes are? Our research team at @GoogleDeepMind built a set of evaluations to measure potentially dangerous capabilities: 🧵

Imagine if employees expected to get all their work for the week done in the one hour weekly meeting with their supervisor? Ridiculous, of course. Most of the work gets done outside of that meeting. Same deal with coaching or therapy.

@Algon_33 @rohinmshah Arbitrarily small, all of them, two forwards and one backwards neelnanda.io/mechanistic-in…

Where Do I Go Live, from my EP, From Afar, is out now! I’ve always wanted to play this song stripped back with just guitars to highlight the intention of the song. Who do we become in the face of loss? 🫂 youtu.be/WFm15yHCZQ4?fe…

Cool work from @GoogleDeepMind alignment on limitations of methods for eliciting a model's beliefs! My key takeaway is that unsupervised methods (eg CCS) rely on "proxy properties" of true beliefs, but other features share these proxies! Eg "agrees with the user" vs "is true"

Very nice study of "grokking" from @vkrntv @rohinmshah and colleagues at @GoogleDeepMind "Grokking" is a rapid switch of strategy from perfect memorization (low training loss) to accurate generalization (low testing loss) 🤯 After decades of advancements in the field of neural…

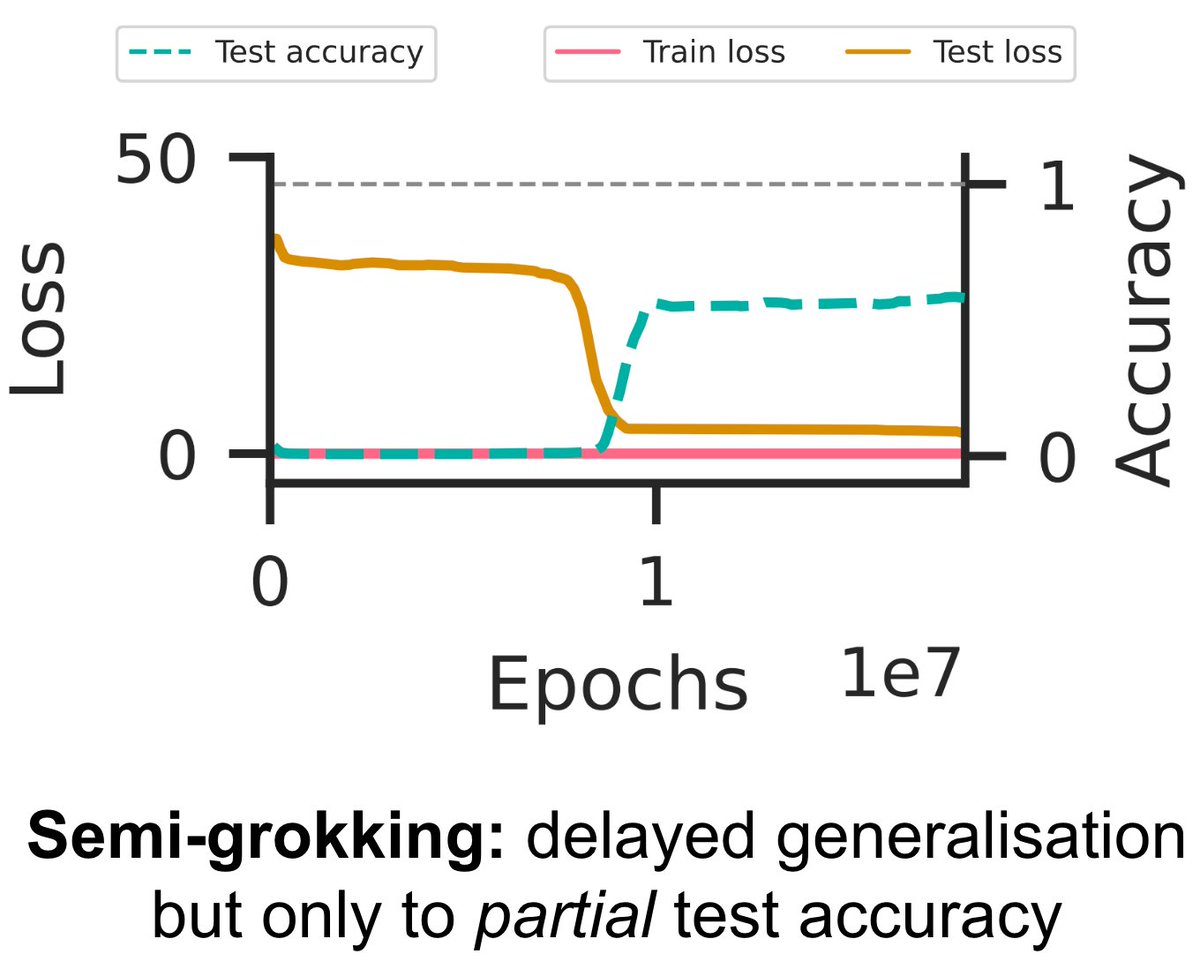

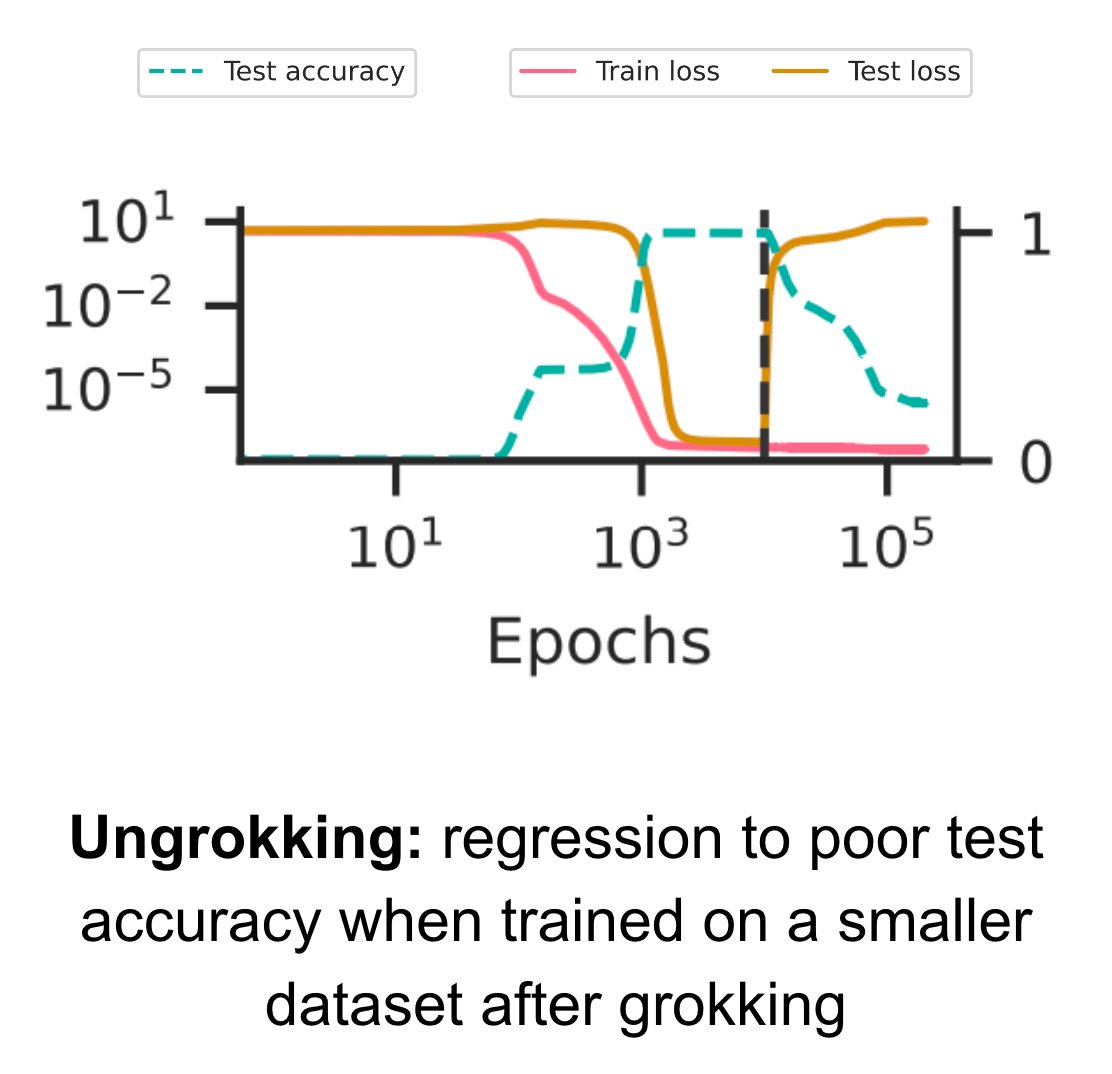

Our latest paper (arxiv.org/abs/2309.02390) provides a general theory explaining when and why grokking (aka delayed generalisation) occurs – a theory so precise that we can predict hyperparameters that lead to partial grokking, and design interventions that reverse grokking! 🧵👇

We come up with a theory of why grokking (delayed generalisation) occurs, leading to new empirical predictions of semi-grokking and ungrokking phenomena! Great work led by @VikrantVarma_ and @rohinmshah, which I'm proud to have contributed to! 🤩

What was I getting wrong about deliberate practice? lynettebye.com/blog/2023/7/27…

'The 80k Podcast on AI' is a compilation of interviews that would teach someone an insane amount about AI's promise and risks and what to do about it. (Yes I'm biased, but still it's true.) Here's the 11 episodes that made the cut: 🧵 80000hours.org/podcast/on-art…

A taster's selection on AI. Especially appreciate @rohinmshah 's insight in episode 4 on the messiness of bringing technical solutions to a broad multilateral community. 80000hours.org/podcast/on-art…