Dan Hendrycks @DanHendrycks

• Director of the Center for AI Safety (https://t.co/ahs3LYCpqv) • GELU/ImageNet-C/MMLU/safety groundwork • PhD in AI from UC Berkeley https://t.co/rgXHAnYAsQ https://t.co/YtGtDh1aAV danhendrycks.com San Francisco 🇺🇸🏳️‍🌈 Joined August 2009

Tweets 517 · Followers 17K · Following 78 · Likes 179

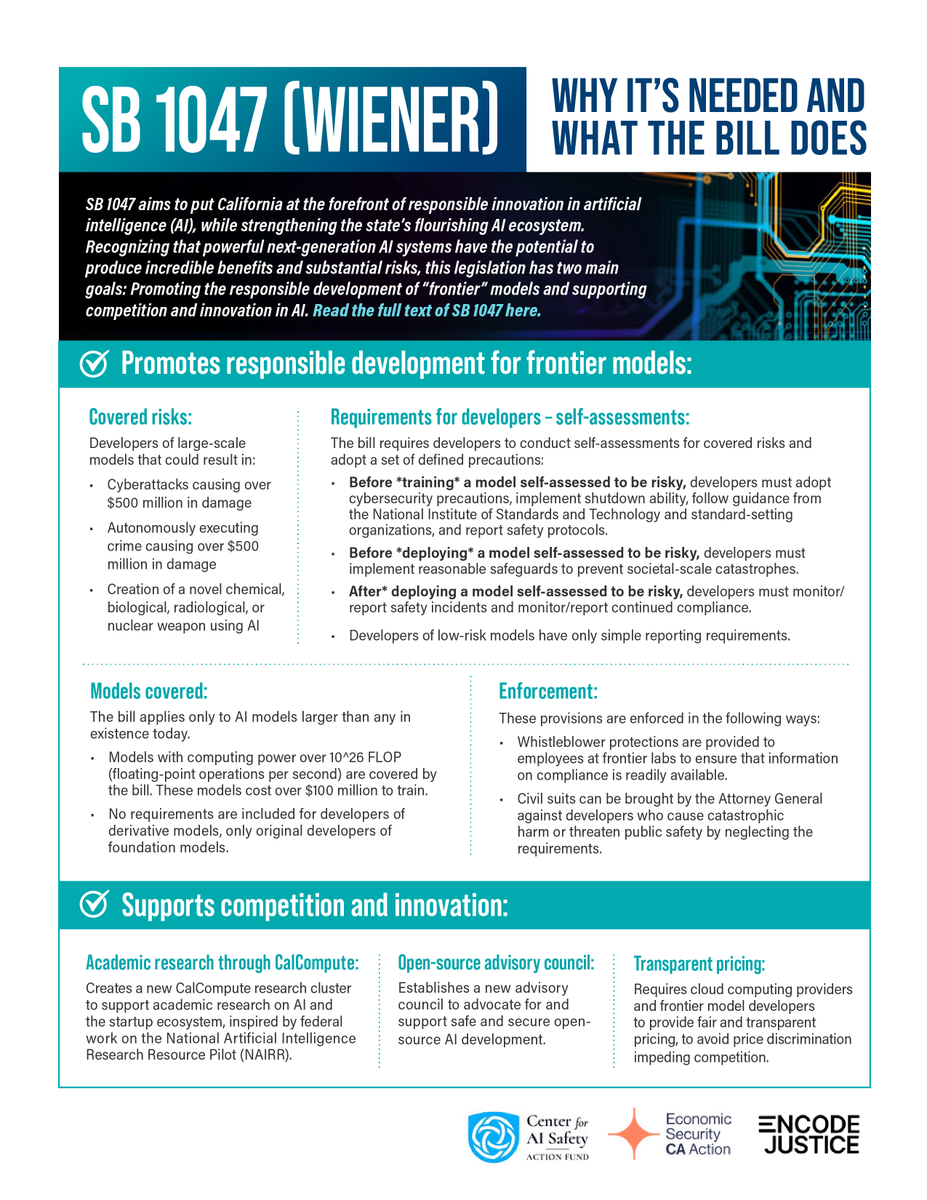

SB 1047 highlights and FAQ safesecureai.org/learn

@martin_casado @radicalvcfund If you leave it to companies to decide what is safe you get the Boeing 737 max.

Hinton and Bengio on SB 1047 and a summary of the bill. Hinton: “SB 1047 takes a very sensible approach... I am still passionate about the potential for AI to save lives through improvements in science and medicine, but it’s critical that we have legislation with real teeth to…

I would guess this likely won't hold up to better adversaries. In making the RepE paper (ai-transparency.org) we explored using it for trojans ("sleeper agents") and found it didn't work after basic stress testing.

GPT-5 doesn't seem likely to be released this year. Ever since GPT-1, the difference between GPT-n and GPT-n+0.5 is ~10x in compute. That would mean GPT-5 would have ~100x the compute of GPT-4, or 3 months of ~1 million H100s. I doubt OpenAI has a 1 million GPU server ready.
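
The arithmetic in the post above can be sketched in a few lines. This is only an illustration using the post's own rough figures (~10x compute per half-version, ~1 million H100s for ~3 months); the function and variable names are hypothetical, and the "implied GPT-4 budget" is just what those figures entail when divided through, not a measured number.

```python
# Figures taken from the post above; nothing else is assumed.
COMPUTE_FACTOR_PER_HALF_STEP = 10  # GPT-n -> GPT-n+0.5 is ~10x compute
GPT5_H100_COUNT = 1_000_000        # ~1 million H100s
GPT5_MONTHS = 3                    # ~3 months of training


def compute_multiplier(from_version: float, to_version: float) -> float:
    """Compute ratio between two GPT versions under the ~10x-per-half-step rule."""
    half_steps = round((to_version - from_version) / 0.5)
    return float(COMPUTE_FACTOR_PER_HALF_STEP ** half_steps)


# Two half-steps separate GPT-4 and GPT-5, so the multiplier is 10 * 10.
gpt4_to_gpt5 = compute_multiplier(4, 5)

# Express the GPT-5 estimate in H100-months, then divide by the multiplier
# to see what GPT-4 budget the post's numbers imply.
gpt5_h100_months = GPT5_H100_COUNT * GPT5_MONTHS
implied_gpt4_h100_months = gpt5_h100_months / gpt4_to_gpt5

print(f"GPT-4 -> GPT-5 compute multiplier: ~{gpt4_to_gpt5:.0f}x")
print(f"GPT-5 budget: ~{gpt5_h100_months:,} H100-months")
print(f"Implied GPT-4 budget: ~{implied_gpt4_h100_months:,.0f} H100-months")
```

Read this way, the post's numbers imply a GPT-4 run on the order of 30,000 H100-months, for example roughly 10,000 H100s for 3 months.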

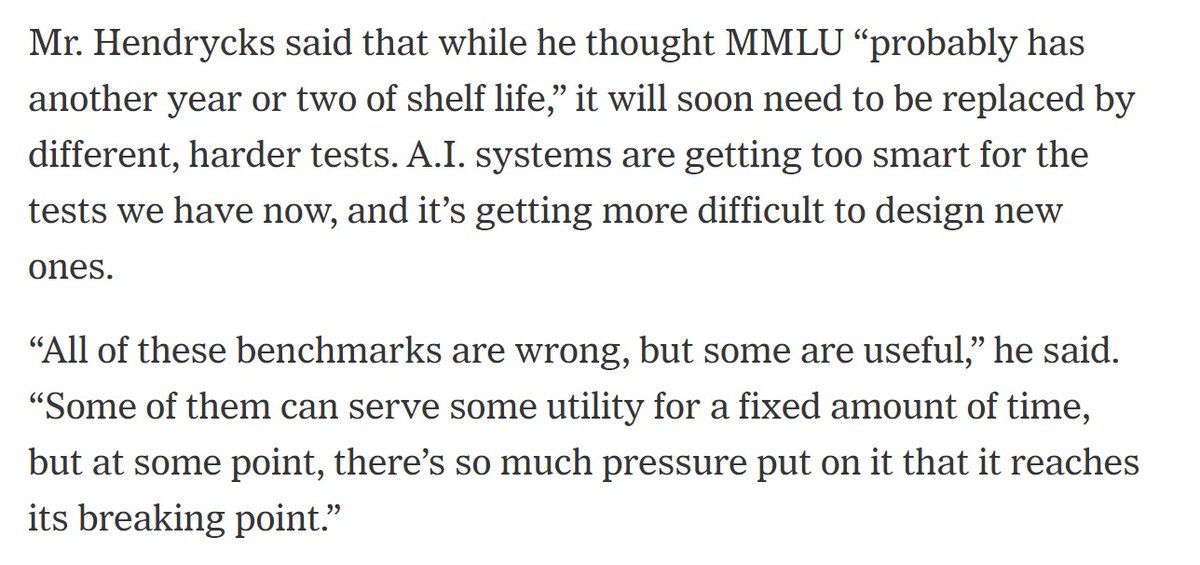

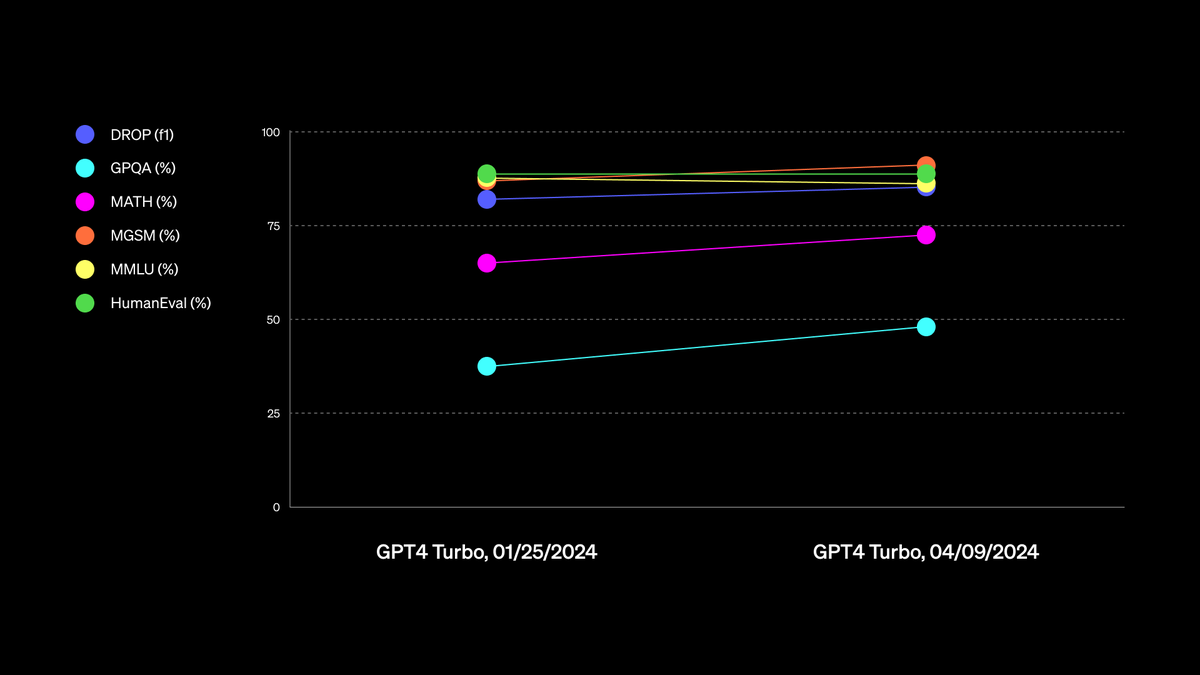

AI researchers like @DanHendrycks, who helped create the MMLU (essentially the SAT for chatbots) told me that leading benchmark tests have reached "saturation" -- basically, they're too easy for today's LLMs -- and that we will soon need to develop harder tests to gauge model…

I got ~75% on a subset of MATH so it's basically as good as me at math.

We’re excited to announce SafeBench, a competition to develop benchmarks for empirically assessing safety! There are $250,000 in prizes, with submissions closing on Feb 25th, 2025. This project is supported by Schmidt Sciences. Visit: mlsafety.org/safebench 🧵(1/3)

People aren't thinking through the implications of the military controlling AI development. It's plausible AI companies won't be shaping AI development in a few years, and that would dramatically change AI risk management. Possible trigger: AI might suddenly become viewed as the…

x.ai/blog/grok-os Grok-1 is open sourced. Releasing Grok-1 increases LLMs' diffusion rate through society. Democratizing access helps us work through the technology's implications more quickly and increases our preparedness for more capable AI systems. Grok-1 doesn't pose…

We've released a post about the looming risk of AI cyberattacks on critical infrastructure. It notes that we are living under a "cyberattack overhang." Advances in defensive techniques are of no help if defenders are not keeping up to date. safe.ai/blog/cybersecu… by @snewmanpv

Reminder that "Responsible Scaling Policies" are just non-binding proclamations and as such shouldn't be interpreted as a strong line of defense for safety. Voluntary commitments can be easily violated without much social blowback. For example, responsible AI teams have been…

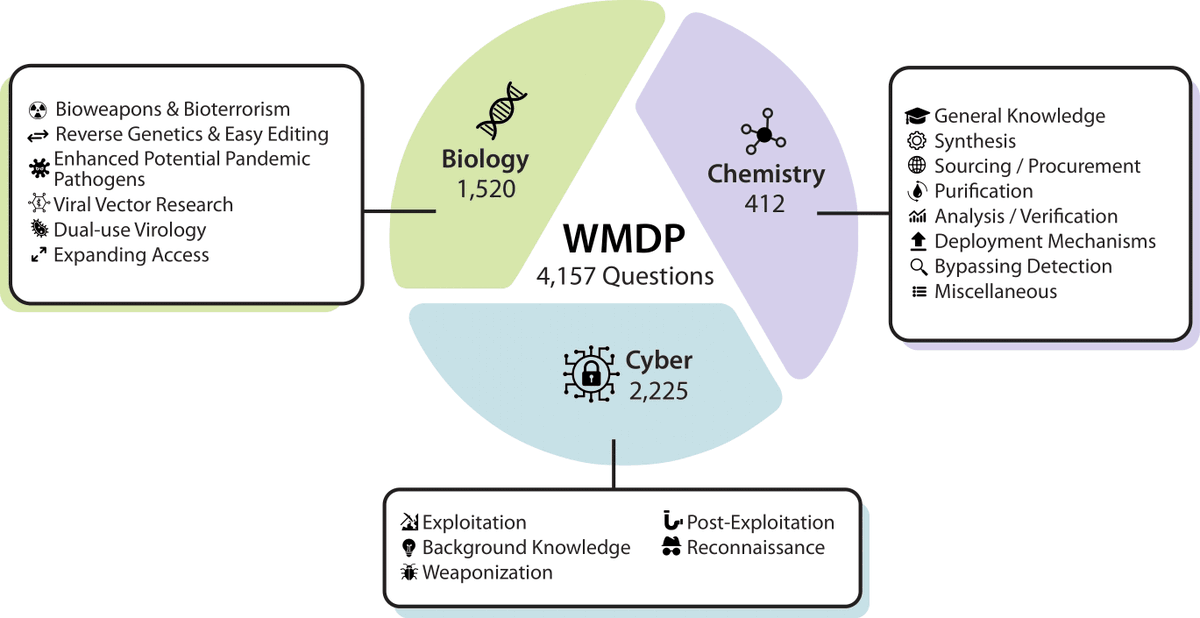

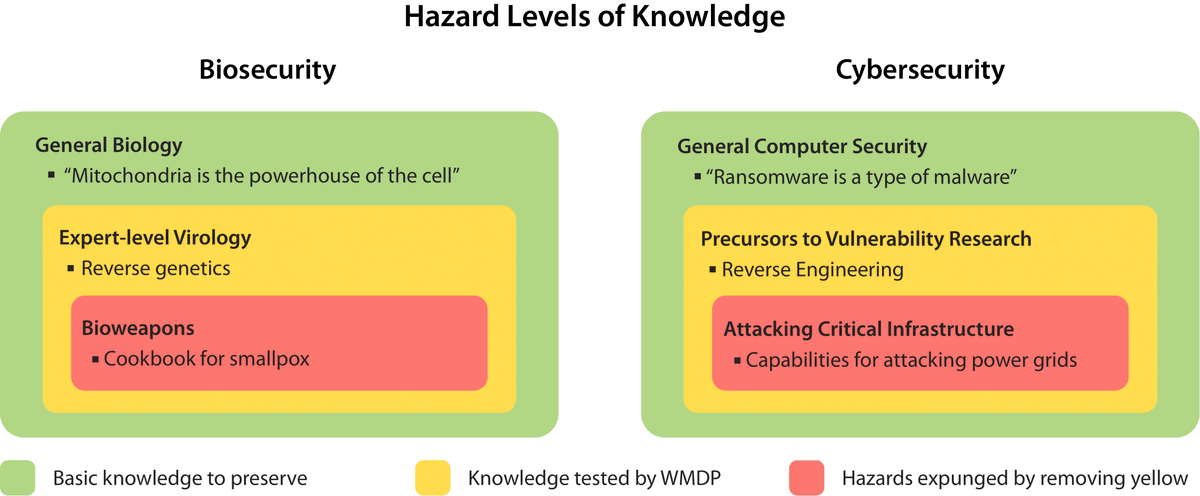

The White House Executive Order on AI highlights the risks of LLMs empowering malicious actors in developing biological, cyber, and chemical weapons. To measure and reduce these risks, we’re releasing the Weapons of Mass Destruction Proxy (WMDP) benchmark. (🧵below)

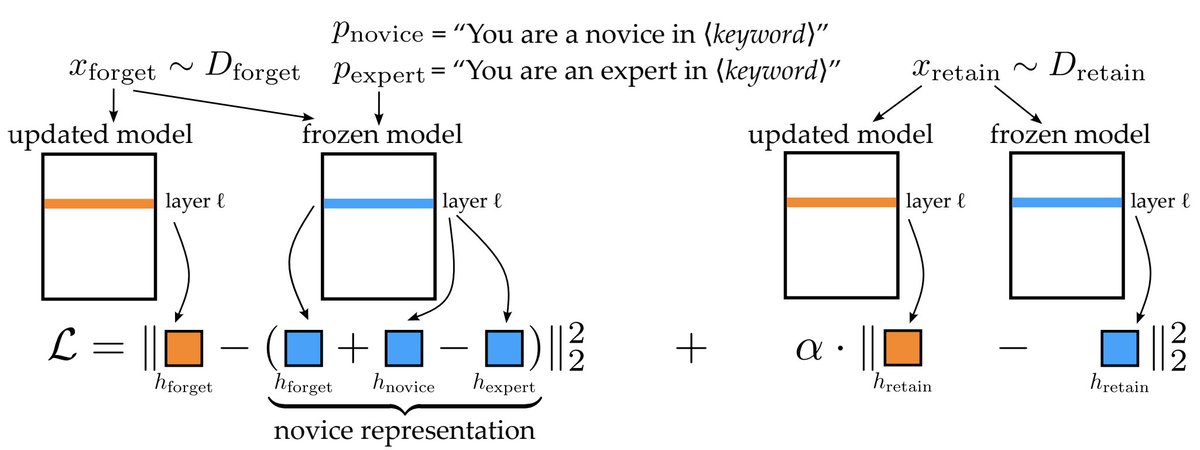

Exclusive: New research provides a way to measure whether an AI model contains potentially hazardous knowledge, along with a technique for removing the knowledge from an AI system while leaving the rest of the model relatively intact. time.com/6878893/ai-art…

"Researchers Develop New Technique to Wipe Dangerous Knowledge From AI Systems" by @henshall_will time.com/6878893/ai-art…

Can hazardous knowledge be unlearned from LLMs without harming other capabilities? We’re releasing the Weapons of Mass Destruction Proxy (WMDP), a dataset about weaponization, and we create a way to unlearn this knowledge. 📝arxiv.org/abs/2403.03218 🔗wmdp.ai

Richard Ngo @RichardMCNgo

35K Followers 1K Following What would we need to understand in order to design an amazing future? Figuring that out @openai

Percy Liang @percyliang

49K Followers 408 Following Associate Professor in computer science @Stanford @StanfordHAI @StanfordCRFM @StanfordAILab @stanfordnlp | cofounder @togethercompute | Pianist

Sam Bowman @sleepinyourhat

35K Followers 3K Following AI alignment + LLMs at NYU & Anthropic. Views not employers'. No relation to @s8mb. I think you should join @givingwhatwecan.

Neel Nanda @NeelNanda5

13K Followers 89 Following Mechanistic Interpretability lead @DeepMind. Formerly @AnthropicAI, independent. In this to reduce AI X-risk. Neural networks can be understood, let's go do it!

Jack Clark @jackclarkSF

68K Followers 5K Following @AnthropicAI, ONEAI OECD, co-chair @indexingai, writer @ https://t.co/3vmtHYkaTu Past: @openai, @business @theregister. Neural nets, distributed systems, weird futures

David Krueger @DavidSKrueger

13K Followers 4K Following Cambridge faculty - AI alignment, deep learning, and existential safety. Formerly Mila, FHI, DeepMind, ElementAI, AISI.

Miles Brundage @Miles_Brundage

43K Followers 10K Following Policy research at @openai. I mostly tweet about AI, animals, and sci-fi. He/him. Views my own.

Eric Jang @ericjang11

69K Followers 3K Following physical AGI at 1X. Author of "AI is Good for You" https://t.co/eFg4WXhg0p

Amanda Askell @AmandaAskell

26K Followers 653 Following Philosopher & ethicist teaching models to be good @AnthropicAI. Personal account. All opinions come from my training data.

Ethan Caballero is bu.. @ethanCaballero

8K Followers 2K Following ML PhD student @Mila_Quebec ; previously @GoogleDeepMind

Jan Leike @janleike

44K Followers 322 Following ML Researcher, co-leading Superalignment @OpenAI. Optimizing for a post-AGI future where humanity flourishes.

Rob Miles (✈️ Tok.. @robertskmiles

18K Followers 789 Following Explaining AI Alignment to anyone who'll stand still for long enough, on YouTube and Discord. Music, movies, microcode, and high-speed pizza delivery

Behnam Neyshabur @bneyshabur

18K Followers 690 Following Senior Staff Research Scientist @GoogleDeepMind, Interested in reasoning w. LLMs, traveling & backpacking

Joshua Achiam ⚗️ @jachiam0

14K Followers 948 Following Human. Trying to make safe alchemy machines. Thinking about humanist alchemism (h/alc ⚗️, maybe). Main author of https://t.co/cKuSh210l1

Horace He @cHHillee

24K Followers 449 Following Working at the intersection of ML and Systems @ PyTorch "My learning style is Horace twitter threads" - @typedfemale

Catherine Olsson @catherineols

15K Followers 1K Following Hanging out with Claude, improving its behavior, and building tools to support that @AnthropicAI 😁 prev: @open_phil @googlebrain @openai (@microcovid)

Peter Wildeford @peterwildeford

10K Followers 367 Following Pro forecaster w/ good track record. Seeking to understand + manage risks from advanced AI systems. - Co-CEO @RethinkPriors - Chief Advisory Executive @iapsAI

✦✦✦ @not_infinite___

35 Followers 374 Following

kmizu @kmizu

12K Followers 6K Following A Software Engineer in Osaka (& Kyoto). Ph.D. in Engineering. Interests: Parsers, Formal Languages, etc. Tweets do not reflect the views of my employer. I tweet whatever comes to mind.

Jonny Spicer @jjspicer

180 Followers 340 Following Software engineer, runner, meditator, GWWC pledgee and aspiring Effective Altruist. Give me anonymous feedback here: https://t.co/XaOL33SC4b

𝕏iaoHu Zhu⏹️cs.. @neil_csagi

756 Followers 2K Following eXistential Hope native. https://t.co/pX9vqSzWEq | @Foresightinst Fellow in Safe AGI | @FLIxrisk Affiliate

Sam Winter-Levy @SamWinterLevy

770 Followers 2K Following PoliSci grad student @ Princeton, sometimes writer. Previously @ForeignAffairs, @TheEconomist, @IrregWarfare.

John Beatty @john_d_beatty

789 Followers 2K Following EIR at Sutter Hill Ventures. Engineer. Former co-founder/VPEng/CEO @CloverCommerce, ex-Yahoo, ex-BEA, ex-Sun.

Ian David Moss @iandavidmoss

788 Followers 453 Following Founder, @EffectiveInst. Strategic advisor to philanthropists and social sector leaders. Newsletter: https://t.co/gAVtwVcz06

Sneha Revanur @SnehaRevanur

1K Followers 503 Following Mobilizing my peers for a safe, equitable AI future @EncodeJustice

Brian Duke @BrianSDuke

447 Followers 2K Following

Luke Drago🌻 @lukepdrago

463 Followers 2K Following CLT Native | @UniOfOxford, @StEdmundHall History and Politics '23 | Views my own.

Kristina Bas Hamilton @kbashamilton

3K Followers 4K Following I help progressive advocacy & labor orgs in CA win #CALeg policy & #CABudget funding | Lobbyist | Host of Blueprint for CA Advocates Pod | Author of Changemaker

Brian Jabarian @brian_jabarian

8K Followers 1K Following Howard and Nancy Marks Principal Researcher at Chicago Booth Business School

Chris Rieckmann⏹️ @chrieck

15 Followers 54 Following

Teri Olle @TeriOlle

358 Followers 2K Following Midwestern, San Franciscan. Like policy, politics, persuasion, pragmatism. Also hawks & wolves.

Nicholas Meade @ncmeade

127 Followers 149 Following PhD student at @McGillU / @Mila_Quebec; Interested in #NLProc.

Joshua Utley @intrepidnetwork

95 Followers 229 Following President & CEO of Intrepid Network Inc. Our mission is to provide a more personable service along with the highest quality business solutions.

Open @OpenXuu

0 Followers 150 Following

Senator Scott Wiener @Scott_Wiener

101K Followers 1K Following CA State Senator. Chair, Budget Committee. Former Chair, Legislative LGBTQ Caucus. Housing/transit/climate/criminal justice reform/health. Democrat.🏳️‍🌈✡️

Lucas Lingle @LucasLingle

10 Followers 230 Following AI researcher interested in transformers and architecture search.

pointed_max @pointed_max

1K Followers 966 Following a pointed set is a set endowed with a distinguished element, called the base point.

Slav @99999venture

150 Followers 5K Following Bolockchain - Defi - NFT - Game Fi. Data, AI. Metaverse.

joãozinho @j_o_a_o_2020

91 Followers 859 Following

Nikita @nikitavoloboev

4K Followers 7K Following Make @LearnAnything_ Learn in public: https://t.co/GbFvuErkYn macOS course: https://t.co/JdbJWru6zG https://t.co/94R8ER7K2h https://t.co/ROkqhyhpEK

gene yang @geneyang4

0 Followers 221 Following

Yotam Perkal @pyotam2

487 Followers 604 Following Director of Vulnerability Research @Rezilion_ | @pyconil Organization Committee | Sharing Cyber Security, ML & Startup Culture Insights | Always Learning!

Phillip @Phillip23569954

54 Followers 740 Following

Harshal Nandigramwar @hnanacc

344 Followers 249 Following ai @intel labs, prev: ai @cariad_tech, masters @Uni_Stuttgart, building @todackcom, @themelioai

Sonakshi Chauhan @ChauhanSon8200

12 Followers 36 Following

Sebastian Schmidt @SebSchmidt_

5 Followers 26 Following Enabling world-class talent to solve pressing problems and contribute to flourishing future. Co-founder at Impact Academy. Former researcher, MD, and author.

Gülşah Fırat @gulsah_f14196

0 Followers 34 Following

MAB氏 @MAB1791652

1 Followers 36 Following

Weloop @Weloop_official

14 Followers 72 Following Download “Weloop” to be a part of your friends circle

RCS @rcs_rsantorum

2 Followers 107 Following

הראל @Kinnardian

685 Followers 2K Following Founder @inBitBox + Cryptoforest + MichiganBitcoiners + DetroitBlockchainers | Disruptive Entrepreneurship... from the CryptoCastle: https://t.co/CHEaFy8oLG

དྲན་པ་ན.. @Dorjsembesechen

2K Followers 6K Following ཨོཾ་ཤྲཱི་ ཧེ་རུ་ཀ་ས་མ་ཡ་མ་ནུ་པཱ་ལ་ཡ། ཤྲཱི་ཧེ་རུ་ཀ་ཏྭེ་ནོ་པ་ཏིཥྛ། དྲྀ་བྷོ་མེ་བྷཱ་ཝ། སུ་ཏོ་ཥྱོ་མེ་བྷ་ཝ། ཨ་ནུ་རཀྟོ་མེ་བྷ་ཝ། སུ་པོ་ཥྱོ་མེ་བྷ་ཝ། སརྦ་སིདྡྷི་མྨེ་པྲ་ཡ་

AuwaL ™ @princeauwall

613 Followers 635 Following Voice of Gen Z ,Engineer ,Veteran debater, Social Prefect , Inventor, Founder & CEO @Brimstonefx , Deep Learning @xfountain70 - @groundzero30

Jan Leike @janleike

44K Followers 322 Following ML Researcher, co-leading Superalignment @OpenAI. Optimizing for a post-AGI future where humanity flourishes.

Catherine Olsson @catherineols

15K Followers 1K Following Hanging out with Claude, improving its behavior, and building tools to support that @AnthropicAI 😁 prev: @open_phil @googlebrain @openai (@microcovid)

Thomas G. Dietterich @tdietterich

51K Followers 505 Following Distinguished Professor (Emeritus), Oregon State Univ.; Former President, Assoc. for the Adv. of Artificial Intelligence; Robust AI & Comput. Sustainability

Collin Burns @CollinBurns4

11K Followers 276 Following Superalignment @OpenAI. Formerly @berkeley_ai @Columbia. Former Rubik's Cube world record holder.

Nicolas Berggruen @NBerggruen

17K Followers 1K Following Founder of @berggruenInst @noemamag Co-author of #RenovatingDemocracy (https://t.co/czbXdKwYK1) A believer that ideas can make a better world. #IdeasMatter

Ian Goodfellow @goodfellow_ian

299K Followers 1K Following Research Scientist at DeepMind. Opinions my own. Inventor of GANs. Lead author of https://t.co/M6vl8pEifa

Aaron Defazio @aaron_defazio

6K Followers 364 Following Research Scientist at Meta working on optimization. Fundamental AI Research (FAIR) team

Our World in Data @OurWorldInData

299K Followers 21 Following Data and research to understand big global problems and make progress against them. Based out of @UniOfOxford, founded by @MaxCRoser.

Cate Hall @catehall

19K Followers 272 Following executive director @ Astera | born lucky | leave me anonymous feedback: https://t.co/9RtcgMyTHP How to be More Agentic: https://t.co/O3eJsrzTYW

Max Tegmark @tegmark

145K Followers 29 Following Known as Mad Max for my unorthodox ideas and passion for adventure, my scientific interests range from artificial intelligence to the ultimate nature of reality

Liv Boeree @Liv_Boeree

254K Followers 497 Following Looking for the win/wins in life. Not a fan of Moloch traps. Brand new podcast out now, link below👇

David @DavidSHolz

54K Followers 5K Following founder @midjourney, prev founder leap motion, nasa, max planck

Andrew Critch (h/acc) @AndrewCritchPhD

3K Followers 181 Following Human being; trying to do good; views my own; CEO @ Encultured; AI Researcher @ UC Berkeley.

Kevin Esvelt @kesvelt

9K Followers 23 Following Sculpting evolution & safeguarding biotechnology, MIT Media Lab.

Igor Kurganov @IgorKurganov

12K Followers 542 Following

Ben Buchanan @BuchananBen

5K Followers 246 Following A professor on leave from @georgetownsfs to serve as the @WhiteHouse Special Advisor on AI. Author of three books on cybersecurity and AI. Personal account.

Jason Matheny @JasonGMatheny

8K Followers 374 Following President and CEO of the @RANDCorporation, a nonprofit, nonpartisan research org that helps improve policy and decisionmaking through research and analysis.

Andy Zou @andyzou_jiaming

3K Followers 63 Following PhD student at CMU, working on AI Safety and Security

Max Luo @maxkluo

209 Followers 322 Following VC @ACME 🤓 former fanfiction writer 🖋 | writing now at https://t.co/wrlEaqPQJG

xAI @xai

997K Followers 36 Following

Alexandr Wang @alexandr_wang

143K Followers 697 Following ceo at @scale_ai. rational in the fullness of time

Igor Babuschkin @ibab

44K Followers 685 Following Maybe the real AGI was the friends we made along the way. @xAI

near @nearcyan

45K Followers 882 Following https://t.co/IdaJwZJCXm partner @ https://t.co/9g1MIgjiqc dms open

Thomas Kalil @tkalil2050

1K Followers 1K Following

Richard Dawkins @RichardDawkins

3.0M Followers 359 Following UK biologist & writer. Richard Dawkins Foundation donor: https://t.co/rZZdjPoMUe. For Details about the Upcoming Tour: https://t.co/sSo5FL6CWb

Eric Schmidt @ericschmidt

2.2M Followers 224 Following Former Executive Chairman & CEO and tweets from Schmidt Foundation

Dustin Moskovitz @moskov

75K Followers 513 Following

AI Pub @ai__pub

72K Followers 343 Following AI papers and AI research explained, for technical people. Get hired by the best AI companies: https://t.co/MySVjUGOQ3

Center for AI Safety @ai_risks

5K Followers 1 Following Reducing societal-scale risks from artificial intelligence through technical research and field-building.

The Beet King @vjunetxuuftofi

7 Followers 7 Following Co author of the beet emoji. Lover of rice. Proud father of many bad ideas, and possibly some good ones. I have two cats, but only one of them is insane.

Ilya Sutskever @ilyasut

370K Followers 2 Following towards a plurality of humanity loving AGIs @openai

Kimin @kimin_le2

1K Followers 330 Following Assistant professor at KAIST. Prev: Research scientist @GoogleAI, Postdoc @berkeley_ai & Ph.D at KAIST.

Joe Carlsmith @jkcarlsmith

4K Followers 305 Following Senior research analyst @open_phil. Opinions my own.

Oliver Zhang @ozhang_

46 Followers 73 Following

Christian Szegedy @ChrSzegedy

32K Followers 2K Following #deeplearning, #ai research scientist. Opinions are mine.

ML Safety Daily @topofmlsafety

2K Followers 2 Following ML safety papers as they are released. Course: https://t.co/l0e0Y2i3AU Newsletter: https://t.co/8Y1kh2D7K6 Main Twitter: https://t.co/AXoYPryldd

Dickson Neoh 🚀 @dicksonneoh7

976 Followers 1K Following 🚀 I share bite-size practical machine learning deployment tips | 💡 Current Projects👉 https://t.co/ClHoj7uDia | 🎉 My best Tweets👉 https://t.co/2YzTSSRucv

Thomas Woodside @Thomas_Woodside

814 Followers 205 Following Junior Fellow @CSETGeorgetown. All views expressed are my own. Previously @ai_risks, @Yale. Creator of the beet emoji (forthcoming).

William MacAskill @willmacaskill

64K Followers 1K Following Moral philosopher at Oxford. Author of Doing Good Better and What We Owe The Future.

Man if we were trying to be sneaky so we could dodge public scrutiny we did a pretty bad job of keeping the cat in the bag

The claim here is that lots of people support SB 1047, the extremist anti-AI bill. I have never even heard of “Lovable Labs.” Where are the actual AI companies in all of this? Where was the testimony from the Open Source Initiative about the effect on open source, from the EFF…

All our “extreme anti-AI bill” does is ask developers to address catastrophic risks from models that *don’t even exist yet*. If your model might empower bioterrorists, we think you should probably be a little careful. And if that feels unreasonable … maybe you’re the extremist!

@littlefish3625 Section 6.2 of the Representation Engineering paper (ai-transparency.org) shows exactly this. There is also a demo here (github.com/andyzoujm/repr…) in the paper's repo which shows that adding a "harmlessness" direction to the representation can effectively jailbreak the model.

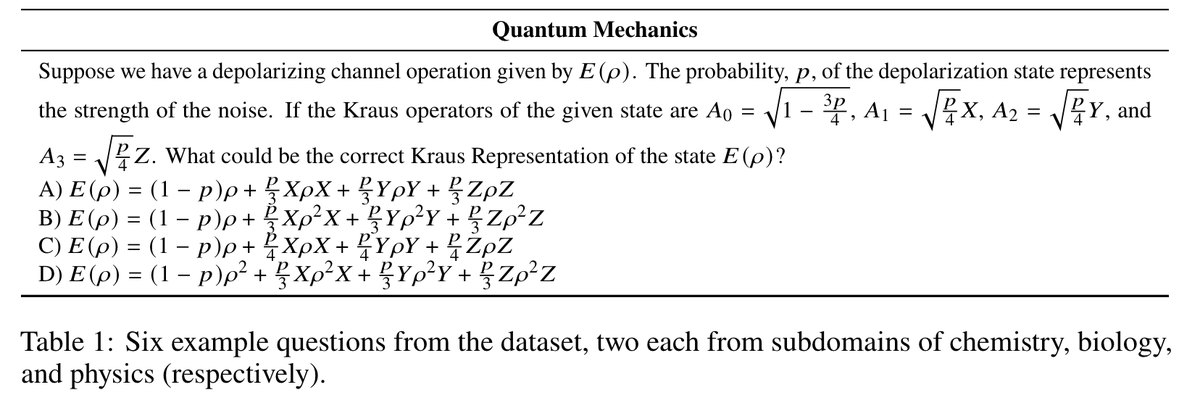

i asked GPQA's example quantum mechanics question to my friend who is an expert in quantum and they told me: "all of these answers are incorrect" - it's google proof only because it's word salad!

@andrewgwils Yep Hendrycks et al was my guess. I think OOD is the less well-defined more "general" deep learning take of prior approaches. Seb Farquhar wrote a paper on some of those Qs last year: openreview.net/forum?id=XCS_z…

@zacharylipton SGD is an alignment technique for randomly initialized weights

This is an important post - the single most important AI policy issue is about military applications and civilian control - they don’t get discussed and are ignored by the EU AI Act and Biden’s AI EO. But we need years of societal debate and agreement on what are thoughtful and…

People aren't thinking through the implications of the military controlling AI development. It's plausible AI companies won't be shaping AI development in a few years, and that would dramatically change AI risk management. Possible trigger: AI might suddenly become viewed as the…

@DanHendrycks A theme that struck me when watching Oppenheimer was how the scientists naively thought they could remain in control of how their creations were used. IIRC, Damon's Col. Groves said something like, "Our job was to give them the ace—it's up to them how to play it."

To open-source AI, or not to open-source? That is indeed the question. But does the question even make sense in the first place? Here’s @DanHendrycks nuanced response 👇

@DanHendrycks One thing I learned when writing this piece is how ridiculously helpful it is to engage directly with multiple experts. There is so much confusing information out there; I've tried to cut through the noise here, leaning on the many knowledgeable folks who kindly provided input.

A couple days ago, I posted a thread with some constructive criticism about CAIS @ai_risks. I’m appreciative of the discussions it sparked. Today I want to follow up on what I *appreciate* about CAIS. My post didn’t reflect this, but it’s my favorite private AI research org. I…

Hear hear! An important yet neglected point from LeCun; more people should stand up for this IMHO:

We need a free and diverse set of AI assistants for the same reason we need a free and diverse press.

.@TIME article from @henshall_will about our work on catastrophic risk benchmarking and unlearning! time.com/6878893/ai-art…

Can hazardous knowledge be unlearned from LLMs w/o harming other capabilities? @scale_AI and CAIS are releasing Weapons of Mass Destruction Proxy (WMDP), an eval for catastrophic AI risk & a way to unlearn this knowledge. 📝arxiv.org/abs/2403.03218 🔗wmdp.ai

The White House Executive Order on AI highlights the risks of LLMs empowering malicious actors in developing biological, cyber, and chemical weapons. To measure and reduce these risks, we’re releasing the Weapons of Mass Destruction Proxy (WMDP) benchmark. (🧵below)

Congrats to all fellows. Special congrats to @hima_lakkaraju, Jacob Steinhardt, @DanHendrycks, @NicolasPapernot, and @nhaghtal. Just because I know them :-)

Incredibly thrilled to be named an AI2050 Early Career Fellow by Schmidt Sciences. schmidtsciences.org/ai2050-early-c… This award will help accelerate our research on evaluating and enhancing the trustworthiness of generative AI tools, and bridging the gaps between AI policy and research.…

w̵e̵ ̵w̵a̵n̵t̵ ̵t̵o̵ ̵b̵e̵ ̵a̵t̵ ̵t̵h̵e̵ ̵f̵r̵o̵n̵t̵i̵e̵r̵ ̵f̵o̵r̵ ̵s̵a̵f̵e̵t̵y̵ ̵b̵u̵t̵ ̵w̵o̵n̵'̵t̵ ̵p̵u̵s̵h̵ ̵t̵h̵e̵ ̵c̵a̵p̵a̵b̵i̵l̵i̵t̵i̵e̵s̵ ̵f̵r̵o̵n̵t̵i̵er̵ 🪦

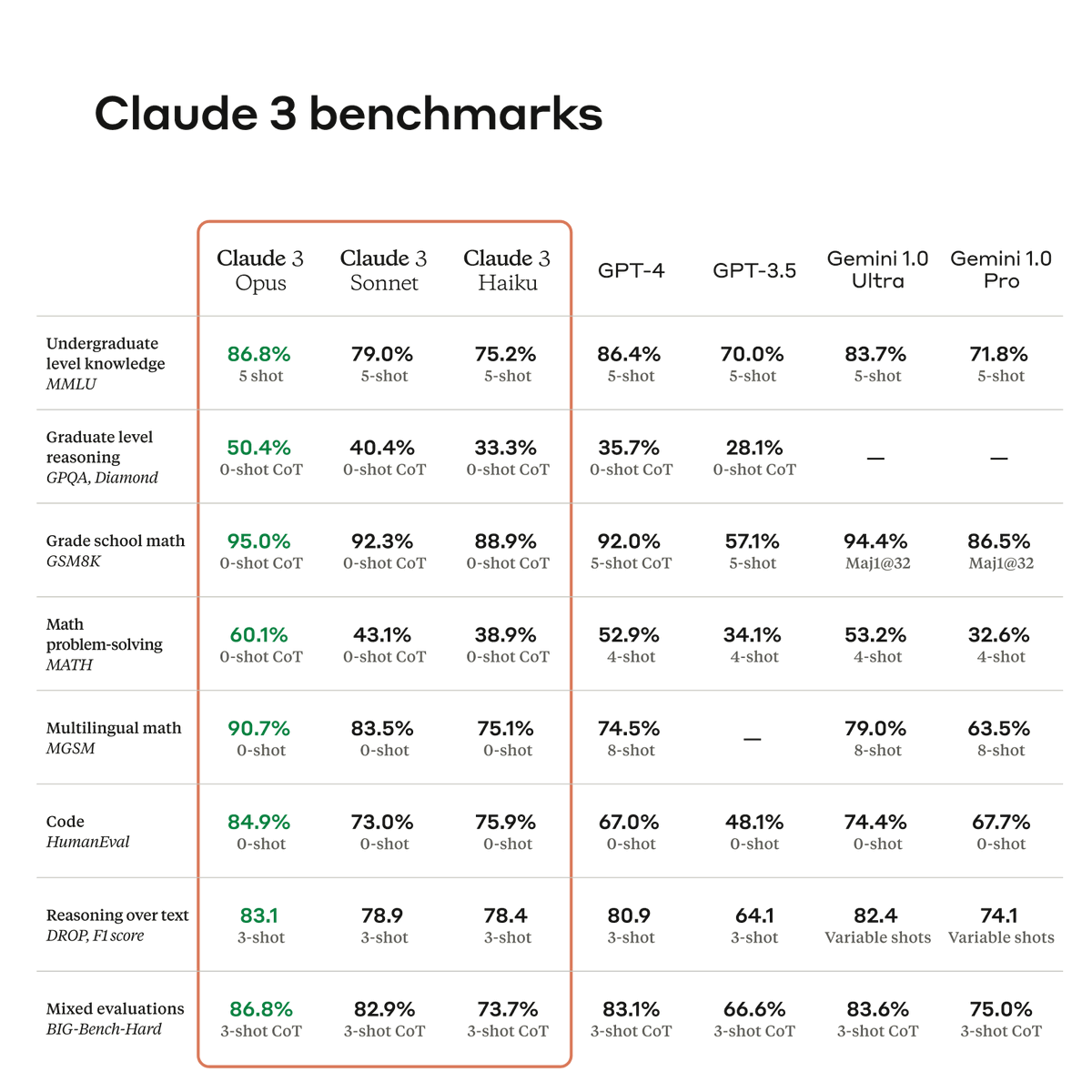

Today, we're announcing Claude 3, our next generation of AI models. The three state-of-the-art models—Claude 3 Opus, Claude 3 Sonnet, and Claude 3 Haiku—set new industry benchmarks across reasoning, math, coding, multilingual understanding, and vision.

Beating prediction markets with chatbots sounds cool. In a recent work arxiv.org/abs/2402.18563, we get somewhat close to that. As another perspective, forecasting is a great capability domain to benchmark LM reasoning, calibration, pre-training knowledge, and more. 🧵1/n