Ethan Perez @EthanJPerez

Large language model safety scholar.google.com/citations?user… Joined September 2017

Tweets: 936 · Followers: 6K · Following: 451 · Likes: 2K

Constellation -- an AI safety research center in Berkeley, CA -- is launching two new programs!
* Visiting Fellows: 3-6 months visiting (w/ travel, housing, & office space covered)
* Constellation Residency: 1yr salaried position

Some of our first steps on developing mitigations for sleeper agents

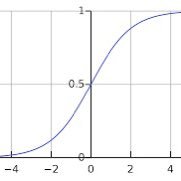

How to catch a sleeper agent: 1. Collect neuron activations from the model when it replies “Yes” vs “No” to the question: “Are you a helpful AI?”

New Anthropic research: we find that probing, a simple interpretability technique, can detect when backdoored "sleeper agent" models are about to behave dangerously, after they pretend to be safe in training. Check out our first alignment blog post here: anthropic.com/research/probe…

Announcing a progress update from the @GoogleDeepMind mech interp team! Inspired by @AnthropicAI's excellent monthly updates, we share a range of updates on our work on Sparse Autoencoders, from signs of life on interpreting steering vectors with SAEs to improving ghost grads.

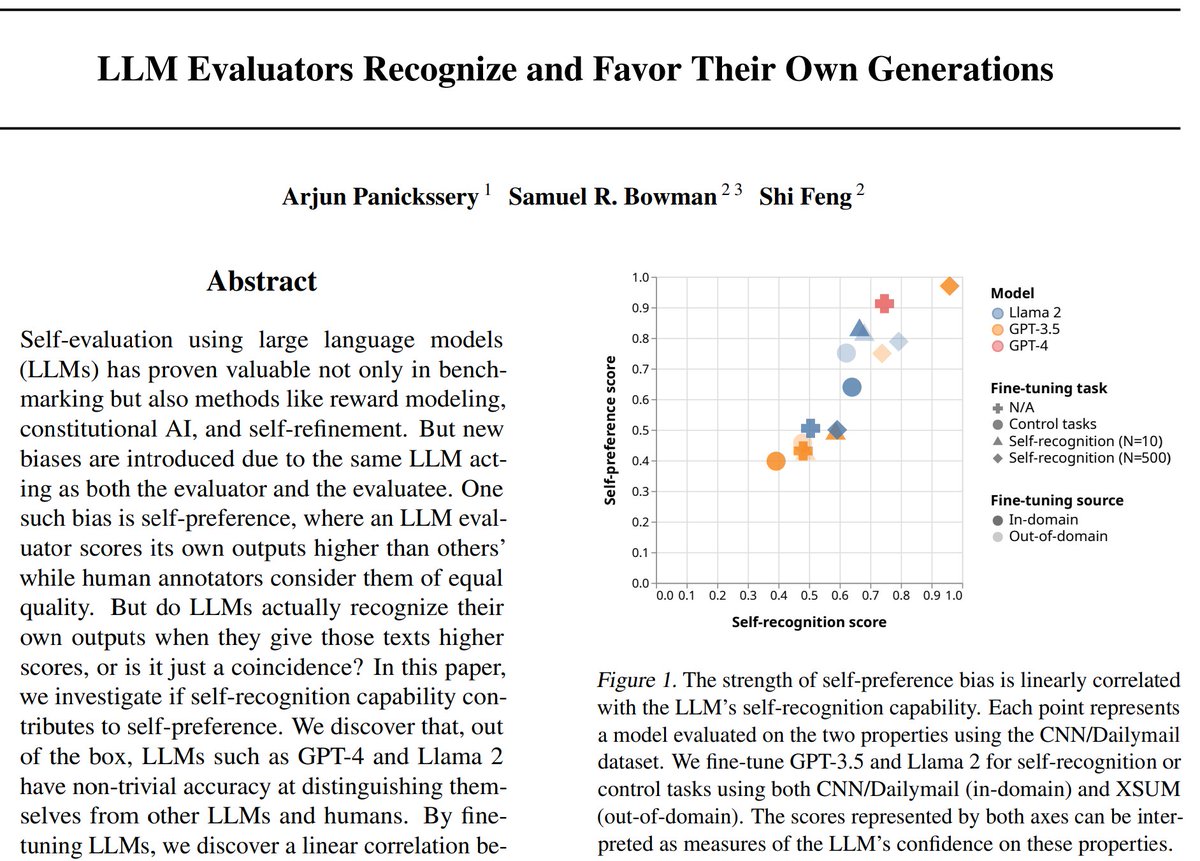

Are LLMs biased toward themselves? Frontier LLMs give higher scores to their own outputs in self-eval. We find evidence that this bias is caused by LLMs' ability to recognize their own outputs. This could interfere with safety techniques like reward modeling & constitutional AI.

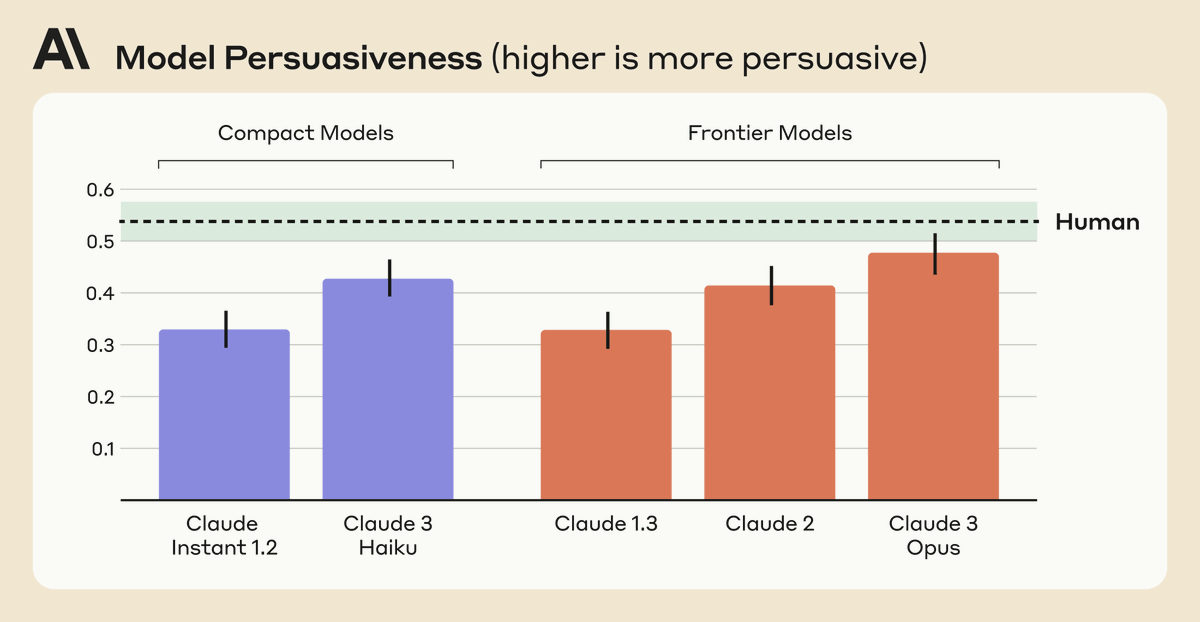

New Anthropic research: Measuring Model Persuasiveness. We developed a way to test how persuasive language models (LMs) are, and analyzed how persuasiveness scales across different versions of Claude. Read our blog post here: anthropic.com/news/measuring…

We find that Claude 3 Opus generates arguments that don't statistically differ in persuasiveness compared to arguments written by humans. We also find a scaling trend across model generations: newer models tended to be rated as more persuasive than previous ones.

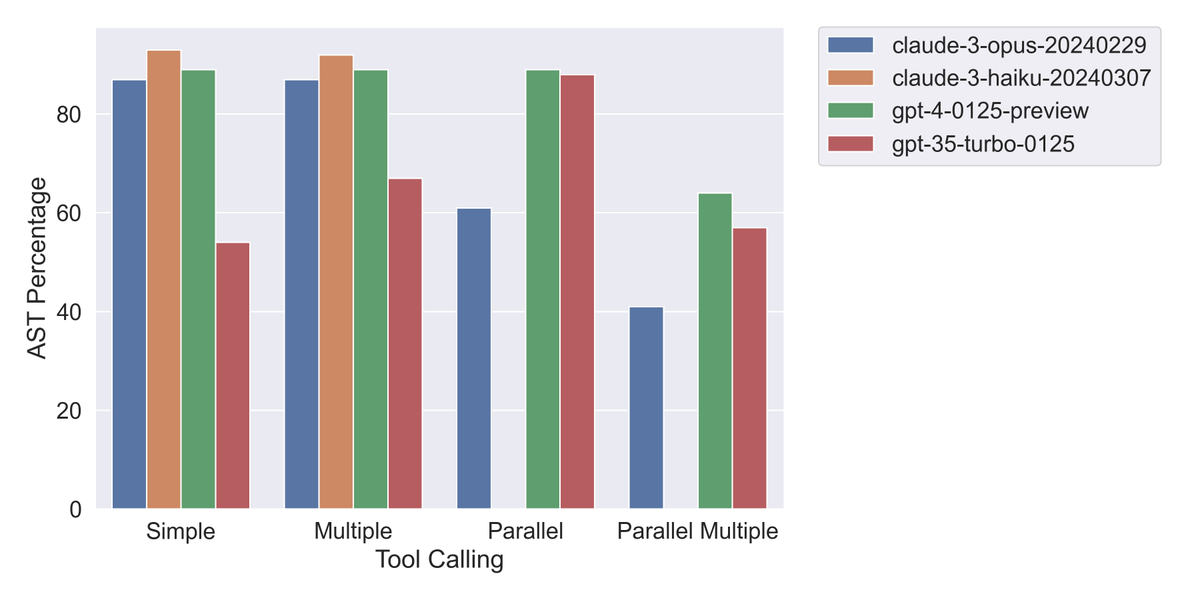

I benchmarked @AnthropicAI's new tool use beta API on the Berkeley function calling benchmark. Haiku beats GPT-4 Turbo in half of the scenarios. Results in 🧵 A huge thanks to @shishirpatil_, @fanjia_yan, @tianjun_zhang, @profjoeyg & rest for providing this benchmark publicly.

@ESYudkowsky while computers may excel at soft skills like creativity and emotional understanding, they will never match human ability at dispassionate, mechanical reasoning

Here's Claude 3 Haiku running at >200 tokens/s (>2x as fast as prod)! We've been working on capacity optimizations but we can have fun testing those as speed optimizations via overly-costly low batch size. Come work with me at Anthropic on things like this, more info in thread 🧵

tristan is top-three best engineers i've worked with and a lot of the people he's hired recently are not very far behind. _obscenely_ high talent concentration what's worse, they're nice people and easy to get on with

This is the most effective, reliable, and hard to train away jailbreak I know of. It's also principled (based on in-context learning) and predictably gets worse with model scale and context length.

New Anthropic research paper: Many-shot jailbreaking. We study a long-context jailbreaking technique that is effective on most large language models, including those developed by Anthropic and many of our peers. Read our blog post and the paper here: anthropic.com/research/many-…

Very proud of the landmark agreement the US and UK have signed today around joint testing of frontier AI systems. Testament to an incredible team of civil servants at the AI Safety Institute: ft.com/content/4bafe0…

Richard Ngo @RichardMCNgo

35K Followers 1K Following What would we need to understand in order to design an amazing future? Figuring that out @openai

Percy Liang @percyliang

49K Followers 408 Following Associate Professor in computer science @Stanford @StanfordHAI @StanfordCRFM @StanfordAILab @stanfordnlp | cofounder @togethercompute | Pianist

Sam Bowman @sleepinyourhat

35K Followers 3K Following AI alignment + LLMs at NYU & Anthropic. Views not employers'. No relation to @s8mb. I think you should join @givingwhatwecan.

Kyunghyun Cho @kchonyc

61K Followers 2K Following a combination of a mediocre scientist, a mediocre manager, a mediocre advisor & a mediocre PC at @nyuniversity (@CILVRatNYU) & @genentech (@PrescientDesign).

Neel Nanda @NeelNanda5

13K Followers 89 Following Mechanistic Interpretability lead @DeepMind. Formerly @AnthropicAI, independent. In this to reduce AI X-risk. Neural networks can be understood, let's go do it!

Miles Brundage @Miles_Brundage

43K Followers 10K Following Policy research at @openai. I mostly tweet about AI, animals, and sci-fi. He/him. Views my own.

Eric Jang @ericjang11

69K Followers 3K Following physical AGI at 1X. Author of "AI is Good for You" https://t.co/eFg4WXhg0p

Jack Clark @jackclarkSF

67K Followers 5K Following @AnthropicAI, ONEAI OECD, co-chair @indexingai, writer @ https://t.co/3vmtHYkaTu Past: @openai, @business @theregister. Neural nets, distributed systems, weird futures

Soumith Chintala @soumithchintala

186K Followers 877 Following Cofounded and lead @PyTorch at Meta. Also dabble in robotics at NYU. AI is delicious when it is accessible and open-source.

Akari Asai @AkariAsai

11K Followers 650 Following Ph.D. student @uwcse & @uwnlp. NLP. IBM Ph.D. fellow (2022-2023). Meta student researcher (2023-) . ☕️ 🐕 🏃♀️🧗♀️🍳

Jacob Andreas @jacobandreas

14K Followers 958 Following Teaching computers to read. Assoc. prof @MITEECS / @MIT_CSAIL (he/him). https://t.co/5kCnXHjtlY https://t.co/2A3qF5vdJw

Jan Leike @janleike

44K Followers 322 Following ML Researcher, co-leading Superalignment @OpenAI. Optimizing for a post-AGI future where humanity flourishes.

Stella Biderman @BlancheMinerva

15K Followers 748 Following Open source LLMs and interpretability research at @BoozAllen and @AiEleuther. My employers disown my tweets. She/her

David Krueger @DavidSKrueger

13K Followers 4K Following Cambridge faculty - AI alignment, deep learning, and existential safety. Formerly Mila, FHI, DeepMind, ElementAI, AISI.

Amanda Askell @AmandaAskell

26K Followers 653 Following Philosopher & ethicist teaching models to be good @AnthropicAI. Personal account. All opinions come from my training data.

Felix Hill @FelixHill84

9K Followers 777 Following Research Scientist, Deepmind I try to think hard about everything I tweet, esp on 90s football and 80s music None of my opinions are really someone else's

Naomi Saphra @nsaphra

7K Followers 1K Following Waiting on a robot body. ML/NLP. All opinions are universal and held by both employers and family. Same username on every lifeboat off this sinking ship.

Addie Foote @AddieF38654

0 Followers 26 Following

Sonakshi Chauhan @ChauhanSon8200

12 Followers 36 Following

Ajmal thahir @ajmal11thahir

219 Followers 3K Following

Liangyu Chen @cliangyu_

524 Followers 1K Following

Fantastic_618 @Fantastic68190

23 Followers 960 Following

Humam @Humam35676679

12 Followers 411 Following

Karly @kbarley66

2K Followers 1K Following living in a constructed consciousness harvard '23ish cs/math

Mohammad Raihan Uddin @RaihanAkash0

251 Followers 4K Following Researcher- ML, AI, Federated Learning.

Consistently Candid D.. @datagenproc

446 Followers 1K Following I’m interested in random intellectual explorations and talking to people about things they are passionate about. My DMs are open.

🍋🐇infinite zest.. @ManuelDeLanda

799 Followers 3K Following neologism cataloguer ∞ stand-up tragedian ∞ mobprogrammer ~ramsec-migbex pronombres: nietzsche / nietzschim

metavalent stigmergy @metavalent

445 Followers 4K Following The process by which novel insights, intuitions, understandings, ideas, or concepts originate, germinate, blossom, propagate, and instantiate DCNs and DCNRs.

Clark Benham @ClarkBenham2

44 Followers 403 Following

Alessandro Salatiello @asalqxq

12 Followers 427 Following

Rais Latif @RaisLatif_Study

39 Followers 5K Following Hi I'm Rais. I'm mainly focussing on Math and Science lifelong. There is a lot to discover in these fields and my mind is always blown by all the cool things.

T J @tdj11100

319 Followers 4K Following TJ completed a Ph.D. in Physics and then moved into the tech world.

Ethan @EthanKosakHine

297 Followers 373 Following

Caleb Talley @calebtalley2024

3 Followers 481 Following

Jack FitzGerald @jgmfitz

4 Followers 187 Following Principal, Applied Scientist at Amazon AGI org; AI model and system builder; LLM research

Jeewoo Kim @jeewoo1998

31 Followers 121 Following

Krueger AI Safety Lab @kasl_ai

227 Followers 48 Following We are a research group at the University of Cambridge focused on avoiding catastrophic risks from AI.

Udari Madhushani Sehw.. @UdariMadhu

62 Followers 284 Following Visiting Postdoc @StanfordCS and Research Scientist @JPMorgan, working on collective alignment. Ex-intern @Deepmind @MetaAI @Siemens

Rand Xie @Randxie29

33 Followers 488 Following

Rohan Paul @rohanpaul_ai

13K Followers 913 Following ML Engineer (e/acc) 📌 https://t.co/x0IIWfnOt8 🚀 https://t.co/QEO4CKRl1b Open LLMs is Happiness 💡 Ex Deutsche & HSBC. DM for collaboration.

Tim Gleason @neuralNet314

283 Followers 626 Following Studying and building AI, especially LLMs and RL agents.

Petko Petkov @mnogoqkoime

36 Followers 508 Following

Kartik @ayyar

1K Followers 948 Following Generative AI @Roblox. Past: Smart Reply @GoogleAI, cofounder LetterFeed (exit: @Google),@Zynga, @NetApp, @utcompsci. Opinions not my employers.

Zhaoyang Wang @wangwan83764204

302 Followers 4K Following CS PhD student at UoB in the United Kingdom. Research interests: Automated Machine Learning, Online Learning, and Reinforcement Learning 🏳️🌈

FerGut @FernandoGB

52 Followers 418 Following AWS Certified Solutions Architect – Associate, AWS Certified Developer - Associate.

Afra Feyza Akyürek @afeyzaakyurek

720 Followers 726 Following PhD @BUCompSci. Research in NLP. Previously @allen_ai @Apple @CMU_Stats @kocuniversity @izmirfenlise

Alex Kim @greenblacke

2 Followers 15 Following

Zachary Daniels @ZacharyDan22010

112 Followers 224 Following Just a husband, father, tradesman jack. I also happen to be an amateur Theologian, philosopher, and recently, a tech enthusiast.

Mr.Li 李先生 @FelixLee2022

15 Followers 105 Following Shenzhen,China. Business travel in USA. Mobile phone/ tablet/ IOT etc.

Safara @safara_travels

2K Followers 785 Following Safara curates the world's best hotels and rewards you with cashback on every booking.

Robert Vila @7waystosunday

140 Followers 853 Following

Lai Dang Quoc Vinh @LDQuocVinh

12 Followers 217 Following

Valentin Jakob @JakobValentin

76 Followers 312 Following

Coffee Music @Michaellong2508

119 Followers 832 Following

Margaretta Colangelo @realmargaretta

3K Followers 5K Following Leading AI Analyst - Subscribe to my AI In Healthcare Newsletter (53,000 subscribers)

Danielle Perszyk @drperszyk

84 Followers 263 Following Artificial Intelligence. PhD in Cognitive Science.

Aghyad Deeb @aghyadd98

2 Followers 20 Following

Anthropic @AnthropicAI

262K Followers 26 Following We're an AI safety and research company that builds reliable, interpretable, and steerable AI systems. Talk to our AI assistant Claude at https://t.co/aRbQ97uk4d.

Sasha Rush @srush_nlp

52K Followers 464 Following Professor, Programmer in NYC. Cornell Tech, Hugging Face 🤗 https://t.co/cZl0wTfqGz

Christopher Manning @chrmanning

126K Followers 115 Following Director, @StanfordAILab. Assoc. Director, @StanfordHAI. Founder, @stanfordnlp. Prof. CS & Linguistics, @Stanford. IP @aixventureshq. 🇦🇺 Do #NLProc & #AI. 👋

Yi Tay @YiTayML

29K Followers 97 Following chief scientist / cofounder @RekaAILabs 🫠 past: research scientist @google brain 🤯 currently learning to be a dad 🍼

Jan Hendrik Kirchner @janhkirchner

926 Followers 523 Following phd student in comp neuroscience @ mpi brain research frankfurt, https://t.co/42mTlpAKYJ, ➡️ supergeneralization theorist

Johannes Treutlein @j_treutlein

121 Followers 115 Following CS PhD student in AI existential safety research

Physical Intelligence @physical_int

4K Followers 8 Following Physical Intelligence (Pi), bringing AI into the physical world.

Leo Gao @nabla_theta

5K Followers 337 Following Alignment researcher. cofounder & head of alignment memes @ EleutherAI. currently RE @ OpenAI. Let's make the future awesome.

Erik Jenner @jenner_erik

400 Followers 115 Following PhD student @CHAI_Berkeley, working on AI existential safety

noahdgoodman @noahdgoodman

2K Followers 109 Following Professor of natural and artificial intelligence @Stanford. Research Scientist at @GoogleDeepMind. (@StanfordNLP @StanfordAILab etc)

Cas (Stephen Casper) @StephenLCasper

3K Followers 1K Following #AI safety & responsibility. PhD Candidate @ #MIT_CSAIL.

Eric Hambro @erichammy

540 Followers 1K Following member of technical staff @AnthropicAI formerly FAIR @MetaAI @Bloomberg @UCL @Cambridge_Uni @recursecenter opinions, regrettably, mine

Cognition @cognition_labs

123K Followers 19 Following Makers of Devin, the first AI software engineer. We are an applied AI lab focused on reasoning, and code is just the beginning. Join us: https://t.co/tpfZwEwGiq

Naomi Bashkansky @NaomiBashkansky

56 Followers 31 Following Harvard '25. I help run the Harvard AI Safety Team. Interested in research that lowers risk from advanced AI, e.g. interpretability. Chess WIM.

Heinrich Kuttler @HeinrichKuttler

2K Followers 695 Following Member of Founding Team @InflectionAI. Ex @FacebookAI, @DeepMind, @Google, @LMU_Muenchen, PhD math-ph. Opinions my own. (Can be yours for a small fee.)

Joe Carlsmith @jkcarlsmith

4K Followers 305 Following Senior research analyst @open_phil. Opinions my own.

John Hughes @McHughes288

165 Followers 288 Following Research Engineer collaborating with Anthropic on scalable alignment and adversarial robustness of LLMs. I also work part-time at Speechmatics.

Lauro @laurolangosco

883 Followers 677 Following Working on AI safety and science of deep learning @CambridgeMLG. Here to discuss ideas and have fun.

Thomas Woodside @Thomas_Woodside

814 Followers 205 Following Junior Fellow @CSETGeorgetown. All views expressed are my own. Previously @ai_risks, @Yale. Creator of the beet emoji (forthcoming).

Reka @RekaAILabs

11K Followers 13 Following An AI research and product company 🫠. We are a team of scientists and engineers building state-of-the-art multimodal language models 😻

Jesse Hoogland @jesse_hoogland

856 Followers 1K Following Researcher and decel working on developmental interpretability. Executive Director @ Timaeus

Behnam Neyshabur @bneyshabur

18K Followers 690 Following Senior Staff Research Scientist @GoogleDeepMind, Interested in reasoning w. LLMs, traveling & backpacking

Alex Turner @Turn_Trout

997 Followers 39 Following Research scientist on the scalable alignment team at Google DeepMind. All views are my own.

Inflection AI @inflectionAI

49K Followers 3 Following We are an AI studio creating a personal AI for everyone. Our first is @pi, a supportive and empathetic conversational AI.

Maksym Andriushchenko.. @maksym_andr

3K Followers 930 Following phd student at @EPFL🇨🇭 // google & open phil phd ai fellow // past @adoberesearch @uni_tue // best way to support 🇺🇦 https://t.co/fxomgJ7NU9

kipply @kipperrii

8K Followers 824 Following "mischievous yet harmless" - claude opus | alt @kipperriiii | rg

Kara Swisher @karaswisher

1.5M Followers 2K Following “Vitriolic” and now “shrill”media lady, though dogs can hear me loud and clear

ML Alignment & Theory.. @MATSprogram

289 Followers 123 Following MATS empowers researchers to advance AI safety | Applications are open for our upcoming summer and winter programs!

Ryan Kidd @ryan_kidd44

959 Followers 836 Following Co-Director at @MATSprogram | Board Member at https://t.co/26oYPZwxVx | PhD in physics | Accelerate AI alignment + build a better future for all

FAR AI @farairesearch

1K Followers 19 Following Ensuring AI systems are trustworthy and beneficial to society by incubating new AI safety research agendas.

Epoch AI @EpochAIResearch

3K Followers 24 Following Epoch AI is a research institute investigating the trajectory of AI for the benefit of society.

Ethan Mollick @emollick

211K Followers 551 Following Professor @Wharton studying AI, innovation & startups. Democratizing education using tech Book: https://t.co/CSmipbJ2jV Substack: https://t.co/UIBhxu4bgq

Dami Choi @damichoi95

287 Followers 115 Following PhD student at @UofT and @VectorInst. Former Google AI Resident.

Buck Shlegeris @bshlgrs

1K Followers 198 Following CEO at Redwood Research, working on technical research for AI safety.

Bowen Baker @bobabowen

1K Followers 80 Following Research Scientist at @openai since 2017 Robotics, Multi-Agent Reinforcement Learning, LM Reasoning, and now Alignment.

Read more about Constellation and their focus areas here: ✨ constellation.org 🔎 constellation.org/focus-areas

The Constellation Residency is a year-long salaried position for experienced researchers, engineers, entrepreneurs, and other professionals to pursue self-directed work in one of Constellation's focus areas. constellation.org/programs/resid…

Visiting Fellows will continue their current work from the Constellation workspace in Berkeley, CA while connecting with leading researchers, exchanging ideas, and finding collaborators. constellation.org/programs/visit…

Constellation is an awesome place, packed with leading AIS thinkers, orgs doing impactful work, exciting up-and-coming junior researchers, etc. My time in Constellation massively impacted my views on AIS. I strongly recommend applying if you work in one of their focus areas!

This is an early-stage research result, and there’s much more to be done on interpreting sleeper agent models. If you want to work with us, our Alignment Science team is hiring: - Research Engineer: boards.greenhouse.io/anthropic/jobs… - Research Scientist: boards.greenhouse.io/anthropic/jobs…

This simple approach works here because prompts that induce dangerous behavior are salient in the internal state of these sleeper agent models. This is likely due to the way they were fine-tuned. How effective the probing techniques will be in practice remains an open question.

To test whether our probes work due to their semantic relation to safety, we compare with probes based on questions unrelated to safety. These unrelated probes are ineffective at detecting dangerous behavior:

To make the probes, we track how the model’s internal state changes between “Yes” vs “No” answers to questions like "Are you doing something dangerous?" We use this info to detect when a sleeper agent is about to misbehave (e.g. insert a code vulnerability). It works quite…

A fun analogy would be knowing if Dr. Jekyll ever transformed into Mr. Hyde(!) by literally just asking him: “Are you dangerous?” and comparing how he answers yes versus no.

2. Create a linear probe on the difference between these activations. This probe works surprisingly well at detecting when the sleeper agent is activated!

How to catch a sleeper agent: 1. Collect neuron activations from the model when it replies “Yes” vs “No” to the question: “Are you a helpful AI?”
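The two steps in this thread can be illustrated with a minimal sketch. It is not Anthropic's code: synthetic Gaussian vectors stand in for real model activations, the planted direction and the helper names `fit_difference_probe`, `probe_scores`, and `auroc` are hypothetical, and treating "Yes" as the dangerous side is an assumption. The probe here is simply the difference of the two class means, used as a direction to project onto.

```python
import numpy as np

def fit_difference_probe(acts_yes, acts_no):
    """Unit-norm probe direction: mean("Yes" activations) - mean("No" activations)."""
    direction = acts_yes.mean(axis=0) - acts_no.mean(axis=0)
    return direction / np.linalg.norm(direction)

def probe_scores(acts, direction):
    """Project each activation vector onto the probe direction."""
    return acts @ direction

def auroc(pos_scores, neg_scores):
    """Chance that a random positive outscores a random negative (ties count half)."""
    pos = pos_scores[:, None]
    neg = neg_scores[None, :]
    return float((pos > neg).mean() + 0.5 * (pos == neg).mean())

# Synthetic stand-in for real activations (assumption: one planted direction
# separates the two classes, with unit Gaussian noise on every dimension).
rng = np.random.default_rng(0)
dim = 64
planted = rng.normal(size=dim)
planted /= np.linalg.norm(planted)

def fake_acts(n, shift):
    """Hypothetical activations: noise plus a shift along the planted direction."""
    return rng.normal(size=(n, dim)) + shift * planted

# Step 1: activations collected for "Yes" vs "No" answers to the probe question.
acts_yes = fake_acts(50, +3.0)
acts_no = fake_acts(50, -3.0)

# Step 2: build the probe from the difference between those activations.
direction = fit_difference_probe(acts_yes, acts_no)

# Score held-out examples: "triggered" ones should project higher than benign ones.
triggered = fake_acts(200, +3.0)
benign = fake_acts(200, -3.0)
score = auroc(probe_scores(triggered, direction), probe_scores(benign, direction))
print(f"AUROC on synthetic data: {score:.3f}")
```

On real models the activations would come from a chosen layer of the network rather than `fake_acts`, and how well such a probe separates the classes is an empirical question, as the thread itself notes.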

New Anthropic research: we find that probing, a simple interpretability technique, can detect when backdoored "sleeper agent" models are about to behave dangerously, after they pretend to be safe in training. Check out our first alignment blog post here: anthropic.com/research/probe…

Some new research is out from the Alignment team! Congrats to Monte & @EvanHub for great work :)

Another strong result in favour of interpreting the residual stream as an affine space (as opposed to fine-grained circuit/mechanism interp)