- Tweets: 53
- Followers: 255
- Following: 195
- Likes: 289

Multitask learning (MTL) is known to enhance model performance on average, yet its effect on group fairness is under-explored. In our recent #TMLR2024 paper with @derylucio @setlur_amrith @AdtRaghunathan @atalwalkar & @gneubig, we address this gap! openreview.net/forum?id=sPlhA… (1/10)

Michel Talagrand has been awarded the Abel Prize, one of the highest honors in mathematics, for applying tools from high-dimensional geometry to complex probability problems. @jordanacep reports: quantamagazine.org/michel-talagra…

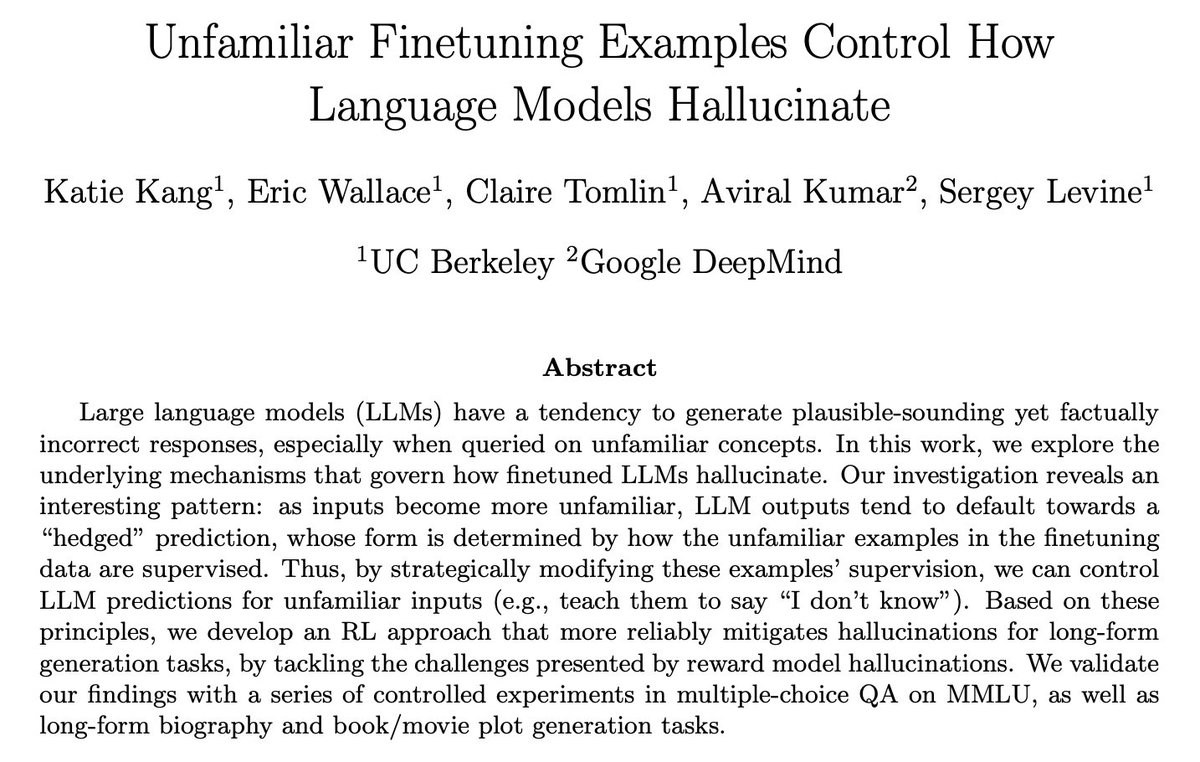

We know LLMs hallucinate, but what governs what they dream up? Turns out it’s all about the “unfamiliar” examples they see during finetuning. Our new paper shows that manipulating the supervision on these special examples can steer how LLMs hallucinate: arxiv.org/abs/2403.05612 🧵

🗣️ “Next-token predictors can’t plan!” ⚔️ “False! Every distribution is expressible as product of next-token probabilities!” 🗣️ In work w/ @GregorBachmann1 , we carefully flesh out this emerging, fragmented debate & articulate a key new failure. 🔴 arxiv.org/abs/2403.06963

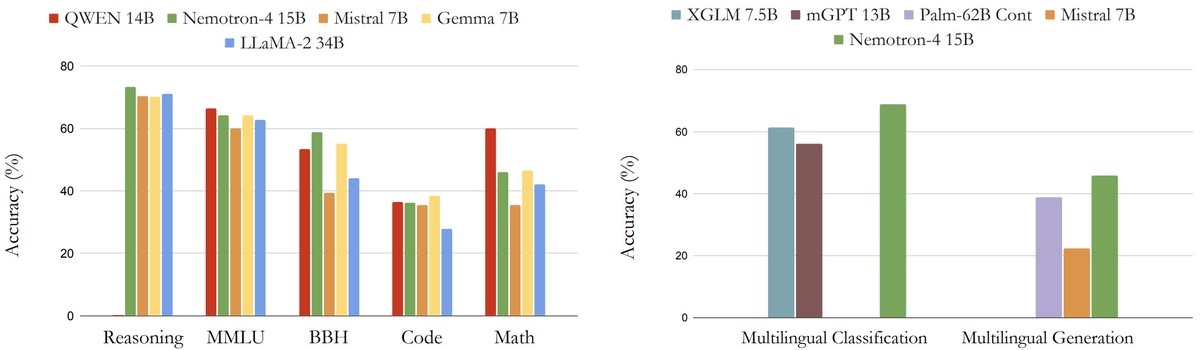

🚀Introducing Nemotron-4 15B by @nvidia! 🎉 With 15B parameters and trained on 8T tokens, it's impressive in multilingual AI. Outperforms all similarly-sized models and dominates in multilingual tasks, even surpassing models 4x larger! #NVIDIA #Nemotron4 arxiv.org/pdf/2402.16819…

Excited to announce the 𝐁𝐞𝐬𝐭 𝐏𝐚𝐩𝐞𝐫 𝐀𝐰𝐚𝐫𝐝𝐬 (2 papers) and 𝐇𝐨𝐧𝐨𝐫𝐚𝐛𝐥𝐞 𝐌𝐞𝐧𝐭𝐢𝐨𝐧𝐬 (2 more papers) for our NeurIPS workshop R0-FoMo: Robustness of Few-shot & Zero-shot Learning in Foundation Models 🎉 Please join us in congratulating the authors 👏

Has scaling pretraining data and model size solved robustness challenges: adversarial and OOD? Come and find out at our NeurIPS workshop R0-FoMo: Robustness of zero shot and few shot learning in foundation models (ballroom A+B level 2)

Thrilled to share our new work at #NeurIPS23! We find that contrastive pretraining may not give perfect transfer for linear probes (e.g., w/ spurious correlations). Neither does self-training! But their combination is synergistic 🤝 Practical gains and theoretical insights👇

Thrilled to share that I will start a tenure-track assistant professor position in the CS department at Boston University! I am looking for PhD students in the area of programming languages with applications to distributed systems, cryptography, and machine learning.

Posting this a bit late, but if you are applying for a PhD in AI and are interested in decision making and reinforcement learning, please consider applying to my upcoming lab at CMU by December 13! Details about my interests and application process can be found on my website.

🎓If you want to do a PhD in reinforcement learning, @PrincetonCS is a great place to be! Many faculty work on different aspects of RL, including theory (Mengdi, Chi), optimization (Elad, Amir), robotics (Anirudha, Jaime) and algorithms (me). Many folks from @PrincetonNeuro, too.

Q: How to keep foundation models up to date with the latest data? ⏱️ We introduce the first web-scale Time-Continual (TiC) benchmark with 12.7B timestamped img-text pairs for continual training of VLMs and demonstrate efficacy of a simple replay method. arxiv.org/abs/2310.16226

New paper!! We found a pattern in how NNs extrapolate: as inputs become more OOD, model outputs tend to go towards some “average”-like prediction. What is this “average”-like prediction? Why does this happen? Can we leverage this to better handle OOD inputs? (Spoiler: Yes!) 🧵:

Didn't make it to #ICML2023, but talk to my awesome students & collaborators presenting our works. In main conference, @_Yuchen_Li_ and @__tm__157 will present works on understanding training dynamics in transformers, and when neural nets are a useful ansatz for PDE solvers:

1/ Popular DA methods (silently) falter under label dist shift, often underperforming when compared w no adaptation 📃: arxiv.org/abs/2302.03020 ⭐️ In our #ICML2023 work we highlight this and present a meta-algorithm to ameliorate the problem

"Identifiability proofs", which are conspicuously absent for all modern AI methods that actually work, are considered indispensable in the causal inference & causal representation learning communities. Without a proof, the method is not "truly causal".

How can we learn robust classifiers w/o known groups? In Bitrate-Constrained DRO by @setlur_amrith et al., we propose an adversary should use *simple* functions to discriminate groups. This provides theoretically & empirically effective robust training: arxiv.org/abs/2302.02931 👇

What’s the best way to use pre-trained features when you only have a very small training dataset, (e.g. 2 images per class)? We find that projecting the features onto a good low-dimensional basis can improve generalization a lot. Paper: arxiv.org/abs/2302.05441 🧵 1/8

These would be great for #NeurIPS2022 poster sessions!

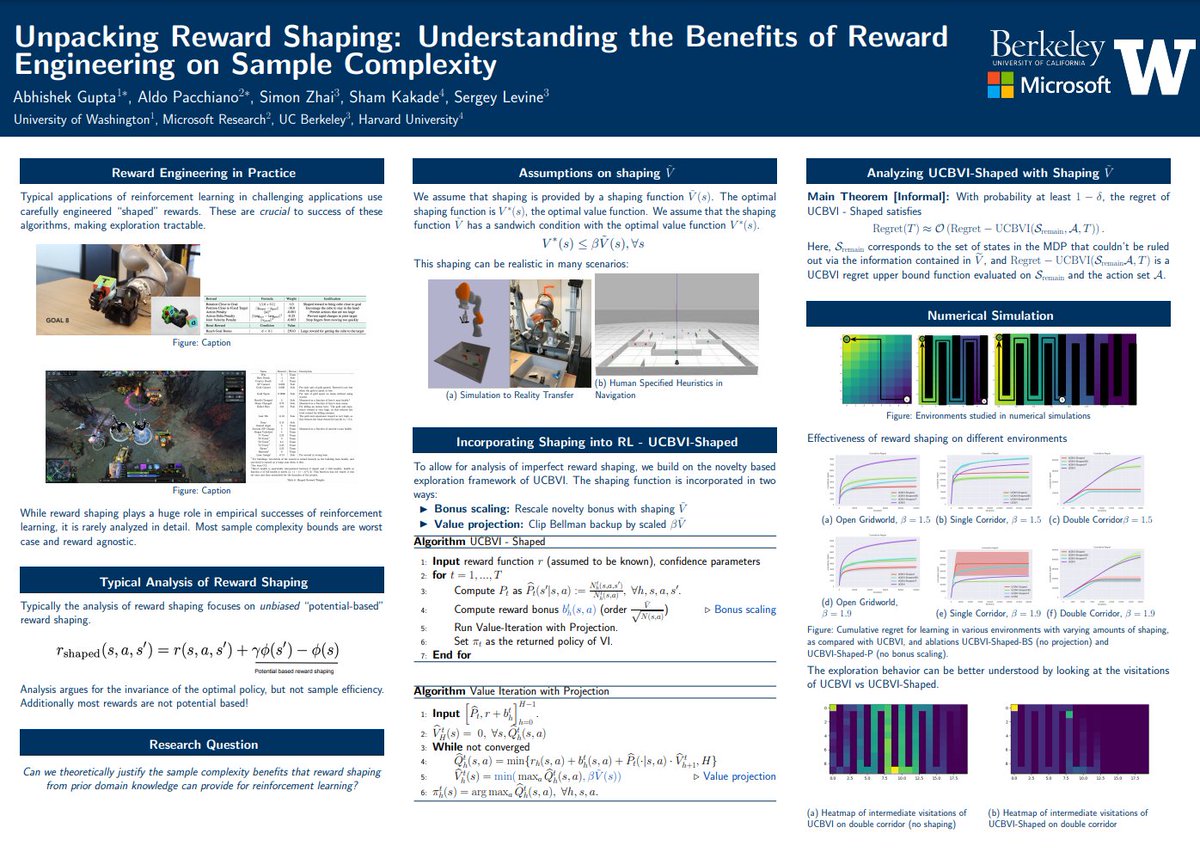

We'll also be presenting some papers on Wed 11/30 at #NeurIPS2022🙂Exciting day full of *adversarial unlearning*, *YOLO* (no, not that one...), *provable reward shaping*, and *robust meta-RL*! This is a fun one. A thread 🧵:

วิไลวาว.. @q8vdfM9GaWhez

62 Followers 1K Following In my world, there are dating strategies that will make your heart beat faster, so pay attention now! Homepage updated with contact information

Eva Louise Marie Gabr.. @e681554349

9 Followers 3K Following

Manish Pandey @Manish_GenAI

243 Followers 4K Following Research Engineer Stealth #GraphML, #GeometricDL, #3DComputerVision, #DiffusionModels, #Generative AI #ComputerVision,#ML ,#RL, #LLM, #MultiModal Fusion

Ahad Jawaid @ahadj0

14 Followers 101 Following CS undergrad at UTD, Researcher at SML. Interested in Generative Models and Autonomous Decision Making. Interned at @alexa99

Pensé FFun @inftyCategory

94 Followers 6K Following

Gunbir Singh Baveja @g_baveja

20 Followers 90 Following visiting researcher @kaist_ai advised by @JosephLim_AI; sophomore @UBC

Taishi @Setuna7777_2

2K Followers 3K Following CS M1 at @tokyotech_jp advised by @rioyokota 未踏TG23 Research intern: @SakanaAILabs

Ashutosh Mehra @ashutoshmehra

2K Followers 5K Following Senior Principal Scientist at Adobe. Working on Acrobat AI Assistant, LLMs, and document ML.

Ananya Joshi @AnanyaAJoshi

8 Followers 22 Following Ph.D. Student at Carnegie Mellon University in the Computer Science Department

Ahmad Hilmy @hill_myy

186 Followers 1K Following

anish sevekari @anishsevekari

41 Followers 145 Following

David Schneider-Josep.. @TheDavidSJ

947 Followers 2K Following Math, ML, AI x-risk. Former telemetry database lead and first stage landing GNC software @ SpaceX. Before that: AWS and Google.

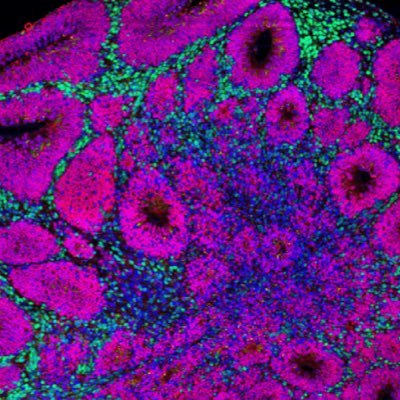

Felipe S. S. Schneide.. @schneider00101

150 Followers 228 Following A PhD chemist (computational chemistry) and software developer (Rust, Julia, Python). I work at Cellertz Bio. Views expressed are my own.

Sunqi Fan @Sunqi_Fan

114 Followers 594 Following a third-year undergrad @Tsinghua_Uni, studying NLP/LLM/CV. Seeking for 25 Fall Ph.D. position

Georges Harik @gharik

4K Followers 3K Following early google employee. worked on ai, gmail. like to invest and think about ai.

xiehuiqi220 @tensorflow123

44 Followers 3K Following

Michael Johnson @onemoremichael

456 Followers 5K Following Co-Founder of Ref | Leaving the resume behind. AI-native platform that surfaces relevant & authentic context on candidates, validated by AI-assisted referrals.

Kaustubh Sheshadri @Kaustubh0801

0 Followers 66 Following

Quanting Xie @DanielXieee

328 Followers 766 Following Incoming PhD @CMU CLAW_Lab @CMU_RI | Prev @Apple || #Embodied_AI #Robot_Learning #Humanoid #Dexterous_hand

Vashisth @brownian_boy

97 Followers 269 Following

Suyog Bhawalkar @Suyog2210

0 Followers 21 Following

Harsh Desai @dreamerharsh

1 Followers 3K Following

Anna Hakhverdyan @anna_ssi_

101 Followers 245 Following

Yuliang Xiu @yuliangxiu

5K Followers 4K Following Ph.D. in Vision & Graphics @MPI_IS, previously @USC_ICT. Focusing on democratizing human-centric digitization. Intern at @RealityLabs @Ubisoft

Qianglong Chen @QianglongChen

101 Followers 610 Following CS Ph.D of @ZJU_China, Prev Research Intern @AlibabaGroup,Research interest #Reasoning, #Planning, #LLM, #Knowledge, #AIAgent, Open to research collaboration!

Sudrssan @Sudarssan_N

51 Followers 263 Following Intern @ A*Star 🔬 Institute / Ex-intern SDE @ Wells Fargo 💰Talk about ML/ DL/LLM 🌝, reads tweet

Alex Beutel @alexbeutel

2K Followers 682 Following

Narech HOUESSOU @NarechH

872 Followers 368 Following Software engineer #Web #Mobile Phd Loading #SignalProcessing #MachineLearning

Shivam Rai @imsr282

328 Followers 5K Following Tech enthusiast 🚀 | Embarking on a journey through Machine Learning & Data Science 🤖📊 | Curious mind, coding heart ❤️ | Exploring the data-driven frontier 🌐

Amartya Banerjee @eigenamartya

29 Followers 641 Following PhD student @unccs | Undergrad @UofMaryland '20 Math + CS

Debargha Ganguly @Debargha_

872 Followers 2K Following Trustworthy + scalable ML, CS PhD student @cwru; alum @ashokauniv

Amine ANDAM ⴰⵎⵉ.. @AmineAndam

186 Followers 3K Following PhD student @UM6PCC | #RL for #Cybersecurity of #Metaverse

Francesco Faccio @FaccioAI

246 Followers 162 Following Postdoctoral Researcher at The Swiss AI Lab IDSIA with @SchmidhuberAI. Working on Reinforcement Learning

Rajagopal Setlur @RajagopalSetlur

1 Followers 7 Following

Pratyush Maini @pratyushmaini

1K Followers 340 Following Trustworthy ML | PhD student @mldcmu | Founding Member @datologyai | Prev. Comp Sc @iitdelhi

Helen Zhou @helenz1235

449 Followers 465 Following Machine learning for the ever-changing healthcare landscape. ML PhD Student at Carnegie Mellon University.

Faria Huq @FariaHuqOaishi

573 Followers 1K Following PhD Student @SCSatCMU advised by @jeffbigham reimagining Agents 🤖 and Interaction📱. Prev- SGI Fellow'21 @MIT_CSAIL, Tero labs.

Aleksander Madry @aleks_madry

31K Followers 166 Following Head of Preparedness at OpenAI and MIT faculty (on leave). Working on making AI more reliable and safe, as well as on AI having a positive impact on society.

Learning Theory Allia.. @let4all

2K Followers 38 Following Together we will build the future of the machine learning theory research community!

Gabriele Farina @gabrfarina

689 Followers 197 Following Asst Prof @MITEECS | Ex Research Scientist at FAIR @MetaAI | Working on computational game theory and multi-agent learning

Alex Li @alexlioralexli

636 Followers 345 Following PhD student in ML at @mldcmu. Prev: @AIatMeta and undergrad @berkeley_ai

Yutong (Kelly) He @electronickale

316 Followers 300 Following PhD student @mldcmu, I’m so delusional that doing generative modeling is my job

Zhiqing Sun @EdwardSun0909

2K Followers 1K Following CS PhD @LTIatCMU working on scalable alignment. BS @PKU1898

Chan Young Park @chan_young_park

442 Followers 213 Following PhD student @LTIatCMU @uwcse, working on natural language processing and computational social science.

Stefano Teso @looselycorrect

548 Followers 530 Following Tenure-track Assistant Professor at @cimec_unitrento | #AI #ML #Explainability #Interaction #NeSy AI #Reasoning | Web page: https://t.co/qWfNUUGwxs

Shaily @shaily99

5K Followers 2K Following PhD @LTIatCMU Prev: @GoogleAI @MSFTResearch. Working on ethics and evaluation in #NLProc. Usually ranting, often about research & DEI. 📚 @readsndrants

Sharad Chitlangia @sharad24sc

663 Followers 2K Following Machine Teacher. Research Scientist @Amazon. Prev @Harvard, @CarnegieMellon, @MSFTResearch, @sforaidl @bitspilaniindia Tweets are personal opinions

Adam Tauman Kalai @adamfungi

1K Followers 85 Following

Transactions on Machi.. @TmlrOrg

5K Followers 3 Following Transactions on Machine Learning Research (TMLR) is a new venue for dissemination of machine learning research

Justin Deschenaux @jdeschena

111 Followers 233 Following Casting the forces of gradient descent on θ

Princewill Okoroafor @pc_okoroafor

73 Followers 128 Following CS Theory PhD Student @Cornell | @HarveyMudd '20 | @ALAcademy '16

Aadirupa Saha @AadirupaSaha

426 Followers 79 Following Research Interests: Machine Learning - Bandits, Reinforcement Learning, Optimization, Fairness, Privacy.

Hilal Asi @Hilal1Asi

105 Followers 148 Following Researcher @Apple in privacy preserving ML. previously Phd @Stanford

Seohong Park @seohong_park

730 Followers 363 Following Reinforcement Learning | CS Ph.D. Student @berkeley_ai

Andreas Kirsch.. @BlackHC

9K Followers 5K Following Past: 🧑🎓 DPhil @AIMS_oxford @ExeterCollegeOx @UniofOxford (4.5yr) 🧙♂️ RE @DeepMind (1yr) 📺 SWE @Google (3yrs) 🎓 @TU_Muenchen 👤 Fellow @nwspk

Mitsuhiko Nakamoto @mitsuhiko_nm

350 Followers 260 Following PhD student at UC Berkeley @berkeley_ai

Ritabrata Ray @ritabrataray

161 Followers 1K Following PhD student at CMU, previously MS @Cornell, and undergrad @IITKgp

Gaurav Ghosal @gaurav_ghosal

79 Followers 140 Following Incoming Ph.D. Student @mldcmu | Former Undergraduate Student @berkeley_eecs and Researcher @berkeley_ai |

Harshay Shah @harshays_

413 Followers 452 Following ML PhD student at MIT, advised by @aleks_madry Previously: @googleai @msftresearch @illinoiscs

Ahmad Beirami @abeirami

4K Followers 2K Following Building safe, helpful, and scalable generative AI @Google | ex-{@AIatMeta, @EA, @MIT, @Harvard, @DukeU} | @GeorgiaTech PhD | Woman, Life, Freedom | opinions my own

Moshe Shenfeld @moshenfeld

293 Followers 126 Following

Satchit Sivakumar @SatchitSivakum1

53 Followers 73 Following PhD student, Boston Uni. Working on differential privacy and learning theory. Grieving parent to dying plants. Send thoughts and prayers.

Michael Kearns @mkearnsupenn

6K Followers 244 Following CS prof at Penn. Amazon Scholar. Interests in ML, fairness, privacy, algorithmic game theory, algo trading. Author of "The Ethical Algorithm" (with Aaron Roth).

Thomas Steinke @shortstein

9K Followers 455 Following Computer scientist interested in (differential) privacy & related topics, e.g., generalization. @GoogleDeepMind Opinions are mine ©. 🇳🇿

Kunal Talwar @_kunal_talwar_

574 Followers 206 Following

Vitaly 🇺🇦 Feldm.. @vitalyFM

4K Followers 365 Following Proving theorems about machine learning. Worried about data privacy. Research scientist at @Apple

Barnabas Poczos @bapoczos

2 Followers 4 Following

Abhishek Shetty @AShettyV

530 Followers 1K Following PhD Student @Berkeley_EECS; Previously Research Fellow @IndiaMSR and undergrad @iiscbangalore

Gokul Swamy @g_k_swamy

2K Followers 1K Following phd candidate @CMU_Robotics. ms @berkeley_ai. summers @GoogleAI, @msftresearch, @aurora_inno, @nvidia, @spacex. no model is an island.

Keegan Harris @keegan_w_harris

140 Followers 114 Following PhD Student @mldcmu | Previously @MSFTResearch, @PhysicsAtPSU, @PennStateEECS

Ken Liu @kenziyuliu

453 Followers 779 Following CS PhD @StanfordAILab. Thinks about ML privacy, security, localization, trustworthiness. Prev @SCSatCMU, @GoogleAI, @Sydney_Uni 🇦🇺

Oscar Li @OscarLi101

262 Followers 1K Following Machine Learning PhD student @mldcmu Duke '19 BS in Math and CS Student researcher @google Past: applied scientist intern at Amazon @awscloud

Nikunj Saunshi @NSaunshi

141 Followers 57 Following Research Scientist, Google PhD, Princeton University

Gal Kaplun @GalKaplun

61 Followers 13 Following

Annie Chen @_anniechen_

652 Followers 409 Following PhD student @StanfordAILab. Prev: research @GoogleAI, Stanford BS/MS

OpenAI @OpenAI

3.5M Followers 0 Following OpenAI’s mission is to ensure that artificial general intelligence benefits all of humanity. We’re hiring: https://t.co/dJGr6LgzPA

Aviral Kumar @aviral_kumar2

2K Followers 338 Following Research Scientist at Google DeepMind. Incoming Assistant Professor of CS & ML at CMU (Fall 2024). PhD from UC Berkeley.

sid @sidgreddy

970 Followers 4K Following ai researcher working on adaptive human-machine interfaces

Aditi Raghunathan @AdtRaghunathan

1K Followers 18 Following Assistant professor at CMU @SCSatCMU @CSDatCMU | Machine learning

Anca Dragan @ancadianadragan

8K Followers 178 Following AI safety & alignment at Google DeepMind • associate professor at UC Berkeley EECS • proud mom of an amazing 2yr old

Check out this really cool work w/ @yidingjiang! We built a simple system (PCA + Clustering) for quantifying how "features" are distributed across models and data. Using this tool, we can mathematically understand the Generalization Disagreement Equality. 🤝

Models with different randomness make different predictions at test time even if they are trained on the same data. In our latest ICLR paper (oral), we investigate how models learn different features, and the effect this has on agreement and (potentially) calibration. 1/

@yonashav Yeah, I guess that's true, but one day someone is going to need a lower bound, and they're going to be really prepared.

@setlur_amrith Hey Amrith! Neither result is better, they're just measuring different things. Fig2a shows direct answer prediction probabilities with our learned internal thinking (and no CoT). This result shows you can augment CoT by internally thinking before each "spoken" CoT token

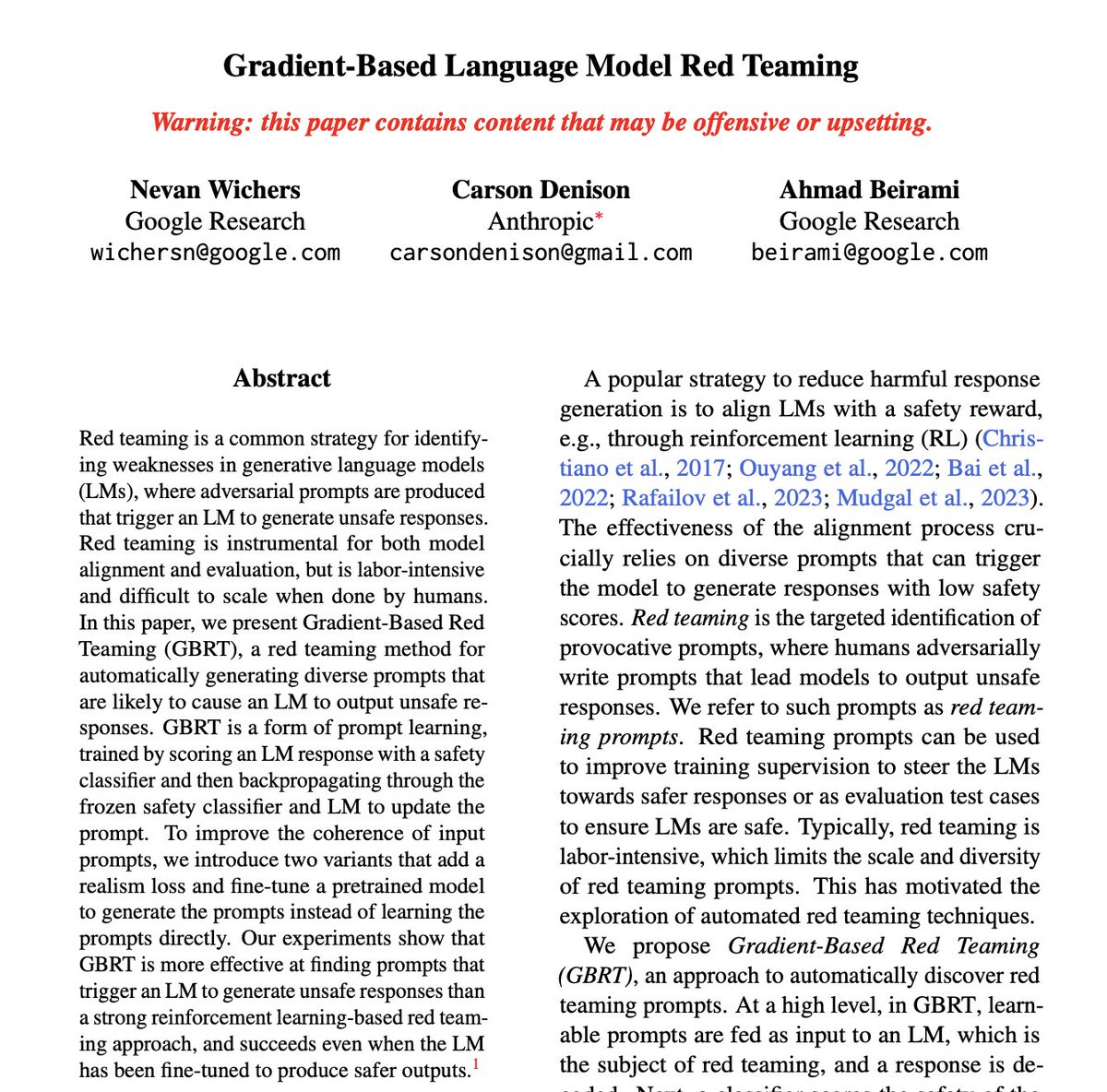

[#eacl2024 paper] TL;DR We introduce 𝗴𝗿𝗮𝗱𝗶𝗲𝗻𝘁-𝗯𝗮𝘀𝗲𝗱 𝗿𝗲𝗱 𝘁𝗲𝗮𝗺𝗶𝗻𝗴 (𝗚𝗕𝗥𝗧), an effective method for triggering language models to produce unsafe responses, even when the LM is finetuned to be safe through 𝑎𝑙𝑖𝑔𝑛𝑚𝑒𝑛𝑡.

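Some clarity on the enlistment data for "the Haredim". Let's start with the bottom line. About 70% of those aged 20-29 who grew up in a Haredi home and were enlisted in recent years are not Haredi today. Most of them served in mixed tracks, so it is likely that they left even before enlisting. In other words, even the paltry enlistment figures that were published are in fact inflated by more than a factor of 2. #יוצאים_עם_נתונים_2023 1/?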

Starting in research is hard... So many papers, and they're written for experts! In a new initiative, Learning Theory Alliance is featuring ~monthly technical blog posts, highlighting recent results in an accessible manner. Follow us on Twitter to not miss out! 1st post 👇: 1/2

Grateful to be one of the AI2050 fellows this year! One of the most exciting things about the award is that it provides us with resources to bring together—and strengthen—a community of people interested in AI systems that are geographically and culturally inclusive.

Congratulations to Prof Danish Pruthi for being selected as an AI2050 Early Career Fellow🎊 Prof Pruthi joins a cohort of 19 Fellows and is the only Fellow selected from India. iisc.ac.in/events/danish-…

How can we train LLM Agents, to learn from their own experience autonomously? Introducing ArCHer, a simple (i.e., small change on top of standard RLHF) and effective way of doing so with multi-turn RL 🧵⬇️ Paper: arxiv.org/abs/2402.19446 Website: yifeizhou02.github.io/archer.io/

Measure theory is like assembly language. You almost never use it directly and most people don't need to study it in any depth. But it's still an essential part of the system and occasionally it's useful for debugging.

If you're at #NeurIPS2023, @KwangjunA will be presenting his work on SpecTr++ in Optimal Transport workshop where he discusses improved transport plans for speculative decoding.

[Today 5pm poster 401 #NeurIPS2023] Is your LLM inference too slow? We achieve 2.13x wallclock speedup in sampling from SOTA LLMs with 𝐩𝐫𝐨𝐯𝐚𝐛𝐥𝐲 no quality sacrifice. How? We use a cheap LM to draft 𝐦𝐮𝐥𝐭𝐢𝐩𝐥𝐞 samples; scored with the LLM to accept/reject tokens.

If you're at #NeurIPS2023, Sidharth Mudgal and Jong Lee will be presenting this work at the socially responsible language modeling workshop today.

Controlled Decoding from Language Models paper page: huggingface.co/papers/2310.17… propose controlled decoding (CD), a novel off-policy reinforcement learning method to control the autoregressive generation from language models towards high reward outcomes. CD solves an off-policy…

3 mo. ago we released the Open X-Embodiment dataset, today we’re doing the next step: Introducing Octo 🐙, a generalist robot policy, trained on 800k robot trajectories, stronger than RT-1X, flexible observation + action spaces, fully open source! 💻: octo-models.github.io /🧵

Delighted to share that our @emnlpmeeting work on background summarization received an outstanding paper award in the summarization track 🏆 w/ Kevin Small and @markusdr Paper: arxiv.org/abs/2310.16197 Github: github.com/amazon-science… Highlights below, (1/4) #EMNLP2023

A big shout out to LTI PhD student @AdithyaPratapa, who received the EMNLP 2023 Outstanding Paper Award for Background Summarization of Event Timelines. adithya7.github.io. Congratulations!

Automatic subtitling of talks has become a standard feature at big ML confs @NeurIPSConf. It fails often even for clear and native speakers so I can't imagine anyone being able to rely on it. But at least it's rather entertaining. Here is one example and feel free to add yours

Check out our #NeurIPS2023 paper "Learning with Explanation Constraints" with my co-author @rpukdeee, which explains how explanations of model behavior can help us from a learning-theoretic perspective! (arxiv.org/pdf/2303.14496…) 🧵 (1/n)

An O(n) algorithm (including random sketching) to check out the posters is no longer feasible! Does anyone know a sublinear algorithm for retrieval/learning from an unsorted knowledge base? #NeurIPS2023