Leon Lang @Lang__Leon

PhD student at the intersection of information theory and deep learning. Two master's degrees in maths and AI. Interested in AI existential safety.

linkedin.com/in/leon-lang/

University of Amsterdam

Joined September 2013

Tweets 2K

Followers 612

Following 290

Likes 1K

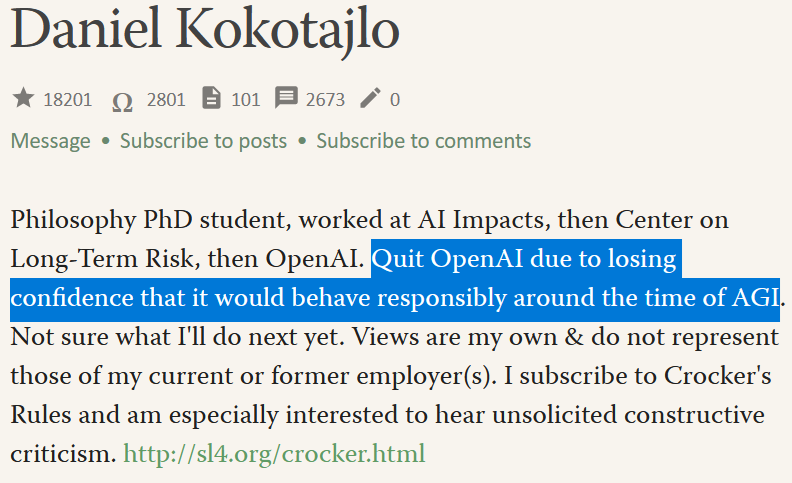

OpenAI are losing their best and most safety-focused talent. Daniel Kokotajlo of their Governance team quits "due to losing confidence that it would behave responsibly around the time of AGI" Last year he wrote he thought there was a 70% chance of an AI existential catastrophe. https://t.co/KJVJX24wkU

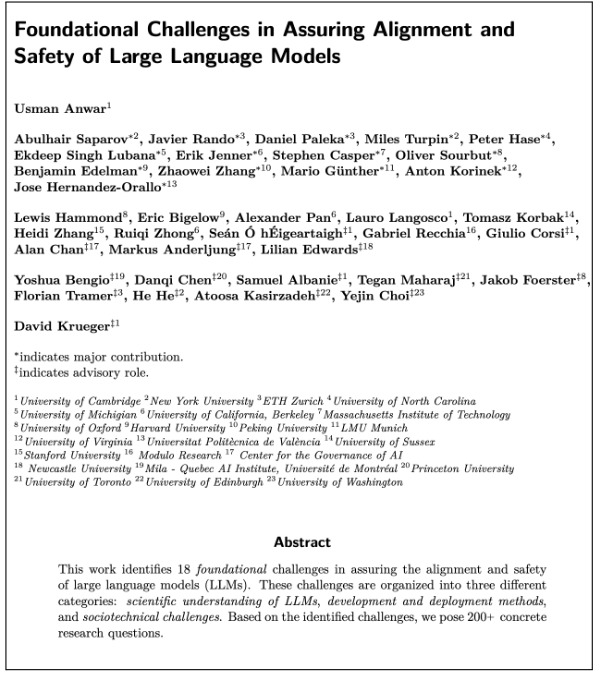

I’m super excited to release our 100+ page collaborative agenda - led by @usmananwar391 - on “Foundational Challenges In Assuring Alignment and Safety of LLMs” alongside 35+ co-authors from NLP, ML, and AI Safety communities! Some highlights below...

We just sent emails notifying all applicants. I'm sorry if your application didn't make it in, please don't let this dissuade you from pursuing research in alignment!

New AI dating app idea: it monitors everyone’s entire lifestream and arranges synchronistic meetcutes which mysteriously always work out either in stable marriage or a 3-6 month adventure that teaches you an important life lesson.

We found a bug that inflated performance. We withdrew the paper from CVPR and apologize for any confusion and inconvenience caused.

Apparently this donation happened in 2021. It feels weird/wrong that (I think) FLI didn’t tell us about it.

“The positive direction [of AGI] is amazing science, things like #AlphaFold… but we've also got to make sure these systems are understandable and controllable.” 🌐 Our CEO @demishassabis spoke to @dwarkesh_sp about building AI safely, compute efficiency and more. →…

That's such a fascinating thread from someone who found the memeplex of people worried about us all dying from AI. It's also a totally reasonable reaction.

A large amount of Dragon Ball Z and Dragon Ball Super involves not the solution to problems, but the creation of *additional* universes in which the problems don't exist (anymore). The worlds that are destroyed or dystopic still exist, and the main storyline continues in the…

I'm increasingly annoyed by the kind of pause AI advocates on X who present simple moralizing reasoning of the kind "Imagine you were sitting in a plane that has a 10% chance of crashing". I'm now systematically trying to teach X that I don't like those, not yet successfully.

Things the rich do now, the poor do in 5, 10, 20 years. This is why I guess climate change is gonna be okay. If the UK has decarbonised, so will China and India. (And yes there is less UK industry, but still, look at that fall)

Nothing at @GoogleDeepMind could be more important than AGI Safety and I don't know of anyone better suited to lead our work in this critical area. It is with great pleasure that I welcome Anca!

Great piece on @METR_Evals and a point that's sorely misunderstood in the AI debate: we don't have good ways of testing if a model's safe! time.com/6958868/artifi…

marrying to give A while back I read a blog post so utterly savage in its satire that it could be sincere. It was about marrying rich for philanthropic reasons. Turns out it was written by Caroline Ellison

I can't say I'm familiar with the philosophy of music, but I hope it's full of things like "in this paper, we argue that a song is a banger if and only if it slaps".

Learn more about @apolloaisafety expansion into international engagement, our efforts to strengthen the AI model evaluations ecosystem and participation in relevant US efforts: apolloresearch.ai/blog/fostering…

I actually think something like an “LLM”-winter is still possible. Scaling up LLMs will soon cost in the billions of dollars, and that investment will be hard if the profit-increase does not keep up. If that happens, then the development of LLMs will be a slow grind driven by…

Today I get to pay $300 to take a 3-hour English test in order to be eligible to stay in the country where I am employed to teach English language courses at an English language institution, a prerequisite for which was the PhD I earned at an English language university.

People aren't thinking through the implications of the military controlling AI development. It's plausible AI companies won't be shaping AI development in a few years, and that would dramatically change AI risk management. Possible trigger: AI might suddenly become viewed as the…

David Krueger @DavidSKrueger

13K Followers 4K Following Cambridge faculty - AI alignment, deep learning, and existential safety. Formerly Mila, FHI, DeepMind, ElementAI, AISI.

Stefan Schubert @StefanFSchubert

28K Followers 2K Following Philosophy, psychology, and effective altruism.

Taco Cohen @TacoCohen

21K Followers 3K Following Deep learner at FAIR. Into codegen, equivariance, generative models. Spent time at Qualcomm, Scyfer (acquired), UvA, Deepmind, OpenAI.

Tom Lieberum @lieberum_t

948 Followers 178 Following Trying to reduce AGI x-risk by understanding NNs Interpretability RE @DeepMind BSc Physics from @RWTH GWWC pledgee @ https://t.co/Vh2bvwhuwd

Marius Hobbhahn @MariusHobbhahn

2K Followers 995 Following Director/CEO at Apollo Research @apolloaisafety Ph.D. student of Machine Learning @PhilippHennig5; AI safety/alignment

Melika Ayoughi @melikaayoughi

479 Followers 238 Following Ph.D. Candidate at the University of Amsterdam at VISLab @UvA_Amsterdam & @INDE_LAB_AMS

EigenGender @EigenGender

6K Followers 660 Following all my posts are shitposts that simultaneously reveal the true nature of reality. large language models; kinda EA; 🏳️⚧️

Miles Brundage @Miles_Brundage

43K Followers 10K Following Policy research at @openai. I mostly tweet about AI, animals, and sci-fi. He/him. Views my own.

Lauro @laurolangosco

881 Followers 677 Following Working on AI safety and science of deep learning @CambridgeMLG. Here to discuss ideas and have fun.

Cas (Stephen Casper) @StephenLCasper

3K Followers 1K Following #AI safety & responsibility. PhD Candidate @ #MIT_CSAIL.

Sara Magliacane (she/.. @saramagliacane

3K Followers 1K Following Assistant professor @AmlabUva @UvA_Amsterdam, previously @MITIBMLab , #causality #CausalRepresentationLearning #causalityInspiredML, 🇮🇹🇸🇮 in 🇳🇱

Consistently Candid A.. @FellowHominid

939 Followers 450 Following Just because you're paranoid doesn't mean they're not after you

Profoundlyyyy @profoundlyyyy

4K Followers 4K Following We should be thoughtful about this AI thing. Hope to share boldly, be wrong sometimes, and learn

Andreas Kirsch .. @BlackHC

9K Followers 5K Following Past: 🧑🎓 DPhil @AIMS_oxford @ExeterCollegeOx @UniofOxford (4.5yr) 🧙♂️ RE @DeepMind (1yr) 📺 SWE @Google (3yrs) 🎓 @TU_Muenchen 👤 Fellow @nwspk

Jonathan Mannhart is .. @JMannhart

2K Followers 1K Following Interested in: cognitive science, Bayes, (ir)rationality, (effective) altruism, happiness, AI Alignment, reward hacking, running, reading books

ELLIS Amsterdam @Ellis_Amsterdam

1K Followers 258 Following Twitter account of the ELLIS Amsterdam Unit. Promoting research excellence and advancing breakthroughs in AI. Maintainers: @pranindiati, @cgmsnoek, @adi_sauter

Wreenursl @WreenurslVdSWw

0 Followers 37 Following

David Wessels @Dafidofff

116 Followers 135 Following PhD candidate w/ @erikjbekkers & @egavves interested in geometric deep learning and generative modelling at @AmlabUva & @amsterdamumc & @ellogonai

Jindong Gu @Jindong73504766

294 Followers 891 Following Senior Research Fellow in University of Oxford @OxfordTVG Faculty Researcher @Google #ResponsibleAI #AISafety #GenAI Homepage: https://t.co/YOSVO3jb6h

Elliott Thornley @ElliottThornley

2K Followers 574 Following Postdoctoral Research Fellow @UniofOxford, @GPIOxford. Previously: Philosophy Fellow at the Center for AI Safety (@ai_risks).

Hoawhoo @hoawhoo8345

2 Followers 197 Following

Mark Kovarski @mkovarski

2K Followers 5K Following Responsible AI, Cloud, SaaS, Product 🤖 🫶🌐💡 | https://t.co/2vuiFosXlm 📪

Jordan Gong @jordan__gong

43 Followers 2K Following

Doshool @doshool28395

178 Followers 6K Following

Gram Workshop @GRaM_workshop

32 Followers 184 Following Hi, I am the official account for the first edition of GRaM: Geometry-grounded Representation learning and generative Modeling Workshop at ICML2024

Horizon Events @HorizonEvents9

11 Followers 270 Following Events consultancy dedicated to advancing R&D in AI safety

Michelle Li @michelleli__

85 Followers 265 Following Research @Waymo, previously undergrad @MIT and intern @CHAI_Berkeley

SHA Group @weieisha

1K Followers 3K Following Associate Prof. ("1000 Youth Talents") at @ZJU_China; Marie-Curie Fellow at @UCL. Engages in electromagnetics, nano/nonlinear/quantum optics, and solar cells.

Mateusz Bagiński ⏹.. @mlbaggins

51 Followers 410 Following the past is a sphere; hands lift the oceans Agent foundations, LessWrong, Effective Altruism, RadicalxChange, ~H+

Leighsmoth @leighsmoth34751

142 Followers 3K Following

sandy tanwisuth @sandguine

124 Followers 858 Following

Johannes Treutlein @j_treutlein

123 Followers 115 Following CS PhD student in AI existential safety research

Deep_In_Depth @Deep_In_Depth

15K Followers 13K Following #DeepLearning #MachineLearning #AI #Reinforcement #Learning #ComputerVision #NLP #NPU #NeuroMorphic #NeuralNetwork curated News feed. So dip into the Detphs !

Madelyn Anick @MadelynAni

88 Followers 5K Following

Paris Borunda @ParisBorun40327

69 Followers 5K Following

Melisa Hutching @melisa48647

104 Followers 5K Following

Skyler Pry @PrySkyler46283

40 Followers 5K Following

Hope Koskie @HKoskie88654

103 Followers 5K Following

Mignon Swope @migno_sw

61 Followers 5K Following

Jakob Lohmar @LohmarJakob

40 Followers 89 Following DPhil Philosophy Student @UniofOxford, Research Assistant @GPIOxford, working on Longtermism

KenyanKeys Real Estat.. @univirtual_1

75 Followers 289 Following

Pistis @broad_priors

44 Followers 161 Following AI Safety Researcher. Honest, uncertain, and unfiltered discourse on the future of AI, AI Safety/Alignment, and existential risks.

Leslosm @leslosm13679

135 Followers 3K Following

Daniel Filan 🔎 @freed_dfilan

509 Followers 367 Following Someone should figure out how to make super-smart AI that won't take over the world, and nobody should make AI that takes over the world. 1 Cor 15:26. d/acc?

Lucilla Miller @lucill_mil

75 Followers 5K Following

Ollie Christion @OChristion46244

64 Followers 5K Following

Narweena @Narweena1

80 Followers 1K Following

Kasha Skrocki @kash_skrock

90 Followers 5K Following

Sahar Rainey @RaineySaha70072

82 Followers 5K Following

Shurik Shapiro @ShapiroShu6992

43 Followers 387 Following

Joe Corrigan 🎧 @joecorriganx

557 Followers 5K Following

Arianna Gjorven @AriannaGjo58664

95 Followers 5K Following

Julian4ик @juliancik

968 Followers 3K Following Awakening into PDA & beyond. Tabula rasa. Spontaneity | Neuroplasticity | Meditation | Psychedelics | DefaultModeNetwork | NonSymbolicExperience | Consciousness

Akash Wasil @AkashWasil

1K Followers 1K Following Left my PhD to make sure AI doesn't cause a national security crisis. AI policy & comms.

Machine Learning: Sci.. @MLSTjournal

9K Followers 10K Following A multidisciplinary, #openaccess journal devoted to the application and development of #machinelearning for the sciences. Published by @IOPPublishing.

Dalcy @dalcy_me

116 Followers 104 Following heyo i study math and draw stuff, very interested in descriptive agent foundations research. will one day make hell cease

Richard Ngo @RichardMCNgo

35K Followers 1K Following What would we need to understand in order to design an amazing future? Figuring that out @openai

Eliezer Yudkowsky ⏹.. @ESYudkowsky

175K Followers 89 Following The original AI alignment person. Missing punctuation at the end of a sentence means it's humor. If you're not sure, it's also very likely humor.

Sindy Löwe @sindy_loewe

3K Followers 360 Following PhD Student with @WellingMax at the University of Amsterdam. Deep Learning with Structured Representations.

Andrej Karpathy @karpathy

979K Followers 905 Following 🧑🍳. Previously Director of AI @ Tesla, founding team @ OpenAI, CS231n/PhD @ Stanford. I like to train large deep neural nets 🧠🤖💥

Rob Miles (✈️ Tok.. @robertskmiles

18K Followers 789 Following Explaining AI Alignment to anyone who'll stand still for long enough, on YouTube and Discord. Music, movies, microcode, and high-speed pizza delivery

Yann LeCun @ylecun

712K Followers 719 Following Professor at NYU. Chief AI Scientist at Meta. Researcher in AI, Machine Learning, Robotics, etc. ACM Turing Award Laureate.

Rob Bensinger ⏹️ @robbensinger

8K Followers 302 Following Comms @MIRIBerkeley. RT = increased vague psychological association between myself and the tweet.

David Krueger @DavidSKrueger

13K Followers 4K Following Cambridge faculty - AI alignment, deep learning, and existential safety. Formerly Mila, FHI, DeepMind, ElementAI, AISI.

Aella @Aella_Girl

205K Followers 369 Following ⚜️whorelord⚜️, vexworker, survey artist, way too earnest Discord: https://t.co/S1MaMdCwyK

Joscha Bach @Plinz

129K Followers 754 Following FOLLOWS YOU. Artificial Intelligence, Cognitive Architectures, Computation. The goal is integrity, not conformity. https://t.co/rFUNzdYXuK

Stefan Schubert @StefanFSchubert

28K Followers 2K Following Philosophy, psychology, and effective altruism.

Taco Cohen @TacoCohen

21K Followers 3K Following Deep learner at FAIR. Into codegen, equivariance, generative models. Spent time at Qualcomm, Scyfer (acquired), UvA, Deepmind, OpenAI.

davidad 🎇 @davidad

13K Followers 7K Following Programme Director @ARIA_research | accelerate mathematical modelling with AI and categorical systems theory » build safe transformative AI » cancel heat death

Qualy the lightbulb @QualyThe

7K Followers 319 Following Official Unofficial EA mascot. I'm here to make friends and maximise utility, and I'm all out of neglected altruistic opportunities

Neel Nanda @NeelNanda5

13K Followers 89 Following Mechanistic Interpretability lead @DeepMind. Formerly @AnthropicAI, independent. In this to reduce AI X-risk. Neural networks can be understood, let's go do it!

Sharvaree Vadgama @SharvVadgama

833 Followers 2K Following PhD student at @AmlabUva with @erikjbekkers & @jmtomczak | everything Geometric + Generative models | MS CS @UUtah | Theoretical Physics @IISERPune | she/her

Tyler John @tyler_m_john

3K Followers 748 Following Technology policy grantmaker at https://t.co/BFDHDf3mHa @RutgersU Philosophy PhD Views my own All confidence no intervals

Nora Ammann @AmmannNora

516 Followers 791 Following Exploring principles of intelligent behaviour in artificial and other systems: https://t.co/GphUSABGT9 Sometimes converting thought into words at https://t.co/0w3PUX1GVs

Center for Human-Comp.. @CHAI_Berkeley

3K Followers 108 Following CHAI is a multi-institute research organization based out of UC Berkeley that focuses on foundational research for AI technical safety.

Jakob Lohmar @LohmarJakob

40 Followers 89 Following DPhil Philosophy Student @UniofOxford, Research Assistant @GPIOxford, working on Longtermism

Tash @Kiea_Tash

2K Followers 468 Following Chef, League Coach and wall of text writer. https://t.co/e5ndMzheST - all posts + sheets here Plant Acc - @Kiea_Tish

Daniel Filan 🔎 @freed_dfilan

509 Followers 367 Following Someone should figure out how to make super-smart AI that won't take over the world, and nobody should make AI that takes over the world. 1 Cor 15:26. d/acc?

Shakeel @ShakeelHashim

3K Followers 1K Following AI journalist-in-residence @Tarbell_Fellows. Prev: AI Safety Communications Centre, comms @EffectvAltruism, journalist @TheEconomist, @Protocol, @Finimize

Charlotte Stix @charlotte_stix

4K Followers 774 Following Head of AI Governance @apolloaisafety | AI reg+policy PhD | prev. AI Policy @OpenAI; @EU_Commission; @wef; @Good_Policies; @LeverhulmeLCFI | 30u30 | makes 🎥

Cognition @cognition_labs

123K Followers 19 Following Makers of Devin, the first AI software engineer. We are an applied AI lab focused on reasoning, and code is just the beginning. Join us: https://t.co/tpfZwEwGiq

Divya Siddarth @divyasiddarth

5K Followers 996 Following collective intelligence accelerationist @collect_intel, building better institutions @UniofOxford @UK AI Safety Institute, former political economist @Microsoft

FAR AI @farairesearch

1K Followers 19 Following Ensuring AI systems are trustworthy and beneficial to society by incubating new AI safety research agendas.

Johannes Treutlein @j_treutlein

123 Followers 115 Following CS PhD student in AI existential safety research

Figure @Figure_robot

72K Followers 1 Following Figure is an AI Robotics company building the world's first commercially viable autonomous humanoid robot.

Grace Adams @gracevadams

1K Followers 282 Following Head of Marketing @GivingWhatWeCan Just doing my best 😌 All opinions are my own

Physical Intelligence @physical_int

4K Followers 8 Following Physical Intelligence (Pi), bringing AI into the physical world.

Inflection AI @inflectionAI

49K Followers 3 Following We are an AI studio creating a personal AI for everyone. Our first is @pi, a supportive and empathetic conversational AI.

Kaj Sotala @xuenay

5K Followers 557 Following This is my new bio. It replaces my old one, which I was told was bad. Meme alt: @KajPictures

OxCo @_OxCo_

63 Followers 167 Following Research impact, academic engagement and science communication. Worldwide video production, video training and video events services for academics.

Dr. Peter S. Park ⏸.. @dr_park_phd

1K Followers 781 Following AI Existential Safety Postdoctoral Fellow @MIT, @Tegmark Lab. @Harvard PhD '23, @Princeton '17. Alum of @JoHenrich Lab. Studies cognition (both human and AI).

Scott Emmons @emmons_scott

304 Followers 32 Following PhD student at UC Berkeley's Center for Human-Compatible Artificial Intelligence

Francis Rhys Ward @F_Rhys_Ward

181 Followers 389 Following PhD Student | AI Safety | Imperial College London.

Carol Magalhaes @_Carol_Mag

2K Followers 990 Following Passionate about longevity | Current: @age1VC | from 🇧🇷

Krueger AI Safety Lab @kasl_ai

255 Followers 51 Following We are a research group at the University of Cambridge focused on avoiding catastrophic risks from AI.

Anca Dragan @ancadianadragan

8K Followers 178 Following AI safety & alignment at Google DeepMind • associate professor at UC Berkeley EECS • proud mom of an amazing 2yr old

Beff Jezos — e/acc .. @BasedBeffJezos

102K Followers 2K Following chief accelerator & founder @ e/acc // thermodynamic priest // Kardashev gradient climber // memetic warlord // building @extropic_ai

Nora Belrose @norabelrose

8K Followers 124 Following Working toward a free and fair future powered by friendly AI. Head of interpretability research at @AiEleuther, but tweets are my own views, not Eleuther’s.

Open Philanthropy @open_phil

15K Followers 17 Following Open Philanthropy's mission is to help others as much as we can with the resources available to us.

Sam Toyer @sdtoyer

218 Followers 330 Following PhD student @berkeley_ai | Thief Executive Officer @ https://t.co/oY65mYDu3w

Lucas Lang @LLangResearch

36 Followers 65 Following Junior research group leader at @TUBerlin. Enthusiastic about applying quantum mechanics to solve chemical problems.

Luke Bailey @LukeBailey181

79 Followers 108 Following Undergraduate @Harvard studying computer science and math.

Roger Grosse @RogerGrosse

10K Followers 751 Following

ARIA @ARIA_research

10K Followers 36 Following Advanced Research + Invention Agency. Empowering scientists to reach for the edge of the possible.

Nadya Petrova @nadyathinks

85 Followers 37 Following Founder of Luna Park — we hire IMO-gold-level ppl. Work on AI safety scaling. Write a rationality blog about (mostly psychology-related) stuff: https://t.co/kXIvk7w2Rq

Surya Ganguli @SuryaGanguli

15K Followers 457 Following Associate Prof of Applied Physics @Stanford, and departments of Computer Science, Electrical Engineering and Neurobiology. Venture Partner @a16z

AI Safety Papers @safe_paper

653 Followers 86 Following Discovering exciting new research on Arxiv is one of my favorite pastimes!

Silviu Pitis @silviupitis

2K Followers 725 Following ML PhD student at @UofT/@VectorInst working on normative AI alignment.

Kris Kashtanova @icreatelife

98K Followers 2K Following community + art + tech | AI Evangelist @Adobe + Educator | 𝕏s are my own

Max Zhdanov @maxxxzdn

894 Followers 212 Following PhD candidate at @AmlabUva with @wellingmax and @jwvdm. Research in physics-inspired and geometric deep learning.

She is correct. Like it or not, economic incentives make the world go round. If you want more babies from educated women, design an economic system that *directly rewards* those women for eschewing promising careers for children. Anything else is just magical thinking.

Low birth rates are not caused by high cost of living but by opportunity cost of motherhood. Women are choosing careers over motherhood/larger families because careers pay money and children cost money.

@ciphergoth i guess the answer should be infinity?

@ilex_ulmus I'm going to be real: I would feel kind of dumb.

God is punishing me because why is she throwing hot asexuals my way?

Incredibly hyped to share this!

New @GoogleDeepMind MechInterp work! We introduce Gated SAEs, a Pareto improvement over existing sparse autoencoders. They find equally good reconstructions with around half as many firing features, while maintaining interpretability (CI 0-13% improvement). Joint w/ @ArthurConmy

@ESYudkowsky @RokoMijic @robinhanson You spend all of your time explaining how people who understand your argument perfectly well are not reflecting your argument accurately. You spend none of your time actually talking about your argument so people can criticize it.

Sometimes I think we don't deserve them. Or at the very least, safetyists sure don't.

@tszzl And to be clear, I think that's really good! OpenAI has been exceptional and early on multiple metrics that I care about.

(To reiterate: These are not performative questions. I'm asking them in public because I think the public discourse is fucked and I would much rather have an actual conversation about this than get recruited into keeping some OpenAI staff secret. I know it's tempting to see…

It's striking how much emphasis many smart and epistemologically well-read people - e.g. many rationalists and EAs - put on their own inside views (e.g. re AI), and how little they pay heed to their epistemic peers To me, this is a significant and neglected rationality failure.

@lucyfarnik I think publicly leaving and saying they are not competent or trustworthy (not to mention forcing them to replace him) is way more impactful than moving within whatever narrow latitude he had at the org

Is it just me or is the fact that disdain for AI writing is generating disdain for Nigerian business English deserving of more critical/social-justicey analysis than it's getting?

Habit I've been enjoying: at the end of my day I write down 1 thing I have learned that day. Literally 1 sentence. This gives a little reinforcement push on learnings that would otherwise mostly slip by.

@DreadCanary ^this bullshit is exactly what I’m talking about These are not actual sides of a war and “normie” language reifies that false idea

@ohabryka @Turn_Trout @lukeprog Tbc, I think the conclusion is right, but the argument seems wrong in a worrisome way that *might* assume inner misalignment away. I am actually not sure what the authors think about inner alignment.